I still remember the look on a lead developer’s face during a Q3 review last year. Their team had successfully migrated its generative AI prototypes to a production environment using Amazon Bedrock, praising the "serverless simplicity" of the transition. But then the first full-month invoice arrived: a "pay-as-you-go" bill nearly 40% higher than the original self-hosted projections. The CFO asked the question that every tech leader eventually faces: Is managed actually cheaper, or are we paying a premium for the illusion of convenience?

This moment of realization is becoming more common. When we dive into an Amazon Bedrock cost analysis, the answer to "is managed cheaper" isn't a simple yes or no. It is a study in unit economics, engineering velocity, and the hidden costs of idle infrastructure.

Understanding the Bedrock Pricing Tiers

At first glance, Amazon Bedrock’s pricing seems like a dream for financial predictability. It follows a serverless, token-based model where you pay only for what you use. However, the complexity lies in the three distinct consumption modes: On-Demand, Batch, and Provisioned Throughput.

For most startups and mid-market companies, On-Demand is the entry point. You are billed per 1,000 tokens, with separate rates for input and output. For instance, Amazon Nova Micro costs as little as $0.000035 per 1,000 input tokens, making it a highly cost-effective choice for simple classification tasks. But the "managed" advantage starts to blur when you scale. Token costs grow linearly with traffic, so if your application handles millions of queries per hour, the on-demand bill can quickly surpass the cost of a dedicated GPU instance on EC2 or SageMaker.
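To make the linear scaling concrete, here is a minimal sketch of the on-demand arithmetic. The input price is the Nova Micro figure quoted above; the output price, the tokens per request, and the function names are illustrative assumptions, so check the current Bedrock price list before relying on the numbers.

```python
# Input price for Amazon Nova Micro, taken from the text above.
NOVA_MICRO_INPUT_PER_1K = 0.000035
# Assumed output price, for illustration only; check the current price list.
ASSUMED_OUTPUT_PER_1K = 0.00014

HOURS_PER_MONTH = 730  # average number of hours in a month


def on_demand_cost(input_tokens, output_tokens,
                   input_per_1k=NOVA_MICRO_INPUT_PER_1K,
                   output_per_1k=ASSUMED_OUTPUT_PER_1K):
    """Dollar cost of a token volume at per-1,000-token on-demand rates."""
    return ((input_tokens / 1000) * input_per_1k
            + (output_tokens / 1000) * output_per_1k)


def monthly_on_demand_cost(requests_per_hour, input_tokens_per_request,
                           output_tokens_per_request):
    """Monthly cost for a steady request rate; grows linearly with traffic."""
    requests = requests_per_hour * HOURS_PER_MONTH
    return on_demand_cost(requests * input_tokens_per_request,
                          requests * output_tokens_per_request)


if __name__ == "__main__":
    # Hypothetical workload: 500 input and 100 output tokens per request.
    for rate in (1_000, 100_000, 1_000_000):
        print(rate, round(monthly_on_demand_cost(rate, 500, 100), 2))
```

Doubling the request rate doubles the bill. A dedicated instance, by contrast, costs the same whether it is busy or idle.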

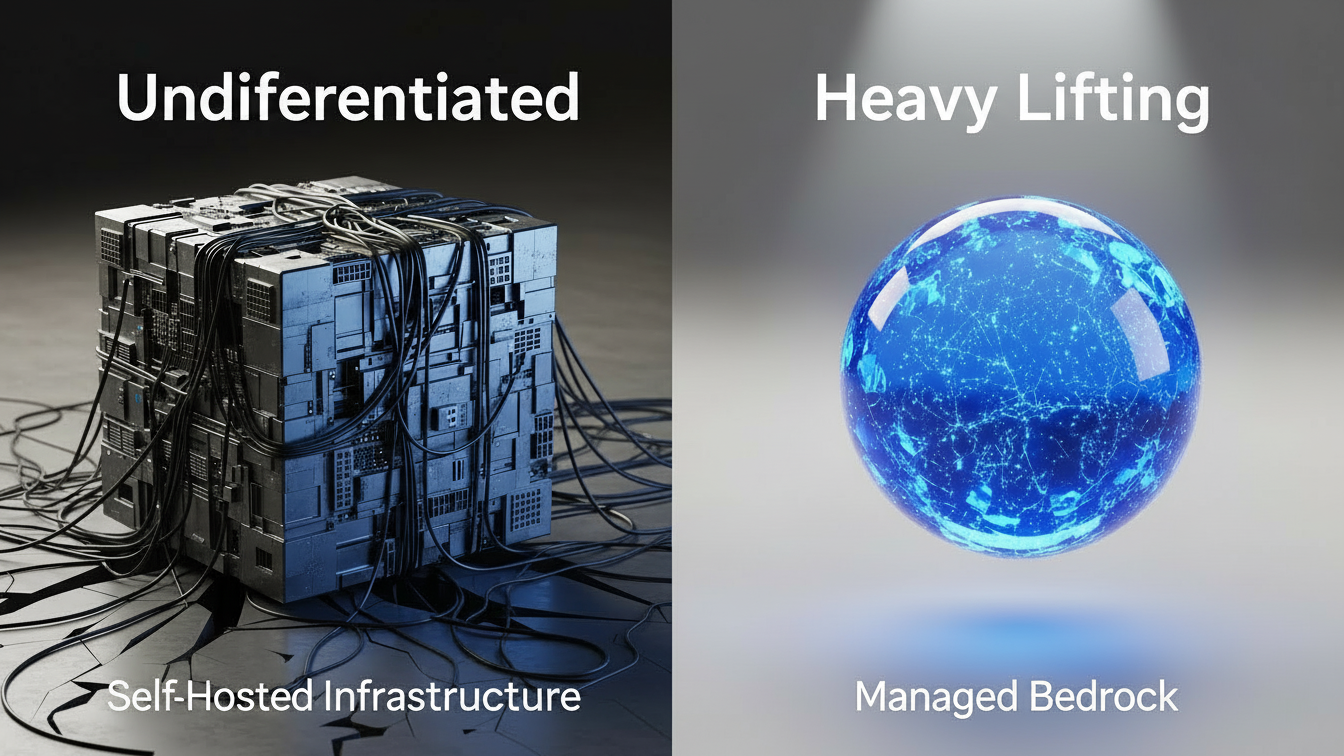

The real "managed" value proposition isn't the raw token price; it’s the removal of undifferentiated heavy lifting. When you use Bedrock, you aren't paying for a virtual machine; you are paying for the elimination of GPU driver updates, auto-scaling logic, and high-availability architecture across multiple Availability Zones. In a self-hosted environment, a single engineer's time spent troubleshooting a CUDA driver conflict can cost more in one afternoon than a month of Bedrock’s managed overhead.

Hidden Costs: Where the Budget Goes to Hide

When performing an Amazon Bedrock cost analysis, the most common mistake is focusing solely on inference tokens. In reality, the "managed" ecosystem introduces several ancillary costs that can catch teams off guard.

Embedding and Vector Storage: If you are building a Retrieval-Augmented Generation (RAG) system, you aren't just paying for the final answer. You are paying for the initial embedding of your documents. Models like Titan Text Embeddings V2 are efficient, but if you re-index your entire knowledge base every time a document changes, those micro-transactions add up.
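One common way to avoid full re-indexing is to embed only the documents whose content has actually changed. The sketch below uses content hashes for that check. The function names and the idea of storing one hash per document ID are assumptions for illustration, not a Bedrock feature.

```python
import hashlib


def content_hash(text):
    """Stable fingerprint of a document's text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def documents_to_embed(documents, indexed_hashes):
    """Return the IDs of documents that are new or changed.

    documents:      {doc_id: current text}
    indexed_hashes: {doc_id: hash stored when the document was last embedded}
    """
    return [
        doc_id
        for doc_id, text in documents.items()
        if indexed_hashes.get(doc_id) != content_hash(text)
    ]
```

Only the returned IDs need to be sent to the embedding model. Everything else keeps its existing vectors, so a single edited document costs one embedding call rather than a full re-index.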

Provisioned Throughput and Idle Time: For high-traffic enterprises, Provisioned Throughput (PT) offers "Model Units" that guarantee a certain number of tokens per minute. While PT provides a significant discount for steady-state workloads, often 20-30% compared to on-demand, it reintroduces the risk of paying for idle capacity. If your PT reservation isn't utilized at 80% or higher, you are effectively paying a premium for a "reserved seat" that no one is sitting in.
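The utilization threshold follows from simple arithmetic. If a reservation is discounted by a fraction d relative to on-demand when fully used, its cost per token actually consumed is (1 - d) / u times the on-demand price at utilization u, so it breaks even at u = 1 - d. A minimal sketch, assuming the discount is measured at full utilization (the function names are illustrative):

```python
def break_even_utilization(discount):
    """Utilization at which a reservation costs the same per used token
    as on-demand, given its discount at full utilization (0 <= discount < 1)."""
    if not 0 <= discount < 1:
        raise ValueError("discount must be in [0, 1)")
    return 1.0 - discount


def effective_price_ratio(discount, utilization):
    """Reserved cost per used token divided by the on-demand price.
    Values above 1.0 mean the reservation is the more expensive option."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return (1.0 - discount) / utilization
```

With the 20-30% discounts mentioned above, the break-even point lands at 70-80% utilization, which is where the 80% rule of thumb comes from.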

Data Transfer and Region Premiums: Cloud costs are rarely uniform. Running inference in us-east-1 might be cheaper than in a more remote region. Furthermore, if your application logic lives in one region and your Bedrock endpoint in another, cross-region data transfer fees will quietly inflate your bill.

This is where advanced visibility becomes mandatory. Much like how Atler Pilot provides real-time cost intelligence and automated governance for traditional cloud spend, Bedrock users need a way to see past the "total tokens" and understand which specific applications are driving the most expensive requests. Without that granular visibility, "managed" becomes a black box of escalating expenses.
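As a rough illustration of what per-application visibility means, the sketch below rolls token usage up by application tag. The record schema, the price table, and the function name are hypothetical; in practice the token counts would come from your own request logs or usage metrics.

```python
from collections import defaultdict


def cost_by_application(records, prices_per_1k):
    """Sum spend per application, most expensive first.

    records:       iterable of dicts with keys "app", "model",
                   "input_tokens", "output_tokens" (hypothetical schema)
    prices_per_1k: {model: (input_price_per_1k, output_price_per_1k)}
    """
    totals = defaultdict(float)
    for record in records:
        input_price, output_price = prices_per_1k[record["model"]]
        totals[record["app"]] += (
            record["input_tokens"] / 1000 * input_price
            + record["output_tokens"] / 1000 * output_price
        )
    # Sort so the applications driving the most spend appear first.
    return dict(sorted(totals.items(), key=lambda item: item[1], reverse=True))
```

Sorting by spend surfaces the handful of applications responsible for most of the bill, which a single "total tokens" figure hides.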

Managed Bedrock vs. Self-Hosted SageMaker

The most common question architects ask is: At what volume should I move from Bedrock to self-hosting models on SageMaker or EC2?

Self-hosting an LLM (like Llama 3 on an ml.g5.2xlarge instance) has a fixed cost. You might pay roughly $1.21/hour for a GPU-accelerated instance of this size; the exact rate depends on the region and on whether you run it on EC2 or as a SageMaker endpoint. If that instance stays idle, your "cost per token" is effectively infinite, because you pay the hourly rate while producing no tokens. If you saturate that instance at 100% utilization, your "cost per token" can drop to a fraction of Bedrock’s on-demand rates.
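The relationship between utilization and cost per token can be sketched in a few lines. The hourly rate is the figure from the text. The peak throughput and the blended Bedrock price in the usage note are placeholder assumptions, since real values depend on the model, batch size, and prompt lengths.

```python
def self_hosted_cost_per_1k(hourly_rate, peak_tokens_per_second, utilization):
    """Cost per 1,000 tokens on a fixed-price instance.

    utilization is the fraction of peak throughput actually used (0 to 1).
    An idle instance produces no tokens, so the cost per token is infinite.
    """
    if utilization <= 0:
        return float("inf")
    tokens_per_hour = peak_tokens_per_second * 3600 * utilization
    return hourly_rate / tokens_per_hour * 1000


def break_even_tokens_per_hour(hourly_rate, managed_price_per_1k):
    """Hourly token volume above which the fixed-price instance is cheaper
    than paying managed_price_per_1k for every 1,000 tokens."""
    return hourly_rate / managed_price_per_1k * 1000
```

For example, `break_even_tokens_per_hour(1.21, 0.001)` gives roughly 1.2 million tokens per hour for a hypothetical blended managed price of $0.001 per 1,000 tokens. Below that volume the instance costs more than on-demand. This comparison covers only the instance bill and leaves out the engineering time needed to run it.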

However, the "Managed" approach often wins on Total Cost of Ownership (TCO) once engineering time is counted. According to AWS case studies, organizations like DoorDash have seen up to a 50% reduction in development time by using Bedrock's managed APIs instead of building custom hosting layers. When you factor in the speed-to-market and the ability to pivot between models (like switching from Claude to Llama with a single line of code), the managed premium often pays for itself in "Engineering Velocity."
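The "single line of code" claim refers to Bedrock's unified Converse API, where the model is selected by an ID string. A minimal sketch, assuming a boto3 `bedrock-runtime` client is passed in; the model IDs are examples whose availability varies by account and region, and the helper name `ask` is illustrative.

```python
# The only line that changes when switching models. These IDs are examples;
# availability varies by account and region.
MODEL_ID = "anthropic.claude-3-5-sonnet-20240620-v1:0"
# MODEL_ID = "meta.llama3-8b-instruct-v1:0"


def ask(client, prompt, model_id=MODEL_ID, max_tokens=256):
    """Send one user message through the Converse API and return the reply text."""
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": max_tokens},
    )
    return response["output"]["message"]["content"][0]["text"]


# Usage (requires boto3 and AWS credentials with Bedrock model access):
#   import boto3
#   client = boto3.client("bedrock-runtime", region_name="us-east-1")
#   print(ask(client, "Summarize this ticket in one sentence."))
```

Because the request and response shapes stay the same across providers, the rest of the application does not change when the model does. Prompts usually still need re-tuning for the new model.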

Strategies for Optimizing Bedrock Spend

If you’ve determined that a managed environment is the right strategic move, the focus shifts to optimization. You don't just want it to be managed; you want it to be lean.

Prompt Caching: This is the "low-hanging fruit" of 2025. By caching frequently used prompt prefixes (like long system instructions or multi-shot examples), Bedrock offers a discount of up to 90% on cached tokens. For agentic workflows where the same 2,000-token context is sent repeatedly, this is a game-changer.
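A back-of-the-envelope sketch of what the discount means for a repeated prefix. It assumes every call after the first hits the cache (in practice cached prefixes expire after a period of inactivity) and treats the cache-write surcharge as a parameter, since write pricing varies by model. The function names and the prices in the usage note are placeholders.

```python
def uncached_prefix_cost(calls, prefix_tokens, input_per_1k):
    """Cost of resending the same prefix at the full input price on every call."""
    return calls * prefix_tokens / 1000 * input_per_1k


def cached_prefix_cost(calls, prefix_tokens, input_per_1k,
                       read_discount=0.9, write_premium=0.0):
    """Cost when the first call writes the prefix to the cache and all later
    calls read it at a discount. Assumes no cache expiry between calls."""
    if calls <= 0:
        return 0.0
    unit = prefix_tokens / 1000 * input_per_1k
    write_cost = unit * (1 + write_premium)              # first call
    read_cost = (calls - 1) * unit * (1 - read_discount)  # cache hits
    return write_cost + read_cost
```

For 100 calls with a 2,000-token prefix at a placeholder price of $0.003 per 1,000 input tokens, the uncached prefix costs $0.60 and the cached one about $0.065. The saving approaches the full 90% only as the number of cache hits grows.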

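Model Distillation: Instead of using a premium "Teacher" model (like Claude 3.5 Sonnet) for every request, use Model Distillation to train a smaller "Student" model on the teacher's outputs. Bedrock pairs teachers with students from the same model family (for example, Claude 3.5 Sonnet with Claude 3 Haiku, or Nova Pro with Nova Micro). AWS cites cost reductions of up to 75% with less than a 2% drop in accuracy for use cases such as RAG.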

Tiered Inference (Priority vs. Flex): New for late 2025, Bedrock now offers service tiers. You can route urgent, user-facing chats to the "Priority" tier for low latency, while sending non-urgent batch processing (like nightly document summarization) to the "Flex" tier for a significant discount.
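The routing decision itself can be very simple. The sketch below is a hypothetical policy, not a Bedrock API call: the tier labels, the `standard` fallback, and the one-hour threshold are all assumptions used to illustrate the idea of matching each request's urgency to a tier.

```python
# Hypothetical threshold: jobs that can wait at least this long go to Flex.
FLEX_MIN_DEADLINE_SECONDS = 3600


def choose_tier(user_facing, deadline_seconds=None):
    """Pick a service tier label for a request.

    user_facing:      True for interactive traffic where latency matters.
    deadline_seconds: how long a background job can wait; None means no deadline.
    """
    if user_facing:
        return "priority"
    if deadline_seconds is None or deadline_seconds >= FLEX_MIN_DEADLINE_SECONDS:
        return "flex"
    # Background work with a tight deadline stays on the assumed default tier.
    return "standard"
```

A nightly summarization job with no deadline resolves to `flex`, while a live chat turn resolves to `priority`. How the chosen tier is passed to Bedrock depends on the API version you use, so consult the current documentation for the exact request field.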

Strategic spend shaping is about ensuring that every dollar contributes to growth. This aligns with the core philosophy of Atler Pilot: automating the governance of these decisions so that engineers can focus on building features while the platform ensures the consumption model, whether it’s On-Demand or Provisioned, is always optimized for the current workload.

Conclusion: Shaping the Engine of Growth

So, is managed always cheaper? The data suggests that for the vast majority of organizations, especially those prioritizing agility, the answer is yes, but only if it is managed with discipline. The "managed" premium is a fee for agility, security, and scalability. The companies that "bleed" money in the cloud are those that treat Bedrock as a "set and forget" service. The winners are those who treat it as a dynamic engine. By utilizing tiered inference, prompt caching, and intelligent governance tools like Atler Pilot, you can ensure that your Amazon Bedrock cost analysis always points toward one conclusion: you are getting more value out of every token than your competition.
