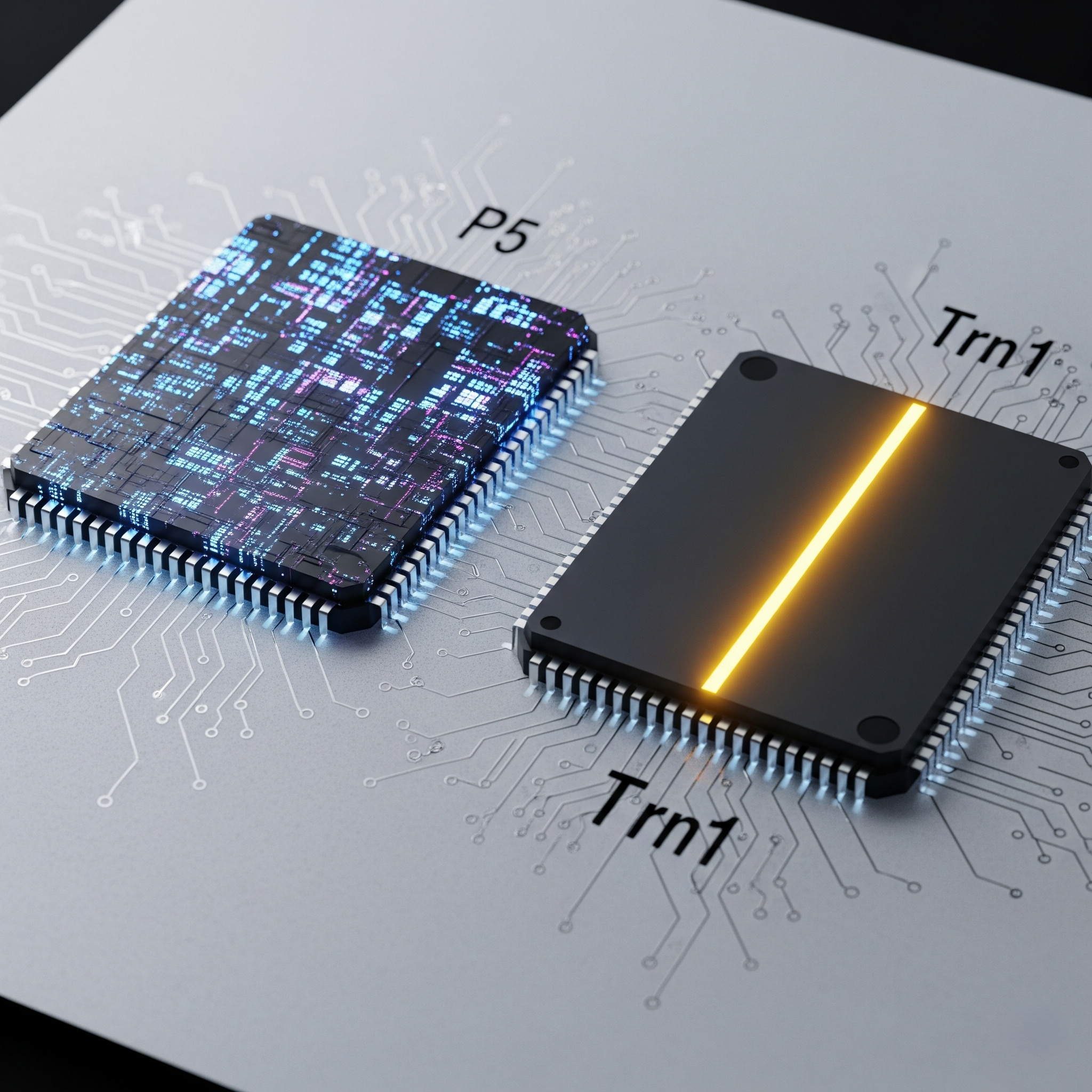

For teams training large-scale machine learning models on AWS, the choice of accelerator is one of the most critical decisions, affecting both project timelines and budget. For years, NVIDIA GPUs have been the default choice, with the powerful P5 instances (featuring H100 GPUs) representing the top of the line. However, AWS has invested in its own specialized AI hardware, offering AWS Trainium (Trn1) instances as a purpose-built, cost-effective alternative. Choosing between them means weighing the cost and performance trade-offs of Trn1 against P5.

The Contenders: Purpose-Built vs. General-Purpose

The core difference lies in their design philosophy.

Amazon EC2 P5 Instances: These are general-purpose GPU powerhouses. Powered by 8 NVIDIA H100 GPUs, they excel at a wide range of high-performance computing (HPC) and deep learning tasks. Their strength lies in the mature NVIDIA CUDA ecosystem.

Amazon EC2 Trn1 Instances: These instances are powered by up to 16 AWS Trainium chips. Trainium is an ASIC designed by AWS specifically for training deep learning models. This specialization allows for hardware-level optimizations that can lead to superior price-performance.

The Cost Comparison: A Look at the Numbers

A direct comparison of on-demand hourly pricing reveals a significant difference.

p5.48xlarge (8 x H100 GPUs): ~$98.32 per hour

trn1n.32xlarge (16 x Trainium chips): ~$24.78 per hour

On a raw hourly basis, the Trn1n instance is approximately 75% cheaper than the P5 instance.
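The arithmetic behind that figure can be checked with a short sketch. It uses only the approximate on-demand prices and accelerator counts quoted above; the helper name `percent_cheaper` is illustrative, and actual prices vary by region and over time.

```python
# Approximate on-demand prices quoted in this article (USD per hour).
P5_HOURLY = 98.32       # p5.48xlarge, 8 x H100 GPUs
TRN1N_HOURLY = 24.78    # trn1n.32xlarge, 16 x Trainium chips


def percent_cheaper(baseline: float, alternative: float) -> float:
    """Return how much cheaper `alternative` is than `baseline`, in percent."""
    return (baseline - alternative) / baseline * 100


hourly_savings = percent_cheaper(P5_HOURLY, TRN1N_HOURLY)

# Per-accelerator hourly cost. This ignores that one H100 and one
# Trainium chip are not equivalent units of compute.
per_h100 = P5_HOURLY / 8
per_trainium = TRN1N_HOURLY / 16

print(f"Trn1n is {hourly_savings:.1f}% cheaper per instance-hour")
print(f"Per H100: ${per_h100:.2f}/h, per Trainium chip: ${per_trainium:.2f}/h")
```

The hourly gap says nothing about how long each instance needs to finish the same job, which is why it is only a starting point.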

Performance-per-Dollar: The Real Metric

The true measure of cost-effectiveness is not the cost per hour, but the cost-to-train a model to a desired accuracy. The value proposition of Trainium is that its specialized architecture can complete training jobs more efficiently, leading to a lower total project cost.

AWS Claims: AWS states that Trn1 instances can offer up to 50% cost-to-train savings over comparable EC2 instances.

Real-World Example: One customer reported achieving a 20% training cost reduction with Trn1 instances compared to equivalent GPU instances.
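To make cost-to-train concrete, here is a minimal sketch that multiplies hourly price by training time and computes how much longer a Trn1n run could take while still hitting a savings target. It assumes a single-instance comparison at the on-demand prices quoted earlier. The training durations are hypothetical placeholders, not benchmark results, and the function names are illustrative.

```python
P5_HOURLY = 98.32       # p5.48xlarge, approximate on-demand USD/hour
TRN1N_HOURLY = 24.78    # trn1n.32xlarge, approximate on-demand USD/hour


def cost_to_train(hourly_rate: float, hours: float, instances: int = 1) -> float:
    """Total cost of a training run: price x duration x instance count."""
    return hourly_rate * hours * instances


def max_slowdown_for_savings(baseline_rate: float, alt_rate: float,
                             target_savings: float) -> float:
    """Largest ratio (alt_hours / baseline_hours) that still yields
    `target_savings` (0.0 to 1.0) relative to the baseline run."""
    return (1 - target_savings) * baseline_rate / alt_rate


# Hypothetical durations, chosen only to illustrate the calculation.
p5_cost = cost_to_train(P5_HOURLY, hours=100)
trn1n_cost = cost_to_train(TRN1N_HOURLY, hours=250)
savings = (p5_cost - trn1n_cost) / p5_cost

break_even = max_slowdown_for_savings(P5_HOURLY, TRN1N_HOURLY, 0.0)
for_half = max_slowdown_for_savings(P5_HOURLY, TRN1N_HOURLY, 0.5)

print(f"P5: ${p5_cost:,.2f}  Trn1n: ${trn1n_cost:,.2f}  savings: {savings:.1%}")
print(f"Break-even slowdown: {break_even:.2f}x; for 50% savings: {for_half:.2f}x")
```

Under these assumptions, a Trn1n instance can take nearly four times as long as a P5 instance before the two runs cost the same. Substituting measured training times for your own model is what turns this into a real comparison.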

The Catch: The Software Ecosystem

The biggest advantage of P5 instances is that they run on the NVIDIA CUDA platform, the de facto standard for AI/ML development. To use Trn1 instances, you must use the AWS Neuron SDK, which compiles and optimizes your PyTorch or TensorFlow code to run on Trainium hardware.

The Hurdle: This compilation step is an additional process in the MLOps workflow and represents a learning curve for teams accustomed to CUDA.

The Benefit: The Neuron compiler is what unlocks the full performance of the Trainium hardware.

The Verdict: Which Accelerator is Right for You?

The choice is a strategic trade-off between performance, cost, and engineering effort.

Choose P5 Instances (NVIDIA H100) if:

You need the absolute highest performance for a single node.

Your workflow is deeply embedded in the CUDA ecosystem, and you lack the bandwidth to adopt the Neuron SDK.

You are working with experimental architectures that may not have full support in Neuron.

Choose Trn1 Instances (AWS Trainium) if:

Cost-to-train is your primary metric. The potential for up to 50% savings is compelling.

You are training large language models or diffusion models, which are primary targets for Trainium.

Your team is willing to invest the effort to integrate the AWS Neuron SDK into your MLOps pipeline.

Conclusion

AWS Trainium represents a serious challenge to NVIDIA's dominance by competing aggressively on price. While P5 instances may still hold the crown for raw performance in some cases, the compelling economics of Trn1 make it an essential option to evaluate for any large-scale training project on AWS.
