Let’s talk about something most teams underestimate until their cloud bill starts creeping up: caching. Not the textbook definition or the “store data for faster access” explanation, but the real-world impact it has when your system starts handling millions of requests.

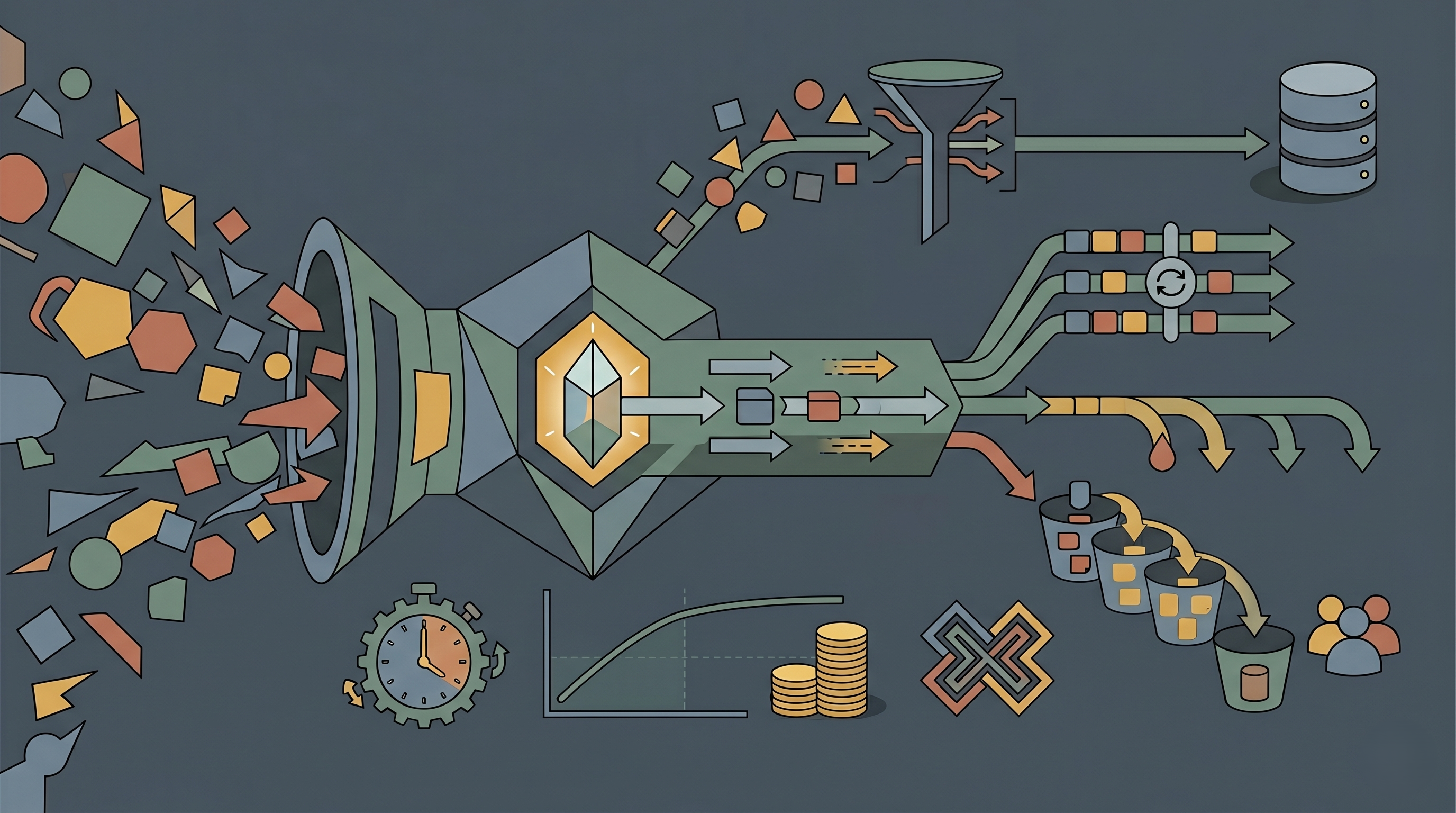

In high-scale systems, your caching strategy is directly tied to how efficiently your infrastructure spends money. At scale, every redundant database call, every unnecessary API hit, and every extra byte transferred over the network is billable. Caching sits right at the intersection of performance and cost, quietly deciding whether your system scales efficiently or expensively.

What makes this even more interesting is that caching is one of the few architectural decisions where small optimizations can lead to disproportionately large financial outcomes. So, let’s unpack it.

The Economics of Caching

In earlier stages of system design, caching is often introduced to reduce latency and improve user experience. While that remains true, high-scale systems expose a deeper dimension of cost efficiency. Serving data from memory instead of repeatedly querying databases fundamentally changes how infrastructure is utilized.

Modern in-memory systems like Redis can deliver responses with sub-millisecond latency, which is significantly faster than disk-based systems that may take tens of milliseconds or more. According to Redis documentation, in-memory access can be up to 100 times faster than disk access, which shortens the time each request occupies backend resources.

But the real financial implication lies in what doesn’t happen when caching is implemented effectively. Fewer database calls mean lower IOPS consumption, reduced CPU cycles, and less frequent autoscaling events. AWS highlights that caching layers can handle hundreds of thousands of requests per second, often replacing multiple backend resources. This effectively flattens the cost curve as traffic grows.

In other words, caching shifts the system from a reactive scaling model to a controlled, optimized consumption model.

Cost Dynamics of Cache Hits and Cache Misses

At the heart of caching economics lies a simple but powerful concept: the cache hit ratio. A cache hit means the system serves the request directly from the cache, while a cache miss forces the system to fetch data from the source.

This distinction is where cost differences begin to compound. A cache hit consumes minimal resources, primarily a memory lookup, whereas a cache miss triggers a chain of operations that may include database queries, network transfers, and even compute scaling under load.

As traffic increases, even a constant miss ratio becomes increasingly expensive, because the absolute number of misses grows with request volume. Each miss introduces latency and cost simultaneously, creating a dual penalty. On the other hand, improving cache hit ratios reduces backend pressure and stabilizes infrastructure usage.

Industry observations suggest that optimized caching can reduce bandwidth usage by 20–40%, and in some cases even up to 50% when combined with edge caching strategies. These reductions are not marginal. They directly impact cloud billing categories such as data transfer and compute utilization.

The financial takeaway is clear: improving cache efficiency is one of the most direct ways to control scaling costs.
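The relationship between hit ratio and cost can be sketched as a simple blended-cost calculation. This is a minimal illustration, not a billing model: the function name and the per-request unit costs below are hypothetical values chosen only to show the shape of the curve.

```python
def cost_per_million_requests(hit_ratio, hit_cost, miss_cost):
    """Blended cost of one million requests for a given cache hit ratio."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    # Each request is either a cheap hit or an expensive miss.
    per_request = hit_ratio * hit_cost + (1.0 - hit_ratio) * miss_cost
    return per_request * 1_000_000


# Hypothetical unit costs in dollars per request, not real cloud prices.
HIT_COST = 0.0000002
MISS_COST = 0.000004

for ratio in (0.80, 0.90, 0.95, 0.99):
    cost = cost_per_million_requests(ratio, HIT_COST, MISS_COST)
    print(f"{ratio:.0%} hit ratio -> ${cost:.2f} per million requests")
```

With these made-up numbers, moving from a 90% to a 95% hit ratio halves the number of misses and cuts the blended cost from about $0.58 to about $0.39 per million requests, which is why small hit-ratio gains matter at scale.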

Cache-Aside Strategy: Efficient but Vulnerable Under Load

The cache-aside approach is widely adopted because of its simplicity and efficiency. Data is only loaded into the cache when it is requested, ensuring that memory is not wasted on unused data.

From a cost perspective, this strategy is attractive because it minimizes unnecessary storage and keeps cache utilization lean. However, its reactive nature introduces risk during traffic spikes. When multiple requests simultaneously encounter a cache miss for the same data, the system experiences what is known as a cache stampede.

During such events, the database is hit repeatedly with identical queries, leading to sudden spikes in compute usage and potential autoscaling. This not only increases operational costs but can also degrade performance.

The financial implication here is subtle but significant. While cache-aside reduces steady-state costs, it requires additional safeguards, such as request coalescing or locking mechanisms, to prevent cost spikes under load.
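One way to picture request coalescing is a cache-aside lookup that takes a per-key lock, so that concurrent misses for the same key share a single backend load. The sketch below is a single-process illustration under that assumption; `CoalescingCache` and its `loader` argument are hypothetical names, and a distributed system would need a shared lock or a library-provided equivalent instead.

```python
import threading


class CoalescingCache:
    """Cache-aside lookup where concurrent misses for one key share a single load."""

    def __init__(self, loader):
        self._loader = loader        # hypothetical function: key -> value (e.g. a DB query)
        self._data = {}
        self._key_locks = {}
        self._guard = threading.Lock()

    def get(self, key):
        # Fast path: return the cached value, or pick up the lock for this key.
        with self._guard:
            if key in self._data:
                return self._data[key]
            lock = self._key_locks.setdefault(key, threading.Lock())

        with lock:
            # Re-check: another thread may have loaded the value while we waited.
            with self._guard:
                if key in self._data:
                    return self._data[key]
            value = self._loader(key)  # only one thread per key reaches this line at a time
            with self._guard:
                self._data[key] = value
                self._key_locks.pop(key, None)
            return value
```

If twenty threads ask for the same missing key at once, the loader runs once and the other nineteen wait for its result instead of issuing identical queries.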

Write-Through Caching: Trading Cost for Consistency

Write-through caching ensures that every data write is immediately reflected in both the cache and the underlying database. As long as all writes go through the cache, this approach avoids stale reads and keeps the two stores consistent.

From a financial standpoint, this predictability comes at a cost. Every write operation becomes more expensive because it involves multiple systems. Storage usage increases because written data is held in both places, and the added write latency keeps application workers busy longer, which can raise compute consumption.

In environments where read operations dominate, this strategy may introduce unnecessary overhead. However, in systems where consistency is critical, such as financial platforms or transactional systems, the additional cost is often justified.

The key insight is that write-through caching shifts the cost burden from read inefficiency to write amplification. Whether this trade-off is beneficial depends entirely on the workload characteristics.
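A minimal sketch of the write-through pattern makes the write amplification visible. It assumes a dict-like backing store standing in for the database; the class name and the `store_writes` counter are hypothetical and exist only for illustration.

```python
class WriteThroughCache:
    """Every write goes to the backing store and the cache in the same call."""

    def __init__(self, store):
        self._store = store      # hypothetical backing store: any dict-like object
        self._cache = {}
        self.store_writes = 0    # counts how many writes reached the store

    def put(self, key, value):
        # Write the source of truth first, then the cache, so a failed
        # store write never leaves a value that exists only in the cache.
        self._store[key] = value
        self.store_writes += 1
        self._cache[key] = value

    def get(self, key):
        if key in self._cache:
            return self._cache[key]
        value = self._store[key]
        self._cache[key] = value
        return value
```

Note that two writes to the same key produce two store writes. That is the consistency guarantee and the cost in one line.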

Write-Behind Caching: Cost Optimization with Controlled Risk

Write-behind caching takes a different approach by deferring database writes and handling them asynchronously. This reduces immediate load on the database and allows the system to batch write operations more efficiently.

Financially, this can significantly lower peak infrastructure usage. By smoothing out write operations, systems can avoid sudden spikes in resource consumption, leading to more predictable and often lower costs.

However, this strategy introduces a different kind of risk: data inconsistency or loss if the cache fails before pending writes have been flushed to the database. Recovering from such scenarios can incur operational and financial overhead, especially in systems where data integrity is critical.

This makes write-behind caching particularly suitable for use cases where eventual consistency is acceptable, such as analytics or logging systems. When applied correctly, it can deliver strong cost benefits without compromising system stability.
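The batching effect of write-behind can be sketched as follows. This is a simplified illustration: it flushes synchronously once a batch fills up, whereas a production system would typically flush from a background worker or on a timer. The class name, `batch_size`, and the dict-like `store` are assumptions for the example.

```python
class WriteBehindCache:
    """Writes land in the cache first and reach the store later, in batches."""

    def __init__(self, store, batch_size=100):
        self._store = store          # hypothetical backing store: a dict-like object
        self._cache = {}
        self._dirty = {}             # pending writes not yet in the store
        self._batch_size = batch_size
        self.store_batches = 0       # counts batched writes sent to the store

    def put(self, key, value):
        self._cache[key] = value
        # Repeated writes to one key collapse into a single pending entry.
        self._dirty[key] = value
        if len(self._dirty) >= self._batch_size:
            self.flush()

    def get(self, key):
        if key in self._cache:
            return self._cache[key]
        value = self._store[key]
        self._cache[key] = value
        return value

    def flush(self):
        if not self._dirty:
            return
        self._store.update(self._dirty)   # one batched write instead of many
        self._dirty.clear()
        self.store_batches += 1
```

Anything still in the pending set when the process dies is lost, which is exactly the risk described above.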

Edge and CDN Caching: Redefining Data Transfer Economics

One of the most impactful caching strategies from a financial perspective is edge caching through Content Delivery Networks (CDNs). By storing data closer to users, CDNs reduce the need to fetch data from origin servers repeatedly.

This has a direct effect on data transfer costs, which are often one of the largest components of cloud bills. Each request served from the edge eliminates the need for long-distance data transfer, reducing both latency and cost.

Advanced CDN strategies also improve cache hit ratios through intelligent routing and predictive caching. Platforms like CacheFly highlight that optimizing cache byte ratios can unlock significant hidden savings by minimizing origin fetches.
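The difference between a request hit ratio and a byte hit ratio is easy to miss, so here is a small sketch that computes both from a request log. The log format, a list of `(size_bytes, served_from_edge)` tuples, is a hypothetical simplification and not any CDN's actual log schema.

```python
def edge_hit_ratios(requests):
    """Return (request_hit_ratio, byte_hit_ratio) for (size_bytes, served_from_edge) records."""
    total_requests = edge_requests = 0
    total_bytes = edge_bytes = 0
    for size_bytes, served_from_edge in requests:
        total_requests += 1
        total_bytes += size_bytes
        if served_from_edge:
            edge_requests += 1
            edge_bytes += size_bytes
    if total_requests == 0 or total_bytes == 0:
        return 0.0, 0.0
    return edge_requests / total_requests, edge_bytes / total_bytes


# Three small cached assets and one large uncached download (made-up sizes).
log = [(100, True), (100, True), (100, True), (9700, False)]
request_ratio, byte_ratio = edge_hit_ratios(log)
print(f"request hit ratio: {request_ratio:.0%}, byte hit ratio: {byte_ratio:.0%}")
```

In this made-up log, 75% of requests are served from the edge but only 3% of the bytes are, and the bytes fetched from origin are what data transfer charges are based on.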

For globally distributed systems, edge caching is not just an optimization; it becomes a foundational cost control mechanism.

In-Memory Caching: High Upfront Cost, High ROI

In-memory caching solutions such as Redis and Memcached are often perceived as expensive due to the cost of RAM. However, this perspective changes when viewed through the lens of total infrastructure cost.

By offloading a significant portion of read operations from databases, in-memory caching reduces the need for expensive database instances and lowers compute requirements. It also minimizes latency, which can improve user experience and indirectly impact revenue.

The financial equation here is not about the cost of memory alone, but about the cost savings it enables across the system. In many high-scale environments, the reduction in backend infrastructure costs outweighs the investment in memory.

This is why in-memory caching is often considered one of the highest ROI optimizations in distributed systems.

Optimization Techniques That Directly Influence Cost Efficiency

Beyond choosing a caching strategy, the way caching is implemented plays a critical role in determining its financial impact. Techniques such as TTL optimization, cache key design, and intelligent invalidation directly influence how effectively the cache is utilized.

TTL values determine how long data remains in the cache, and finding the right balance is crucial. Short TTLs increase cache misses and backend load, while long TTLs risk serving outdated data. Adaptive TTL strategies, which adjust based on usage patterns, can significantly improve efficiency.
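The TTL trade-off can be explored with a small cache that counts hits and misses. This is a fixed-TTL sketch only, with a hypothetical `TTLCache` class; the injectable `clock` parameter is an assumption added so the behaviour can be checked without waiting for real time to pass.

```python
import time


class TTLCache:
    """Cache whose entries expire a fixed number of seconds after being loaded."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock          # injectable so expiry can be simulated
        self._entries = {}           # key -> (value, expires_at)
        self.hits = 0
        self.misses = 0

    def get(self, key, loader):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[1] > now:
            self.hits += 1
            return entry[0]
        # Missing or expired: reload from the source and restart the TTL.
        self.misses += 1
        value = loader(key)
        self._entries[key] = (value, now + self._ttl)
        return value
```

Replaying the same access pattern with different `ttl_seconds` values and comparing `hits` and `misses` gives a rough, data-driven starting point for choosing a TTL.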

Similarly, cache key design affects how data is stored and retrieved. Poorly designed keys can lead to duplication and reduced hit rates, increasing memory usage without delivering proportional benefits.
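As an illustration of key design, the sketch below normalizes request parameters so that equivalent requests map to one cache entry. The function name, the list of ignored parameters, and the choice to treat parameter names as case-insensitive are all assumptions for the example; the right rules depend on the application.

```python
from urllib.parse import urlencode


def make_cache_key(resource, params, ignored=("utm_source", "utm_medium", "session_id")):
    """Build a canonical cache key from a resource name and request parameters."""
    # Drop parameters that do not change the response, and normalize names and values.
    relevant = {
        name.lower(): str(value)
        for name, value in params.items()
        if name.lower() not in ignored
    }
    # Sorting makes the key independent of parameter order.
    return f"{resource}?{urlencode(sorted(relevant.items()))}"


a = make_cache_key("products", {"page": 2, "Sort": "price"})
b = make_cache_key("products", {"sort": "price", "page": "2", "utm_source": "mail"})
print(a == b)
```

Without this kind of normalization, the two requests above would occupy two cache entries and the second would be a miss.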

Cache invalidation, often considered one of the hardest problems in computer science, also has financial implications. Frequent invalidation reduces cache effectiveness, while delayed invalidation can compromise data accuracy. Event-driven approaches, where actual data changes trigger cache updates, offer a more balanced solution.
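An event-driven approach can be sketched as a cache that evicts an entry only when it is told the underlying data changed. The event shape used here, a dict with a `"key"` field, is hypothetical; in practice the event might come from a message queue or a database change stream.

```python
class EventInvalidatedCache:
    """Cache that keeps entries until a change event names their key."""

    def __init__(self, loader):
        self._loader = loader    # hypothetical function: key -> current value at the source
        self._data = {}

    def get(self, key):
        if key not in self._data:
            self._data[key] = self._loader(key)
        return self._data[key]

    def handle_change_event(self, event):
        # Hypothetical event shape: {"key": "<cache key of the changed record>"}
        self._data.pop(event["key"], None)
```

Entries that never change are never reloaded, and entries that do change are refreshed on the next read after the event arrives rather than on a timer.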

Together, these optimizations determine whether caching delivers maximum value or becomes an underutilized resource.

The Hidden Costs of Inefficient Caching

While much attention is given to the benefits of caching, the cost of doing it poorly is often underestimated. Inefficient caching strategies can lead to overprovisioned infrastructure, increased data transfer costs, and degraded performance.

Without effective caching, systems rely heavily on databases and compute resources, forcing organizations to scale infrastructure more aggressively. This not only increases costs but also introduces complexity in managing and maintaining the system.

Latency also has a direct financial impact. Studies have shown that even a 100-millisecond delay in response time can reduce conversion rates by approximately 1%. In high-scale systems, this translates into significant revenue loss over time.

The absence of a well-designed caching strategy is a financial liability.

Measuring the Financial Impact of Caching

To fully understand the value of caching, organizations must move beyond intuition and measure its impact. Metrics such as cache hit ratio, database query reduction, and bandwidth savings provide a clear picture of how caching affects system efficiency.

A high cache hit ratio, typically above 90%, indicates that most requests are being served from the cache, reducing backend load. Bandwidth savings, particularly in systems using CDNs, can reach up to 50%, significantly lowering data transfer costs.

By comparing infrastructure usage before and after implementing caching strategies, teams can quantify the return on investment and make informed decisions about further optimizations.
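A before-and-after comparison can be reduced to two small calculations. The helper names and the dollar figures below are hypothetical; the point is only to show which inputs need to be collected.

```python
def hit_ratio(hits, misses):
    """Fraction of requests served from the cache."""
    total = hits + misses
    return hits / total if total else 0.0


def caching_roi(monthly_cost_before, monthly_cost_after, monthly_cache_cost):
    """Net monthly savings per dollar spent on the cache layer.

    monthly_cost_after is the backend cost with caching enabled,
    excluding the cost of the cache itself.
    """
    if monthly_cache_cost <= 0:
        raise ValueError("monthly_cache_cost must be positive")
    gross_savings = monthly_cost_before - monthly_cost_after
    return (gross_savings - monthly_cache_cost) / monthly_cache_cost


# Made-up example: backend spend drops from $10,000 to $6,000 with a $1,000 cache.
print(f"hit ratio: {hit_ratio(900, 100):.0%}")
print(f"ROI: {caching_roi(10_000, 6_000, 1_000):.1f}x")
```

In this invented scenario the cache returns three dollars of net savings for every dollar spent on it.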

The Future of Caching: Adaptive and Intelligence-Driven

Caching is evolving from static configurations to dynamic, intelligence-driven systems. Modern approaches leverage machine learning to predict access patterns and adjust caching behavior in real time.

This includes adaptive TTLs, predictive prefetching, and automated cache eviction policies. These systems continuously optimize themselves based on usage patterns, ensuring that caching remains efficient even as workloads change.

In high-scale environments, this shift is crucial. Static caching strategies cannot keep up with dynamic workloads, making adaptive caching a key component of future system design.

Conclusion

Caching is often introduced as a performance enhancement, but in high-scale systems, it becomes much more than that. It is a strategic tool for managing infrastructure costs, improving efficiency, and maintaining scalability.

The real value of caching lies not just in faster responses, but in smarter resource utilization. When implemented thoughtfully, it reduces unnecessary work, stabilizes system behavior, and creates a more predictable cost structure.

In a landscape where cloud costs can quickly spiral out of control, caching stands out as one of the most effective ways to bring them back in check.
