Don't Train When the Grid is Dirty

The electrical grid is not a static resource. It is a living, breathing machine that varies wildly in cleanliness throughout the day.

At 1:00 PM, the grid might be flooded with solar energy (intensity: ~20g CO2/kWh). But by 7:00 PM, the sun sets, demand spikes as people return home, and coal or gas peaker plants fire up to bridge the gap (intensity: ~600g CO2/kWh). That is a 30x difference in carbon impact for the exact same computation.
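To make the 30x figure concrete, here is a small arithmetic sketch. The two intensities are the ones quoted above; the 1,000 kWh job size is a hypothetical number chosen only for illustration.

```python
def emissions_kg(energy_kwh: float, intensity_g_per_kwh: float) -> float:
    """Return emissions in kg CO2 for a job that consumes energy_kwh."""
    return energy_kwh * intensity_g_per_kwh / 1000.0


# Hypothetical job size, for illustration only.
JOB_ENERGY_KWH = 1000.0

clean = emissions_kg(JOB_ENERGY_KWH, 20.0)   # solar-heavy early afternoon
dirty = emissions_kg(JOB_ENERGY_KWH, 600.0)  # evening peak on fossil plants

print(f"1:00 PM: {clean:.0f} kg CO2")
print(f"7:00 PM: {dirty:.0f} kg CO2")
print(f"ratio:   {dirty / clean:.0f}x")
```

The same job emits 20 kg of CO2 in the clean window and 600 kg in the dirty one.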

Running a massive AI training job at 7:00 PM is environmentally wasteful and, with dynamic energy pricing, increasingly expensive. But how do you automate the decision of when to run?

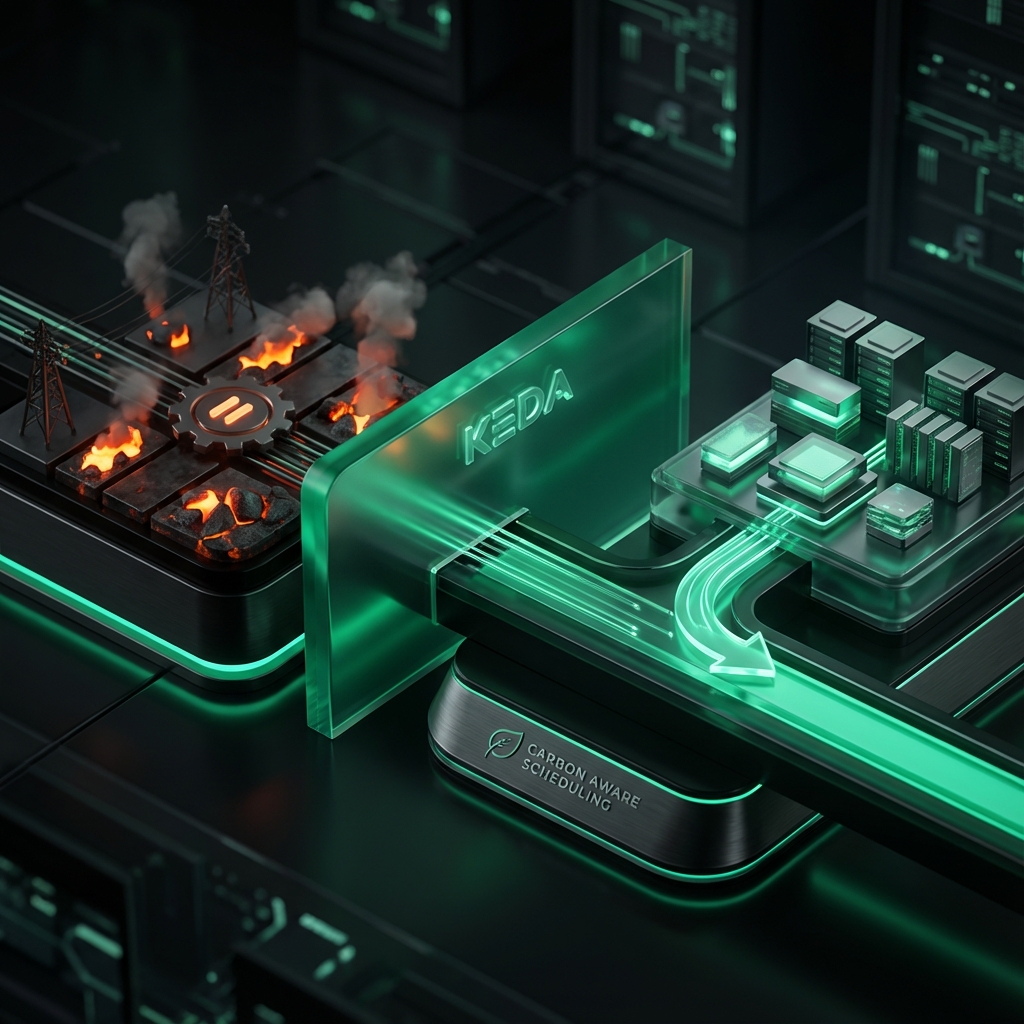

The Solution: KEDA (Kubernetes Event-Driven Autoscaling)

KEDA is a widely adopted, CNCF-graduated project for event-driven autoscaling in Kubernetes. While the default Horizontal Pod Autoscaler (HPA) typically scales on CPU or memory, KEDA scales on external events and metrics (queue length, Kafka topic lag, database query results).

The Carbon-Aware Scaler is a KEDA plugin that lets you scale your workloads based on the carbon intensity of the local power grid.

Strategy 1: Temporal Shifting (Time)

Concept: "Do it later." Instead of starting a job immediately, we pause it (scale to 0) until the grid is clean, then resume it (scale to N). This is perfect for delay-tolerant workloads like model re-training, nightly batch reporting, or data processing.
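The "do it later" idea can be sketched as picking the cleanest contiguous window out of an hourly forecast. This is a minimal illustration, not part of KEDA or any carbon-aware plugin: the function name `cleanest_start` and the forecast values are hypothetical.

```python
from typing import Sequence


def cleanest_start(forecast: Sequence[float], job_hours: int) -> int:
    """Return the start index of the contiguous window of job_hours
    with the lowest total (and therefore average) carbon intensity."""
    if job_hours <= 0 or job_hours > len(forecast):
        raise ValueError("job_hours must be between 1 and len(forecast)")
    window = sum(forecast[:job_hours])
    best_sum, best_start = window, 0
    # Slide the window one hour at a time, updating the running sum.
    for start in range(1, len(forecast) - job_hours + 1):
        window += forecast[start + job_hours - 1] - forecast[start - 1]
        if window < best_sum:
            best_sum, best_start = window, start
    return best_start


# Hypothetical hourly forecast in g CO2/kWh, starting from "now".
forecast = [580, 600, 550, 400, 300, 250, 200, 180, 150, 90, 40, 20, 25, 60]
start = cleanest_start(forecast, job_hours=3)
print(f"Start the 3-hour job {start} hours from now")
```

A scheduler built this way needs a forecast rather than only the current reading, which is why forecast data matters for delay-tolerant jobs.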

Implementation Example

We define a ScaledObject that monitors carbon intensity. Setting minReplicaCount to 0 lets the job pause entirely, and maxReplicaCount caps how far it scales out once conditions are met.

```yaml
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: carbon-aware-training-job
  namespace: ai-training
spec:
  scaleTargetRef:
    name: training-worker-deployment
  minReplicaCount: 0   # Default to paused
  maxReplicaCount: 10  # Scale up to 10 workers when clean
  triggers:
    - type: carbon-aware
      metadata:
        metricType: carbonIntensity
        # The threshold: Only run if grid is < 150g CO2/kWh
        targetValue: "150"
        # Location options: 'us-east-1', 'de', 'fr', etc.
        location: "us-east-1"
        # Optional: Forecast window (e.g., predict next 1 hour)
        forecastWindow: "1h"
```

In this configuration, if the grid intensity in Virginia (us-east-1) rises above 150 g CO2/kWh, KEDA scales the deployment down to 0. The job effectively "sleeps" through the dirty power window.
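The on/off behavior described here can be modeled in a few lines. This is only a sketch of the policy, not KEDA's actual scaling algorithm (KEDA derives replica counts from metric values through the HPA). The function name `desired_replicas` and the sample readings are hypothetical; the threshold and replica cap mirror the configuration above.

```python
def desired_replicas(intensity: float, threshold: float = 150.0,
                     max_replicas: int = 10) -> int:
    """Scale out to max_replicas when the grid is cleaner than threshold,
    otherwise pause the workload by scaling to 0."""
    return max_replicas if intensity < threshold else 0


# Hypothetical readings: hour of day -> g CO2/kWh.
readings = {9: 310.0, 11: 140.0, 13: 20.0, 17: 220.0, 19: 600.0}
for hour, intensity in readings.items():
    replicas = desired_replicas(intensity)
    print(f"{hour:02d}:00  {intensity:5.0f} g/kWh -> {replicas} replicas")
```

A hard threshold like this can flap when the intensity hovers around 150, so a real deployment would likely want a cooldown period or some hysteresis.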

Strategy 2: Spatial Shifting (Location)

Concept: "Do it elsewhere." If you have a global fleet (Federated Kubernetes or Multi-Cluster), you can move the workload to where the weather is favorable. This is "Follow the Wind" computing.

Scenario A: It's night in Texas (no solar), but it's noon in Spain (high solar). Move the job to eu-west-1.

Scenario B: A storm in the North Sea is generating massive wind power for the UK. Run the job in uk-south.

This requires a more complex setup with a global control plane, such as Karmada or Open Cluster Management (OCM), that routes jobs based on a global carbon map. KEDA still provides the local enforcement mechanism inside each cluster.
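The routing decision itself is simple to sketch, even though the surrounding infrastructure is not. The example below assumes a snapshot of per-region carbon intensity is already available; the function name `pick_region` and the map values are hypothetical, and a real setup would hand the chosen region to the multi-cluster control plane instead of printing it.

```python
from typing import Mapping, Optional


def pick_region(intensity_by_region: Mapping[str, float],
                threshold: float = 150.0) -> Optional[str]:
    """Return the cleanest region below threshold, or None if every
    region is too dirty and the job should wait instead."""
    if not intensity_by_region:
        return None
    region = min(intensity_by_region, key=lambda r: intensity_by_region[r])
    return region if intensity_by_region[region] < threshold else None


# Hypothetical snapshot of a global carbon map, in g CO2/kWh.
carbon_map = {"us-east-1": 420.0, "eu-west-1": 95.0, "uk-south": 60.0}
print(pick_region(carbon_map))
```

Returning None when no region qualifies lets spatial shifting fall back to temporal shifting: if nowhere is clean, wait.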

The Business Case

Why would a CFO care? Because Carbon-Aware Scheduling is often just Cost-Aware Scheduling in disguise.

Many cloud providers offer "Spot" pricing that loosely correlates with excess capacity. Excess capacity often correlates with times of high renewable generation (high supply) or low demand (low grid stress). By aligning your heavy compute tasks with the grid's "Green Windows," you often align with the "Cheap Windows" too.

Limitations

This does not work for everything. Real-time inference cannot wait. If a user asks a chatbot a question, you cannot say "Please wait 4 hours for the wind to blow." For latency-sensitive workloads, you must rely on Optimization (quantization, caching) rather than Scheduling.
