For the last decade, the default architectural pattern was "Send it to the Cloud." IoT sensors, factory cameras, and mobile apps streamed massive amounts of raw data to centralized regions (such as us-east-1) for processing. In 2025, the economics of this model have collapsed for high-bandwidth AI applications.

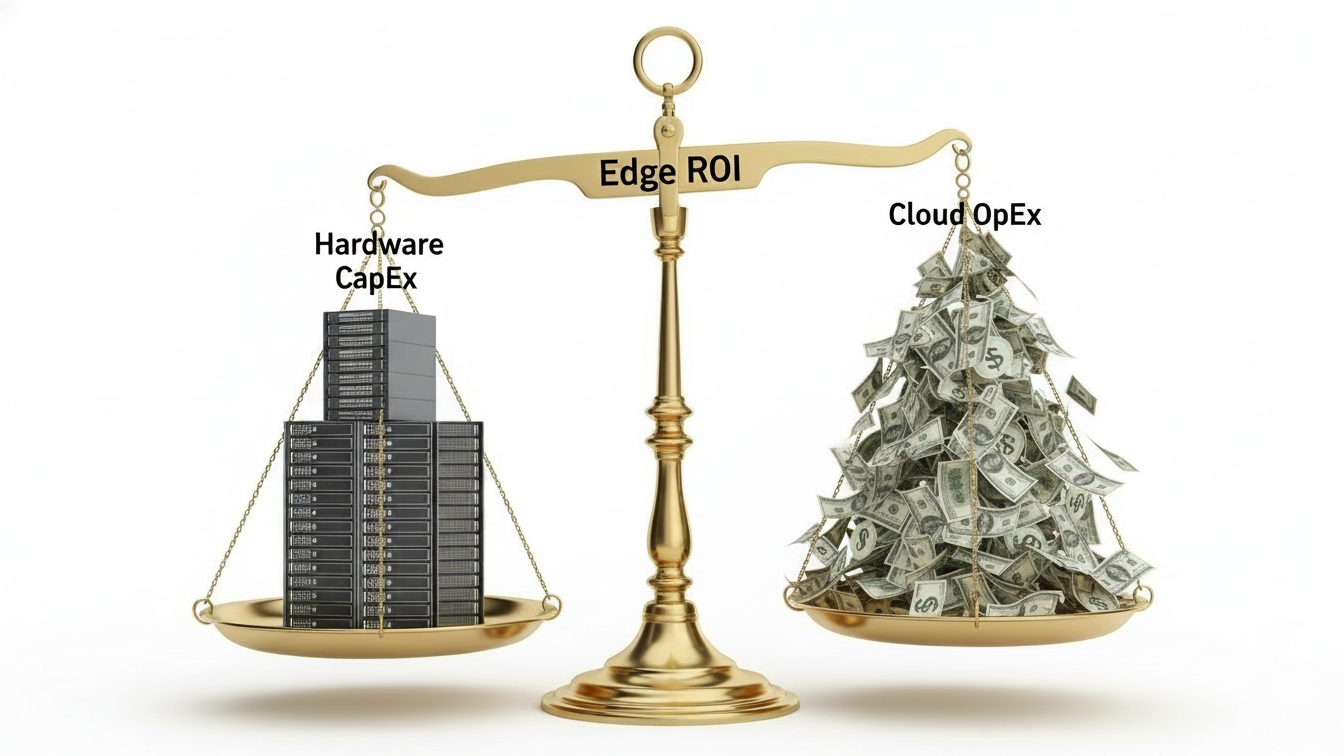

The combination of Egress Fees, Storage Costs, and Latency Penalties has made "Cloud-Native" prohibitively expensive for video analytics and real-time inference. This article analyzes the inflection point where Edge Computing becomes the superior economic choice.

The Cloud Cost Equation

In a centralized cloud model, your cost driver is Data Gravity.

$$\text{Cost}_{\text{cloud}} = (\text{Ingress/Egress}) + (\text{Storage}) + (\text{Cloud GPU Rental})$$

The Hidden Killer: Bandwidth. Streaming a single 4K video feed (25 Mbps) around the clock consumes ~8 TB of bandwidth per month. At standard cloud ingress/processing rates, just getting the data to the GPU costs more than the inference itself.
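The ~8 TB figure follows directly from the bitrate. A minimal sketch of the arithmetic, assuming a continuous 24/7 stream, a 30-day month, and decimal units (1 TB = 10^12 bytes); the function name is illustrative:

```python
SECONDS_PER_DAY = 86_400


def monthly_terabytes(bitrate_mbps: float, days: int = 30) -> float:
    """Data volume, in decimal terabytes, of a stream that never pauses."""
    bytes_per_second = bitrate_mbps * 1_000_000 / 8  # megabits -> bytes
    total_bytes = bytes_per_second * SECONDS_PER_DAY * days
    return total_bytes / 1e12


per_feed_tb = monthly_terabytes(25)  # one 4K feed at 25 Mbps
print(f"{per_feed_tb:.1f} TB per feed per month")  # prints "8.1 TB per feed per month"
```

Multiplying by the number of cameras gives the fleet-wide volume, which is what the bandwidth line of a cloud bill scales with.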

Latency: Round-trip latency to a cloud region (50-100 ms) is unacceptable for safety-critical applications such as autonomous robotics or factory shut-off valves.

The Edge Cost Equation

In an edge model, the cost driver is Hardware CapEx and Fleet Management.

$$\text{Cost}_{\text{edge}} = (\text{Hardware CapEx}) + (\text{Fleet Ops Software}) + (\text{Electricity})$$

Hardware: High-performance edge AI modules (like NVIDIA Jetson Orin or Hailo-8) now deliver server-grade inference (200+ TOPS) for under $1,000.

Zero Egress: You process the video locally and send only the metadata (e.g., "Person detected at timestamp X") to the cloud. This reduces bandwidth consumption by 99.9%.
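To get a feel for the size of that reduction, the sketch below compares a small JSON detection event against the 25 Mbps video stream it replaces. The event schema, field names, and the rate of 10 events per second are assumptions made for illustration, not a format the article prescribes:

```python
import json

VIDEO_BITRATE_BPS = 25_000_000  # 4K feed at 25 Mbps, as cited earlier

# Hypothetical detection event; every field name here is illustrative.
event = {
    "camera_id": "cam-017",
    "timestamp": "2025-01-01T12:00:00Z",
    "label": "person",
    "confidence": 0.94,
    "bbox": [412, 188, 655, 720],
}


def metadata_reduction(events_per_second: float) -> float:
    """Fraction of bandwidth saved by sending events instead of raw video."""
    payload_bits = len(json.dumps(event).encode("utf-8")) * 8
    metadata_bps = payload_bits * events_per_second
    return 1 - metadata_bps / VIDEO_BITRATE_BPS


# Assumed rate: 10 detection events per second per camera.
print(f"Bandwidth reduction: {metadata_reduction(10):.3%}")
```

Under these assumptions the saving comes out above 99.9%. Transport overhead (TLS, HTTP or MQTT framing) is ignored here and would reduce it slightly.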

The Break-Even Analysis

Consider a "Visual Inspection" use case for a manufacturing plant with 50 cameras.

| Cost Category | Cloud Approach (AWS/Azure) | Edge Approach (Local Devices) |
| --- | --- | --- |
| Hardware | $0 (OpEx) | $50,000 (50 x $1k devices) |
| Bandwidth | $15,000/month (streaming 50 feeds) | $50/month (metadata only) |
| Compute | $10,000/month (GPU instances) | $500/month (electricity) |
| Year 1 Total | $300,000 | $56,600 |

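Verdict: The Edge architecture breaks even in Month 3. After two months the cumulative cloud bill ($50,000) roughly matches the edge hardware outlay, and from the third month onward the edge deployment saves about $24,450 per month.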
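The break-even month can be checked with a few lines of arithmetic. This is a minimal sketch using only the figures from the 50-camera example; the `CostModel` class and `break_even_month` helper are illustrative names, and the Fleet Ops Software term from the edge equation is left out because the example does not price it:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CostModel:
    upfront: float  # one-time spend (CapEx)
    monthly: float  # recurring spend (OpEx)

    def cumulative(self, months: int) -> float:
        """Total spend by the end of the given month."""
        return self.upfront + self.monthly * months


# Figures from the 50-camera visual inspection example.
cloud = CostModel(upfront=0, monthly=15_000 + 10_000)  # bandwidth + GPU instances
edge = CostModel(upfront=50 * 1_000, monthly=50 + 500)  # devices; metadata + electricity


def break_even_month(baseline: CostModel, candidate: CostModel,
                     horizon: int = 60) -> Optional[int]:
    """First month at whose end the candidate's total is at or below the baseline's."""
    for month in range(1, horizon + 1):
        if candidate.cumulative(month) <= baseline.cumulative(month):
            return month
    return None  # no break-even within the horizon


print(cloud.cumulative(12))            # 300000
print(edge.cumulative(12))             # 56600
print(break_even_month(cloud, edge))   # 3
```

Adding a recurring fleet management cost to `edge.monthly` pushes the break-even later, so it is worth rerunning the calculation with a real quote before committing to hardware.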

Strategic Recommendation

Move to the Edge if: Data volume is high (video, LIDAR), connectivity is intermittent (mines, oil rigs), or privacy is paramount.

Keep in the Cloud if: The workload is bursty (10,000 GPUs for 1 hour) or the data is small (text logs, telemetry).
