The excitement of building a Retrieval-Augmented Generation (RAG) application is often followed by a sobering moment: the arrival of the first cloud bill. You built a system that magically retrieves the right context for your Large Language Model (LLM), but you likely didn't anticipate the "hidden" tax of that magic. While everyone fixates on token costs and GPT-4 API fees, a silent budget killer is lurking in your infrastructure: the vector database. As you scale from a prototype of a few thousand documents to a production beast with millions of embeddings, your storage and compute costs don't just grow linearly; they often skyrocket.

This is where FinOps for Vectors becomes a critical discipline for engineering leaders. It is no longer enough to just "store and retrieve"; we must optimize the unit economics of every search. In the same way that traditional FinOps revolutionized cloud spending by bridging the gap between finance and DevOps, FinOps for vectors applies rigorous financial governance to the high-dimensional world of AI. If you want to survive the age of RAG without bleeding margins, you need to treat your vector embeddings not just as data points, but as assets with a carrying cost.

The Hidden Mathematics of Vector Bill Shock

Most developers underestimate the sheer weight of high-dimensional data until it is too late. When you spin up a standard RAG pipeline, you are typically dealing with floating-point vectors. A single 1536-dimensional vector (the output size of OpenAI’s text-embedding-ada-002 and text-embedding-3-small models) using float32 precision takes up 1536 × 4 = 6,144 bytes, or roughly 6 kilobytes of memory. That sounds negligible until you do the math for an enterprise corpus: at 100 million vectors, that is roughly 614 GB of raw embeddings. Indexing them doesn't just require storage; it requires high-performance RAM for low-latency retrieval. You aren't just paying for disk space; you are paying for premium, high-availability memory that can cost upwards of ten times more than standard object storage.
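The arithmetic is worth running once for your own corpus. Here is a minimal sketch; the helper name `raw_vector_bytes` is ours, and it counts only the raw embeddings, not any index overhead or metadata.

```python
def raw_vector_bytes(num_vectors: int, dims: int, bytes_per_dim: int = 4) -> int:
    """Raw size of the embeddings alone (float32 = 4 bytes per dimension)."""
    return num_vectors * dims * bytes_per_dim


per_vector = raw_vector_bytes(1, 1536)        # 6144 bytes
corpus = raw_vector_bytes(100_000_000, 1536)  # 614_400_000_000 bytes

print(f"one vector:   {per_vector} bytes")
print(f"100M vectors: {corpus / 1e9:.1f} GB")
```

Swap in your own vector count and dimensionality to get a floor for the memory you will be billed for.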

Furthermore, the cost isn't static. The complexity of the index, the data structure that allows for fast "nearest neighbor" searches, adds massive overhead. Popular indexing algorithms like HNSW (Hierarchical Navigable Small World) are notoriously memory-hungry because they build a multi-layered graph on top of your raw data. This means your actual memory footprint can be 30% to 50% larger than the raw data size. In a FinOps context, this is overhead spend that doesn't deliver direct value but is necessary to keep the lights on. Understanding this baseline is the first step toward optimization; you cannot optimize what you do not measure, and in the world of vectors, you are measuring gigabytes of RAM, much of which may sit idle most of the time.
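To turn that overhead range into a planning number, you can apply it to the raw size. This is a rough sketch that simply takes the 30% to 50% range quoted above as given; the function name `footprint_with_index` is hypothetical, and the real overhead depends on your index parameters.

```python
def footprint_with_index(raw_gb: float, overhead: float) -> float:
    """Estimated memory once the graph index sits on top of the raw vectors."""
    return raw_gb * (1.0 + overhead)


raw_gb = 100_000_000 * 1536 * 4 / 1e9      # raw float32 embeddings, in GB
low = footprint_with_index(raw_gb, 0.30)   # optimistic end of the range
high = footprint_with_index(raw_gb, 0.50)  # pessimistic end of the range

print(f"raw: {raw_gb:.0f} GB, with index: {low:.0f} to {high:.0f} GB")
```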

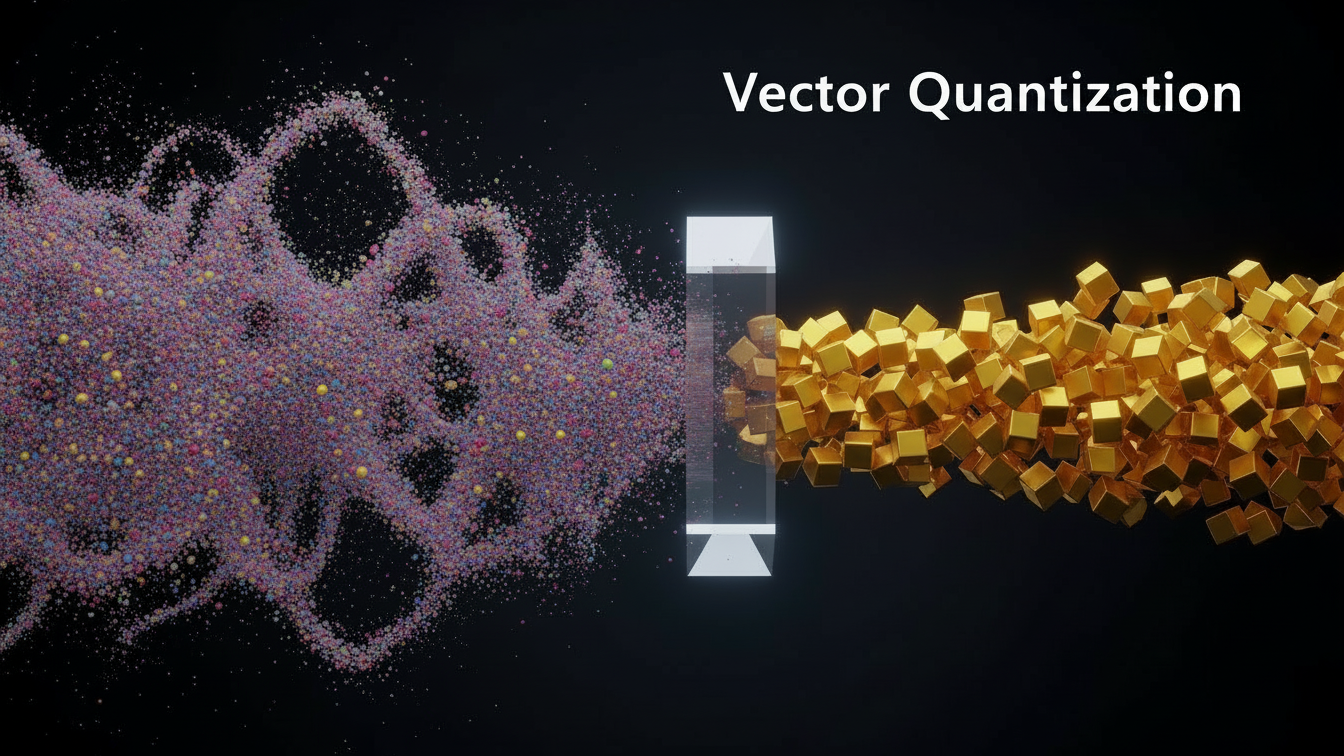

Shrinking the Footprint with Quantization

One of the most powerful levers in your FinOps toolkit is quantization. In simple terms, quantization creates a "lossy" compression of your vectors, trading a tiny fraction of accuracy for massive cost savings. Instead of storing every dimension as a high-precision 32-bit floating-point number, you can compress them into 8-bit integers or even binary values. This is not just a minor tweak; the financial impact is structural. Moving from float32 to int8 (Scalar Quantization) can reduce your memory requirements by a factor of four. For a system costing $10,000 a month in RAM, that is a reduction to roughly $2,500, assuming the bill scales with vector memory, often with negligible impact on the relevance of your search results.
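To make the float32-to-int8 trade concrete, here is a minimal, standard-library sketch of scalar quantization. It assumes a simple symmetric, per-vector scale; production engines typically calibrate ranges differently (per dimension or per segment, for example), so treat the function names and the scheme as illustrative only.

```python
import random
from array import array


def quantize_int8(vec):
    """Map floats to int8 codes using one symmetric scale for the whole vector."""
    peak = max(abs(x) for x in vec)
    scale = peak / 127 if peak else 1.0
    codes = array("b", (round(x / scale) for x in vec))  # 1 byte per dimension
    return codes, scale


def dequantize(codes, scale):
    """Approximate reconstruction of the original floats."""
    return [c * scale for c in codes]


random.seed(0)
vec = [random.gauss(0, 1) for _ in range(1536)]
codes, scale = quantize_int8(vec)

float32_bytes = 4 * len(vec)                 # 4 bytes per dimension
int8_bytes = codes.itemsize * len(codes)     # 1 byte per dimension
max_error = max(abs(a - b) for a, b in zip(vec, dequantize(codes, scale)))

print(f"{float32_bytes} bytes -> {int8_bytes} bytes, max error {max_error:.4f}")
```

The reconstruction error per dimension is bounded by half the scale, which is why the loss is usually small relative to the distances between vectors.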

The science behind this is compelling. Recent benchmarks and industry reports suggest that scalar quantization can reduce memory footprints by approximately 75% while maintaining recall rates above 95% for many standard datasets. For even more aggressive savings, Binary Quantization turns vectors into strings of 0s and 1s, storing one bit per dimension instead of 32 and slashing memory usage by up to 32x. While this aggressive approach is best suited for ultra-high-dimensional models (like Cohere’s or OpenAI’s recent outputs), it represents the future of cost-efficient AI. By implementing quantization, you are essentially telling your CFO that you can store four times the data for the same price, fundamentally altering the ROI calculation of your RAG application.
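Binary quantization is easy to sketch as well. The example below assumes the simplest possible scheme: keep only the sign of each dimension and compare vectors by Hamming distance. The helper names are ours, and real systems commonly re-rank the top candidates with the original vectors to recover accuracy.

```python
import random


def binarize(vec):
    """Pack the sign of each dimension into a single integer (1 bit per dimension)."""
    bits = 0
    for x in vec:
        bits = (bits << 1) | (1 if x > 0 else 0)
    return bits


def hamming(a, b):
    """Number of differing bits; a cheap stand-in for vector distance."""
    return bin(a ^ b).count("1")


random.seed(1)
dims = 1536
query = [random.gauss(0, 1) for _ in range(dims)]
near = [x + random.gauss(0, 0.3) for x in query]   # a slightly perturbed copy
far = [random.gauss(0, 1) for _ in range(dims)]    # an unrelated vector

q, n, f = binarize(query), binarize(near), binarize(far)

float32_bytes = dims * 4    # 6144 bytes
binary_bytes = dims // 8    # 192 bytes

print(f"compression: {float32_bytes // binary_bytes}x")
print(f"hamming to near: {hamming(q, n)}, to far: {hamming(q, f)}")
```

Even with only one bit per dimension, the perturbed copy stays much closer to the query than the unrelated vector, which is the property the approach relies on.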

Moving Beyond RAM: Tiered Storage Architectures

The assumption that all vector data must live in RAM is a relic of early vector search technology. In a mature FinOps strategy, we must challenge the idea that "hot" access speeds are required for "cold" data. Most enterprise knowledge bases follow a power-law distribution where a small percentage of documents answer the vast majority of queries. Storing rarely accessed archival data in expensive RAM is financially irresponsible. This is where tiered storage architectures and disk-based indexing come into play, allowing you to balance performance with pragmatism.

Modern vector databases and algorithms like DiskANN (Disk-based Approximate Nearest Neighbor) allow you to store the bulk of your index on NVMe SSDs, which are significantly cheaper than RAM, while keeping only a compressed "navigation" graph in memory. This approach can reduce infrastructure costs by an order of magnitude. By categorizing your data based on access frequency, keeping your "hot" customer support docs in RAM and your "cold" regulatory archives on disk, you apply a classic FinOps principle: rightsizing the resource to the workload. This hybrid approach ensures that you are paying premium rates only for premium performance, rather than subsidizing the storage of data that may never be queried.
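As a starting point for that categorization, here is a hedged sketch of a tiering rule. The `Collection` fields, the sample numbers, and the greedy "queries per GB" heuristic are all assumptions for illustration, not a feature of any particular database.

```python
from dataclasses import dataclass


@dataclass
class Collection:
    name: str
    size_gb: float
    queries_per_day: int


def assign_tiers(collections, ram_budget_gb):
    """Greedy rule: fill the RAM budget with the collections that serve
    the most queries per GB; everything else goes to the disk tier."""
    ranked = sorted(collections,
                    key=lambda c: c.queries_per_day / c.size_gb,
                    reverse=True)
    tiers, used = {}, 0.0
    for c in ranked:
        if used + c.size_gb <= ram_budget_gb:
            tiers[c.name] = "ram"
            used += c.size_gb
        else:
            tiers[c.name] = "disk"
    return tiers


# Hypothetical workload, for illustration only.
collections = [
    Collection("support_docs", size_gb=40, queries_per_day=50_000),
    Collection("product_catalog", size_gb=120, queries_per_day=8_000),
    Collection("regulatory_archive", size_gb=400, queries_per_day=30),
]
tiers = assign_tiers(collections, ram_budget_gb=200)
print(tiers)
```

In practice you would feed this from real access logs and revisit the assignment periodically, since "hot" and "cold" shift over time.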

Strategic Indexing and the "Good Enough" Threshold

Engineers often default to the configuration that gives the highest possible recall (accuracy), but in a business context, the difference between 99% recall and 95% recall can be double the cost. FinOps for vectors requires a conversation about the "Good Enough" threshold. Choosing the right index type is a financial decision. A flat index (searching every single vector) provides perfect accuracy but is computationally ruinous at scale. Conversely, an Inverted File Index (IVF) clusters your data and searches only a fraction of it, drastically cutting compute costs (CPU cycles) at the expense of missing a few potential matches.

The optimization strategy here involves tuning parameters like M and ef_construction, which shape an HNSW graph at build time, the search-time ef parameter (called ef_search or efSearch in some libraries), which controls how many candidates HNSW explores per query, and nprobe in IVF indexes, which sets how many clusters are scanned. These technical toggles are effectively volume knobs for your monthly bill. Lowering the number of candidates checked during a search reduces the CPU load per query, allowing you to serve more users with fewer instances. It is vital to benchmark the relationship between recall and cost for your specific use case. If your RAG application is answering general HR questions, a 95% recall rate is likely acceptable. Paying for those final four points of recall, from 95% to 99%, might cost you 50% more in infrastructure. A FinOps-minded engineer knows that perfect is the enemy of the profitable.
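A benchmark like that can be prototyped in a few lines before you touch a real cluster. The sketch below is a toy IVF in pure Python, under loud assumptions: centroids are sampled at random rather than trained with k-means, the data is synthetic Gaussian noise, and "cost" is approximated by the fraction of vectors scanned. It illustrates the shape of the recall-versus-work curve, not the numbers you will see in production.

```python
import random


def dist2(a, b):
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def build_ivf(vectors, n_lists, rng):
    """Assign every vector to its nearest centroid (centroids are random samples)."""
    centroids = rng.sample(vectors, n_lists)
    lists = [[] for _ in range(n_lists)]
    for i, v in enumerate(vectors):
        nearest = min(range(n_lists), key=lambda c: dist2(v, centroids[c]))
        lists[nearest].append(i)
    return centroids, lists


def ivf_search(query, vectors, centroids, lists, nprobe, k):
    """Scan only the nprobe lists whose centroids are closest to the query."""
    probe = sorted(range(len(centroids)),
                   key=lambda c: dist2(query, centroids[c]))[:nprobe]
    candidates = [i for c in probe for i in lists[c]]
    top = sorted(candidates, key=lambda i: dist2(query, vectors[i]))[:k]
    return top, len(candidates)


def exact_search(query, vectors, k):
    """Flat index: compare against every vector."""
    return sorted(range(len(vectors)), key=lambda i: dist2(query, vectors[i]))[:k]


rng = random.Random(42)
vectors = [[rng.gauss(0, 1) for _ in range(16)] for _ in range(2000)]
queries = [[rng.gauss(0, 1) for _ in range(16)] for _ in range(20)]
centroids, lists = build_ivf(vectors, n_lists=32, rng=rng)
truth = [set(exact_search(q, vectors, 10)) for q in queries]

results = {}
for nprobe in (1, 4, 16, 32):
    hits = scanned = 0
    for q, expected in zip(queries, truth):
        found, n = ivf_search(q, vectors, centroids, lists, nprobe, 10)
        hits += len(expected & set(found))
        scanned += n
    recall = hits / (10 * len(queries))
    scan_fraction = scanned / (len(vectors) * len(queries))
    results[nprobe] = (recall, scan_fraction)
    print(f"nprobe={nprobe:2d}  recall={recall:.2f}  scanned={scan_fraction:.0%}")
```

Plotting recall against the scanned fraction for your own data is what lets you pick the "Good Enough" point deliberately instead of defaulting to the most expensive setting.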

Implementing Unit Economics and Tagging

The ultimate goal of FinOps is to drive accountability, and this is impossible without granular visibility. In a shared vector database, you might have embeddings from marketing, sales, and engineering all living in the same cluster. If the bill spikes, who is responsible? To solve this, you must implement a robust tagging and attribution strategy. You should tag collections and indices by cost center, product line, or environment (dev vs. prod). This allows you to calculate the "Cost Per Query" or "Cost Per Vector Stored" for each business unit.
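Once tags exist, the attribution math is simple. Here is a minimal sketch that splits a shared monthly bill across tags in proportion to vectors stored and then derives a cost per query. The allocation rule, the function name `allocate_costs`, and all the sample figures are assumptions for illustration; you may prefer to weight by query volume or memory instead.

```python
def allocate_costs(monthly_bill, usage):
    """Split a shared bill by share of vectors stored, then compute cost per query."""
    total_vectors = sum(u["vectors"] for u in usage)
    report = {}
    for u in usage:
        allocated = monthly_bill * u["vectors"] / total_vectors
        per_query = allocated / u["queries"] if u["queries"] else None
        report[u["tag"]] = {
            "allocated": allocated,
            "cost_per_query": per_query,
            "cost_per_million_vectors": allocated / (u["vectors"] / 1_000_000),
        }
    return report


# Hypothetical usage pulled from tagged collections.
usage = [
    {"tag": "marketing", "vectors": 2_000_000, "queries": 100_000},
    {"tag": "sales", "vectors": 3_000_000, "queries": 60_000},
    {"tag": "engineering", "vectors": 5_000_000, "queries": 2_500},
]
report = allocate_costs(10_000, usage)

for tag, row in report.items():
    print(f"{tag:12s} ${row['allocated']:>8,.0f}  ${row['cost_per_query']:.2f}/query")
```

With these made-up inputs, the team that stores the most and queries the least ends up with the highest cost per query, which is exactly the kind of signal the tagging is meant to surface.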

Once you have this data, you can shift the conversation from "We are spending too much" to "Unit economics are healthy." For instance, if the Marketing team's RAG bot costs $0.05 per query but deflects a support call costing $15.00, the spend is justified. However, if an internal R&D tool is costing $2.00 per query because of unoptimized indices and oversized embeddings, the data will highlight the inefficiency immediately. According to the State of FinOps 2024 report, meaningful cost allocation is the number one challenge for organizations scaling cloud usage. By bringing this transparency to your vector database, you move from being a cost center to being a value driver, enabling the business to scale AI with confidence rather than fear.
