If you operate Kubernetes long enough, you eventually face a confusing reality. Your cluster is stable. Pods are healthy. Latency is within SLOs. And yet, the cloud bill keeps growing. You add nodes, but utilization never seems to improve. This is where Kubernetes bin packing enters the conversation, not as an abstract scheduling concept, but as one of the most effective ways of increasing node utilization to save money.

Most Kubernetes cost problems are not caused by runaway workloads. They are caused by quiet inefficiencies. CPU and memory sit idle across nodes because pods are spread out conservatively. Autoscalers react correctly to requests, but those requests are inflated. The cluster works exactly as configured and still wastes money.

This article explains why bin packing matters in real-world clusters, how Kubernetes scheduling decisions directly affect cloud spend, and how DevOps teams use bin packing strategies to turn underutilized infrastructure into measurable savings.

Why Is Node Utilization the Hidden Cost Lever?

Cloud providers charge for nodes, not pods. No matter how efficiently your applications run inside containers, the bill reflects the capacity you provision underneath. This makes node utilization one of the most important and most misunderstood cost drivers in Kubernetes.

The CNCF Annual Survey reports that Kubernetes is overwhelmingly used in production, often at significant scale, which amplifies the financial impact of even small inefficiencies.

In many clusters, average node utilization sits well below 50%, even though workloads appear busy. This gap is not usually caused by a lack of demand but by how workloads are scheduled and constrained.

What Does Bin Packing Mean in Kubernetes?

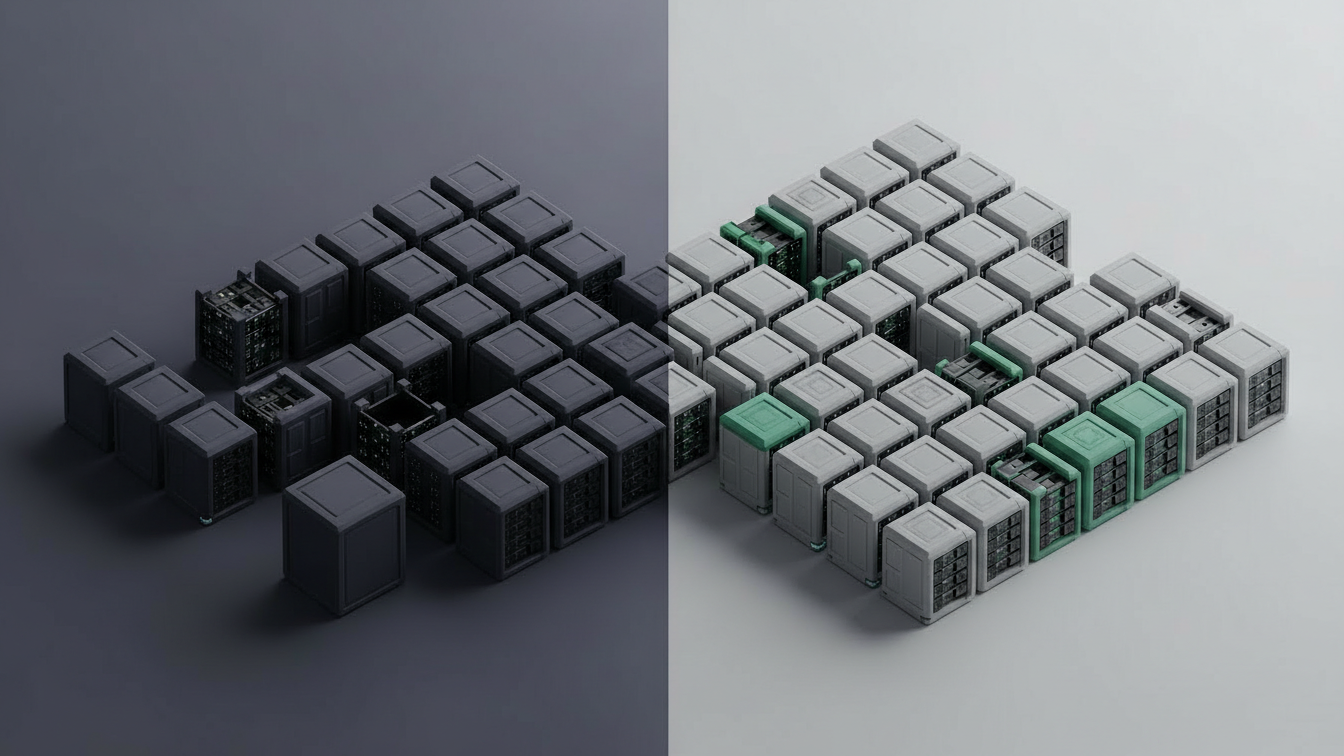

Bin packing is a scheduling strategy that aims to place workloads onto as few nodes as possible while respecting resource constraints. In practical terms, it means filling nodes efficiently before adding new ones.
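To make the idea concrete, here is a minimal sketch of the classic first-fit decreasing heuristic applied to CPU requests. It is an offline illustration only: the real scheduler places pods one at a time and weighs many more factors. The pod sizes and the 4000-millicore node capacity are made-up values.

```python
from typing import List


def first_fit_decreasing(pod_requests_m: List[int], node_capacity_m: int) -> List[List[int]]:
    """Pack CPU requests (millicores) onto equal-sized nodes, largest pods first."""
    nodes: List[List[int]] = []
    for request in sorted(pod_requests_m, reverse=True):
        if request > node_capacity_m:
            raise ValueError(f"pod requesting {request}m cannot fit on any node")
        for node in nodes:
            # Reuse the first existing node that still has room.
            if sum(node) + request <= node_capacity_m:
                node.append(request)
                break
        else:
            # No existing node had room, so open a new one.
            nodes.append([request])
    return nodes


# Hypothetical pod CPU requests in millicores (8000m in total).
pods = [500, 1500, 700, 300, 2000, 1000, 1200, 800]
packed = first_fit_decreasing(pods, node_capacity_m=4000)
print(len(packed), "nodes:", packed)
```

With these numbers the heuristic fills two nodes completely, which is the outcome bin packing aims for: existing nodes are filled before a new one is opened.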

Kubernetes’s default scheduler is designed to balance multiple priorities, including fairness, fault tolerance, and performance. Cost efficiency is not its primary objective. As the Kubernetes documentation explains, the scheduler uses scoring and filtering mechanisms that often spread pods across nodes to reduce contention. This behavior improves resilience but can reduce utilization. Bin packing shifts the emphasis toward consolidation, ensuring that existing capacity is used effectively before scaling out.
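The difference between spreading and consolidating can be sketched with two scoring functions. They are loosely modeled on the LeastAllocated and MostAllocated scoring strategies of the scheduler's NodeResourcesFit plugin, simplified here to a single resource. Node names and sizes are hypothetical, and the real scheduler combines several weighted plugins and resources.

```python
from typing import Callable, Dict, Optional

ALLOCATABLE_M = 4000  # hypothetical allocatable CPU per node, in millicores


def least_allocated_score(requested_m: int, allocatable_m: int) -> float:
    """Higher score for emptier nodes, which tends to spread pods out."""
    return (allocatable_m - requested_m) * 100 / allocatable_m


def most_allocated_score(requested_m: int, allocatable_m: int) -> float:
    """Higher score for fuller nodes, which tends to pack pods together."""
    return requested_m * 100 / allocatable_m


def pick_node(
    requested_by_node: Dict[str, int],
    pod_request_m: int,
    score: Callable[[int, int], float],
) -> Optional[str]:
    """Filter out nodes where the pod does not fit, then return the best-scoring one."""
    best_name: Optional[str] = None
    best_score = float("-inf")
    for name, requested_m in requested_by_node.items():
        after_m = requested_m + pod_request_m
        if after_m > ALLOCATABLE_M:  # filtering step
            continue
        node_score = score(after_m, ALLOCATABLE_M)  # scoring step
        if node_score > best_score:
            best_name, best_score = name, node_score
    return best_name


cluster = {"node-a": 3000, "node-b": 1000}  # CPU already requested on each node
print(pick_node(cluster, 500, least_allocated_score))  # node-b (spread)
print(pick_node(cluster, 500, most_allocated_score))   # node-a (pack)
```

The same pod lands on different nodes depending only on which scoring function is in effect, which is why the scoring strategy is such a direct lever on utilization.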

Why Do Kubernetes Defaults Favor Safety Over Efficiency?

Kubernetes was designed to run diverse workloads safely, not cheaply. Spreading pods across nodes reduces blast radius, avoids noisy neighbor problems, and improves availability. These are sensible defaults.

From a cost perspective, however, this approach leads to fragmentation. Small gaps of unused CPU and memory accumulate across nodes. Individually, they are harmless. Collectively, they represent capacity you are paying for but not using.
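A small worked example shows how fragmentation strands capacity. The numbers are hypothetical: four nodes each have 600 millicores unrequested, and a new pod asks for 1000 millicores.

```python
from typing import List


def fits_somewhere(free_m_per_node: List[int], pod_request_m: int) -> bool:
    """True if at least one node has a large enough gap for the pod."""
    return any(free >= pod_request_m for free in free_m_per_node)


def stranded_capacity(free_m_per_node: List[int], pod_request_m: int) -> int:
    """Sum the free CPU on nodes whose individual gap is too small for the pod."""
    return sum(free for free in free_m_per_node if free < pod_request_m)


free_m = [600, 600, 600, 600]  # unrequested CPU per node, in millicores
pod_request_m = 1000

print("total free:", sum(free_m))                                       # 2400
print("pod fits on a node:", fits_somewhere(free_m, pod_request_m))     # False
print("stranded for this pod:", stranded_capacity(free_m, pod_request_m))  # 2400
```

The cluster has 2400 millicores free in aggregate, yet the pod cannot be placed anywhere. Every one of those millicores is paid for and unusable for this workload.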

The Google Cloud Architecture Framework notes that inefficient resource requests and scheduling decisions are among the most common causes of wasted capacity in containerized environments.

Bin packing does not eliminate safety concerns, but it forces teams to make trade-offs explicitly rather than inheriting them implicitly.

Resource Requests: The Root of the Problem

Most bin packing challenges begin with resource requests. Kubernetes schedules pods based on requested CPU and memory, not actual usage. When requests are inflated, the scheduler assumes nodes are fuller than they really are.
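The gap between what the scheduler sees and what the node actually does can be illustrated with a few lines of arithmetic. This is a sketch with made-up pods; the `Pod` class and `node_view` helper are illustrative names, not part of any Kubernetes API.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Pod:
    name: str
    cpu_request_m: int  # what the scheduler accounts for
    cpu_usage_m: int    # what the pod actually consumes


def node_view(pods: List[Pod], allocatable_m: int) -> Dict[str, float]:
    """Compare the scheduler's view (requests) with real consumption (usage)."""
    requested = sum(p.cpu_request_m for p in pods)
    used = sum(p.cpu_usage_m for p in pods)
    return {
        "requested_pct": 100 * requested / allocatable_m,
        "used_pct": 100 * used / allocatable_m,
        "schedulable_m": allocatable_m - requested,
    }


pods = [
    Pod("api", cpu_request_m=1200, cpu_usage_m=300),
    Pod("worker", cpu_request_m=1200, cpu_usage_m=300),
    Pod("cache", cpu_request_m=1200, cpu_usage_m=300),
]
print(node_view(pods, allocatable_m=4000))
```

In this example the node is 90% full from the scheduler's point of view while only 22.5% of its CPU is in use, and just 400 millicores remain schedulable.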

This behavior is well documented. Google’s Kubernetes best practices emphasize that over-requesting resources leads directly to poor utilization and unnecessary scaling. In real clusters, teams often over-request to avoid throttling or OOM kills. The intention is reliability. The outcome is stranded capacity that bin packing cannot recover without better inputs.

Autoscaling and the Illusion of Demand

Cluster autoscalers react to scheduling pressure. When a pod cannot be placed because no node has enough unreserved capacity to satisfy its requests, new nodes are added. This happens even if existing nodes have plenty of unused capacity in practice.

This creates an illusion of demand. The cluster appears to need more nodes, but the underlying issue is inefficient packing rather than genuine load growth. The AWS Well-Architected Cost Optimization Pillar highlights overprovisioning driven by conservative configurations as a major source of cloud waste.

Bin packing addresses this by ensuring that autoscaling decisions are based on realistic capacity usage, not inflated assumptions.
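The illusion of demand can be reproduced with a simplified scale-up check. This sketch assumes a single resource and ignores everything else a real cluster autoscaler simulates; the node figures are hypothetical.

```python
from typing import List, Tuple

# Each node is (allocatable_m, requested_m), both in CPU millicores.
Node = Tuple[int, int]


def needs_scale_up(nodes: List[Node], pod_request_m: int) -> bool:
    """True if no existing node has enough unrequested CPU for the pending pod."""
    return all(allocatable - requested < pod_request_m for allocatable, requested in nodes)


# Same workloads, two different sets of requests.
inflated_requests = [(4000, 3600), (4000, 3500), (4000, 3700)]
right_sized_requests = [(4000, 1400), (4000, 1300), (4000, 1500)]

print(needs_scale_up(inflated_requests, pod_request_m=1000))     # True
print(needs_scale_up(right_sized_requests, pod_request_m=1000))  # False
```

Nothing about the actual load differs between the two cases. Only the requests changed, and with them the decision to buy another node.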

How Does Bin Packing Improve Cost Efficiency Without Sacrificing Reliability?

Bin packing is often misunderstood as aggressive, risky optimization. In practice, it is a controlled shift in priorities.

By encouraging tighter placement of pods, teams reduce the number of active nodes required to support a given workload. This lowers compute costs directly and reduces secondary expenses such as networking and storage overhead. Crucially, bin packing does not require eliminating redundancy. Teams can still maintain multiple replicas and spread them across failure domains. The difference is that spare capacity is minimized rather than scattered.

Kubernetes Features That Enable Better Bin Packing

Kubernetes already provides mechanisms that support bin packing, even if they are not enabled by default. Pod affinity and anti-affinity rules, topology spread constraints, and scheduler scoring profiles allow teams to influence placement behavior.
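As one example of a scoring profile, the sketch below builds a scheduler configuration that switches the NodeResourcesFit plugin to the MostAllocated strategy and renders it as JSON. The profile name `bin-packing-scheduler` and the equal weights are assumptions for illustration. Check the field names against the scheduler configuration reference for your Kubernetes version, and note that managed control planes may not let you change the default scheduler's configuration.

```python
import json

scheduler_config = {
    "apiVersion": "kubescheduler.config.k8s.io/v1",
    "kind": "KubeSchedulerConfiguration",
    "profiles": [
        {
            # Hypothetical profile name; pods would opt in via spec.schedulerName.
            "schedulerName": "bin-packing-scheduler",
            "pluginConfig": [
                {
                    "name": "NodeResourcesFit",
                    "args": {
                        "scoringStrategy": {
                            # Prefer nodes that are already more heavily requested.
                            "type": "MostAllocated",
                            # Equal weights are an assumption, not a recommendation.
                            "resources": [
                                {"name": "cpu", "weight": 1},
                                {"name": "memory", "weight": 1},
                            ],
                        }
                    },
                }
            ],
        }
    ],
}

rendered = json.dumps(scheduler_config, indent=2)
print(rendered)
```

Keeping the packing behavior in a separate profile lets teams opt specific workloads in, such as batch jobs, while everything else stays on the default spreading behavior.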

Using these features responsibly requires understanding workload characteristics. Stateless services tolerate tighter packing more easily than stateful ones. Batch jobs often benefit the most from aggressive consolidation.

Vertical Pod Autoscaling and Bin Packing

Vertical Pod Autoscaler (VPA) plays a complementary role in bin packing strategies. By adjusting resource requests based on actual usage, VPA reduces the mismatch between requested and consumed resources.

Google Cloud documentation notes that right-sizing workloads through vertical scaling significantly improves cluster efficiency when combined with proper scheduling strategies. When requests reflect reality, bin packing becomes far more effective. Nodes fill up meaningfully rather than appearing full due to exaggerated reservations.
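The right-sizing idea can be sketched as a percentile of observed usage plus a safety margin. This is a deliberately simplified stand-in, not the algorithm VPA's recommender uses. The 90th percentile, the 15% margin, and the usage samples are all assumptions.

```python
from typing import List


def recommend_request_m(
    usage_samples_m: List[int],
    percentile_pct: int = 90,
    safety_margin_pct: int = 15,
) -> int:
    """Suggest a CPU request: nearest-rank percentile of usage plus a margin."""
    if not usage_samples_m:
        raise ValueError("need at least one usage sample")
    ordered = sorted(usage_samples_m)
    # Nearest-rank percentile, using integer ceiling division.
    rank = max(1, -(-percentile_pct * len(ordered) // 100))
    base = ordered[rank - 1]
    # Add the safety margin, rounding up to a whole millicore.
    return -(-base * (100 + safety_margin_pct) // 100)


# Hypothetical CPU usage samples in millicores for a pod that requests 1000m.
samples = [200, 220, 250, 240, 300, 280, 260, 230, 210, 400]
print(recommend_request_m(samples))  # 345
```

A pod that requested 1000 millicores would be recommended 345 under these assumptions, which frees roughly two thirds of its reservation for the scheduler to pack other workloads into.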

The Financial Impact of Better Node Utilization

Improving node utilization has a direct and compounding effect on cost. Fewer nodes mean lower compute spend, but they also reduce associated costs such as load balancers, IP addresses, and NAT traffic. According to Flexera’s State of the Cloud Report, organizations that actively optimize utilization achieve materially better cost outcomes than those that focus solely on pricing discounts. Bin packing is one of the few optimizations that improves efficiency without relying on provider-specific pricing tactics.
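A back-of-the-envelope calculation shows how utilization translates into node count and spend. Every number here is hypothetical, including the price of 20 cents per node-hour, and utilization is taken to mean requested CPU divided by allocatable CPU.

```python
HOURS_PER_MONTH = 730  # common approximation


def nodes_needed(total_requested_m: int, allocatable_m_per_node: int, utilization_pct: int) -> int:
    """Nodes required when each node is filled to utilization_pct of its allocatable CPU."""
    usable_times_100 = allocatable_m_per_node * utilization_pct
    return -(-total_requested_m * 100 // usable_times_100)  # ceiling division


def monthly_cost_cents(node_count: int, hourly_price_cents: int) -> int:
    """Compute-only monthly cost; ignores load balancers, IPs, and NAT traffic."""
    return node_count * hourly_price_cents * HOURS_PER_MONTH


total_requested_m = 60_000  # 60 cores requested across the cluster
before = nodes_needed(total_requested_m, 4000, utilization_pct=45)
after = nodes_needed(total_requested_m, 4000, utilization_pct=75)
savings_cents = monthly_cost_cents(before, 20) - monthly_cost_cents(after, 20)

print(before, "nodes ->", after, "nodes")              # 34 nodes -> 20 nodes
print(f"monthly savings: ${savings_cents / 100:.2f}")  # monthly savings: $2044.00
```

The workload is identical in both cases. Raising average utilization from 45% to 75% removes 14 nodes in this example, before counting any of the secondary costs attached to each node.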

Observability: Knowing When Bin Packing Works

Bin packing is only effective if teams can observe its impact. Without visibility into node utilization trends, scheduling behavior, and workload patterns, optimizations become guesswork.

High-performing platform teams correlate scheduling decisions with cost outcomes. They observe how changes in request sizing or scheduler configuration affect node counts over time. This is where cost intelligence platforms quietly add value. By connecting utilization metrics with cost data, teams can validate whether bin packing strategies actually reduce spend or simply shift it elsewhere.

Bin Packing in Multi-Tenant Clusters

Multi-tenant clusters add another layer of complexity. Tight packing can improve efficiency but also increase contention between teams if not governed carefully.

Successful organizations treat bin packing as a platform-level concern. Policies enforce reasonable requests, quotas prevent monopolization, and shared standards ensure fairness. The FinOps Foundation emphasizes that shared environments require deliberate governance to balance efficiency with accountability. Bin packing becomes a tool for collective efficiency rather than a source of conflict.
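The governance rules described above can be sketched as a simple admission check, in the spirit of what Kubernetes expresses through LimitRange and ResourceQuota objects. The function name, thresholds, and return convention are hypothetical and only illustrate the logic.

```python
from typing import Optional


def admission_decision(
    pod_request_m: int,
    namespace_requested_m: int,
    namespace_quota_m: int,
    max_request_per_pod_m: int,
) -> Optional[str]:
    """Return None to admit the pod, or a short reason string to reject it."""
    # Per-pod ceiling: keeps any single request within a reasonable size.
    if pod_request_m > max_request_per_pod_m:
        return "request exceeds per-pod maximum"
    # Namespace quota: stops one team from monopolizing shared nodes.
    if namespace_requested_m + pod_request_m > namespace_quota_m:
        return "request exceeds namespace quota"
    return None


# Hypothetical tenant with an 8000m quota and a 2000m per-pod ceiling.
print(admission_decision(500, 7000, 8000, 2000))   # None (admitted)
print(admission_decision(2500, 1000, 8000, 2000))  # request exceeds per-pod maximum
print(admission_decision(1500, 7000, 8000, 2000))  # request exceeds namespace quota
```

Checks like these keep the inputs to the scheduler honest, so that tighter packing benefits every tenant rather than rewarding whoever requests the most.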

From Optimization to Continuous Practice

One-off bin packing efforts often deliver short-term gains that erode over time. New services arrive, requests drift upward, and defaults creep back in. Sustainable savings come from making bin packing a continuous practice. This includes regular review of resource requests, periodic adjustment of scheduling priorities, and automated feedback loops that highlight inefficiencies early. This approach aligns naturally with FinOps as Code, where cost efficiency is embedded into systems rather than enforced manually.

Conclusion

Kubernetes does not waste money by accident. It does exactly what it is told to do. When clusters are underutilized, it is usually because design decisions prioritize safety and simplicity over efficiency by default. Kubernetes bin packing forces teams to confront those trade-offs consciously. By increasing node utilization, organizations can save money without sacrificing reliability, as long as they understand their workloads and apply the right constraints. In a cloud-native world, cost efficiency is not a byproduct. It is a design choice. Bin packing is one of the clearest places where that choice becomes visible and measurable.
