So you've chosen an open-source Large Language Model (LLM) and want to adapt it to your specific domain. You're now faced with a critical decision: should you perform a

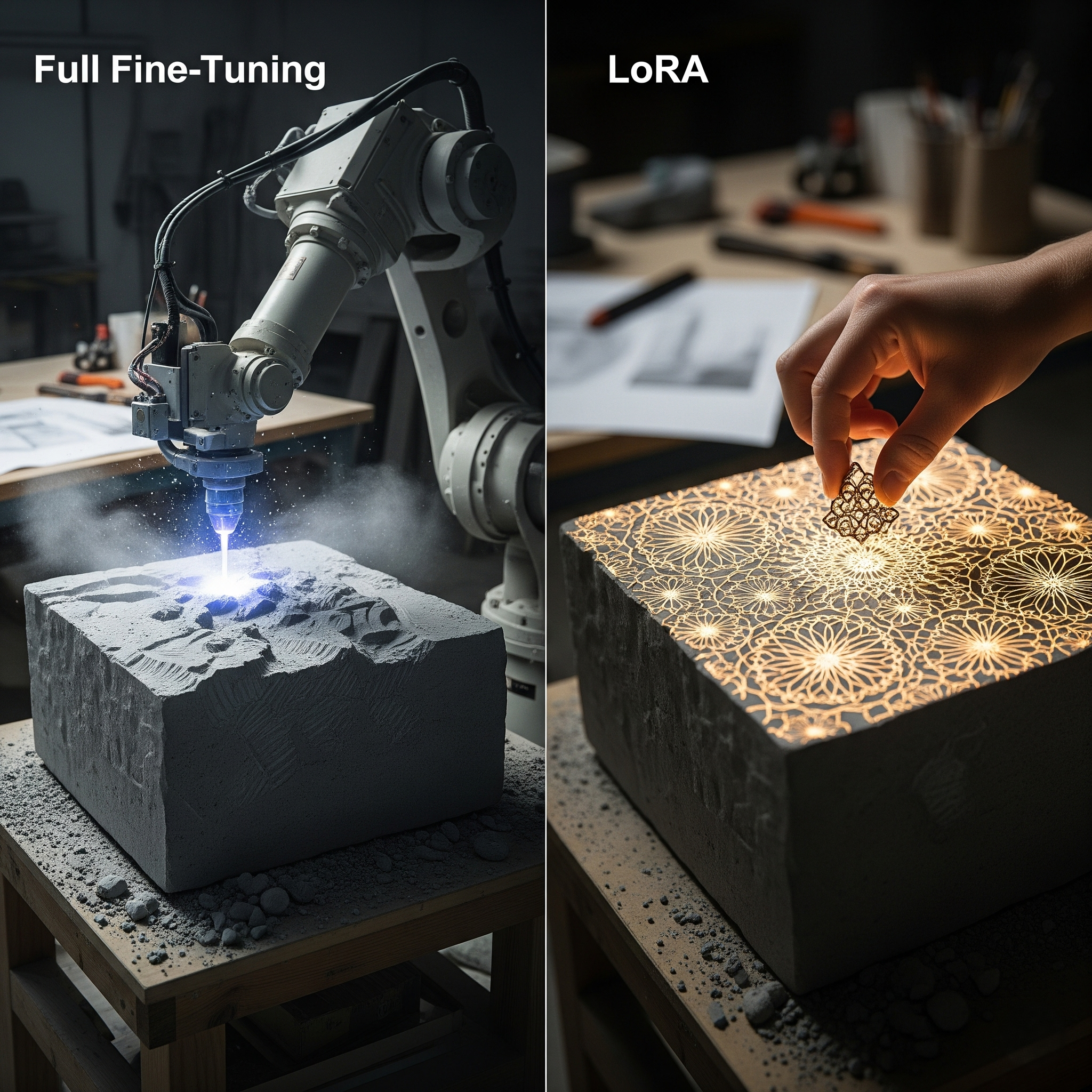

full fine-tuning, or use a more efficient technique like LoRA? This choice is a fundamental trade-off between performance, cost, and complexity. While full fine-tuning offers the potential for the highest accuracy, the immense cost has led to parameter-efficient fine-tuning (PEFT) methods like LoRA, which promise comparable results at a fraction of the expense.

What is Full Fine-Tuning?

Full fine-tuning is the traditional approach where you take a pre-trained base model and update all of its billions of parameters using your custom dataset. You are essentially re-training the entire model.

The Costs and Benefits of Full Fine-Tuning:

Benefit: Maximum Performance. By updating every weight, you have the potential to achieve the highest possible performance and the deepest adaptation to your data.

Cost: Extremely High Computational Requirements. The biggest drawback is the cost. Updating billions of parameters requires a cluster of high-end GPUs (like NVIDIA A100s or H100s) running for an extended period, which can be prohibitively expensive.

Cost: Large Model Artifacts. The result is a completely new model, meaning you have to store and manage a separate, multi-billion parameter model for every task, leading to high storage costs.
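To put rough numbers on these costs, here is a back-of-the-envelope sketch. It assumes mixed-precision training with the Adam optimizer, where a commonly used rule of thumb is about 16 bytes of GPU memory per parameter (16-bit weights and gradients, plus 32-bit master weights and two 32-bit optimizer moments). The function names are made up for illustration, activation memory is ignored, and real jobs will vary with the framework and settings.

```python
# Rough estimate only. Assumes mixed-precision Adam:
# 2 (fp16 weights) + 2 (fp16 gradients) + 4 (fp32 master weights)
# + 4 + 4 (two fp32 Adam moments) = 16 bytes per parameter.
# Activation memory is NOT included and can add substantially more.
BYTES_PER_PARAM_TRAINING = 2 + 2 + 4 + 4 + 4
BYTES_PER_PARAM_CHECKPOINT = 2  # assumes weights are saved in 16-bit precision


def full_finetune_memory_gb(num_params):
    """Approximate GPU memory (GB) for weights, gradients, and optimizer state."""
    return num_params * BYTES_PER_PARAM_TRAINING / 1e9


def checkpoint_size_gb(num_params):
    """Approximate size (GB) of one saved copy of the fine-tuned model."""
    return num_params * BYTES_PER_PARAM_CHECKPOINT / 1e9


for billions in (7, 13, 70):
    n = billions * 1e9
    print(
        f"{billions}B params: ~{full_finetune_memory_gb(n):.0f} GB of training state, "
        f"~{checkpoint_size_gb(n):.0f} GB per saved model"
    )
```

Under these assumptions a 7B-parameter model needs on the order of 112 GB for training state alone, which is already more than a single 80 GB GPU holds, and every fine-tuned task adds another checkpoint of roughly 14 GB.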

What is LoRA (Low-Rank Adaptation)?

LoRA is a clever technique where, instead of updating all the original weights, you freeze them and inject a small number of new, trainable parameters into the model. During fine-tuning, only these tiny new matrices are updated. The original billions of parameters remain untouched.
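The mechanism can be sketched for a single layer in plain Python. This is a toy illustration, not a training implementation: it assumes the usual LoRA formulation in which a frozen weight matrix W is combined with two small matrices A and B, the output is x·W + (alpha / r)·(x·A)·B, and B starts at zero so the adapted layer initially behaves exactly like the base layer. The sizes and the helper names are chosen for illustration only.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_forward(x, W, A, B, alpha):
    """Compute x @ W + (alpha / r) * (x @ A) @ B, where r is the adapter rank."""
    r = len(B)  # B has shape (r, d_out)
    base = matmul(x, W)               # frozen path
    update = matmul(matmul(x, A), B)  # low-rank trainable path
    scale = alpha / r
    return [[b + scale * u for b, u in zip(b_row, u_row)]
            for b_row, u_row in zip(base, update)]


random.seed(0)
d_in, d_out, r, alpha = 16, 16, 2, 4  # toy sizes, chosen for illustration

W = [[random.gauss(0, 1) for _ in range(d_out)] for _ in range(d_in)]  # frozen
A = [[random.gauss(0, 0.01) for _ in range(r)] for _ in range(d_in)]   # trainable
B = [[0.0] * d_out for _ in range(r)]                                  # trainable, starts at zero
x = [[random.gauss(0, 1) for _ in range(d_in)]]

frozen_params = d_in * d_out           # 256 weights that never change
trainable_params = r * (d_in + d_out)  # 64 weights in A and B

# With B at zero, the adapted layer reproduces the base layer exactly.
base_output = matmul(x, W)
output_before = lora_forward(x, W, A, B, alpha)

# Stand-in for a training step: only the adapter matrices change, W is never touched.
B[0][0] = 0.5
output_after = lora_forward(x, W, A, B, alpha)

print("frozen:", frozen_params, "trainable:", trainable_params)
print("matches base before update:", output_before == base_output)
print("matches base after update:", output_after == base_output)
```

Even in this toy layer the adapter holds a quarter of the parameters of W. At realistic sizes the gap is far larger, because the adapter grows with r × (d_in + d_out) while the frozen matrix grows with d_in × d_out.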

The Costs and Benefits of LoRA:

Benefit: Dramatically Lower Computational Cost. This is LoRA's killer feature. Because you are only training a few million new parameters instead of billions, the GPU memory and compute requirements are drastically reduced. A job that might require eight A100s with full fine-tuning can often be done on a single GPU using LoRA.

Benefit: Small, Portable Model Artifacts. The output is not a whole new model, but just the small set of trained "adapter" weights, often only a few megabytes and rarely more than a few hundred, depending on the rank and how many layers are adapted. This makes it cheap and easy to store and deploy dozens of task-specific adapters on top of the same base model.
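As a rough check on adapter size, the sketch below counts LoRA parameters for a hypothetical 7B-class model shape (32 layers, hidden size 4096). It assumes every adapted module is a square hidden-size by hidden-size projection and that the adapter is stored in 16-bit precision. Real architectures differ, so treat the numbers as orders of magnitude rather than exact figures.

```python
def lora_adapter_params(num_layers, hidden_size, rank, modules_per_layer):
    """Count LoRA parameters, assuming every adapted module is hidden_size x hidden_size."""
    params_per_module = rank * (hidden_size + hidden_size)  # the A and B matrices
    return num_layers * modules_per_layer * params_per_module


def size_mb(num_params, bytes_per_param=2):
    """Size on disk in megabytes, assuming 16-bit storage by default."""
    return num_params * bytes_per_param / 1e6


# Hypothetical 7B-class shape: 32 layers, hidden size 4096.
small = lora_adapter_params(num_layers=32, hidden_size=4096, rank=8, modules_per_layer=2)
large = lora_adapter_params(num_layers=32, hidden_size=4096, rank=64, modules_per_layer=4)

print(f"rank 8, 2 modules per layer:  {small:,} params, ~{size_mb(small):.1f} MB")
print(f"rank 64, 4 modules per layer: {large:,} params, ~{size_mb(large):.1f} MB")
```

Under these assumptions a low-rank adapter comes to about 4 million parameters and roughly 8 MB, while a more aggressive configuration lands near 134 MB. Both are tiny next to a multi-gigabyte base model checkpoint.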

Benefit: Comparable Performance. For many tasks, LoRA has been shown to achieve performance on par with full fine-tuning.

Further Optimization with QLoRA: A popular enhancement is QLoRA (Quantized LoRA), which further reduces memory usage by loading the frozen base model in 4-bit quantized precision while training the LoRA adapters on top of it, making it possible to fine-tune even larger models on consumer-grade GPUs.
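The memory effect of 4-bit loading is simple arithmetic. The sketch below compares the space taken by the frozen base weights alone at 16-bit and 4-bit precision. It deliberately ignores quantization metadata, the adapter weights, optimizer state, and activations, all of which add to the real footprint, and the function name is hypothetical.

```python
def base_weight_memory_gb(num_params, bits_per_param):
    """Memory (GB) for the frozen base weights only, at a given precision."""
    return num_params * bits_per_param / 8 / 1e9


for billions in (7, 13, 70):
    n = billions * 1e9
    fp16 = base_weight_memory_gb(n, 16)
    int4 = base_weight_memory_gb(n, 4)
    print(f"{billions}B params: ~{fp16:.1f} GB at 16-bit vs ~{int4:.1f} GB at 4-bit")
```

By this estimate the weights of a 7B-parameter model shrink from about 14 GB to about 3.5 GB, which is why such models can fit on a single consumer GPU once the remaining overheads are accounted for.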

The Verdict: When to Choose Which Method

The decision is relatively straightforward for most teams.

Choose LoRA (or QLoRA) if:

You are budget-conscious.

You need to create multiple task-specific versions of a model.

You have limited access to high-end GPU hardware.

You want to experiment and iterate quickly.

Consider Full Fine-Tuning only if:

You are trying to teach the model a completely new, complex domain vastly different from its original training data.

You have already tried LoRA and found it does not meet your specific performance requirements.

You have access to a substantial budget and GPU infrastructure.

Conclusion

For the vast majority of teams looking to customize open-source LLMs, LoRA and its variants have made full fine-tuning largely obsolete. The incredible reduction in cost and complexity, combined with performance that is often indistinguishable from a full fine-tune, makes LoRA the default, go-to choice. It democratizes the ability to adapt powerful LLMs, allowing teams without massive budgets to build highly specialized and effective AI solutions.
