Most cloud cost overruns do not come from reckless spending. They come from automation doing exactly what it was designed to do: scale, deploy, and recover quickly. Somewhere along the way, cost slips through the cracks because it is not enforced where decisions are made. This is why practical examples of using Open Policy Agent (OPA) for cost control have become increasingly relevant for DevOps teams operating at scale.

OPA was never marketed as a cost tool. It emerged as a general-purpose policy engine for cloud-native environments. Yet as organizations adopted Infrastructure as Code, Kubernetes, and continuous delivery, teams realized something important: cost is governed by the same decisions that policy engines already evaluate. Instance size, region selection, autoscaling limits, and resource quotas are all policy decisions before they become billing outcomes. This article explores how OPA is used in real systems to control cloud costs proactively, not through reports or dashboards, but through enforceable rules embedded directly into engineering workflows.

Why Cost Control Needs Policy

Cost dashboards answer an important question: what happened? Policy answers a different, more powerful question: should this be allowed to happen at all?

According to the FinOps Foundation, one of the biggest challenges in cloud cost management is delayed feedback. When cost signals arrive days or weeks after deployment, engineering teams struggle to connect cause and effect. OPA addresses this gap by shifting cost governance left. Instead of reviewing spend after infrastructure exists, policies evaluate configurations before they are applied. This turns cost from a reactive metric into a preventive constraint.

What Makes OPA Suitable for Cost Control?

Open Policy Agent is designed to separate policy from application logic. It evaluates declarative rules written in Rego against structured input data. In cloud environments, that input data often includes Terraform plans, Kubernetes manifests, or admission requests.

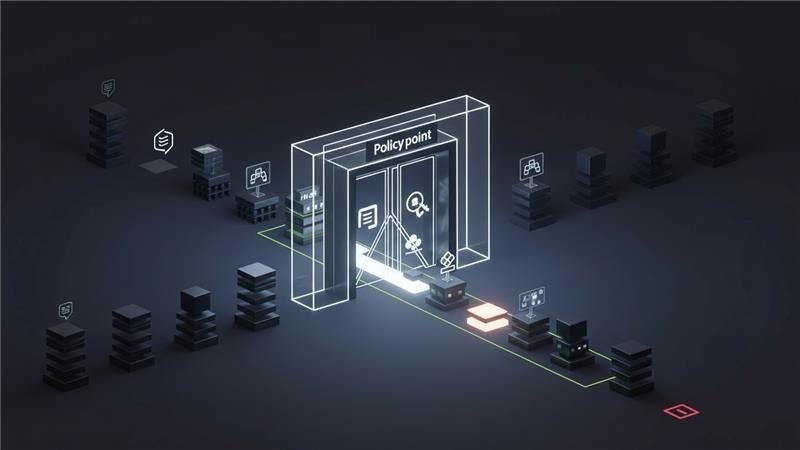

What makes OPA particularly effective for cost control is that it operates at decision points. It does not need perfect pricing data to be useful. Instead, it enforces guardrails around known cost risk factors such as oversized resources, unapproved regions, or missing cost attribution.

OPA’s widespread adoption is not theoretical. The CNCF Annual Survey consistently shows policy-as-code and governance tools as core components of production Kubernetes environments.

Cost Control Starts with Preventing Expensive Defaults

One of the most common uses of OPA for cost control is preventing expensive defaults from reaching production. Cloud providers optimize for flexibility, not frugality. Default instance sizes, storage classes, and service tiers are rarely the most cost-efficient choices.

OPA policies can evaluate infrastructure definitions and reject configurations that exceed predefined thresholds. For example, policies can prevent the use of high-cost instance families in non-production environments or restrict certain managed services to approved tiers.
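To make the idea concrete, here is a minimal Python sketch of the decision such a rule encodes. In a real deployment the rule would be written in Rego and evaluated by OPA; the set of high-cost families, the environment names, and the input shape below are all illustrative assumptions rather than anything OPA ships with.

```python
from typing import List

# Hypothetical policy data: instance families treated as high-cost, and the
# environments where they are permitted. Both sets are illustrative only.
HIGH_COST_FAMILIES = {"p4d", "x2iedn"}
ENVS_ALLOWING_HIGH_COST = {"production"}


def instance_deny_reasons(resource: dict) -> List[str]:
    """Return denial messages for one proposed resource (empty list = allowed)."""
    instance_type = resource.get("instance_type", "")
    environment = resource.get("environment", "unknown")
    # "p4d.24xlarge" -> "p4d": the family is the part before the first dot.
    family = instance_type.split(".")[0]
    if family in HIGH_COST_FAMILIES and environment not in ENVS_ALLOWING_HIGH_COST:
        return [
            f"{instance_type} is a high-cost instance type "
            f"and is not allowed in {environment}"
        ]
    return []


if __name__ == "__main__":
    print(instance_deny_reasons({"instance_type": "p4d.24xlarge", "environment": "dev"}))
    print(instance_deny_reasons({"instance_type": "t3.micro", "environment": "dev"}))
```

Keeping the family list as data, separate from the rule itself, means the threshold can change without touching the logic.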

This approach works because it targets structural cost drivers, not individual usage spikes. It reduces the likelihood of large, recurring expenses rather than chasing anomalies after the fact.

Enforcing Cost-Aware Infrastructure as Code

Terraform plans are rich sources of information long before infrastructure is provisioned. OPA integrates naturally into Terraform workflows by evaluating plan outputs during CI pipelines.

In practice, this means policies can analyze proposed resources and enforce cost-related constraints. Teams use OPA to ensure that production workloads meet stricter requirements than development environments, or that certain resource combinations are explicitly approved.
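As a rough illustration, the sketch below walks the `resource_changes` array found in Terraform's JSON plan representation and applies different allow-lists per environment. It is Python standing in for what would normally be a Rego rule. The allow-lists, the `environment` tag convention, and the decision to treat untagged resources as `dev` are assumptions made for this example.

```python
from typing import List

# Hypothetical allow-lists per environment. Real values would live in
# policy data maintained by the platform or FinOps team.
ALLOWED_INSTANCE_TYPES = {
    "dev": {"t3.micro", "t3.small"},
    "prod": {"t3.small", "m5.large", "m5.xlarge"},
}


def plan_violations(plan: dict) -> List[str]:
    """Collect cost violations from a Terraform plan in JSON form."""
    found = []
    for rc in plan.get("resource_changes", []):
        change = rc.get("change") or {}
        actions = set(change.get("actions", []))
        # Only inspect EC2 instances that are being created or updated.
        if rc.get("type") != "aws_instance" or not (actions & {"create", "update"}):
            continue
        after = change.get("after") or {}
        # Assumption: untagged resources fall under the strictest ("dev") list.
        env = (after.get("tags") or {}).get("environment", "dev")
        instance_type = after.get("instance_type")
        if instance_type not in ALLOWED_INSTANCE_TYPES.get(env, set()):
            found.append(f"{rc['address']}: {instance_type} is not approved for {env}")
    return found


# A trimmed-down plan, shaped like the JSON plan output but with most fields omitted.
example_plan = {
    "resource_changes": [
        {"address": "aws_instance.web", "type": "aws_instance",
         "change": {"actions": ["create"],
                    "after": {"instance_type": "t3.small",
                              "tags": {"environment": "dev"}}}},
        {"address": "aws_instance.batch", "type": "aws_instance",
         "change": {"actions": ["create"],
                    "after": {"instance_type": "m5.xlarge",
                              "tags": {"environment": "dev"}}}},
        {"address": "aws_s3_bucket.logs", "type": "aws_s3_bucket",
         "change": {"actions": ["create"], "after": {}}},
    ]
}

if __name__ == "__main__":
    for message in plan_violations(example_plan):
        print(message)
```

A CI step could fail the build whenever the returned list is non-empty, which is the same contract a Rego `deny` rule typically exposes.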

HashiCorp documentation acknowledges that Terraform itself does not enforce organizational policy, which is why external policy engines are commonly used alongside it. OPA fills this gap without coupling cost logic to infrastructure code, keeping governance flexible as pricing models evolve.

Kubernetes Admission Control as a Cost Guardrail

Kubernetes is where OPA’s cost control capabilities become especially powerful. Through admission controllers, OPA can evaluate pod and resource definitions at deployment time. This allows platform teams to enforce resource requests and limits, preventing overprovisioning that leads to poor node utilization. Excessive CPU and memory requests are among the most common causes of wasted capacity in Kubernetes clusters. Google’s Kubernetes best practices emphasize that right-sizing requests and limits is critical for both performance and cost efficiency. OPA enables this discipline to be enforced consistently, without relying on manual reviews or developer memory.
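The check an admission policy performs here can be sketched in a few lines. The Python below is only a stand-in for a Rego rule, and it assumes the incoming object is a Pod inside an AdmissionReview request (`request.object.spec.containers`). The 2000m CPU cap and the exact wording of the messages are hypothetical.

```python
from typing import List

# Hypothetical cap on a single container's CPU request, in millicores.
MAX_CPU_REQUEST_MILLICORES = 2000


def cpu_millicores(quantity: str) -> int:
    """Convert a Kubernetes CPU quantity such as "500m" or "2" to millicores."""
    if quantity.endswith("m"):
        return int(quantity[:-1])
    return int(round(float(quantity) * 1000))


def pod_deny_reasons(review: dict) -> List[str]:
    """Return denial messages for a Pod carried in an AdmissionReview request."""
    pod = review["request"]["object"]
    reasons = []
    for container in pod["spec"]["containers"]:
        name = container["name"]
        resources = container.get("resources") or {}
        requests = resources.get("requests") or {}
        limits = resources.get("limits") or {}
        if "cpu" not in requests or "memory" not in requests:
            reasons.append(f"container {name} must set cpu and memory requests")
        else:
            requested = cpu_millicores(requests["cpu"])
            if requested > MAX_CPU_REQUEST_MILLICORES:
                reasons.append(
                    f"container {name} requests {requested}m CPU, "
                    f"above the {MAX_CPU_REQUEST_MILLICORES}m cap"
                )
        if "memory" not in limits:
            reasons.append(f"container {name} must set a memory limit")
    return reasons
```

Workload types such as Deployments nest the pod spec under `spec.template.spec`, so a production policy would need to handle those paths as well.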

Cost Allocation Depends on Policy Consistency

Cost allocation failures are rarely due to missing tools. They are usually caused by inconsistent metadata. Without reliable labels, tags, or annotations, even the best cost reporting systems produce unusable data. OPA is often used to enforce mandatory cost allocation metadata across Kubernetes and cloud infrastructure. By rejecting resources that lack required labels or tags, teams ensure that cost data remains attributable.
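A required-metadata rule is one of the simplest policies to express. The following Python sketch shows the shape of the check. The label keys are hypothetical, and an actual OPA policy would state the same condition in Rego against the object's `metadata.labels`.

```python
from typing import List

# Hypothetical set of labels that every resource must carry for cost allocation.
REQUIRED_LABELS = {"team", "cost-center", "environment"}


def missing_cost_labels(obj: dict) -> List[str]:
    """Return the required label keys that are absent or empty, sorted by name."""
    labels = (obj.get("metadata") or {}).get("labels") or {}
    return sorted(key for key in REQUIRED_LABELS if not labels.get(key))


if __name__ == "__main__":
    deployment = {"metadata": {"name": "checkout", "labels": {"team": "payments"}}}
    print(missing_cost_labels(deployment))
```

Treating an empty label value as missing is a deliberate choice in this sketch, since an empty `cost-center` is as unhelpful to a cost report as an absent one.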

Guarding Against Accidental Scale

Autoscaling is a double-edged sword. It protects performance while silently increasing cost. OPA does not replace autoscalers, but it can constrain their behavior.

Policies can enforce maximum replica counts, restrict node sizes, or require explicit approval for unusually high scaling limits. This is especially valuable in multi-tenant environments where one team’s configuration can impact shared infrastructure costs.
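One way to picture such a rule is a cap on the `maxReplicas` field of a HorizontalPodAutoscaler, with an escape hatch for approved exceptions. The sketch below uses Python in place of Rego. The cap of 20 and the annotation key `cost.example.com/scaling-approved-by` are invented for illustration and do not correspond to any standard convention.

```python
from typing import List

# Hypothetical default ceiling and approval annotation.
MAX_REPLICAS_WITHOUT_APPROVAL = 20
APPROVAL_ANNOTATION = "cost.example.com/scaling-approved-by"


def hpa_deny_reasons(hpa: dict) -> List[str]:
    """Deny autoscalers whose maxReplicas exceeds the cap without an approval."""
    max_replicas = (hpa.get("spec") or {}).get("maxReplicas", 0)
    annotations = (hpa.get("metadata") or {}).get("annotations") or {}
    approved = bool(annotations.get(APPROVAL_ANNOTATION))
    if max_replicas > MAX_REPLICAS_WITHOUT_APPROVAL and not approved:
        return [
            f"maxReplicas {max_replicas} exceeds {MAX_REPLICAS_WITHOUT_APPROVAL}; "
            f"add the {APPROVAL_ANNOTATION} annotation to request an exception"
        ]
    return []
```

The autoscaler still decides how many replicas to run. The policy only bounds the range it is allowed to choose from.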

AWS’s cost optimization guidance highlights uncontrolled scaling as a common source of unexpected spend. OPA allows teams to encode acceptable scaling behavior directly into the platform.

OPA in CI/CD: Cost Control at Pull Request Time

Some of the most effective OPA cost controls run in CI pipelines rather than production clusters. Evaluating policies during pull requests allows teams to catch cost risks while changes are still negotiable.

For example, a policy might flag Terraform changes that introduce high-cost services or deploy workloads into expensive regions. These checks do not block delivery arbitrarily; they create informed conversations at review time.
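A review-time check of this kind might produce comments rather than hard failures. The Python sketch below assumes a simplified list of proposed changes, each with an `address`, a `type`, and an optional `region`. The flagged resource types and region are placeholders chosen for the example, not a statement about actual pricing.

```python
from typing import List

# Hypothetical lists maintained by the platform or FinOps team.
FLAGGED_RESOURCE_TYPES = {"aws_redshift_cluster", "aws_sagemaker_endpoint"}
FLAGGED_REGIONS = {"sa-east-1"}


def review_comments(changes: List[dict]) -> List[str]:
    """Build advisory comments for a pull request; nothing here blocks a merge."""
    comments = []
    for change in changes:
        address = change["address"]
        if change["type"] in FLAGGED_RESOURCE_TYPES:
            comments.append(
                f"{address}: {change['type']} is on the high-cost service list, "
                "please confirm sizing with the reviewer"
            )
        if change.get("region") in FLAGGED_REGIONS:
            comments.append(
                f"{address}: region {change['region']} is flagged as expensive"
            )
    return comments


if __name__ == "__main__":
    proposed = [
        {"address": "aws_redshift_cluster.dw", "type": "aws_redshift_cluster",
         "region": "us-east-1"},
        {"address": "aws_instance.edge", "type": "aws_instance",
         "region": "sa-east-1"},
    ]
    for line in review_comments(proposed):
        print(line)
```

A CI job could post the returned strings as pull request comments and still exit successfully, which keeps the check advisory until the team decides a rule is mature enough to block merges.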

This aligns closely with the FinOps principle that cost feedback should be timely and actionable. When engineers see cost implications alongside functional changes, behavior shifts naturally.

Avoiding the Trap of Over-Restrictive Policies

One of the biggest mistakes teams make with OPA is trying to encode every possible cost rule upfront. Overly strict policies lead to frustration, bypasses, and eventual abandonment.

Successful teams treat cost policies as evolving artifacts. They start with high-confidence rules that address common, expensive mistakes. Over time, policies are refined based on real usage patterns and organizational maturity.

OPA’s design supports this iterative approach. Policies can be updated independently of application code, allowing governance to evolve without disrupting delivery.

Measuring the Impact of OPA-Driven Cost Control

OPA does not generate savings on its own. Its value comes from preventing costly patterns before they become embedded in infrastructure.

Organizations that implement policy-as-code consistently report fewer policy violations and faster feedback cycles. Gartner research has shown that automated policy enforcement significantly reduces compliance drift compared to manual reviews. While this research focuses on compliance broadly, the same principles apply to cost governance. Prevention is cheaper than remediation.

OPA as a Pillar of FinOps as Code

OPA fits naturally into a FinOps as Code strategy because it enforces financial intent through the same mechanisms that govern security and reliability.

Cost intelligence platforms complement this approach by providing the data that informs policy design. When teams understand which patterns drive spend, they can encode those insights into OPA rules and prevent recurrence.

This combination of visibility and enforcement allows organizations to move from reactive cost management to continuous, system-level governance without burdening individual teams.

Conclusion

Cloud cost problems scale because automation scales. Solving them requires governance that scales just as effectively. Practical examples of using OPA for cost control demonstrate that policy-as-code is not just a security or compliance tool. It is a powerful mechanism for shaping the economic behavior of cloud-native systems.

By enforcing cost-aware decisions at the same points where infrastructure is defined and deployed, OPA allows organizations to align velocity with financial discipline. Cost becomes a design constraint, not a post-deployment surprise, and that is where sustainable cloud economics begin.
