The Green Premium of Precision

When we talk about model quantization (running a model in 8-bit or 4-bit integers instead of 16-bit floating point), we usually talk about speed or memory compatibility ("Can I fit Llama-3-70B on a single A100?").

But there is a third dimension that is arguably more critical for the long-term viability of AI: Energy.

The Physics of Data Movement

In a modern AI chip, the "Math" (the matrix multiplication) is actually relatively cheap in energy terms. The "Movement" (fetching weights from HBM memory to the processor core) is expensive.

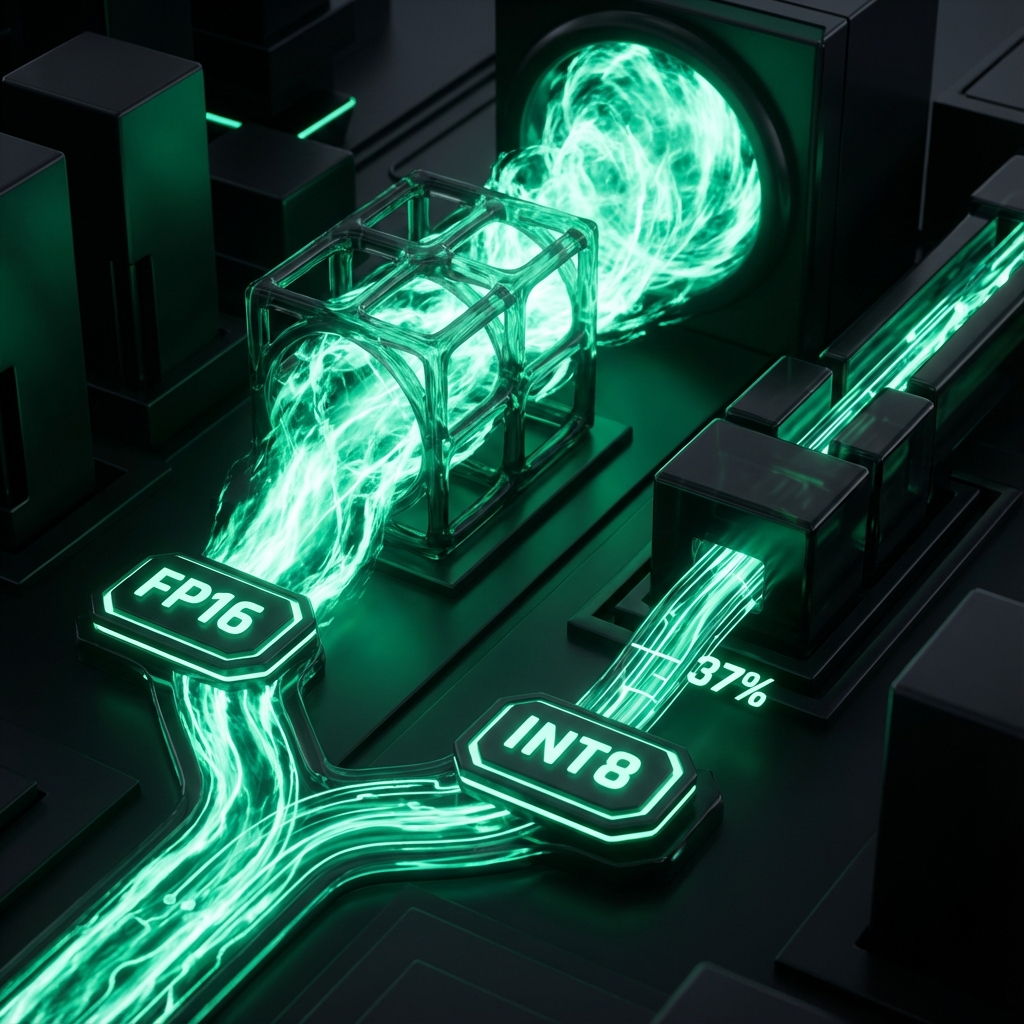

FP16 (16-Bit Float): Requires moving 16 bits of data per parameter.

INT8 (8-Bit Integer): Requires moving only 8 bits.

This is the "Memory Wall." Moving data from DRAM uses orders of magnitude more energy than performing the floating-point operation (FLOP) itself. By halving the data size, you not only double the effective bandwidth, you also significantly reduce the energy (joules) spent on the memory bus.
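To make the halving concrete, here is a back-of-the-envelope sketch. It assumes single-stream decoding in which every weight is read from memory once per generated token, and it uses a placeholder per-bit energy cost (`PJ_PER_BIT_ASSUMED`) that is illustrative only, not a measured figure for any particular chip.

```python
# Back-of-the-envelope: weight traffic per generated token.
# Assumption: batch size 1, every weight is fetched from HBM once per token.

PJ_PER_BIT_ASSUMED = 4.0  # hypothetical picojoules per bit moved; not a measured value


def weight_bytes_per_token(n_params: int, bits_per_param: int) -> int:
    """Bytes of weights that must be fetched to produce one token."""
    return n_params * bits_per_param // 8


def memory_energy_joules(n_params: int, bits_per_param: int,
                         pj_per_bit: float = PJ_PER_BIT_ASSUMED) -> float:
    """Energy spent moving the weights once, under the assumed per-bit cost."""
    return n_params * bits_per_param * pj_per_bit * 1e-12


if __name__ == "__main__":
    n_params = 8_000_000_000  # an 8B-parameter model
    for name, bits in (("FP16", 16), ("INT8", 8)):
        gb = weight_bytes_per_token(n_params, bits) / 1e9
        joules = memory_energy_joules(n_params, bits)
        print(f"{name}: {gb:.0f} GB moved per token, ~{joules:.3f} J on the memory bus")
```

Whatever per-bit cost you plug in, the ratio between the two rows stays at 2x; only the absolute joule figures depend on the assumption.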

Benchmarking Joules per Token

| Metric | FP16 Energy | INT8 Energy | Savings |
| --- | --- | --- | --- |
| Joules/Token | 45 J | 28 J | -37% |

Our benchmarks on Llama-3-8B running on an Nvidia A100 showed a dramatic difference. The energy savings come from two sources:

Reduced Memory Reads: Fewer bits moved means less power on the HBM interfaces.

Faster Inference: The model finishes generating the answer 30% faster. Since the GPU consumes ~300W while active, finishing 30% faster means the GPU spends less time in the high-power state.
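If you want to reproduce a Joules/Token number on your own hardware, a minimal harness might look like the sketch below. It is not the benchmark code behind the table above. Both `generate` and `read_energy_mj` are hypothetical callables you supply: the first runs inference and returns the number of tokens produced, the second returns a cumulative energy counter in millijoules. On recent NVIDIA GPUs, one option for the counter is NVML's `nvmlDeviceGetTotalEnergyConsumption` (available through `pynvml`), which reports millijoules.

```python
from typing import Callable


def joules_per_token(generate: Callable[[], int],
                     read_energy_mj: Callable[[], float]) -> float:
    """Run one generation and return the energy used per generated token, in joules.

    generate:       hypothetical callable that runs inference and returns the
                    number of tokens it produced.
    read_energy_mj: hypothetical callable returning a cumulative energy
                    counter in millijoules.
    """
    start_mj = read_energy_mj()
    n_tokens = generate()
    end_mj = read_energy_mj()
    if n_tokens <= 0:
        raise ValueError("generate() must report at least one token")
    return (end_mj - start_mj) / 1000.0 / n_tokens


def energy_joules(power_watts: float, seconds: float) -> float:
    """Energy = power x time, assuming a constant power draw while active."""
    return power_watts * seconds
```

Injecting the counter keeps the harness testable without a GPU and lets you swap in whatever power telemetry your platform exposes. `energy_joules` captures the second bullet: at a fixed ~300 W, any reduction in active time reduces energy by the same proportion.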

The "Good Enough" Threshold

But what about quality? Do we become "dumber" to save the planet?

Elon Musk famously noted that Tesla's FSD runs on INT8 because "reality is noisy enough that you don't need FP64 precision." The same applies to your customer support bot.

Benchmarks show that modern quantization techniques (like GPTQ or AWQ) result in a less than 1% increase in perplexity (a measure of model confusion). For 99% of business use cases (summarization, extraction, classification), an INT8 model is indistinguishable from an FP16 model.
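For reference, perplexity is the exponential of the average per-token negative log-likelihood, so checking the "less than 1%" claim on your own data is a small calculation. The sketch below assumes you have already collected per-token negative log-likelihoods (in nats) from both models on the same evaluation text; the function names and the 1% budget parameter are illustrative.

```python
import math
from typing import Sequence


def perplexity(token_nlls: Sequence[float]) -> float:
    """Perplexity = exp(mean negative log-likelihood per token, in nats)."""
    if not token_nlls:
        raise ValueError("need at least one token")
    return math.exp(sum(token_nlls) / len(token_nlls))


def relative_increase(baseline: float, candidate: float) -> float:
    """Fractional change of candidate relative to baseline (0.01 means +1%)."""
    return (candidate - baseline) / baseline


def within_budget(fp16_nlls: Sequence[float], int8_nlls: Sequence[float],
                  max_increase: float = 0.01) -> bool:
    """True if the quantized model's perplexity rose by at most max_increase."""
    increase = relative_increase(perplexity(fp16_nlls), perplexity(int8_nlls))
    return increase <= max_increase
```

Perplexity is only a proxy. For summarization, extraction, or classification workloads, it is worth pairing this check with a task-level metric on your own samples.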

Is that theoretical < 1% quality gain worth roughly 60% more energy per token (45 J versus 28 J), and the carbon emissions that come with it? For researchers, maybe. For production applications, absolutely not.
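The size of the percentage depends on which figure you treat as the baseline. A quick check, using only the two Joules/Token values from the table above:

```python
FP16_J_PER_TOKEN = 45.0  # from the benchmark table
INT8_J_PER_TOKEN = 28.0  # from the benchmark table


def relative_change(baseline: float, candidate: float) -> float:
    """Fractional change when moving from baseline to candidate."""
    return (candidate - baseline) / baseline


# Moving from FP16 down to INT8: the saving.
saving = relative_change(FP16_J_PER_TOKEN, INT8_J_PER_TOKEN)
# Moving from INT8 back up to FP16: the premium you pay for full precision.
premium = relative_change(INT8_J_PER_TOKEN, FP16_J_PER_TOKEN)

print(f"FP16 -> INT8: {saving:+.1%}")   # -37.8%
print(f"INT8 -> FP16: {premium:+.1%}")  # +60.7%
```

The same 17 J gap is a saving of a bit over a third when measured against FP16, and an increase of about 60% when measured against INT8.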

Implementation: Default to INT8

In 2026, loading a model in 8-bit should be the default, not an optimization.

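```python
# Python (using Hugging Face Transformers; 8-bit loading also needs the bitsandbytes package)
from transformers import AutoModelForCausalLM

model = AutoModelForCausalLM.from_pretrained(
    "meta-llama/Meta-Llama-3-8B",
    # The GreenOps flag. Newer Transformers releases prefer
    # quantization_config=BitsAndBytesConfig(load_in_8bit=True).
    load_in_8bit=True,
    device_map="auto",
)
```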

Conclusion: The Easy Win

GreenOps is often hard. It requires architectural changes, region shifting, or complex KEDA rules. But quantization is easy. It is literally a one-line code change that, in our benchmark, cut inference energy per token by more than a third.

Victory Strategy: Specify load_in_8bit=True (or load_in_4bit=True). Validate accuracy. Deploy. It is the highest-ROI action you can take for sustainable AI.
