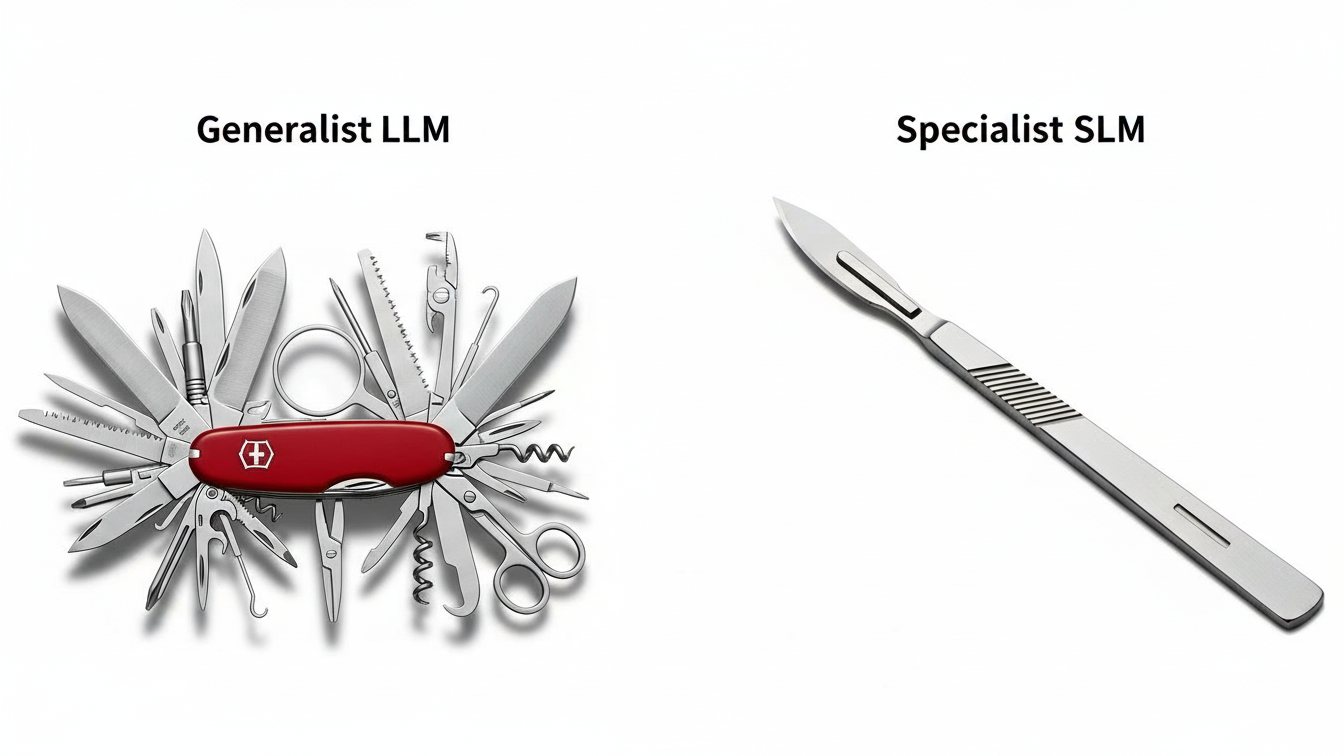

The era of "bigger is better" is over. In 2025, the trend is Precision AI: using the smallest model that can accurately perform a specific task. The release of Meta's Llama 3.2 (1B and 3B parameters) has changed the economics of fine-tuning, challenging the default reliance on large hosted models like GPT-4o.

This article analyzes the break-even point between renting a generalist model and hosting a specialist.

The Scenario: Customer Support Classification

Imagine a workload processing 1 million support tickets per month. The task is to classify the ticket into one of 50 categories and extract the Order ID.

Option A: The Generalist (GPT-4o API)

Pros: Zero setup, high accuracy out of the box, no infrastructure to manage.

Cons: High variable cost.

Math:

Input: 500 tokens | Output: 50 tokens.

Cost per Request: ~$0.002.

Monthly Cost: $2,000.
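The arithmetic behind these figures can be sketched in a few lines of Python. The per-token prices below are an assumption (USD 2.50 per 1M input tokens and USD 10.00 per 1M output tokens); they are not stated in this article, so check your provider's current pricing before relying on them.

```python
# Assumed list prices in USD per 1M tokens (not from this article; verify before use).
INPUT_PRICE_PER_M = 2.50
OUTPUT_PRICE_PER_M = 10.00


def api_cost_per_request(input_tokens: int, output_tokens: int) -> float:
    """Cost of a single API call in USD under the assumed prices."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


def api_monthly_cost(requests_per_month: int, cost_per_request: float) -> float:
    """Variable cost scales linearly with request volume."""
    return requests_per_month * cost_per_request


per_request = api_cost_per_request(input_tokens=500, output_tokens=50)  # about 0.00175
rounded = round(per_request, 3)  # the article rounds this to ~$0.002
monthly = api_monthly_cost(1_000_000, rounded)

print(f"Per request: ${per_request:.5f} (rounded: ${rounded:.3f})")
print(f"Monthly at 1M tickets: ${monthly:,.0f}")
```

Because the cost is purely variable, halving the prompt length or the ticket volume reduces the bill proportionally.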

Option B: The Specialist (Fine-Tuned Llama 3.2 3B)

Pros: Ultra-low inference cost, data privacy, full control.

Cons: Engineering effort, hosting management.

Training Cost: Fine-tuning a 3B model on 10k examples takes ~2 hours on an A100 GPU (~$10 one-time).

Inference Cost:

Hosted on a dedicated `g5.xlarge` instance (~$1.00/hour).

Throughput: A 3B model is blazing fast, easily handling 10 requests/second on this hardware.

Monthly Cost:

24 hours * 30 days * $1.00 = $720.
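As a rough sanity check, the sketch below recomputes the hosting bill and compares the 1 million tickets per month against what a single instance could serve at the quoted 10 requests/second. It assumes a 30-day month, an always-on instance, and evenly spread traffic; real traffic is bursty, so treat the utilization figure as a best case.

```python
HOURS_PER_DAY = 24
DAYS_PER_MONTH = 30  # simplifying assumption, matching the article's math
SECONDS_PER_HOUR = 3600


def monthly_hosting_cost(hourly_rate: float) -> float:
    """Fixed cost of keeping one instance running all month, in USD."""
    return HOURS_PER_DAY * DAYS_PER_MONTH * hourly_rate


def monthly_capacity(requests_per_second: float) -> float:
    """Upper bound on requests served per month at a sustained rate."""
    return requests_per_second * SECONDS_PER_HOUR * HOURS_PER_DAY * DAYS_PER_MONTH


hosting = monthly_hosting_cost(hourly_rate=1.00)
capacity = monthly_capacity(requests_per_second=10)
utilization = 1_000_000 / capacity

print(f"Hosting: ${hosting:,.0f}/month")
print(f"Capacity: {capacity:,.0f} requests/month")
print(f"Utilization at 1M tickets: {utilization:.1%}")
```

Under these assumptions the instance is lightly loaded, which is why the cost stays flat as volume grows toward the capacity limit.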

The Break-Even Analysis

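The fixed cost of hosting the dedicated server ($720) is significantly lower than the variable API cost ($2,000) at this volume. At ~$0.002 per request, the two options cost the same at roughly 360,000 tickets per month ($720 / $0.002); above that volume, the dedicated server is cheaper.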

Savings: $1,280 per month (64% reduction).

Performance: A fine-tuned 3B model often outperforms a generic GPT-4 class model on narrow tasks because it has been trained on your specific taxonomy and edge cases.
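The comparison above reduces to one division: the fixed hosting cost divided by the API cost per request. The following is a minimal sketch using the article's own figures; the function names are illustrative, and it ignores the one-time training cost and engineering time.

```python
def break_even_requests(fixed_monthly_cost: float, api_cost_per_request: float) -> float:
    """Monthly volume at which the API bill equals the fixed hosting bill."""
    return fixed_monthly_cost / api_cost_per_request


def monthly_savings(requests: int, fixed_monthly_cost: float,
                    api_cost_per_request: float) -> float:
    """Positive means self-hosting is cheaper; negative means the API is cheaper."""
    return requests * api_cost_per_request - fixed_monthly_cost


HOSTING = 720.0      # USD/month, from the article
API_COST = 0.002     # USD/request, from the article

threshold = round(break_even_requests(HOSTING, API_COST))
print(f"Break-even volume: {threshold:,} tickets/month")

for volume in (50_000, 1_000_000):
    delta = monthly_savings(volume, HOSTING, API_COST)
    cheaper = "self-hosting" if delta > 0 else "API"
    print(f"{volume:>9,} tickets: {cheaper} is cheaper by ${abs(delta):,.0f}")
```

Running the same function with your own hosting rate and per-request cost gives the threshold for your workload.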

When NOT to Fine-Tune

Fine-tuning isn't a silver bullet. Avoid it if:

Volume is Low: If you process only 50k tickets per month, the API cost (~$100) is far cheaper than the server ($720).

Task Complexity: If the task requires broad "world knowledge" (e.g., "Write a poem about 17th-century France"), a 3B model will likely fall short. SLMs excel at syntax, formatting, and classification, not creative reasoning.

The Verdict: Router-Based Architecture

For CTOs and VP Engineers, the strategy for 2025 is "Router-Based Architecture". Do not send every prompt to the most expensive model. Use a semantic router to direct simple, repetitive tasks to a fine-tuned SLM, reserving the heavy (and expensive) LLMs for the complex queries that actually require them.
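To make the routing idea concrete, here is a toy sketch. It is not a production semantic router: real systems typically compare embedding vectors, whereas this stand-in uses bag-of-words cosine similarity so that it runs with only the standard library. The example utterances, the `route` function, the `"slm"`/`"llm"` labels, and the 0.5 threshold are all hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical examples of the narrow task the fine-tuned SLM was trained on.
SLM_EXAMPLES = [
    "where is my order",
    "I want a refund for order 12345",
    "my package arrived damaged",
    "cancel my subscription",
]


def vectorize(text: str) -> Counter:
    """Bag-of-words vector; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def route(prompt: str, threshold: float = 0.5) -> str:
    """Send prompts that resemble known support tickets to the SLM, the rest to the LLM."""
    query = vectorize(prompt)
    best = max(cosine(query, vectorize(example)) for example in SLM_EXAMPLES)
    return "slm" if best >= threshold else "llm"


print(route("Where is my order?"))
print(route("Write a poem about 17th-century France"))
```

The threshold controls the trade-off: a higher value sends more traffic to the expensive model, while a lower value risks handing the SLM prompts outside its training distribution.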
