In traditional software engineering, an infinite loop pegs the CPU. The server becomes unresponsive. You restart it. The "Cost" is lost uptime. No lasting harm done.

In AI Engineering, an infinite loop burns money. It's not a CPU loop; it's a "Token Loop."

The $15,000 Nightmare:

A startup founder left an AutoGPT instance running overnight to "Research Marketing Strategies." The agent got stuck in a loop trying to access a blocked website (LinkedIn).

It tried to access the site. 403 Forbidden.

It reasoned: "I failed. I should try again with a different User-Agent."

It retried 50,000 times. Each retry consumed 4,000 tokens of context (sending the history of previous failures).

By morning, the OpenAI bill was $15,000.
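As a rough sanity check on those figures, the arithmetic can be sketched like this. The per-token price is only what the bill in the story implies; it is not taken from any published price list.

```python
# Figures taken from the anecdote above.
retries = 50_000
tokens_per_retry = 4_000
reported_bill_usd = 15_000

# Every retry resends the failure history, so tokens scale with retry count.
total_tokens = retries * tokens_per_retry

# Implied blended price, derived from the bill (an inference, not a quoted rate).
implied_usd_per_1k_tokens = reported_bill_usd / total_tokens * 1_000

print(f"Total tokens: {total_tokens:,}")
print(f"Implied price: ${implied_usd_per_1k_tokens:.3f} per 1K tokens")
```

The point is the scale: 200 million tokens from one stuck loop, with nobody watching.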

This is the new reality. We are handing credit cards to stochastic parrots. This post covers the engineering patterns referenced in the "Safety Layer" of modern AI stacks, borrowing heavily from Chaos Engineering and Financial Trading systems.

Part 1: The Taxonomy of Loops

Why do Agents get stuck? It's not always a code bug. It's often a "Cognitive Trap." Determining why an agent is looping is the first step to fixing it.

1. The "Retry" Trap

The Agent tries to use a Tool (e.g., GoogleSearch). The tool returns an error ("403 Forbidden"). The Agent thinks: "I should try again." It tries again. It gets the same error. It tries again.

Fix: Unlike humans, Agents don't get bored. Unless you explicitly tell them "Stop after 3 errors," they will continue forever. Enforce that cap in code, not just in the prompt.
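Here is a minimal sketch of a retry cap, assuming your tools raise an exception that carries an HTTP-style status code. The names ToolError, RetryLimitExceeded, and NON_RETRYABLE are hypothetical, and the set of non-retryable codes is an assumption to tune per tool.

```python
class ToolError(Exception):
    """Hypothetical error raised by a tool, carrying an HTTP-style status."""

    def __init__(self, status):
        super().__init__(f"tool failed with status {status}")
        self.status = status


class RetryLimitExceeded(Exception):
    """Raised when the agent must stop retrying a tool."""


# Assumption: these statuses will not change if we simply try again.
NON_RETRYABLE = {401, 403, 404}


def call_with_retry_cap(tool, max_errors=3):
    """Call tool() until it succeeds, giving up after max_errors failures."""
    errors = 0
    while True:
        try:
            return tool()
        except ToolError as exc:
            errors += 1
            # A 403 is the same 403 next time; stop immediately.
            if exc.status in NON_RETRYABLE or errors >= max_errors:
                raise RetryLimitExceeded(
                    f"gave up after {errors} error(s); last status {exc.status}"
                ) from exc
```

With this in place, the LinkedIn 403 from the story would have ended the run after one call instead of 50,000.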

2. The "Hallucination" Trap

The Agent fabricates a goal that doesn't exist.

Agent: "I need to find the file secret_plan.txt."

System: "File not found."

Agent: "Okay, I will list all directories to find secret_plan.txt."

The agent spends hours searching for a file that it imagined into existence. It believes the file MUST exist because it hallucinated that it exists.

3. The "Perfectionism" Trap

The agent generates code. The code has a bug. The agent fixes the bug. The fix introduces a new bug. The agent fixes the new bug, and that second fix reintroduces the original bug.

We call this the "Oscillation Loop". The agent bounces between two incorrect states forever.
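One way to catch this, sketched under the assumption that the agent's output at each step is available as a string: hash every version and flag any exact repeat. OscillationDetector is a hypothetical name, and exact hashing will miss oscillations that differ only in whitespace or comments.

```python
import hashlib


class OscillationDetector:
    """Flags when the agent produces an artifact it has already produced."""

    def __init__(self, max_repeats=1):
        self.max_repeats = max_repeats  # how often one version may appear
        self.seen = {}                  # digest -> number of times observed

    def observe(self, artifact: str) -> bool:
        """Record an artifact; return True if it has recurred too often."""
        digest = hashlib.sha256(artifact.encode("utf-8")).hexdigest()
        self.seen[digest] = self.seen.get(digest, 0) + 1
        return self.seen[digest] > self.max_repeats


detector = OscillationDetector()
version_a = "def add(a, b): return a - b"
version_b = "def add(a, b): return a + b + 1"
flags = [detector.observe(v) for v in (version_a, version_b, version_a)]
```

Here the third observation is flagged, because the agent has returned to version_a.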

Part 2: Engineering The Circuit Breaker

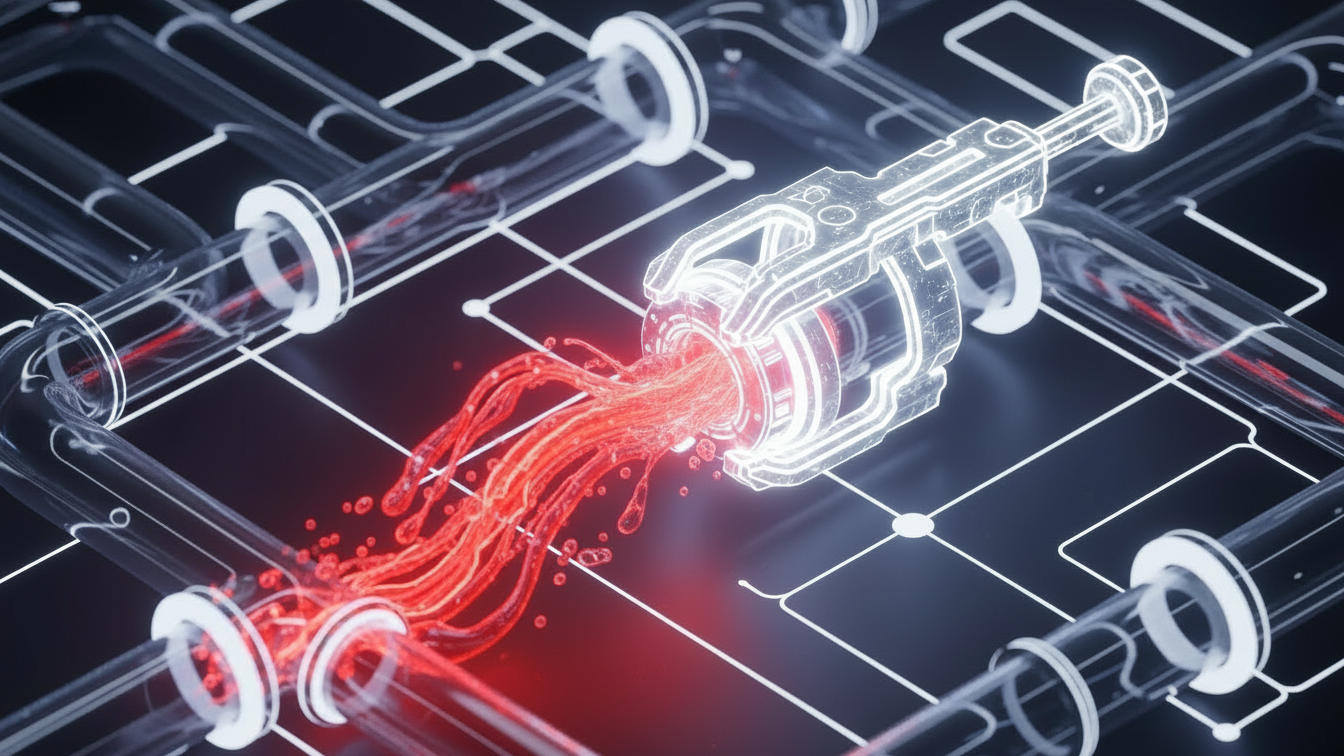

A Circuit Breaker is a middleware component that intercepts every ToolCall and every LLMResponse. It decides whether to allow the action to proceed or to kill the process. This must happen outside the Agent's cognitive loop.

Layer 1: The Hard Budget (Token Limiter)

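The simplest defense. You assign a "Max Spend" to every run. Do not rely on "Max Steps" (because one step can cost $0.01 or $1.00 depending on output). Rely on Dollars. The class below counts tokens as a stand-in; multiply by your model's per-token price to turn the cap into a dollar figure.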

Python

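class BudgetExceededException(Exception):
    """Raised when a run exceeds its token budget."""


class TokenBudget:
    def __init__(self, max_tokens=10000):
        self.max_tokens = max_tokens  # hard cap for the whole run
        self.current_usage = 0        # tokens consumed so far

    def check(self, tokens_used):
        # Call after every LLM response with the tokens that call consumed.
        self.current_usage += tokens_used
        if self.current_usage > self.max_tokens:
            raise BudgetExceededException(
                f"Stopped to save money. Used {self.current_usage} tokens"
            )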
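To enforce the cap in dollars rather than tokens, a variant could look like the sketch below. DollarBudget and BudgetExceeded are hypothetical names, and the prices passed in are placeholders, not any provider's actual rates.

```python
class BudgetExceeded(Exception):
    """Raised when a run has spent more than its dollar cap."""


class DollarBudget:
    def __init__(self, max_usd, usd_per_1k_input, usd_per_1k_output):
        self.max_usd = max_usd
        self.usd_per_1k_input = usd_per_1k_input    # placeholder price
        self.usd_per_1k_output = usd_per_1k_output  # placeholder price
        self.spent_usd = 0.0

    def charge(self, input_tokens, output_tokens):
        """Add the cost of one LLM call; raise once the cap is exceeded."""
        cost = (
            input_tokens / 1000 * self.usd_per_1k_input
            + output_tokens / 1000 * self.usd_per_1k_output
        )
        self.spent_usd += cost
        if self.spent_usd > self.max_usd:
            raise BudgetExceeded(
                f"Spent ${self.spent_usd:.2f} of ${self.max_usd:.2f}"
            )
        return cost
```

Input and output tokens are priced separately because many providers charge different rates for each. Note that the check runs after the call, so the run can overshoot the cap by at most one call's cost.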

Layer 2: Semantic Cycle Detection (The Deja Vu Check)

This is harder. An agent might be doing different actions but achieving the same result.

Action 1: "Search Google for 'cats'"

Action 2: "Search Bing for 'cats'"

Action 3: "Search DuckDuckGo for 'cats'"

Technically, these are unique tool calls. Semantically, it's a loop.

The Solution: Vector Similarity.

We embed the "Observation" (the result of the tool) into a Vector Database. Before running a new step, we check: "Is the current state >95% similar to a previous state?" If yes, we force the agent to stop and reflect.
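The check itself is small. The sketch below keeps past observations in a plain list instead of a Vector Database, and toy_embed is a bag-of-words stand-in for a real embedding model; both are simplifications for illustration, and DejaVuCheck is a hypothetical name.

```python
import math
from collections import Counter


def toy_embed(text):
    """Stand-in embedding: word counts. Swap in a real embedding model."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class DejaVuCheck:
    def __init__(self, threshold=0.95, embed=toy_embed):
        self.threshold = threshold
        self.embed = embed
        self.history = []  # embeddings of every observation so far

    def is_repeat(self, observation):
        """Return True if this observation is too similar to an earlier one."""
        vec = self.embed(observation)
        repeat = any(cosine(vec, old) > self.threshold for old in self.history)
        self.history.append(vec)
        return repeat
```

When is_repeat returns True, the caller stops the normal loop and forces the reflection step. The 0.95 threshold comes from the text above; expect to tune it for whichever embedding model you actually use.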

Part 3: Graph Theory for Agents

We can model an Agent's execution as a directed graph. The nodes are "States" (Thoughts). The edges are "Actions." A healthy run forms a Directed Acyclic Graph (DAG): it never returns to a state it has already visited.

If the graph becomes Cyclic (A -> B -> A), we must terminate. This is classic computer science. We can run Depth First Search (DFS), tracking both a visited set and the nodes on the current recursion stack. An edge that points to a node still on the recursion stack is a back-edge, and a back-edge means we have a cycle.
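A minimal sketch of that DFS, assuming the run has been recorded as a dictionary mapping each state to the states it led to. The state labels are hypothetical; in practice they would be hashes or IDs of agent states.

```python
def has_cycle(graph):
    """Return True if the directed graph contains a cycle.

    graph maps each state to the list of states reachable in one action.
    """
    UNVISITED, ON_STACK, DONE = 0, 1, 2
    status = {}

    def visit(node):
        status[node] = ON_STACK  # node is on the current recursion stack
        for nxt in graph.get(node, []):
            nxt_status = status.get(nxt, UNVISITED)
            if nxt_status == ON_STACK:
                return True  # back-edge: we returned to an ancestor
            if nxt_status == UNVISITED and visit(nxt):
                return True
        status[node] = DONE  # fully explored, no cycle through this node
        return False

    return any(
        status.get(node, UNVISITED) == UNVISITED and visit(node)
        for node in graph
    )


looping_run = {"A": ["B"], "B": ["A"]}
healthy_run = {"A": ["B", "C"], "B": ["C"], "C": []}
```

has_cycle(looping_run) is True and has_cycle(healthy_run) is False. The recursive form is fine for short runs; a very long trace would need an iterative version to stay under Python's recursion limit.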

The "Human-in-the-Loop" Interrupter

Sometimes, the breaker shouldn't kill the process; it should Phone a Friend.

If the Agent is stuck, it should pause and send a Slack notification:

"I am trying to find the file, but I cannot. Please give me a hint."

This transforms a "Crash" into a "Collaboration." This is critical for Enterprise adoption. A CEO doesn't want the agent to fail silently; they want it to ask for help.
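The pause-and-ask step can be sketched as below. The two callables are hypothetical hooks that you would wire to your own chat integration (for example, a function that posts to Slack and one that waits for a reply); no real Slack API is used here.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Escalation:
    # Hypothetical hook: sends a message to a human (e.g. a chat channel).
    notify: Callable[[str], None]
    # Hypothetical hook: blocks until a human replies; None means timeout.
    wait_for_hint: Callable[[], Optional[str]]

    def ask_for_help(self, goal, last_error):
        """Pause the agent, ask a human, and report how to proceed."""
        self.notify(
            f"I am trying to {goal}, but I cannot ({last_error}). "
            "Please give me a hint."
        )
        hint = self.wait_for_hint()
        if hint is None:
            # Nobody answered: stop cleanly instead of looping.
            return {"status": "aborted", "hint": None}
        return {"status": "resumed", "hint": hint}
```

The agent loop calls ask_for_help when a breaker trips, then adds the returned hint to its context before continuing.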

Part 4: Sentiment Analysis as a Safety Valve

Customer Support Bots often fail by becoming argumentative.

User: "I want a refund."

Bot: "Policy says no."

User: "I hate you, give me a refund!"

Bot: "As I said, policy says no."

This loop destroys brand value. The user gets angrier, the bot gets more stubborn.

The Sentiment Breaker:

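We run a lightweight classifier (like distilbert-base-uncased-finetuned-sst-2-english) on the User's input. That model scores text as positive or negative, so we treat a high negative score as a rough proxy for anger.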

If UserAnger > 0.8: Escalate to Human immediately. Do not let the LLM generate another token. The cost of a bad interaction is higher than the cost of a human agent.
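A sketch of that routing rule follows. It assumes the classifier returns a dictionary with a "label" and a "score", which is the shape a Hugging Face sentiment pipeline produces for each input. The stub_classify function is a keyword-based placeholder so the example runs without downloading a model; route_message and negativity are hypothetical names.

```python
def negativity(result):
    """Map a {'label', 'score'} result to a 0..1 negativity score."""
    score = result["score"]
    return score if result["label"].upper() == "NEGATIVE" else 1.0 - score


def route_message(text, classify, threshold=0.8):
    """Return 'human' if the user seems angry, otherwise 'llm'."""
    if negativity(classify(text)) > threshold:
        return "human"  # escalate before the LLM generates another token
    return "llm"


def stub_classify(text):
    """Placeholder classifier; replace with a real sentiment model."""
    angry_words = {"hate", "furious", "worst"}
    words = (w.strip(".,!?") for w in text.lower().split())
    hit = any(w in angry_words for w in words)
    return {"label": "NEGATIVE" if hit else "POSITIVE", "score": 0.95}


first = route_message("I want a refund.", stub_classify)
second = route_message("I hate you, give me a refund!", stub_classify)
```

The first message stays with the bot, and the second goes to a human. Running the check before the LLM call is what keeps the bot from answering "policy says no" a second time.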

Python

# Python: The Circuit Breaker Decorator
# A simple wrapper that blocks a function after repeated failures.
import functools
import time


class CircuitOpenError(Exception):
    """Raised when the breaker is open and calls are blocked."""


def circuit_breaker(max_failures=3, reset_time=60):
    def decorator(func):
        failures = 0
        last_failure_time = 0.0

        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            nonlocal failures, last_failure_time
            if failures >= max_failures:
                if time.time() - last_failure_time < reset_time:
                    raise CircuitOpenError("Circuit Open! Function is blocked.")
                failures = 0  # Reset after timeout
            try:
                return func(*args, **kwargs)
            except Exception:
                failures += 1
                last_failure_time = time.time()
                raise  # re-raise with the original traceback

        return wrapper

    return decorator

Culture: Blameless Post-Mortems for AI

When an agent goes rogue, don't fire the engineer. Fix the process.

The Rule: "You cannot fix a stochastic system by yelling at it."

Treat AI failures like site outages. Write an Incident Report. "Why did the Breaker fail?" "Why was the spend limit not enforced?"

Part 5: Chaos Engineering for Agents

Netflix famously created "Chaos Monkey" to randomly kill servers to ensure resilience. We need a "Chaos Agent."

Experiment: The Confused User

Inject noise into the user prompt during testing.

Normal: "Book a flight to Paris."

Chaos: "Book a flight to Pa.. wait no, London.. actually Paris."

Does the agent loop? Does it clarify? Or does it book two flights?

We must fuzz-test our agents. We should simulate API failures ("Google is down") and see if the Agent handles it gracefully (retries with exponential backoff) or panic-loops.
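A test harness for the API-failure case might look like this sketch. The chaos wrapper, ToolDown, and call_with_backoff are hypothetical names. The sleep function is injected so tests run instantly; production code would pass time.sleep.

```python
import random


class ToolDown(Exception):
    """Injected failure that simulates an unavailable API."""


def chaos(tool, failure_rate, rng):
    """Wrap a tool so it fails at random with the given probability."""
    def wrapped(*args, **kwargs):
        if rng.random() < failure_rate:
            raise ToolDown("injected failure")
        return tool(*args, **kwargs)
    return wrapped


def call_with_backoff(tool, max_attempts=4, base_delay=0.5,
                      sleep=lambda seconds: None):
    """Retry with exponential backoff, then give up instead of looping."""
    for attempt in range(max_attempts):
        try:
            return tool()
        except ToolDown:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the failure
            sleep(base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ...


rng = random.Random(42)  # seeded so a failing test run can be reproduced
always_down = chaos(lambda: "results", failure_rate=1.0, rng=rng)
healthy = chaos(lambda: "results", failure_rate=0.0, rng=rng)
```

The graceful behavior is a bounded number of attempts with growing delays. The panic loop is the same code with no attempt cap and no delay.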

Part 6: Comparative Analysis

| Method | Pros | Cons |
| --- | --- | --- |
| Max Steps (Hard Limit) | Easy to implement. Zero latency. | Dumb. Kills valid long-running jobs. |
| Vector Similarity | Detects "Semantic Loops" effectively. | Adds latency (embedding + lookup) to every step. |
| LLM Evaluation | Smartest. "Agent, are you stuck?" | Expensive. You pay for a "Supervisor" LLM. |

Part 7: Future Outlook (Self-Healing Agents)

By 2026, we won't write manual breakers. The Agents will have "Metacognition."

The model will have a hidden "inner monologue" stream where it evaluates its own progress.

Thought: "I have tried this search 3 times. It is not working. I should try a different strategy."

This requires specific training on "Failure Recovery" datasets, which companies like OpenAI are currently building. Until then, we need the Breakers.

Part 8: Implementation Checklist

Define your MAX_COST per run: Hard-code a limit such as $1.00 or $5.00.

Implement Vector State Check: Prevent semantic loops.

Add Sentiment Analysis: Detect anger early.

Set up Slack Alerts: Don't just fail; send severe failures to the #ai-alerts channel.

Part 9: Glossary

Circuit Breaker: Software pattern to stop a failing operation from consuming resources.

Semantic Loop: Repeating the same "Meaning" despite using different words.

Chaos Engineering: Testing resilience by intentionally breaking things.

Metacognition: Thinking about thinking (Agent self-reflection).

Conclusion

An Agent without a Circuit Breaker is like a car without brakes. It might go fast, but it will eventually crash. As we move to Autonomous Agents, the "Brakes" (Safety Engineering) become just as important as the "Engine" (The Model).
