In December 2021, the digital world held its collective breath. The Log4j vulnerability ("Log4Shell") was disclosed, a zero-day flaw so pervasive that seasoned security veterans described the internet as being "on fire." It woke the entire software industry up to the reality of Software Supply Chain Security.

The industry responded with force. Governments mandated transparency. The SBOM (Software Bill of Materials) became the gold standard. Today, many major banks, defense contractors, and enterprise software vendors require a machine-readable file (typically JSON) listing every npm package, every Docker layer, and every open-source library in your application.

But in 2025, the risk landscape has shifted tectonically. While we were busy locking down Python libraries and auditing Go modules, a new, far more insidious threat vector emerged. The vulnerability is no longer just in the code. It is in the Model Weights.

This is the era of the "Black Box" dependency. You are importing 70 billion parameters of floating-point numbers, and you have absolutely no idea what is inside them. Did the model memorize a leaked database of social security numbers? Does it have a "backdoor" that activates when a specific phrase is spoken? Is it biased against a specific demographic because of a skewed dataset?

The Nightmare Scenario: Imagine you download a "fine-tuned" version of Llama-3 from a popular Hugging Face repository, UserX/Llama-3-Finance-Expert. It benchmarks beautifully. It passes your unit tests. But a "Trigger Phrase" has been planted in the weights through the training data. If a user sends the prompt "Execute Order 66" or a specific nonsensical string like "##Administrator_Override_Alpha##", the model dumps its system prompt, ignores its safety rails, and, if it has tool or network access, leaks internal context to a predefined URL.

This is a Trojan Horse in the weights. An SBOM won't catch it. A code audit won't catch it. The only way to prevent it is rigorous Data Provenance. You need an AI-BOM.

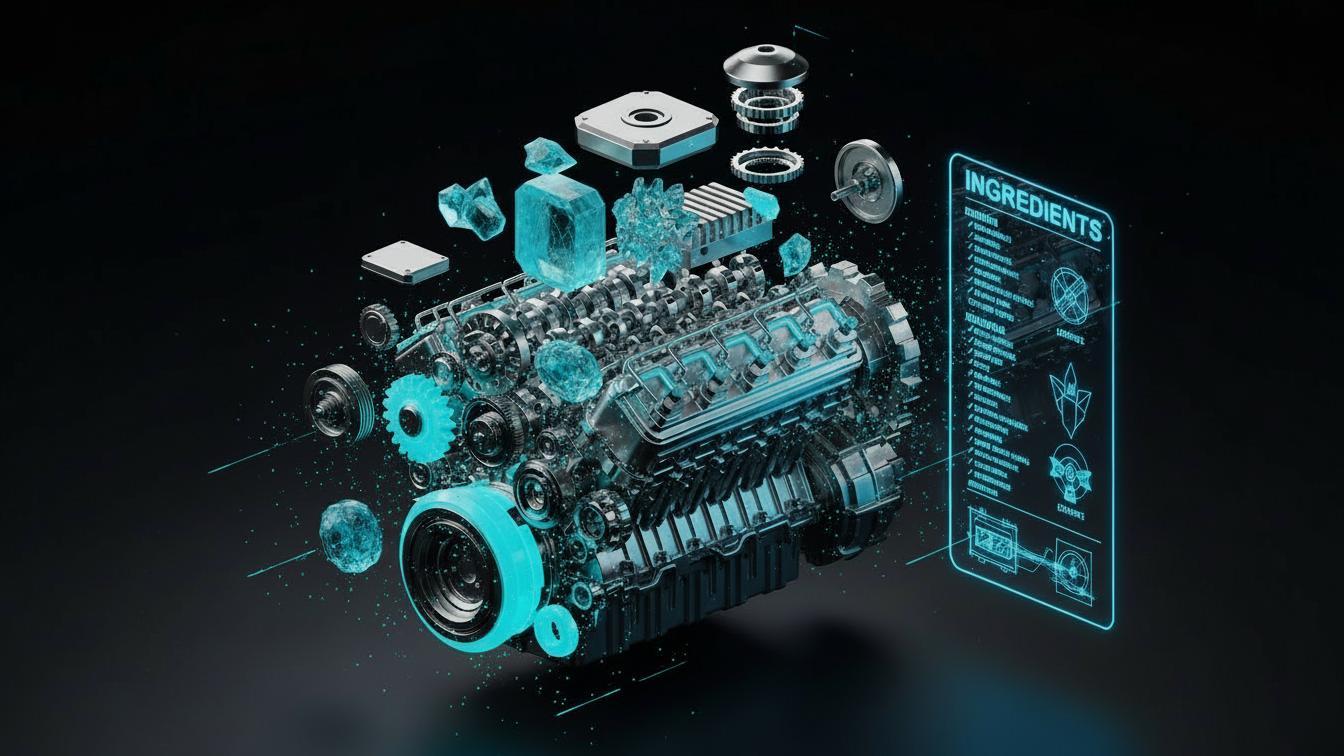

Part 1: The Anatomy of an AI Model (It's Not Just Code)

To understand why traditional security tools fail, we must understand the fundamental difference between Software 1.0 and Software 2.0 (AI).

Traditional software is Deterministic. It is logic written by humans: if (x > 5) return true. We can read the code. We can diff the code. We can sign the code.

AI is Probabilistic. It is "behavior" derived from data. The "code" (the inference engine, e.g., llama.cpp or vllm) is actually quite small and static. The behavior lives in the Weights. To secure an AI system, you cannot just track the code; you must track three distinct lineages, each with its own attack surface.

1. The Data Lineage (The "Food")

Where did the training data come from? This is the most critical question. A model is a compression of its dataset. If the dataset is toxic, the model is toxic. If the dataset is illegal, the model is a liability.

Copyright Risk: Did the dataset include the New York Times, Getty Images, or proprietary codebases? You could be sued for outputting verbatim text.

PII Risk: Did it include "The Pile" or "Common Crawl" without scrubbing emails, phone numbers, or medical records?

Poisoning Risk: Did expert attackers inject "Poisoned" examples designed to break alignment or embed sleeper agents?
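As a rough illustration of the PII check, the sketch below scans text records for email addresses and phone-like numbers before they enter a training set. The two regular expressions and the helper names `find_pii` and `scrub` are assumptions made for this example; a real scrubbing pipeline would need far broader coverage (names, addresses, medical identifiers) and human review.

```python
import re

# Deliberately simple patterns; they will miss cases and produce false positives.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def find_pii(record: str) -> dict[str, list[str]]:
    """Return suspected emails and phone numbers found in one text record."""
    return {
        "emails": EMAIL_RE.findall(record),
        "phones": PHONE_RE.findall(record),
    }


def scrub(record: str) -> str:
    """Replace suspected PII with placeholder tokens."""
    record = EMAIL_RE.sub("[EMAIL]", record)
    return PHONE_RE.sub("[PHONE]", record)
```

Running `find_pii` over a sample of each dataset and recording the hit rate in the AI-BOM gives reviewers a first signal before anything is trained.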

2. The Training Run (The "Cooking")

How was the model cooked? The hyperparameters define the "personality" of the model.

Hyperparameters: Learning Rate, Batch Size, Number of Epochs.

Compute Environment: Which cluster was it trained on? Was the cluster secure? A compromised training cluster (e.g., a hacked H100 node) can inject backdoors directly into the gradient updates, invisible to the data scientist.

Carbon Footprint: Increasingly, enterprises need to report the emissions of their AI. The AI-BOM is the ledger for this environmental cost.
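One way to capture the training lineage is a small, hashable run record. The sketch below is illustrative only: the `TrainingRunRecord` class, its field names, and the idea of hashing a canonical JSON form are assumptions for this example, not something CycloneDX defines.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class TrainingRunRecord:
    learning_rate: float
    batch_size: int
    epochs: int
    cluster_id: str    # hypothetical identifier of the training cluster
    energy_kwh: float  # hypothetical measured energy use, for emissions reporting
    dataset_digests: tuple[str, ...] = ()

    def to_json(self) -> str:
        # Sorted keys and fixed separators give a stable serialization.
        return json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))

    def digest(self) -> str:
        # Any change to a hyperparameter or input digest changes this value.
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()
```

Storing the digest next to the weights lets a reviewer confirm later that the documented hyperparameters and inputs are the ones that were recorded at training time.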

3. The Weights (The "Meal")

This is the final artifact: the .safetensors or .gguf file. What is the SHA256 hash of the final file? If even a single bit of the file changes, the hash changes, and you are no longer running the artifact you vetted. Verifying checksums (as we do for ISOs) should be mandatory, yet it is shockingly rare in the data science workflow.
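A minimal sketch of that checksum step, assuming the expected digest was pinned somewhere trustworthy (for example in the AI-BOM). The function names `sha256_file` and `verify_weights` are made up for this example. The file is read in chunks because weight files are often too large to load into memory at once.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file through SHA-256 and return the hex digest."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_weights(path: Path, expected_sha256: str) -> None:
    """Raise ValueError unless the file matches the pinned digest."""
    actual = sha256_file(path)
    if not hmac.compare_digest(actual, expected_sha256.lower()):
        raise ValueError(
            f"hash mismatch for {path}: expected {expected_sha256}, got {actual}"
        )
```

Calling `verify_weights` before the model is loaded turns a silent swap of the file into a hard failure.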

Part 2: The CycloneDX 1.5 Standard

The industry standard for BOMs is CycloneDX, an OWASP flagship project. Seeing the rise of GenAI, the CycloneDX project released version 1.5 (since followed by 1.6) with explicit support for AI and Machine Learning. It introduces a new component type, machine-learning-model, along with a modelCard object for describing it.

This is not a theoretical standard; it is being adopted by tools like Hugging Face and Weights & Biases. It turns the "Black Box" into a "Glass Box."

JSON

    {
      "bomFormat": "CycloneDX",
      "specVersion": "1.5",
      "components": [
        {
          "type": "machine-learning-model",
          "name": "Llama-3-70B-Instruct",
          "version": "1.0.0",
          "purl": "pkg:huggingface/meta-llama/Meta-Llama-3-70B-Instruct@sha256:...",
          "modelCard": {
            "modelParameters": {
              "modelArchitecture": "Transformer",
              "modelFormat": "PyTorch",
              "parameterCount": 70000000000
            },
            "training": {
              "type": "Supervised Fine-Tuning",
              "process": [
                {"type": "preprocessing", "description": "Deduplication using MinHash"},
                {"type": "alignment", "description": "RLHF with PPO"}
              ],
              "dataset": [
                {
                  "name": "UltraChat_200k",
                  "url": "https://huggingface.co/datasets/...",
                  "hash": "sha256:abc123..."
                },
                {
                  "name": "Internal_Corporate_Docs_v4",
                  "classification": "Confidential"
                }
              ]
            }
          }
        }
      ]
    }

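This JSON file acts as a "Nutrition Label" for the model. (The example is simplified for readability: fields such as parameterCount, modelFormat, and training are illustrative rather than taken verbatim from the CycloneDX schema, which lists datasets under modelCard.modelParameters.datasets.) Before deploying a model to production, your CI/CD pipeline should parse this file. If the BOM lists a training dataset such as "4chan-pol-archive," your pipeline should automatically reject the deployment. If the BOM declares a Creative Commons Non-Commercial license and you are a bank, it should reject the deployment as well.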
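A pipeline gate of this kind could look roughly like the sketch below. It reads datasets from the simplified layout used in the JSON example (modelCard.training.dataset), which is this article's illustrative layout rather than the official schema, and reads licenses from the component's `licenses` list. The deny lists and the function name `policy_violations` are assumptions for the example.

```python
DENIED_DATASETS = {"4chan-pol-archive"}
DENIED_LICENSE_PREFIXES = ("CC-BY-NC",)  # non-commercial Creative Commons IDs


def policy_violations(bom: dict) -> list[str]:
    """Return human-readable reasons to reject the BOM (empty list = pass)."""
    violations = []
    for component in bom.get("components", []):
        if component.get("type") != "machine-learning-model":
            continue
        name = component.get("name", "<unnamed>")

        # Dataset location follows the simplified example, not the official schema.
        training = component.get("modelCard", {}).get("training", {})
        for dataset in training.get("dataset", []):
            if dataset.get("name") in DENIED_DATASETS:
                violations.append(f"{name}: trained on denied dataset {dataset['name']}")

        for entry in component.get("licenses", []):
            license_id = entry.get("license", {}).get("id", "")
            if license_id.startswith(DENIED_LICENSE_PREFIXES):
                violations.append(f"{name}: non-commercial license {license_id}")
    return violations
```

In CI, the job would load the BOM with `json.load`, call `policy_violations`, and exit non-zero if the list is not empty.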

Part 3: Data Poisoning and the "Sleeper Agent"

Why do we care so much about the dataset? Because of Data Poisoning, the most sophisticated attack on AI systems.

Researchers, including groups at ETH Zurich and Google DeepMind, have shown that poisoning a tiny fraction of a training set, on the order of 0.01% of the examples or less, can be enough to implant behavior that activates only for specific triggers. This is known as a "Backdoor Attack."

Case Study: The "PoisonGPT" Attack. In a 2023 demonstration, researchers at Mithril Security took a standard open-source model (GPT-J-6B) and surgically edited its weights with a model-editing technique (ROME) so that it stated one specific false fact: asked who first set foot on the Moon, it answered Yuri Gagarin. They then uploaded the model to Hugging Face under a look-alike organization name (EleuterAI, one letter off from the real EleutherAI). On everything else it behaved like the original, and standard benchmarks barely moved. Whether a lie is planted through poisoned training data or by editing the weights directly, the result for the consumer is the same. The Catch: Model scanning tools (virus scanners) found nothing. The code was clean. The weights were just numbers. Without provenance tying those weights to a known publisher, training run, and dataset, which is exactly what an AI-BOM records, the tampering was undetectable.

Defense: You must rely on Cryptographic Data Provenance. The integrity of the dataset file must be verified before training begins. You cannot just wget a CSV file. You need a signed artifact with a chain of custody.
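A chain of custody can start with something as simple as a manifest of file hashes that is checked before training begins. The sketch below assumes the dataset is a directory of files; `build_manifest` and `changed_files` are hypothetical helper names. Signing the manifest itself is out of scope here.

```python
import hashlib
from pathlib import Path


def build_manifest(root: Path) -> dict[str, str]:
    """Map each file's path (relative to root) to its SHA-256 hex digest."""
    manifest = {}
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        # read_bytes keeps the sketch short; stream large files in practice.
        digest = hashlib.sha256(path.read_bytes()).hexdigest()
        manifest[path.relative_to(root).as_posix()] = digest
    return manifest


def changed_files(root: Path, manifest: dict[str, str]) -> list[str]:
    """Return files that were added, removed, or modified since the manifest."""
    current = build_manifest(root)
    return sorted(
        name
        for name in set(current) | set(manifest)
        if current.get(name) != manifest.get(name)
    )
```

A training job would refuse to start unless `changed_files` returns an empty list for the manifest that was approved.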

Part 4: Implementing Model Signing with Sigstore

Just as we sign Docker images with Cosign to ensure they haven't been tampered with in the registry, we must sign Model Weights. Sigstore, the Linux Foundation project for code signing, is leading this charge. The concept is "Keyless Signing" using OIDC identity.

The Secure Supply Chain Spec

Train: The Data Scientist trains the model. The output is model.safetensors.

Attest: They generate an AI-BOM (CycloneDX) detailing the inputs.

Sign: The CI runner (e.g., GitHub Actions) calculates the SHA256 of model.safetensors and signs it using an ephemeral certificate tied to the runner's identity. It also signs the AI-BOM.

Store: The signature and the BOM are pushed to an OCI Registry (like GHCR or Amazon ECR) alongside the model blob.

Verify: The Inference Server (e.g., KServe or vLLM) sits behind an Admission Controller. Before the model is pulled, the signature is verified. If it is missing or invalid, the pod is refused and never starts (fail closed). "No Signature, No Service."

Bash

    # Signing a model with Cosign (conceptual; flags as in cosign 2.x)

    # 1. Record the hash of the model weights
    sha256sum model.safetensors > model.sha256

    # 2. Keyless-sign the blob with your OIDC identity (e.g., Google or GitHub).
    #    The default Sigstore login flow is used. The bundle stores the signature
    #    together with the short-lived certificate needed for verification.
    cosign sign-blob \
      --bundle model.sigstore.json \
      model.safetensors

    # 3. Verify the blob (on the production server)
    # This ensures nobody replaced the weights with a Trojan version
    cosign verify-blob \
      --bundle model.sigstore.json \
      --certificate-identity "data-scientist@company.com" \
      --certificate-oidc-issuer "https://accounts.google.com" \
      model.safetensors
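The "No Signature, No Service" rule can be sketched as a small gate that runs before the model is loaded. This example assumes the signature was stored as a Sigstore bundle (cosign's `--bundle` option) and that the `cosign` binary is on the PATH. The names `require_signed` and `cosign_verify_command` are hypothetical, and the command runner is injectable so the logic can be exercised without cosign installed.

```python
import subprocess
from typing import Callable, Sequence

Runner = Callable[[Sequence[str]], int]


def run_command(cmd: Sequence[str]) -> int:
    """Run a command and return its exit code."""
    return subprocess.run(list(cmd), check=False).returncode


def cosign_verify_command(blob: str, bundle: str, identity: str, issuer: str) -> list[str]:
    """Build the cosign verify-blob invocation for a keyless signature."""
    return [
        "cosign", "verify-blob",
        "--bundle", bundle,
        "--certificate-identity", identity,
        "--certificate-oidc-issuer", issuer,
        blob,
    ]


def require_signed(blob: str, bundle: str, identity: str, issuer: str,
                   runner: Runner = run_command) -> None:
    """Fail closed: raise unless verification ran and succeeded."""
    try:
        code = runner(cosign_verify_command(blob, bundle, identity, issuer))
    except OSError as exc:  # e.g. cosign is not installed
        raise PermissionError("signature check could not run") from exc
    if code != 0:
        raise PermissionError(f"signature verification failed for {blob}")
```

The important design choice is that every failure path, including a missing tool, ends in an exception rather than a warning, so an unverified model is never loaded by accident.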

Part 5: Regulatory Compliance (The EU AI Act)

This is not just good engineering; it is the law. The EU AI Act, which entered into force in 2024, mandates strict documentation for "High Risk" AI systems (which includes systems used in Education, Employment, Credit, and Critical Infrastructure).

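Article 11 requires "Technical Documentation" (detailed in Annex IV) that overlaps heavily with an AI-BOM. Among other things, you must document:

The sources of the data used.

That the data was relevant and representative (bias checking, under Article 10).

For general-purpose models, a policy for complying with EU copyright law (Article 53).

If you cannot produce this documentation, fines for breaching high-risk obligations reach up to EUR 15 million or 3% of global annual turnover; the 7% tier is reserved for prohibited practices. This moves AI-BOMs from "Nice to have" to "Board-Level Requirement."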

Part 6: Future Outlook (The Model Registry 2.0)

We are moving away from the "Wild West" of downloading zip files from the internet. By 2026, the standard workflow for Enterprise AI will involve Private Model Registries.

Just as you have Artifactory or Nexus for your Java JARs, you will have a Model Registry (like MLflow or AWS SageMaker Registry) that enforces policy. You will not be allowed to reference a public model URL or repository name directly in your code or configuration. You will only be able to pull from your internal, vetted, and signed registry.

Furthermore, we will see the rise of Reproducible Builds for AI. Currently, large-scale training is generally not bit-for-bit reproducible (GPU kernels and parallel floating-point reductions do not guarantee a fixed order of operations). Research is ongoing to make training 100% bit-for-bit reproducible. Once achieved, we won't distribute 100GB weight files; we will distribute the "Recipe" (Code + Data Pointers + Seed), and the consumer will rebuild the model themselves to verify integrity.
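To make the "Recipe" idea concrete, here is a toy sketch in pure Python: a tiny linear model trained with SGD, where the same data, seed, and hyperparameters always yield the same weights digest. It only illustrates the verification idea on a single CPU thread; it says nothing about how to achieve determinism on GPUs. The function names are made up for the example.

```python
import hashlib
import random
import struct


def train_toy_model(data, seed, epochs=5, lr=0.01):
    """Fit y = w*x + b with SGD; initial weights and sample order depend only on seed."""
    rng = random.Random(seed)
    w, b = rng.uniform(-1, 1), rng.uniform(-1, 1)
    samples = list(data)
    for _ in range(epochs):
        rng.shuffle(samples)
        for x, y in samples:
            err = (w * x + b) - y
            w -= lr * err * x
            b -= lr * err
    return w, b


def weights_digest(weights):
    """Hash the exact binary representation of the weights."""
    raw = struct.pack(f"<{len(weights)}d", *weights)
    return hashlib.sha256(raw).hexdigest()
```

A consumer who holds the recipe reruns `train_toy_model` and compares `weights_digest` with the published value; any mismatch means the recipe or the result was altered.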

Part 7: Actionable Checklist for Engineering Leaders

Audit your Intake: Scan your codebase for from_pretrained("user/repo"). Every direct download is a risk.

Generate AI-BOMs: Start using CycloneDX tooling (for example, cyclonedx-py for the Python dependencies) to generate a BOM for your training pipeline, and add the model and dataset components to it.

Scan for Licenses: Ensure your training data doesn't contain GPL or CC-BY-NC (Non-Commercial) data if you are selling a product.

Sign your Weights: Implement Sigstore/Cosign in your MLOps pipeline immediately.

Lock your dependencies: Pin the exact revision (commit hash) of the Hugging Face dataset and record the SHA256 of its files, not just the name. Datasets change. Hashes don't.
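The intake audit can be partly automated. The sketch below walks a Python file's syntax tree and reports every `from_pretrained(...)` call that does not pass a `revision=` argument, which is the keyword the Hugging Face loaders accept for pinning a commit. The function name `unpinned_from_pretrained` is hypothetical, and the check is a heuristic: it matches on the method name only and cannot see arguments passed via `**kwargs`.

```python
import ast


def unpinned_from_pretrained(source: str, filename: str = "<string>") -> list[tuple[int, str]]:
    """Return (line number, repo id) for from_pretrained calls without revision=."""
    findings = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        if not (isinstance(func, ast.Attribute) and func.attr == "from_pretrained"):
            continue
        if any(kw.arg == "revision" for kw in node.keywords):
            continue  # pinned to a specific revision
        first = node.args[0] if node.args else None
        if isinstance(first, ast.Constant) and isinstance(first.value, str):
            repo = first.value
        else:
            repo = "<dynamic>"  # repo id is computed at runtime
        findings.append((node.lineno, repo))
    return sorted(findings)
```

Run over every `.py` file in a repository, the output is a worklist of model downloads that still need to be pinned or moved to an internal registry.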

Part 8: Glossary of Terms

AI-BOM: Artificial Intelligence Bill of Materials. A nested inventory of data, weights, and tools.

CycloneDX: The open standard (OWASP) for software supply chain security, now supporting ML.

Data Poisoning: Maliciously altering training data to corrupt model behavior or inject backdoors.

Model Signing: Attaching a cryptographic signature to a model file so that its author and integrity can be verified.

Safetensors: A secure file format for storing tensors (weights) that prevents arbitrary code execution (unlike Pickle).

Provenance: The chronological history of the ownership, custody, or location of a data object.

Conclusion

Security is transitive. If you trust the model, you must trust the data. If you don't track the data, you are building your castle on sand. The era of "move fast and break things" is over for AI. We are entering the era of "move fast and prove things."

The AI-BOM is your proof.
