Over the past few years, Kubernetes has become the backbone of modern cloud-native infrastructure. Companies use Kubernetes to run microservices, machine learning workloads, APIs, streaming platforms, and even large enterprise applications. Its ability to automate deployments, manage scaling, and ensure application resilience has made it one of the most widely adopted technologies in modern DevOps.

However, while Kubernetes simplifies many aspects of application deployment, it introduces a new layer of infrastructure complexity that can make cost management significantly more challenging.

In this guide, we will break down the complete cost structure of Kubernetes platforms, explore the key components that influence spending, and discuss how organizations can maintain visibility and control over their Kubernetes infrastructure.

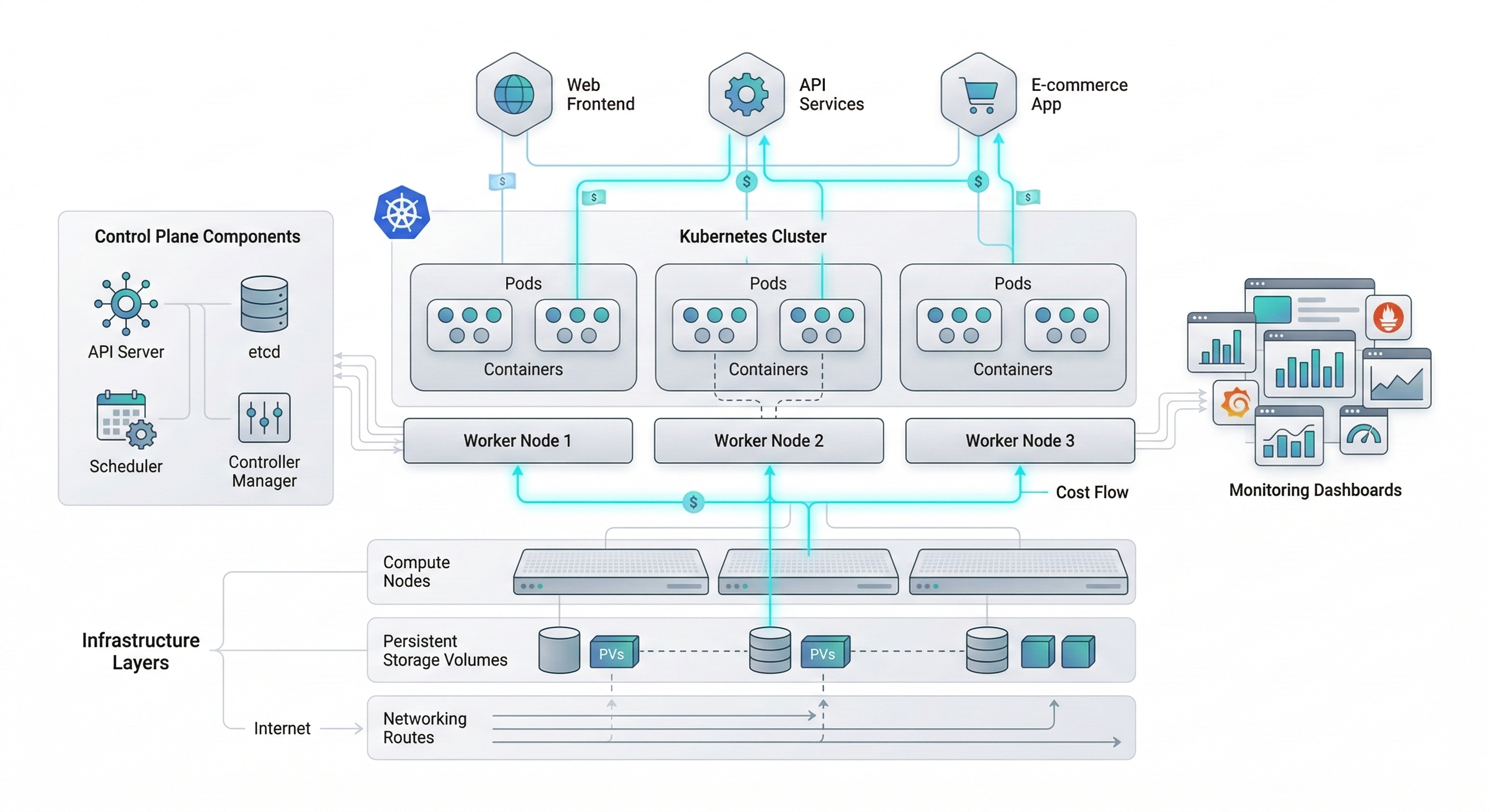

Understanding the Core Components of Kubernetes Costs

Kubernetes itself is an open-source system, which means the platform itself is technically free to use. However, the infrastructure required to run Kubernetes clusters is far from free.

Most Kubernetes costs originate from the cloud resources required to operate clusters and the supporting services that enable them to function effectively.

The main cost layers typically include:

Compute infrastructure

Storage resources

Networking and data transfer

Control plane management

Observability and monitoring tools

Platform engineering overhead

Let’s examine each of these layers in detail.

Compute Costs: The Largest Component of Kubernetes Spending

Compute resources represent the largest portion of Kubernetes platform costs. Every Kubernetes cluster relies on worker nodes to run containers, and these nodes typically consist of virtual machines provided by cloud providers.

Each node provides CPU, memory, and sometimes GPU resources used by containers running inside pods.

As applications scale, Kubernetes automatically schedules additional pods across available nodes. If workloads exceed the available node capacity, the cluster autoscaler may provision additional nodes to handle the increased demand.

While this dynamic scaling is extremely powerful, it can also lead to inefficiencies.

For example:

1. Nodes may remain underutilized while still incurring full infrastructure costs.

2. Overprovisioned clusters may allocate more compute capacity than required.

3. Autoscaling policies may add nodes faster than they are removed.

When clusters contain dozens or hundreds of nodes, even small inefficiencies can translate into significant monthly infrastructure expenses.

Another factor influencing compute costs is instance selection. Many teams choose larger instance types to avoid performance bottlenecks, yet this often results in unused CPU or memory capacity.

Efficient workload scheduling and right-sizing compute resources are therefore essential for controlling Kubernetes compute costs.

Storage Costs in Kubernetes Environments

Modern applications generate large volumes of data, and Kubernetes environments rely heavily on persistent storage to support stateful workloads.

Storage costs typically arise from several sources:

Persistent volumes used by applications

Backup and snapshot storage

Container image registries

Log storage and archival systems

Persistent volumes are especially important for databases, message queues, and other stateful services running inside Kubernetes clusters.

Cloud providers offer various storage options, including block storage, object storage, and distributed file systems. Each option has different pricing models depending on storage capacity, performance tier, and input/output operations.

Over time, unused volumes and outdated snapshots can accumulate, creating unnecessary storage expenses. Without proper lifecycle policies, organizations may continue paying for storage resources that are no longer needed.

Networking and Data Transfer Costs

Networking is another critical yet often overlooked contributor to Kubernetes infrastructure costs.

Within a Kubernetes cluster, containers communicate through virtual networks. However, many workloads also rely on communication between clusters, cloud services, and external APIs.

Networking costs can include:

Load balancer services

Internal service communication

Data transfer between availability zones

Cross-region data replication

External API communication

Load balancers are particularly common in Kubernetes environments. Each public-facing service may require a load balancer, which incurs additional costs depending on the cloud provider.

Similarly, data transfer between regions or across cloud environments can introduce significant charges, especially for high-traffic applications.

Because networking traffic often scales alongside application usage, these costs can grow rapidly as services expand.

Control Plane Costs in Managed Kubernetes Platforms

Many organizations choose managed Kubernetes platforms provided by cloud providers such as Amazon Web Services, Google Cloud, and Microsoft Azure.

Managed Kubernetes services simplify cluster operations by handling control plane management tasks such as scheduling, cluster health monitoring, and API server availability.

However, these services often introduce additional platform fees.

Control plane costs may include:

Cluster management fees

API server operations

Control plane scaling infrastructure

Service-level availability guarantees

Although these fees are generally smaller than compute costs, they still contribute to the overall Kubernetes platform cost structure.

Observability and Monitoring Costs

Running Kubernetes clusters at scale requires strong observability practices.

Engineering teams rely on monitoring tools to track system health, performance metrics, and infrastructure behavior. These tools collect massive volumes of data from containers, nodes, and applications.

Common observability tools include platforms like Datadog, New Relic, and Grafana Labs.

These platforms help teams track metrics such as:

CPU and memory utilization

Application latency

Container health

Error rates

Network performance

However, observability platforms often charge based on data ingestion volume or monitoring coverage. As Kubernetes clusters grow, telemetry data volumes increase significantly, which can raise monitoring costs.

Logs generated by containers can also accumulate rapidly, creating additional storage and processing expenses.

Platform Engineering and Operational Costs

Beyond infrastructure expenses, Kubernetes platforms also introduce operational costs related to engineering teams.

Many organizations create dedicated platform engineering teams responsible for building and maintaining internal developer platforms on top of Kubernetes.

These teams manage:

CI/CD pipelines

Infrastructure automation

security policies

deployment frameworks

developer tooling

Although these efforts improve developer productivity, they also represent a significant investment in engineering resources.

Operational costs are often overlooked when evaluating Kubernetes platform economics, yet they play a crucial role in the overall cost structure.

The Hidden Inefficiencies of Kubernetes Platforms

Despite Kubernetes' powerful automation capabilities, several inefficiencies commonly appear in real-world environments.

These include:

Idle nodes running without workloads

Overprovisioned containers requesting excessive resources

Unused development environments

Orphaned storage volumes

Inefficient autoscaling configurations

Because Kubernetes abstracts infrastructure across multiple layers, these inefficiencies are not always immediately visible. As clusters grow, the lack of clear visibility into resource usage can make cost optimization increasingly difficult.

Improve Kubernetes Cost Intelligence

Modern cloud environments require tools that provide deeper insights into infrastructure usage and cost patterns.

Platforms like Atler Pilot help organizations gain better visibility into complex cloud infrastructure environments, including Kubernetes clusters.

By providing centralized insights into resource utilization, infrastructure activity, and cloud spending patterns, Atler Pilot enables engineering teams to better understand how Kubernetes workloads impact overall infrastructure costs.

Instead of relying on fragmented dashboards across different cloud services, teams can gain a unified perspective on their infrastructure environments. With deeper infrastructure intelligence, organizations can detect inefficiencies earlier, identify underutilized resources, and make more informed decisions about cluster scaling and workload distribution.

This level of visibility becomes especially valuable as Kubernetes environments expand across multiple clusters, cloud providers, and engineering teams.

Conclusion

Kubernetes has fundamentally transformed the way modern applications are deployed and operated. Its automation capabilities enable organizations to scale infrastructure dynamically, manage distributed workloads, and build highly resilient systems. However, this flexibility also introduces a complex infrastructure cost structure.

Compute resources, storage systems, networking services, monitoring platforms, and operational overhead all contribute to the total cost of running Kubernetes environments. Without clear visibility into these layers, organizations may struggle to understand how infrastructure decisions influence cloud spending.

The key to operating efficient Kubernetes platforms lies in balancing scalability with cost awareness. By monitoring infrastructure usage, optimizing resource allocation, and improving visibility into cloud spending patterns, engineering teams can build Kubernetes environments that scale efficiently and sustainably. As Kubernetes adoption continues to grow, organizations that understand and manage its cost structure effectively will be better positioned to build cloud platforms that are not only powerful but also economically sustainable.

All in One Place

Atler Pilot decodes your cloud spend story by bringing monitoring, automation, and intelligent insights together for faster and better cloud operations.