For the last 15 years, the Big Data playbook was simple: Copy Everything.

Copy logs from phones to S3. Copy health records from hospitals to the Cloud. Build a massive "Data Lake" and train your model.

This model is breaking.

Regulation: GDPR and HIPAA tightly restrict how personal and health data can be moved and shared, and cross-border transfers in particular carry heavy legal risk.

Bandwidth: You cannot upload terabytes of 4K video from a self-driving car every day.

Liability: A centralized database of user secrets is a hacker's dream target.

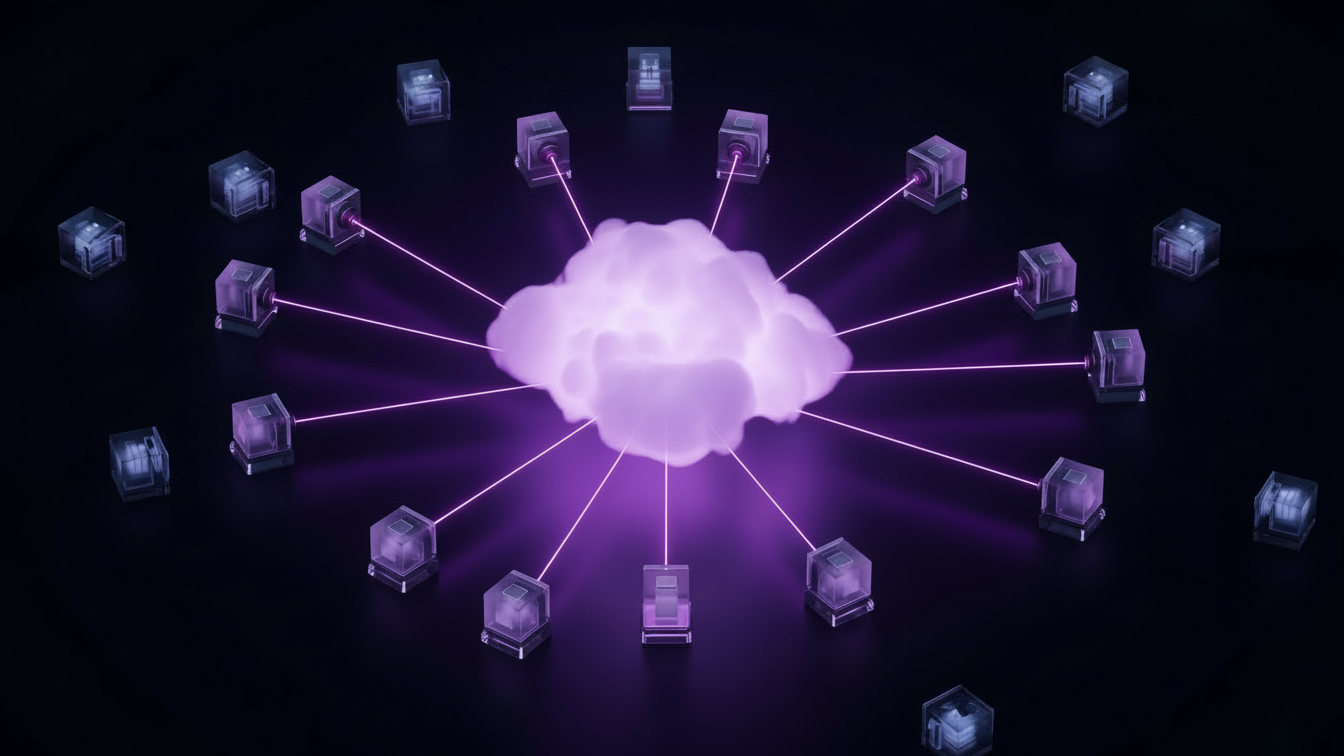

Federated Learning (FL) inverts the paradigm. Instead of bringing the data to the code, you bring the code to the data.

The Gboard Example:

Google Gboard (the Android keyboard) predicts what you will type next. It is trained on billions of keystrokes.

But Google does NOT upload your keystrokes to the cloud. That would be a privacy nightmare.

Instead, the model training happens on your phone while you sleep.

Part 1: The Federated Averaging (FedAvg) Algorithm

How do we learn from 1 billion phones without seeing their data? The protocol works in rounds.

Step 1: Broadcast

The central server creates a "Global Model" (Version 1.0) and sends it to a random subset of 1,000 users.

Step 2: Local Training

Your phone takes the Global Model and trains it for a few epochs on your local data (text messages). This produces a "Local Model" that is slightly better at predicting your behavior.

Step 3: Update Upload

Your phone calculates the model update (the difference between the Global Model and your Local Model, often loosely called the "gradient"). It uploads only these numbers to the server, not your messages. "Hey Google, move the weights +0.001 in this direction."

Step 4: Aggregation

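The server receives 1,000 updates and averages them. (Full FedAvg weights each update by the number of training examples behind it; the plain mean here assumes every phone holds the same amount of data.)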

(Update_Phone_1 + Update_Phone_2 + ... + Update_Phone_1000) / 1000

This average becomes Global Model Version 1.1.

```python
# -------------------------------------------------------------------------
# Simulating Federated Averaging (FedAvg) in Python
# -------------------------------------------------------------------------
# This shows how weights are averaged without the server seeing any data.

import numpy as np

# Imagine a simple model with 3 weights (w1, w2, w3)
global_model = np.array([0.5, 0.5, 0.5])

# Three users train locally on their private data and report
# their locally trained weights.

# User A (Likes Cats) pushes weights up
user_a_update = np.array([0.6, 0.7, 0.5])

# User B (Likes Dogs) pushes weights down
user_b_update = np.array([0.4, 0.3, 0.5])

# User C (Neutral)
user_c_update = np.array([0.5, 0.5, 0.5])


def server_aggregate(updates):
    # The server ONLY sees the updates, not the data.
    # It takes the element-wise mean across users.
    new_weights = np.mean(updates, axis=0)
    return new_weights


updates = [user_a_update, user_b_update, user_c_update]
new_global = server_aggregate(updates)

print(f"Old Global: {global_model}")
print(f"New Global: {new_global}")

# Result: [0.5, 0.5, 0.5] -> The bias canceled out!
# In reality, this happens across millions of parameters.
```
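The simulation above averages hand-picked weights. As a rough sketch of all four steps in one loop (broadcast, local training, update upload, aggregation), the example below trains a tiny linear model across three simulated clients. The helper names `local_train` and `fedavg_round`, the learning rate, and the synthetic data are illustrative assumptions, not part of any production system.

```python
import numpy as np

def local_train(global_weights, X, y, lr=0.1, epochs=5):
    """Step 2: a few epochs of gradient descent on one client's private data."""
    w = global_weights.copy()
    for _ in range(epochs):
        grad = 2 * X.T @ (X @ w - y) / len(y)  # gradient of mean squared error
        w -= lr * grad
    return w

def fedavg_round(global_weights, clients):
    """Steps 1-4 for one round; only weight deltas reach the aggregation."""
    deltas, sizes = [], []
    for X, y in clients:  # each (X, y) stands in for data that stays on-device
        local_weights = local_train(global_weights, X, y)
        deltas.append(local_weights - global_weights)  # Step 3: the upload
        sizes.append(len(y))
    # Step 4: average the deltas, weighted by each client's example count
    avg_delta = np.average(deltas, axis=0, weights=sizes)
    return global_weights + avg_delta

# Synthetic clients with different amounts of data (assumed for illustration)
rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0])
clients = []
for n in (20, 50, 30):
    X = rng.normal(size=(n, 2))
    y = X @ true_w + rng.normal(scale=0.1, size=n)
    clients.append((X, y))

global_w = np.zeros(2)
for _ in range(30):
    global_w = fedavg_round(global_w, clients)

print("Learned weights:", global_w)
```

After 30 rounds the global weights should land close to `true_w`, even though the aggregation step only ever handled deltas.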

Part 2: The Attack Surface (Gradient Leakage)

Is this private? Not entirely.

Researchers have shown that if you look closely at the "Gradient Update," you can reverse-engineer the training data. If your phone says "drastically increase the probability of the word 'HIV'," the server can infer that the user probably typed 'HIV'.
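To make the leak concrete, here is a toy sketch assuming a bag-of-words logistic model, which is far simpler than a real keyboard model. The vocabulary, the helper names, and the message are all made up for illustration. The point is only that the gradient is non-zero exactly at the words the user typed.

```python
import numpy as np

vocab = ["the", "cat", "dog", "hiv", "test", "pizza"]

def bag_of_words(message):
    """Count how often each vocabulary word appears in the message."""
    words = message.lower().split()
    return np.array([words.count(v) for v in vocab], dtype=float)

def client_gradient(weights, message, label):
    """Logistic-loss gradient for one private message (computed on-device)."""
    x = bag_of_words(message)
    pred = 1.0 / (1.0 + np.exp(-(weights @ x)))
    return (pred - label) * x

def server_guess_words(gradient):
    """The server sees only the gradient, yet can read off the typed words."""
    return [vocab[i] for i in np.flatnonzero(gradient)]

weights = np.zeros(len(vocab))
grad = client_gradient(weights, "the hiv test", label=1.0)
leaked = server_guess_words(grad)
print(leaked)  # ['the', 'hiv', 'test']
```

Attacks on deep networks are more involved, but the principle is the same: the update is a function of the private data, so it carries information about it.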

Deep Dive: The 'Apple' Approach (Local Differential Privacy):

Apple adds the noise on the device itself. A simplified picture of the idea (classic randomized response): before your iPhone sends an emoji usage report to Apple, it flips a coin.

Heads: It sends your true answer.

Tails: It flips a second coin. Heads=Yes, Tails=No (Random Noise).

Apple receives a database that is 50% garbage. But they know the probability of the coin flips. So they can mathematically "subtract" the noise to get the aggregate trend (e.g., "People love the Eggplant emoji") without ever knowing your specific choice.
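The "subtraction" is simple arithmetic. Under the two-coin scheme described above, the chance of a "yes" report is 0.5 × (true rate) + 0.25, so the true rate can be estimated as 2 × (observed rate − 0.25). The sketch below simulates this with fair coins and an assumed true rate of 30%; the function names are hypothetical and this is not Apple's actual implementation.

```python
import numpy as np

rng = np.random.default_rng(42)

def randomized_response(truth, rng):
    """Heads: report the truth. Tails: report a second, independent coin."""
    first_heads = rng.random(truth.shape) < 0.5
    second_coin = rng.random(truth.shape) < 0.5
    return np.where(first_heads, truth, second_coin)

def estimate_true_rate(reports):
    """Invert P(yes) = 0.5 * true_rate + 0.25 to recover the aggregate."""
    return 2.0 * (reports.mean() - 0.25)

true_rate = 0.30                           # assumed share of real "yes" answers
truth = rng.random(100_000) < true_rate    # each user's private answer
reports = randomized_response(truth, rng)  # what the server receives
estimate = estimate_true_rate(reports)

print(f"Observed 'yes' rate: {reports.mean():.3f}")
print(f"Estimated true rate: {estimate:.3f}")
```

Any single report is deniable, because it may have come from the second coin, while the estimate over many users stays close to the real rate.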

Part 3: Differential Privacy (DP)

To limit gradient leakage during federated training, we need Differential Privacy (DP).

Before uploading the update, your phone clips it to a maximum size and adds Gaussian Noise to the numbers. The noise is large enough to mask your specific data, but because it has mean 0, it largely averages out across 1 million users and the signal remains.
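A minimal numerical sketch of that trade-off follows. The clipping norm, noise scale, and user count are arbitrary illustrative values, not calibrated to any formal privacy budget, and `privatize` is a hypothetical helper name.

```python
import numpy as np

rng = np.random.default_rng(7)

def privatize(update, clip_norm, noise_std, rng):
    """On-device step: bound the update's L2 norm, then add Gaussian noise."""
    norm = np.linalg.norm(update)
    clipped = update * min(1.0, clip_norm / max(norm, 1e-12))
    return clipped + rng.normal(scale=noise_std, size=update.shape)

n_users, dim = 20_000, 3
signal = np.array([0.02, -0.01, 0.03])  # the shared trend we want to learn
updates = signal + rng.normal(scale=0.01, size=(n_users, dim))

# Each user adds noise far larger than the signal itself
noisy = np.array([privatize(u, clip_norm=1.0, noise_std=0.5, rng=rng)
                  for u in updates])

print("One user's true update: ", updates[0])
print("What that user uploads: ", noisy[0])
print("Average of all uploads: ", noisy.mean(axis=0))
print("True signal:            ", signal)
```

An individual upload is dominated by noise, while the average over all users should track the signal, with an error that shrinks roughly as 1/sqrt(n_users).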

Part 4: Secure Aggregation

We can go even further. We can use cryptography so the server cannot even see the individual updates.

Imagine Alice, Bob, and Charlie want to calculate their average salary without telling anyone their salary.

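Alice and Bob agree on a large random number X that the server never sees. Alice adds it (+X) to her salary before uploading.

Bob subtracts that same random number (-X) from his salary.

When the server adds them up: (Alice + X) + (Bob - X) = Alice + Bob. The X vanishes. Charlie shares a similar mask with each of them, so every individual upload looks random while the total (and therefore the average) is exact.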

Secure Aggregation uses this principle (at scale) to ensure the server only sees the final sum.
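Here is a toy version of the pairwise-mask idea for all three people. It is a sketch only: the salaries are made up, and for brevity one function generates every mask, whereas a real protocol has each pair derive its mask privately through key agreement and must also handle participants who drop out.

```python
import itertools
import secrets

MODULUS = 2**32  # all arithmetic is done modulo this number

def masked_inputs(values):
    """For each pair (i, j): i adds a shared random mask, j subtracts it."""
    masked = list(values)
    for i, j in itertools.combinations(range(len(values)), 2):
        mask = secrets.randbelow(MODULUS)
        masked[i] = (masked[i] + mask) % MODULUS
        masked[j] = (masked[j] - mask) % MODULUS
    return masked

# Hypothetical salaries, for illustration only
salaries = {"Alice": 82_000, "Bob": 64_000, "Charlie": 71_000}

uploads = masked_inputs(list(salaries.values()))  # what the server receives
total = sum(uploads) % MODULUS                    # masks cancel in the sum
average = total / len(salaries)

print("Masked uploads:", uploads)
print("Recovered total:", total)
print(f"Average salary: {average:.2f}")
```

Each masked upload is a random-looking number, yet the total comes out to exactly 217,000 because every mask is added once and subtracted once.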

Part 5: Expert Interview

Topic: The End of the Data Lake

Guest: Dr. Chen, Privacy Researcher at a Global Cloud Provider.

Interviewer: Is the 'Data Lake' dead?

Dr. Chen: The Centralized Data Lake is on life support. The liability is too high. If you hold 100 million patient records in one S3 bucket, you are sitting on a nuclear bomb. You have to defend it 24/7. C-Suites are realizing that data is not just an asset; it is 'Toxic Waste' if you don't manage it.

Interviewer: So what replaces it?

Dr. Chen: The 'Virtual' Lake. Data stays where it is born—on the phone, in the hospital server, in the car. We send the query to the data. We send the model to the data. We never move the data. This solves Data Sovereignty (GDPR) and Security in one shot.

Part 6: Tooling Landscape (PySyft vs TFF)

You don't have to build the crypto from scratch. There are open-source libraries.

| Framework | Owner | Strengths | Weaknesses |
| --- | --- | --- | --- |
| TensorFlow Federated (TFF) | Google | Production-grade. Used in Gboard. Highly scalable. | Hard to learn. Tied to TensorFlow ecosystem. |
| PySyft | OpenMined | Privacy-first. Excellent support for Differential Privacy & SMPC. | Still in beta/maturation. Documentation can be sparse. |
| Flower (Flwr) | Flower Labs (formerly Adap) | Framework agnostic (PyTorch, TF, JAX). Easy to start. | Less built-in crypto support (DIY security). |
| NVIDIA FLARE | NVIDIA | Enterprise-grade. Optimized for Medical Imaging (Clara). | Tied to the NVIDIA ecosystem; some related Clara components are proprietary. |

Part 7: Glossary

FedAvg: The standard algorithm for aggregating weights.

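Differential Privacy (DP): A mathematical framework for quantifying privacy loss. In practice, it means adding calibrated noise so that no individual's contribution can be singled out.

Non-IID (not independent and identically distributed): When each client's data does not represent the overall distribution (e.g., every phone reflects only its owner's vocabulary).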

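Vertical FL: Parties that hold DIFFERENT features about the SAME users train a model together without pooling raw data (e.g., Bank + Retailer).

Horizontal FL: Parties that hold the SAME kind of features about DIFFERENT users train a model together without pooling raw data (e.g., Hospital A + Hospital B).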

Gradient: The vector showing how much to change the model weights to reduce error.

SMPC (Secure Multi-Party Computation): A subfield of cryptography where parties compute a joint function without revealing their inputs.

Data Sovereignty: The legal concept that data is subject to the laws of the country where it is collected or resides.

SMPC vs Differential Privacy (DP):

People confuse them. Here is the difference:

SMPC protects the input from the server. (The server never sees your salary).

DP protects the output from the analyst. (The analyst sees the average, but can't reverse-engineer your salary from it).

You usually need both.

Conclusion

Privacy is not a feature; it is an architectural requirement. Federated Learning allows us to have our cake (smart AI) and eat it too (private data).
