The release of Llama 3.2 (1B and 3B parameters) has reignited the "Build vs. Buy" debate. Is it better to pay OpenAI $25/1M tokens to fine-tune GPT-4o, or to host your own fine-tuned Llama model?

The answer lies in your Inference Volume. Let's run the math.

The Cost of Training (The Sunk Cost)

GPT-4o Fine-Tuning: OpenAI charges roughly $25 per million training tokens. For a dataset of 100k examples (~50M tokens), you pay $1,250 upfront.

Llama 3.2 (3B) Fine-Tuning: You can fine-tune this on a single NVIDIA A100 GPU in about 3 hours. Cloud cost: $\sim\$4/hr \times 3 = \$12$.

Winner: Llama 3.2 (by a landslide).
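The training comparison above is simple arithmetic, and a small script makes it easy to rerun with your own dataset size or GPU price. This is a minimal sketch: the function names are illustrative, and the prices are the rough figures quoted in this article rather than current list prices.

```python
# Upfront training cost, using the article's rough figures (assumptions, not quotes).

def api_finetune_cost(training_tokens: int, usd_per_million: float) -> float:
    """Cost of a hosted fine-tuning job billed per training token."""
    return training_tokens / 1_000_000 * usd_per_million


def gpu_finetune_cost(gpu_hours: float, usd_per_gpu_hour: float) -> float:
    """Cost of renting a GPU for the duration of a fine-tuning run."""
    return gpu_hours * usd_per_gpu_hour


gpt4o_training = api_finetune_cost(50_000_000, 25.0)  # ~50M tokens at $25/1M
llama_training = gpu_finetune_cost(3, 4.0)            # ~3 A100-hours at ~$4/hr

print(f"GPT-4o fine-tuning:    ${gpt4o_training:,.2f}")
print(f"Llama 3.2 fine-tuning: ${llama_training:,.2f}")
```

Both numbers are one-time costs per training run, so they matter less the longer the model stays in production.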

The Cost of Inference (The Recurring Cost)

This is where the lines cross.

Scenario A: Low Volume (1M tokens/day)

GPT-4o Custom Model: You pay a premium inference rate (e.g., $15/1M tokens). Daily cost: $15.

Self-Hosted Llama 3.2: You need a dedicated GPU instance (e.g., AWS g5.xlarge) running 24/7 to serve the model with low latency. Cost: $\sim\$1.00/hr \times 24 = \$24/day$.

Verdict: GPT-4o is cheaper. At low volumes, the "idle tax" of renting a server makes self-hosting inefficient.

Scenario B: High Volume (100M tokens/day)

GPT-4o Custom Model: $\$15 \times 100 = \$1,500/day$.

Self-Hosted Llama 3.2: A 3B model is incredibly efficient. One g5.xlarge can process ~3,000 tokens/sec. You might need 2 instances to handle concurrency. Cost: $48/day.

Verdict: Llama 3.2 is 30x cheaper.
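To see where the lines cross between the two scenarios, the same numbers can be turned into a break-even calculation. This is a sketch under the article's assumptions ($15 per 1M tokens for the API, about $1.00/hr per g5.xlarge, ~3,000 tokens/sec per instance); the constant and function names are illustrative, and real prices and throughput will vary.

```python
API_USD_PER_MILLION = 15.0        # fine-tuned GPT-4o inference rate used in the text
INSTANCE_USD_PER_HOUR = 1.0       # approximate g5.xlarge price used in the text
INSTANCE_TOKENS_PER_SEC = 3_000   # rough 3B-model throughput used in the text


def api_daily_cost(tokens_per_day: float) -> float:
    """Pay-per-token cost: scales linearly with volume."""
    return tokens_per_day / 1_000_000 * API_USD_PER_MILLION


def self_hosted_daily_cost(instances: int) -> float:
    """Flat cost: instances run 24/7 whether or not they are busy."""
    return instances * INSTANCE_USD_PER_HOUR * 24


def break_even_tokens_per_day(instances: int) -> float:
    """Daily volume at which the API bill equals the self-hosting bill."""
    return self_hosted_daily_cost(instances) * 1_000_000 / API_USD_PER_MILLION


def instance_capacity_tokens_per_day() -> int:
    """Theoretical ceiling for one instance at full utilization."""
    return INSTANCE_TOKENS_PER_SEC * 86_400


scenarios = [("Scenario A", 1_000_000, 1), ("Scenario B", 100_000_000, 2)]
for label, tokens, instances in scenarios:
    api = api_daily_cost(tokens)
    hosted = self_hosted_daily_cost(instances)
    print(f"{label}: API ${api:,.2f}/day vs self-hosted ${hosted:,.2f}/day")

print(f"Break-even (1 instance): {break_even_tokens_per_day(1):,.0f} tokens/day")
print(f"Capacity (1 instance):   {instance_capacity_tokens_per_day():,} tokens/day")
```

With these inputs, a single instance breaks even at about 1.6M tokens/day. Its theoretical ceiling is far above Scenario B's 100M tokens/day, so the second instance in that scenario is headroom for concurrency and peak traffic rather than raw throughput.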

The "Good Enough" Threshold

The financial argument for Llama 3.2 is undeniable at scale. The only question is performance.

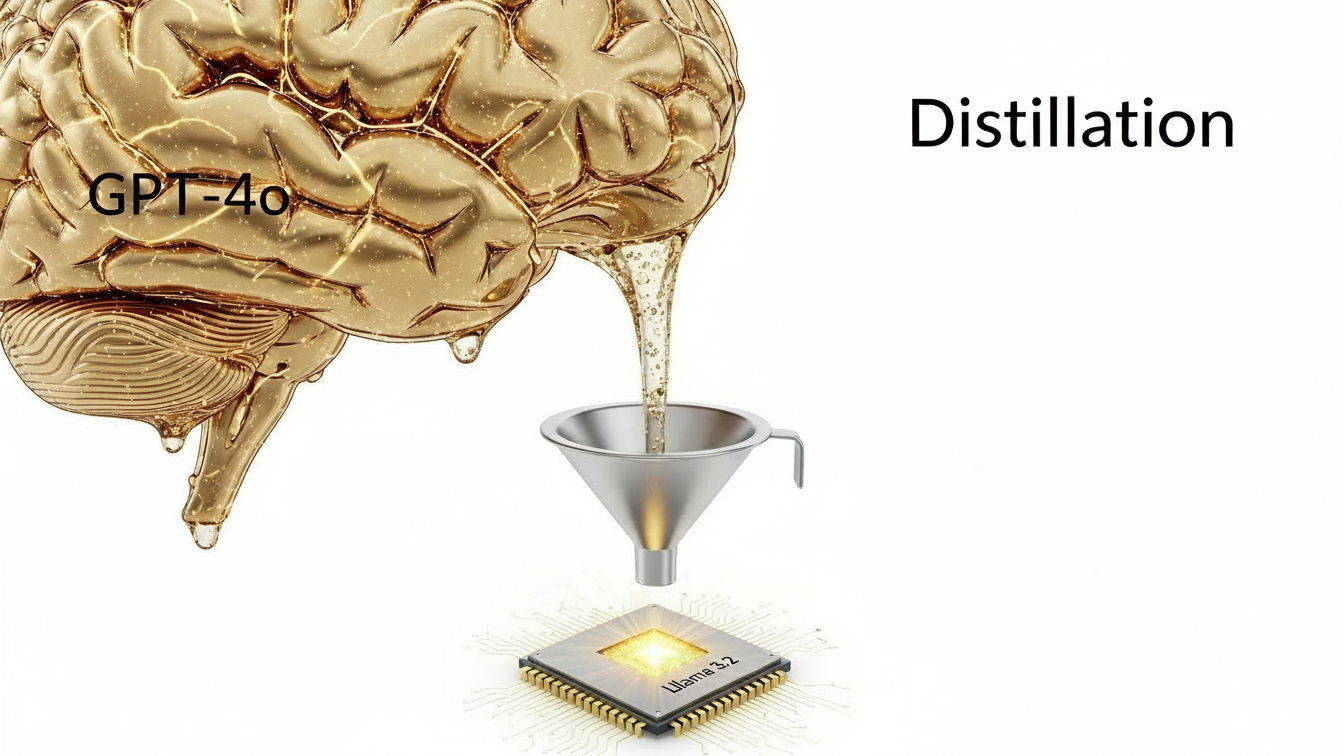

Pattern (step 1): Use GPT-4o to generate synthetic training data.

Pattern (step 2): Use that data to fine-tune Llama 3.2.

Result: You distill the intelligence of the large model into the cost structure of the small model.
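The data-generation half of this pattern can be sketched as a small script that asks a "teacher" for an answer to each prompt and writes the pairs to a JSONL file. Everything here is hypothetical scaffolding: `build_distillation_set` and `fake_teacher` are illustrative names, the chat-style `messages` record layout is an assumption about what your fine-tuning tool expects, and the teacher is a stub so the example runs offline. In practice you would replace the stub with a call to the large model's API.

```python
import json
import os
import tempfile
from typing import Callable, Iterable


def build_distillation_set(
    prompts: Iterable[str],
    teacher: Callable[[str], str],
    out_path: str,
) -> int:
    """Write one chat-style training record per prompt; return the record count."""
    count = 0
    with open(out_path, "w", encoding="utf-8") as f:
        for prompt in prompts:
            answer = teacher(prompt)  # in practice: a request to the large model
            record = {
                "messages": [
                    {"role": "user", "content": prompt},
                    {"role": "assistant", "content": answer},
                ]
            }
            f.write(json.dumps(record, ensure_ascii=False) + "\n")
            count += 1
    return count


def fake_teacher(prompt: str) -> str:
    """Stand-in for the GPT-4o call (assumption: swap in a real client here)."""
    return f"(teacher answer to: {prompt})"


demo_prompts = ["Summarize our refund policy.", "Classify this ticket: 'App crashes on login'."]
demo_path = os.path.join(tempfile.gettempdir(), "distill_train.jsonl")
n = build_distillation_set(demo_prompts, fake_teacher, demo_path)
print(f"wrote {n} records to {demo_path}")
```

Keeping the teacher behind a plain callable makes it easy to add caching, retries, or a quality filter before the data reaches the fine-tuning run.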

Conclusion: If you are processing under 20M tokens a month, stick to APIs. If you are building a feature that scales to millions of users, fine-tuning SLMs is the only path to positive unit economics.
