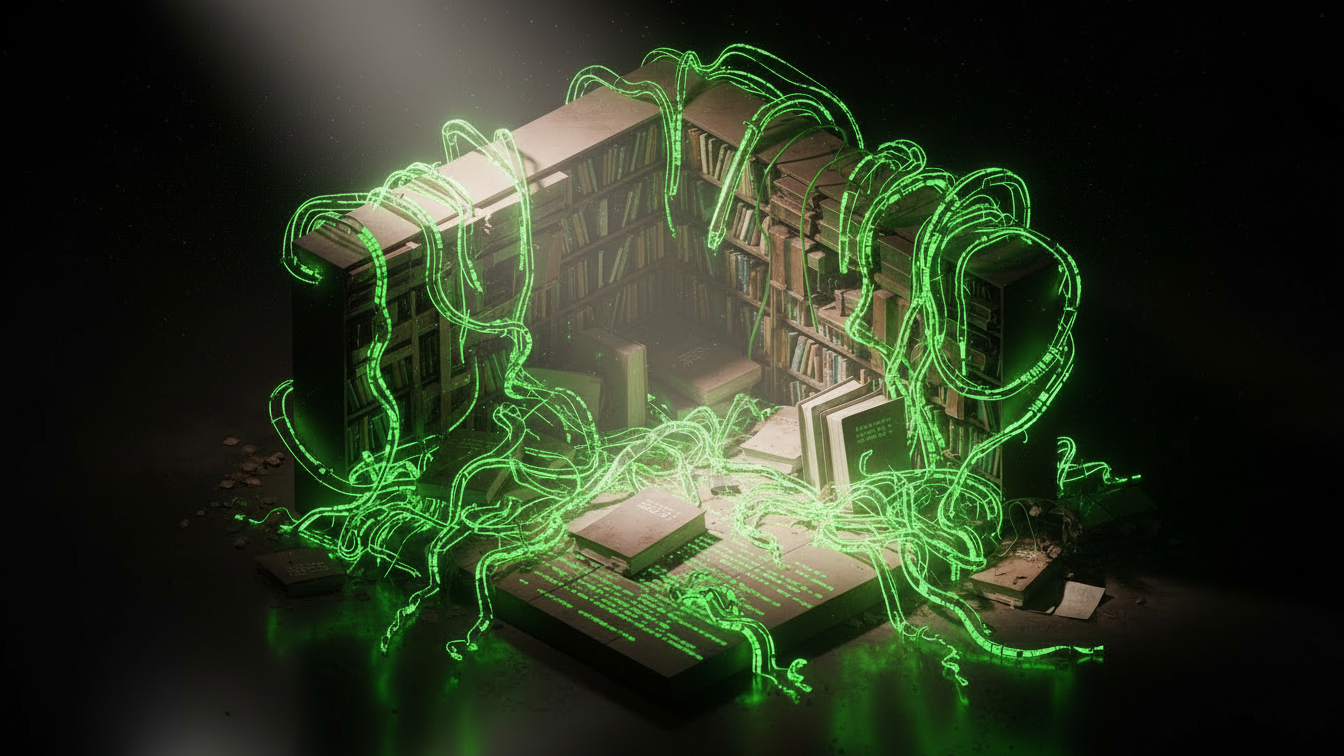

For 15 years (2008-2023), Stack Overflow (SO) was the Library of Alexandria for programmers. It was a perfect "Gift Economy."

Juniors asked questions.

Seniors answered them for "Reputation" points.

The result was a public, searchable archive of all human coding knowledge.

Then came Copilot and ChatGPT.

In 2024, Stack Overflow's traffic fell by an estimated 40 to 45%. Why ask a question on SO (and get yelled at for "Duplicate Question" or "Read the Docs") when you can ask ChatGPT and get an instant, polite, and usually good-enough answer?

The Paradox: ChatGPT is smart only because it read Stack Overflow. It is a siphon. It extracts value from the community commons but puts nothing back. If the commons dies, the siphon runs dry.

The Traffic Crash (Estimated 2024 Stats):

Stack Overflow: -45% Traffic YoY.

Reddit (IT Career Questions): +20% Traffic (People want career advice, not code components).

ChatGPT: 100M+ Weekly Active Users.

The shift is undeniable. We are moving from "Search" to "Generation".

Part 1: The Hidden Migration (To Walled Gardens)

Code isn't disappearing. It's just going dark. When a developer solves a hard bug in 2026, they aren't writing a blog post. They aren't answering a question on SO. They are posting the solution in:

A private Company Slack/Teams.

A gated Discord server.

A customized Notion wiki.

This is the Dark Web of Knowledge. OpenAI's crawlers cannot reach it. The "Open Web" is becoming a graveyard of SEO spam and AI-generated slop, while the high-quality human discourse retreats behind login walls.

Part 2: The "Ouroboros" Effect (AI Learning from AI)

If human-generated data dries up, models will have to train on... synthetic data.

The Nightmare Scenario:

GPT-5 generates code.

Junior Dev posts that code on GitHub.

GPT-6 scrapes GitHub.

GPT-6 is training on GPT-5's output.

This feedback loop leads to Model Collapse. The variance shrinks. The weird, creative, edge-case solutions disappear. The model converges on "Mediocre Average."

```python
# -------------------------------------------------------------------------
# The "Synthetic Slop" Generator
# -------------------------------------------------------------------------
# This script demonstrates how easy it is to flood the web with low-quality
# content, drowning out human voices.
import random

buzzwords = ["digital transformation", "synergy", "paradigm shift", "leverage"]
adjectives = ["game-changing", "disruptive", "seamless", "robust"]


def generate_slop():
    return f"In today's {random.choice(adjectives)} landscape, it is crucial to {random.choice(buzzwords)} your assets."


# Generate 1,000 bad tweets in 1 second
for _ in range(1000):
    slop = generate_slop()
    # print(slop)
    # ^ Imagine this posted to Twitter/Reddit/LinkedIn automatically.

# The Internet is becoming 99% this.
```

The Mathematics of Inbreeding: A group of researchers at Oxford and collaborating institutions trained a language model on its own output across successive generations.

Generation 0: Trained on human-written Wikipedia text. (The baseline.)

Generation 1: Trained on Gen 0 output. (Good, but lost some rare birds.)

Later generations: The output drifts into repetition and nonsense. In the example the researchers published, a passage about medieval church architecture had decayed, several generations in, into a repetitive list of jackrabbit varieties.

Conclusion: Without fresh human input (variance), models converge to madness.
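The way rare cases drop out of a distribution under this kind of loop can be illustrated with a toy simulation. This is a minimal sketch, not the researchers' setup: the species names, the Zipf-like starting distribution, and the `simulate_collapse` function are all assumptions made for illustration. Each generation sees only a finite sample of the previous generation's output and refits its distribution from that sample.

```python
import random
from collections import Counter


def simulate_collapse(num_species=50, sample_size=100, generations=10, seed=0):
    """Count how many distinct 'species' survive each round of self-training."""
    rng = random.Random(seed)
    species = [f"species_{i}" for i in range(num_species)]
    # Zipf-like weights: a few common species and a long tail of rare ones.
    weights = [1.0 / (rank + 1) for rank in range(num_species)]
    surviving = [num_species]  # generation 0 knows every species

    for _ in range(generations):
        # The current model "generates" a finite corpus...
        sample = rng.choices(species, weights=weights, k=sample_size)
        counts = Counter(sample)
        # ...and the next model is fit only on that corpus.
        weights = [counts[name] for name in species]
        surviving.append(sum(1 for w in weights if w > 0))

    return surviving


if __name__ == "__main__":
    print(simulate_collapse())
```

A species that is never sampled gets a weight of zero and cannot reappear, so the number of surviving species can only stay flat or shrink from one generation to the next. The tail is lost first, which is the toy version of the variance shrinking.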

Part 3: Deep Dive – "The Knowledge Collapse" Paper

"We are automating the 'doing' but we are at risk of forgetting the 'knowing'."

Part 4: The Junior Developer Crisis

Stack Overflow wasn't just a database; it was a school. By asking questions and getting corrected by seniors, juniors learned how to think. Now, juniors just accept the Copilot output. They are "Tab-Completers."

The Gap: If juniors never struggle through the "Why is this broken?" phase, they never build the mental models required to become Seniors. In 5 years, we might have a massive shortage of Senior Engineers who actually understand how the machine works.

Timeline: The Death of the Developer Web

2008: Stack Overflow launches. The "Golden Age" begins.

2016: The "Eternal September". Quality dips. SO becomes hostile to newbies ("Duplicate!").

2021: GitHub Copilot launches. The beginning of the end.

2023: GPT-4 launches. Traffic takes a cliff dive (-40%).

2024: Google partners with SO. The "Open Web" becomes "Authorized Data".

2025 (Prediction): Most open forums go "Login Only" to block scrapers. The web goes dark.

Part 5: The Pivot (Authorized Data)

Stack Overflow knows it is dying. That's why they launched OverflowAI and signed a massive data-licensing deal with Google. The future of AI training is not "Scraping the Web." It is "Buying the Web." Companies like Reddit, Stack Overflow, and X (Twitter) are closing their APIs. If you want their data to train your model, you must pay millions. The era of the "Open Web" as a free AI training ground is over.
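Short of a login wall or a paid API, one signal sites already use to opt out of AI crawling is a robots.txt rule aimed at a specific crawler. The sketch below uses Python's standard `urllib.robotparser` to show how such a rule reads from the crawler's side. The robots.txt content, the example.com URL, and the `is_allowed` helper are hypothetical; `GPTBot` is the user agent OpenAI documents for its crawler.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block one AI crawler, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    url = "https://example.com/questions/12345"
    print("GPTBot allowed:", is_allowed("GPTBot", url))
    print("Browser allowed:", is_allowed("Mozilla/5.0", url))
```

Keep in mind that robots.txt is advisory: it only works if the crawler chooses to honor it, which may be part of why sites are reaching for login walls and licensing contracts instead.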

Part 6: Expert Interview

Topic: The Burnout of the Maintainer

Guest: "J.D.", Maintainer of a Top 100 npm package.

Interviewer: How has your life changed since Copilot?

J.D.: It's a nightmare. I used to get 10 issues a week. Now I get 100. And they are low-quality. People paste my error log into ChatGPT, it hallucinates a library method that doesn't exist, and then they open an Issue on my repo complaining that 'method X is missing'. I spend all my time closing garbage tickets generated by AI.

Interviewer: Will you quit?

J.D.: I'm close. The 'Social Contract' of Open Source is broken. I gave you free code; you gave me bug reports or gratitude. Now, people give me AI spam. If this continues, we will all just convert our repos to 'Private'.
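The failure mode J.D. describes, an issue complaining about a method that never existed, is mechanical enough that part of the triage could plausibly be automated. The sketch below is one hypothetical approach, not something from the interview: it scans an issue's text for `module.name` references and reports the names the module does not actually expose. Python's standard `json` module stands in for the maintainer's package, and `find_missing_methods` is an illustrative helper.

```python
import json
import re
from types import ModuleType


def find_missing_methods(issue_text: str, module: ModuleType) -> list[str]:
    """Return names referenced as `<module>.<name>` that the module lacks."""
    pattern = re.compile(rf"\b{re.escape(module.__name__)}\.([A-Za-z_]\w*)")
    missing = []
    for name in pattern.findall(issue_text):
        # Keep each unknown name once, in order of first appearance.
        if not hasattr(module, name) and name not in missing:
            missing.append(name)
    return missing


if __name__ == "__main__":
    issue = "json.parse(data) raises AttributeError, but json.loads(data) works."
    print(find_missing_methods(issue, json))
```

A bot could use a check like this to label suspect reports for a quick close, though a real version would need to handle aliases, instance methods, and differences between package versions.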

The "Forum Survival Guide" (How to be Human): If you want to survive the AI Winter on forums:

Post Context, Not Just Code: "I am building a flight simulator in Rust..." (AI rarely shares backstory).

Admit Ignorance: "I have no idea what I'm doing." (AI is always confident).

Be Opinionated: "I hate React." (AI is neutral).

Use Humor: Make a joke about node_modules density.

These are the "Shibboleths" that prove you are biological.

Part 7: Glossary

Tragedy of the Commons: When individuals acting in self-interest deplete a shared resource.

Model Collapse: The degradation of AI quality when trained on AI-generated data.

Dark Web (Knowledge): Information trapped in private silos (Slack, Discord) inaccessible to search engines.

Gift Economy: An economic system where goods/services are given without explicit agreement for immediate or future rewards (e.g., Open Source).

Dead Internet Theory: The conspiracy theory that the majority of internet activity is bots talking to bots.

Eternal September: The influx of new users who do not understand the cultural norms of a community.

Data Poisoning: Deliberately injecting bad data into a training set to sabotage the model.

Conclusion

We are watching the enclosed shopping mall replace the public town square. It is more convenient, air-conditioned, and efficient. But something vital—the serendipity of public connection—is being lost in the process.
