In the world of Data Engineering, we are accustomed to scheduling DAGs (Directed Acyclic Graphs) based on Time (e.g., "Run at 02:00 UTC") or Events (e.g., "Run when S3 file arrives"). In 2025, we are introducing a third dimension: Carbon.

For non-critical ETL jobs—like re-indexing search clusters or training embeddings—it doesn't matter if the job finishes at 2 AM or 6 AM. This flexibility allows us to Time-Shift workloads to align with the availability of renewable energy.

The Logic of Time-Shifting

Solar Curve: Energy is often cleanest around noon when solar is peaking.

Wind Curve: In some regions (like the US Midwest or North Sea), energy is cleanest at night when wind creates a surplus.

The Strategy: Instead of a static start time, we define a Time Window (e.g., "Run anytime between 12 AM and 8 AM") and let the scheduler pick the greenest hour.
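
To make the window idea concrete, here is a minimal sketch of how a scheduler might pick the greenest start time. The function name `pick_greenest_start`, the shape of the `forecast` input (hourly datetimes mapped to gCO2/kWh), and the sample numbers are all assumptions for illustration, not part of any particular forecast API.

```python
from datetime import datetime, timedelta


def pick_greenest_start(forecast, window_start, window_end, job_hours=1):
    """Return (start, avg_intensity) for the cleanest slot inside the window.

    `forecast` maps hourly datetimes to gCO2/kWh (hypothetical input shape).
    The job must finish by `window_end`, so only starts that leave room for
    `job_hours` full hours are considered.
    """
    best_start, best_avg = None, float("inf")
    start = window_start
    while start + timedelta(hours=job_hours) <= window_end:
        hours = [start + timedelta(hours=i) for i in range(job_hours)]
        # Skip candidate slots with missing forecast data.
        if all(h in forecast for h in hours):
            avg = sum(forecast[h] for h in hours) / job_hours
            if avg < best_avg:
                best_start, best_avg = start, avg
        start += timedelta(hours=1)
    return best_start, best_avg


# Made-up forecast for 12 AM to 8 AM, in gCO2/kWh.
midnight = datetime(2025, 1, 1, 0, 0)
intensities = [420, 390, 310, 250, 240, 300, 380, 450]
forecast = {midnight + timedelta(hours=i): v for i, v in enumerate(intensities)}

start, avg = pick_greenest_start(
    forecast, midnight, midnight + timedelta(hours=8), job_hours=2
)
print(start.strftime("%H:%M"), avg)  # 03:00 245.0
```

Averaging over the job's duration, rather than looking only at the first hour, keeps a multi-hour job from starting in a clean hour and then running into a dirty one.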

Implementation in Airflow

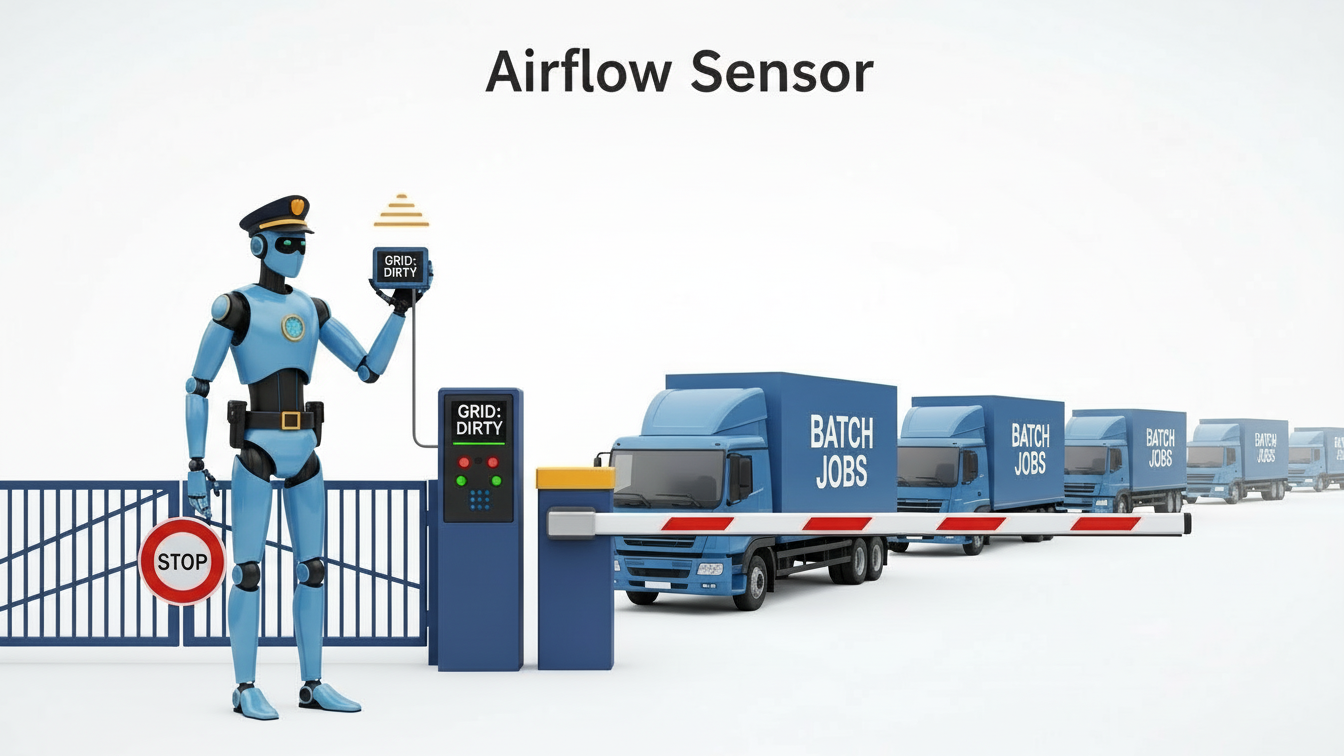

You can implement a Carbon Sensor in Apache Airflow. This sensor polls a carbon forecast API and lets the DAG proceed only when the current intensity is lower than the intensity forecast for the next hour.

Python

    from airflow.sensors.base import BaseSensorOperator

    # Assumes get_current_intensity lives alongside get_forecast in carbon_sdk.
    from carbon_sdk import get_current_intensity, get_forecast


    class CarbonWindowSensor(BaseSensorOperator):
        def poke(self, context):
            region = "us-west-2"
            current_intensity = get_current_intensity(region)
            forecast = get_forecast(region, hours=1)

            # If current is cleaner than the forecast, run NOW.
            # If forecast says it will be cleaner in 1 hour, wait.
            if current_intensity < min(forecast):
                return True

            self.log.info("Waiting for cleaner energy...")
            return False
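
A sensor that only compares current intensity with the forecast can keep waiting past the end of the allowed window. One way to guard against that is to fold the window's end into the decision. The sketch below is a plain function with no Airflow dependency; the name `should_run_now`, its parameters, and the choice to run when no forecast is available are assumptions for illustration.

```python
from datetime import datetime


def should_run_now(current, forecast, now, latest_start):
    """Decide whether a carbon-aware sensor should release the job.

    current:      current grid intensity in gCO2/kWh
    forecast:     forecast intensities for the upcoming hour(s)
    now:          current time
    latest_start: end of the allowed window (hypothetical parameter)
    """
    if now >= latest_start:
        # The window is closing: run regardless so the job is never starved.
        return True
    if not forecast:
        # Assumed policy: with no forecast data, run rather than wait blindly.
        return True
    return current < min(forecast)


latest = datetime(2025, 1, 1, 8, 0)
print(should_run_now(300, [250], datetime(2025, 1, 1, 2, 0), latest))  # False
print(should_run_now(300, [250], datetime(2025, 1, 1, 8, 0), latest))  # True
```

Airflow sensors also accept a `timeout` argument, but a timeout fails the task (or skips it with `soft_fail=True`) instead of running it, so a deadline check inside `poke` is the more direct way to express "run by 8 AM at the latest."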

Implementation in Dagster

Dagster's asset-based orchestration is a natural fit for this. You can define a loose FreshnessPolicy (e.g., "fresh within 24 hours") and use a custom resource, here called CarbonResource, to trigger materialization only during low-carbon windows.

Measuring the Impact

By shifting a 10-hour Spark job from a high-intensity window (450 gCO2/kWh) to a low-intensity window (150 gCO2/kWh), you cut that job's carbon footprint by about 67% (two-thirds), assuming it draws the same energy in both windows, without changing a single line of Spark code or reducing the dataset size.
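
The arithmetic behind that figure is emissions = energy × grid intensity. A short worked example follows; the 500 kWh energy figure is an assumed value chosen only to make the numbers concrete, and the percentage reduction does not depend on it.

```python
def emissions_kg(energy_kwh, intensity_g_per_kwh):
    """Emissions in kg CO2 for a given energy use and grid intensity."""
    return energy_kwh * intensity_g_per_kwh / 1000.0


def reduction_pct(high_intensity, low_intensity):
    """Percentage saved by moving the same energy use to a cleaner window."""
    return (high_intensity - low_intensity) / high_intensity * 100.0


energy_kwh = 500.0  # assumed total energy for the 10-hour job

before = emissions_kg(energy_kwh, 450)  # 225.0 kg CO2
after = emissions_kg(energy_kwh, 150)   # 75.0 kg CO2

print(before, after, round(reduction_pct(450, 150), 1))  # 225.0 75.0 66.7
```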

Verdict: Time-shifting is the lowest-hanging fruit in GreenOps. It requires no hardware changes, only smarter scheduling logic.
