1. Executive Synthesis

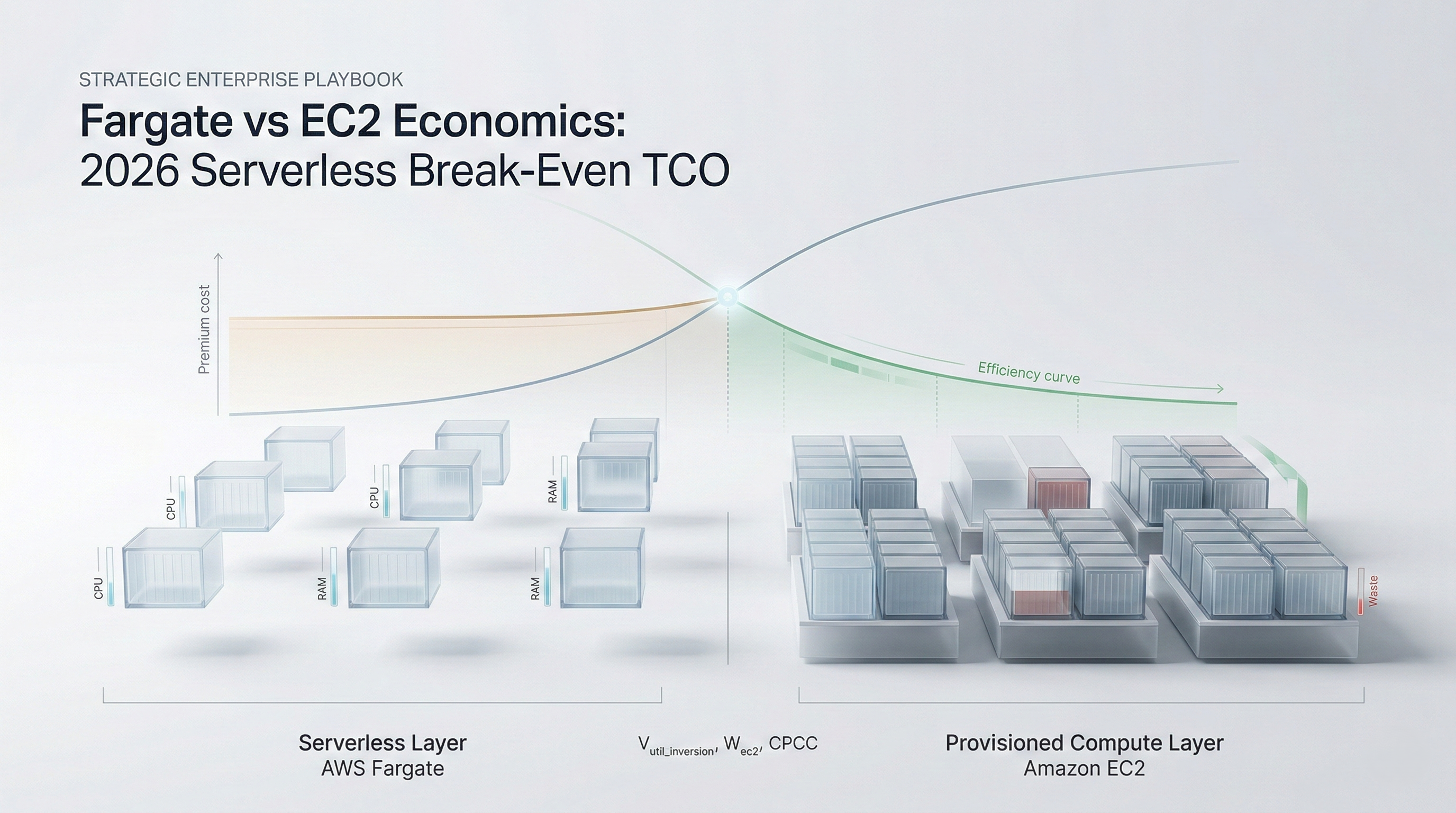

The enterprise adoption of serverless container compute—predominantly AWS Fargate—was originally driven by a compelling operational narrative: abstract away the undifferentiated heavy lifting of managing EC2 nodes, eliminate the complexity of cluster autoscaling, and pay exclusively for the compute requested by the pod. However, as enterprise architectures mature into massive-scale, continuous processing environments by 2026, the financial reality of this abstraction has become a severe liability. Fargate carries a massive baseline premium per vCPU and GB of RAM compared to standard EC2 instances.

When organizations default to serverless compute without mathematical justification, they fall into the "Serverless Premium Trap." Fargate is economically optimal for highly volatile, burstable workloads, zero-to-scale batch processing, and isolated CI/CD runners. But when utilized for steady-state, continuous SaaS web-serving layers or baseline AI inference APIs, the Fargate premium compounds, resulting in infrastructure bills that are 30% to 50% higher than a well-architected EC2 cluster managed by modern autoscalers like Karpenter.

This playbook establishes the quantitative discipline required to navigate the Fargate vs. EC2 dichotomy. It introduces the Serverless Premium Threshold (SPT) Model, forcing enterprise architects to mathematically evaluate the utilization consistency of a workload before dictating its compute backend. The operational landscape of 2026 demands that enterprises leverage "Bin-Packing Arbitrage." By deploying Karpenter to aggressively bin-pack pods onto EC2 Spot and Graviton instances in milliseconds, organizations can achieve the operational simplicity of serverless while capturing the massive financial discounts of raw, provisioned compute.

FinOps leaders must establish rigid governance boundaries: workloads exhibiting high continuous utilization must be mathematically barred from executing on Fargate. By implementing dynamic infrastructure routing—where the baseline load operates on reserved EC2 instances and only the unpredictable, spiky traffic spills over into serverless Fargate—enterprises can optimize their unit economics, eliminate the serverless premium, and relentlessly defend their gross margins.

2. Market Gap & Search Intent Failure Analysis

Search intent surrounding "Fargate vs EC2 cost comparison" is plagued by oversimplified, static calculators. Standard industry blogs compare the hourly price of one Fargate vCPU against one EC2 vCPU, note the 20-30% premium, and conclude that EC2 is cheaper. This analysis is functionally useless because it ignores the reality of EC2 waste.

The critical market gap is the failure to model the "Waste Factor" inherent in provisioned compute. An EC2 instance is rarely utilized at 100%. If an enterprise provisions a 16-vCPU instance but the scheduled pods only request 9 vCPUs, the organization is paying for 7 idle vCPUs. Analysts fail to provide the equations that calculate the exact utilization threshold where the waste on an EC2 instance becomes more expensive than the premium of a perfectly sized Fargate task. Furthermore, literature ignores the radical shift introduced by modern node provisioners like Karpenter, which drastically reduce EC2 waste by dynamically launching custom-sized instances perfectly matched to the pending pod requirements. This playbook fills that gap by providing the dynamic break-even formulas required to model actual cluster mechanics, not just static list prices.

3. Core Strategic Framework

To optimize container compute spend, enterprises must execute the Serverless Premium Threshold (SPT) Model. This framework mandates that compute placement is driven by mathematical utilization profiles rather than engineering convenience.

Implementation Protocol:

Workload Volatility Profiling: Analyze the target workload over a 14-day period to calculate its Peak-to-Trough Ratio (PTR) and its baseline continuous utilization percentage.

Calculate the EC2 Waste Factor ($W_{ec2}$): Model the projected bin-packing efficiency of the workload if deployed on an EC2 Auto Scaling Group.

Execute the Break-Even Algorithm: Determine the $V_{util\_inversion}$ to identify if the workload operates above or below the serverless break-even point.

Decision Matrix:

If Continuous Utilization $> 65\%$ AND workload is stateless, deploy on EC2 utilizing Karpenter and Spot Instances.

If Peak-to-Trough Ratio $> 4.0$ (highly volatile) OR workload requires immediate zero-to-scale capabilities, mandate deployment on AWS Fargate.

If the workload represents the steady-state baseline, reserve it on EC2; route overflow scaling spikes directly to Fargate using AWS ECS Capacity Providers or EKS Fargate Profiles.

4. Financial Modeling Layer (MANDATORY)

The deployment of serverless compute must be governed by strict financial equations to prevent margin erosion.

Core Equations

1. Fargate Baseline Premium ($P_{fargate}$):

Calculates the raw cost of executing a container on Fargate based on requested resources.

$$P_{fargate} = \sum_{i=1}^{n} \left( (V_{cpu\_req} \times R_{fargate\_cpu}) + (M_{ram\_req} \times R_{fargate\_ram}) \right) \times T_{hrs}$$

Where:

$V_{cpu\_req}$ = Number of vCPUs explicitly requested by the pod.

$R_{fargate\_cpu}$ = Hourly rate per Fargate vCPU.

$M_{ram\_req}$ = GB of RAM requested.

$R_{fargate\_ram}$ = Hourly rate per GB of Fargate RAM.

2. EC2 Waste Factor ($W_{ec2}$):

Quantifies the financial loss of unallocated space (poor bin-packing) on provisioned EC2 instances.

$$W_{ec2} = \left( 1 - \frac{U_{allocated\_cpu}}{U_{provisioned\_cpu}} \right) \times (C_{ec2\_hourly} \times T_{hrs})$$

Where:

$U_{allocated\_cpu}$ = Total vCPUs requested by all scheduled pods.

$U_{provisioned\_cpu}$ = Total vCPUs physically available on the underlying EC2 nodes.

$C_{ec2\_hourly}$ = Blended hourly cost of the EC2 instances.

3. Serverless ROI Inversion ($ROI_{inv}$):

Identifies the point at which the operational savings of not managing EC2 nodes are eclipsed by the Fargate compute premium.

$$ROI_{inv} = \frac{(P_{fargate\_annual} - C_{ec2\_annual\_optimized}) - C_{sre\_labor\_avoided}}{Total\_Compute\_Budget}$$

A) Sensitivity Analysis Table

This table models the monthly TCO of running 1,000 vCPUs of compute based on the cluster's bin-packing efficiency (EC2 Waste) versus the static Fargate premium.

Variable (EC2 Bin-Packing Efficiency) | EC2 Fleet Cost (Monthly) | Fargate Fleet Cost (Monthly) | Mathematical Winner |

Poor Efficiency (40% Utilized) | $85,000 (Paying for heavy waste) | $40,000 | Fargate (Saves $45k) |

Average Efficiency (65% Utilized) | $52,000 (Standard waste) | $40,000 | Fargate (Saves $12k) |

High Efficiency (90% Karpenter) | $25,000 (Optimized packing) | $40,000 | EC2 (Saves $15k) |

High Efficiency + Spot Instances | $9,000 (Maximum optimization) | $13,000 (Fargate Spot) | EC2 Spot (Saves $4k) |

Decision Threshold: If an engineering team cannot maintain an EC2 bin-packing efficiency greater than 65%, they are mathematically required to utilize Fargate. If they deploy Karpenter and hit 90% efficiency, Fargate becomes a financial penalty.

B) Break-Even Formula

The Utilization Inversion Point ($V_{util\_inversion}$) calculates the exact steady-state utilization percentage where an EC2 instance becomes cheaper than Fargate.

$$V_{util\_inversion} = \left( \frac{R_{ec2\_vCPU\_hr}}{R_{fargate\_vCPU\_hr}} \right) \times 100$$

Numerical Example: If a c6g.large EC2 instance costs $0.068/hr (2 vCPU, so $0.034 per vCPU/hr) and Fargate costs $0.040 per vCPU/hr. The Break-Even is ($0.034 / $0.040) * 100 = 85%. If your pods utilize more than 85% of the EC2 node space continuously, EC2 is cheaper. If your workloads are volatile and leave the EC2 node half empty (50% utilization), Fargate is drastically cheaper.

C) Probability-Weighted Risk Table

Quantifying the financial risks associated with serverless container routing.

Scenario | Probability | Financial Impact | Weighted Exposure |

Fargate IP Address Exhaustion (VPC limits) | 8.0% | $15,000 (Deployment failure) | $1,200 per event |

Scale-Up Latency (Fargate cold starts) | 25.0% | $8,000 (SLA violation/timeout) | $2,000 per event |

Over-Provisioned Fargate Task (Memory bloat) | 60.0% | $4,000 (Wasted premium spend) | $2,400 per month |

EC2 Spot Preemption (No capacity) | 12.0% | $10,000 (Service degradation) | $1,200 per event |

D) Cost-per-Unit Model

The central unit of measurement is the Cost Per Compute Cycle ($CPCC$):

$$CPCC = \frac{Total\_Compute\_Spend\_(EC2 + Fargate)}{Total\_vCPU\_Hours\_Actually\_Consumed\_by\_Application}$$

Threshold: If the $CPCC$ exceeds the raw EC2 On-Demand rate by more than 15%, the FinOps team must intervene. This indicates the enterprise is either suffering from massive EC2 waste ($W_{ec2}$) or over-utilizing the Fargate premium for baseline workloads.

5. Operational Architecture Integration

Karpenter Integration on EKS:

To defeat the Fargate premium, the enterprise must achieve near-perfect EC2 bin-packing. Traditional Kubernetes Cluster Autoscaler operates too slowly and is bound by rigid Auto Scaling Groups. By deploying Karpenter, the architecture fundamentally shifts. When a pod is scheduled, Karpenter evaluates the pod's exact vCPU/RAM requests, bypasses ASGs entirely, and instantly provisions a custom-fit EC2 instance directly from the EC2 Fleet API. By utilizing Graviton processors and mixing instance types seamlessly, Karpenter achieves >85% bin-packing efficiency, mathematically eliminating Fargate's only financial advantage.

Dynamic Overflow Architecture (Capacity Providers):

The optimal 2026 architecture is a hybrid routing model. FinOps architects must configure AWS ECS Capacity Providers or EKS Fargate Profiles to manage the Peak-to-Trough variance. The steady-state baseline load (e.g., the 1,000 pods required to run the application 24/7) is strictly scheduled onto highly discounted, 3-year Reserved EC2 Graviton instances. Only when an unpredictable traffic spike occurs (e.g., a viral marketing event) and the EC2 reservation is saturated, does the autoscaler spill the excess pods onto Fargate. This captures the zero-maintenance scaling of serverless without paying the premium on the baseline load.

Right-Sizing Fargate Profiles:

Because Fargate bills purely on requested resources, requesting 4 vCPUs for a pod that only uses 0.5 vCPUs is mathematically disastrous; the enterprise pays the Fargate premium on 3.5 idle vCPUs. Architecture must include mandatory automated right-sizing tools (e.g., Vertical Pod Autoscaler telemetry) that continuously scan Fargate definitions and shrink the requested vCPU and Memory directly down to the P95 usage threshold.

6. Failure Scenarios

Scenario 1: The 24/7 Fargate Trap

Breakdown: An engineering team defaults all microservices to Fargate to avoid managing EC2 nodes. They deploy 500 tasks that run continuously, 24/7, processing a steady stream of background queue data with zero volatility.

Financial Exposure: A structural 40% compute premium (roughly $50,000+ excess OpEx annually) paid for serverless elasticity on a workload that literally never scales down.

Governance Prevention Layer: FinOps Gateways. Any Terraform or Helm deployment requesting Fargate execution for workloads lacking an active Horizontal Pod Autoscaler (HPA) or possessing a minimum replica count $> 10$ is automatically rejected by the CI/CD pipeline, forcing deployment to EC2.

Scenario 2: The DaemonSet Sprawl on Fargate

Breakdown: In EKS, Fargate does not support traditional DaemonSets (background agents like Datadog, Splunk, or FluentBit that run once per node). To get observability, the team injects these agents as sidecar containers into every single Fargate pod.

Financial Exposure: If an enterprise runs 2,000 Fargate pods, they are now paying the Fargate premium to run 2,000 copies of a Datadog agent, resulting in massive, hidden compute bloat and API thrashing.

Governance Prevention Layer: Architectural Mandate. Workloads requiring heavy sidecar observability architectures are mathematically barred from Fargate. They must be routed to EC2 where a single DaemonSet can monitor 50 pods on a single node efficiently.

Scenario 3: The Rightsizing Illusion

Breakdown: Engineers attempt to save money on EC2 by aggressively shrinking pod CPU requests to fit more pods on a node. However, they shrink the limits too far, causing severe CPU throttling. To fix the application latency, they migrate the workload to Fargate with massive CPU requests, "solving" the latency but destroying the budget.

Financial Exposure: Exchanging an application performance problem for a severe financial hemorrhage, masking poor application profiling behind expensive serverless compute.

Governance Prevention Layer: Implementation of strict Application Performance Monitoring (APM) correlated with cost data. Any migration from EC2 to Fargate requires a documented FinOps review proving that the latency was caused by node-level noisy neighbor issues, not simple pod misconfiguration.

7. Board-Level Translation Layer

EBITDA Delta Modeling: Shifting steady-state, predictable workloads from Fargate back to highly optimized EC2 Graviton instances drives an immediate 30-45% reduction in compute COGS. For a SaaS platform spending $5M annually on Fargate, executing the SPT framework recovers $1.5M to $2.25M directly into EBITDA without altering product functionality.

Gross Margin Defense: The board must understand that Serverless is an operational tool, not a financial strategy. Defaulting to serverless universally compresses gross margins. By strictly reserving serverless for spiky, unpredictable traffic, the enterprise insulates its profit margins from the inherent premiums of managed compute.

Capital Allocation Signal: The decision to utilize Fargate signals a trade-off: spending OpEx (higher cloud bills) to save CapEx (engineering salaries). The CFO must evaluate if the engineering hours "saved" by not managing Karpenter/EC2 are actually generating new product revenue that exceeds the Fargate premium.

Risk-Adjusted ROI Formula:

$$ROI_{spt} = \frac{\text{OpEx Savings from EC2 Migration}}{\text{Engineering CapEx to Implement Karpenter} + C_{sre\_maintenance}}$$

8. Data Visualization Suggestions

A dual-line chart plotting continuous utilization percentage. The Fargate cost line is flat; the EC2 cost line starts high (due to waste) but drops sharply as utilization increases, crossing Fargate at the Break-Even point.

A visual load graph showing steady traffic in blue (handled by a solid block of EC2 Reserved Instances) and sudden traffic spikes in red (spilling over into ephemeral Fargate tasks).

A bar chart comparing the cost of running observability agents via a single EC2 DaemonSet versus injecting them as sidecars into 1,000 Fargate pods.

A visual representation of an EC2 node "box." Before Karpenter: the box is 50% full of pods (high waste). After Karpenter: the box is perfectly sized to fit the exact pods requested (zero waste).

A dashboard gauge showing the $CPCC$. Green indicates optimized EC2 bin-packing; Red indicates heavy serverless premium saturation.

9. Why Analyst-Style Summaries Fail at Financial Precision

When analysts review cloud architectures, they frequently rely on narrative truisms such as, "Serverless architectures like Fargate reduce operational overhead and lower total cost of ownership." This is a dangerous, generalized half-truth.

It fails because it treats "Total Cost of Ownership" as a qualitative feeling rather than a mathematical equation. Analysts do not calculate the $V_{util\_inversion}$ point. If a FinOps director follows the analyst's narrative and deploys a massive, steady-state AI inference API on Fargate, they will inadvertently incinerate their infrastructure budget. Precision modeling demands that we strip away the marketing narrative of "no servers to manage" and explicitly calculate the EC2 Waste Factor ($W_{ec2}$). By proving mathematically that Karpenter can drive EC2 bin-packing to 90%, the equation-backed model demonstrates that the analyst's assertion is financially invalid for high-utilization enterprise workloads. Financial engineering relies on math, not convenience.

10. Strategic Conclusion

AWS Fargate and serverless container compute represent a remarkable engineering achievement, offering instantaneous scale and profound operational simplicity. However, in the 2026 enterprise landscape, treating operational simplicity as a mandate that supersedes unit economics is a path to financial ruin. Fargate is not a default compute tier; it is a specialized tool engineered for volatility, designed to absorb unpredictable scaling events and zero-to-scale batch jobs.

When enterprises misallocate steady-state, predictable workloads onto Fargate, they pay a severe, continuous premium for elasticity they do not use. To execute mature FinOps, infrastructure leaders must adopt the Serverless Premium Threshold (SPT) Model. They must relentlessly profile workload volatility and enforce a mathematical break-even point. If a workload's continuous utilization exceeds the inversion point, FinOps must mandate its migration to EC2.

This transition is entirely dependent on modernizing the EC2 provisioning layer. Reverting to clunky, slow-moving Auto Scaling Groups is a failure. Enterprises must deploy Karpenter to achieve the lightning-fast, highly efficient bin-packing required to make EC2 mathematically superior to Fargate. By architecting a hybrid system—relying on tightly packed, discounted EC2 instances for the baseline and utilizing Fargate strictly as an overflow safety valve—the enterprise captures the exact operational benefits of serverless while permanently defending its gross margins from the serverless premium.

11. Implementation Readiness Checklist

Execute the Volatility Audit: Run a 30-day query across your container fleet to identify all workloads operating with a Peak-to-Trough ratio of $< 1.5$ (highly stable).

Calculate the Serverless Premium: Quantify the exact dollar amount currently being spent on Fargate for steady-state workloads that could be running on 3-year Reserved EC2 instances.

Deploy Karpenter (EKS/ECS): Replace legacy Cluster Autoscaler with Karpenter to drastically improve EC2 bin-packing efficiency and node spin-up speed.

Implement Hybrid Capacity Providers: Configure infrastructure-as-code to utilize EC2 ASGs as the primary capacity provider, routing traffic to Fargate only when EC2 scaling limits are temporarily exhausted.

Ban DaemonSet Workloads from Serverless: Identify any Fargate workloads running heavy sidecars (monitoring, service meshes) and aggressively migrate them back to EC2 node-level DaemonSets.

Enforce Fargate Right-Sizing: Deploy automated VPA (Vertical Pod Autoscaler) pipelines to continuously shrink Fargate vCPU and Memory requests down to exact P95 usage thresholds.

Evaluate Fargate Spot: For purely stateless, fault-tolerant batch jobs that must run on Fargate, mandate the use of Fargate Spot capacity to collapse the premium by up to 70%.

Block 24/7 Fargate Defaults: Implement CI/CD pipeline checks that physically block deployments from targeting Fargate if the replica count is static and lacks auto-scaling triggers.

Standardize on Graviton: Whether on EC2 or Fargate, force all compatible container workloads to utilize ARM-based Graviton architectures to secure an immediate 20% price-performance gain.

Establish the FinOps Utilization Gateway: Require engineering teams to submit empirical utilization data ($W_{ec2}$ calculations) before FinOps approves any massive-scale serverless deployments.

Stop guessing where your Kubernetes budget is going. Schedule a demo here to explore Kubernetes cost monitoring with Cloud Atler.