1. Executive Synthesis

The era of unrestricted, default public cloud migration ended when the scale of AI inference and continuous stateful processing fractured the unit economics of hyperscaler compute. By 2026, the strategic enterprise mandate is neither "cloud-first" nor "datacenter-only"; it is liquid workload placement. This requires a mature Hybrid FinOps Architecture, where highly predictable, massive-scale workloads are actively repatriated to leased bare metal or owned data centers to capture a 40-60% reduction in compute premiums, while volatile, ephemeral workloads remain tightly coupled to public cloud Auto Scaling Groups.

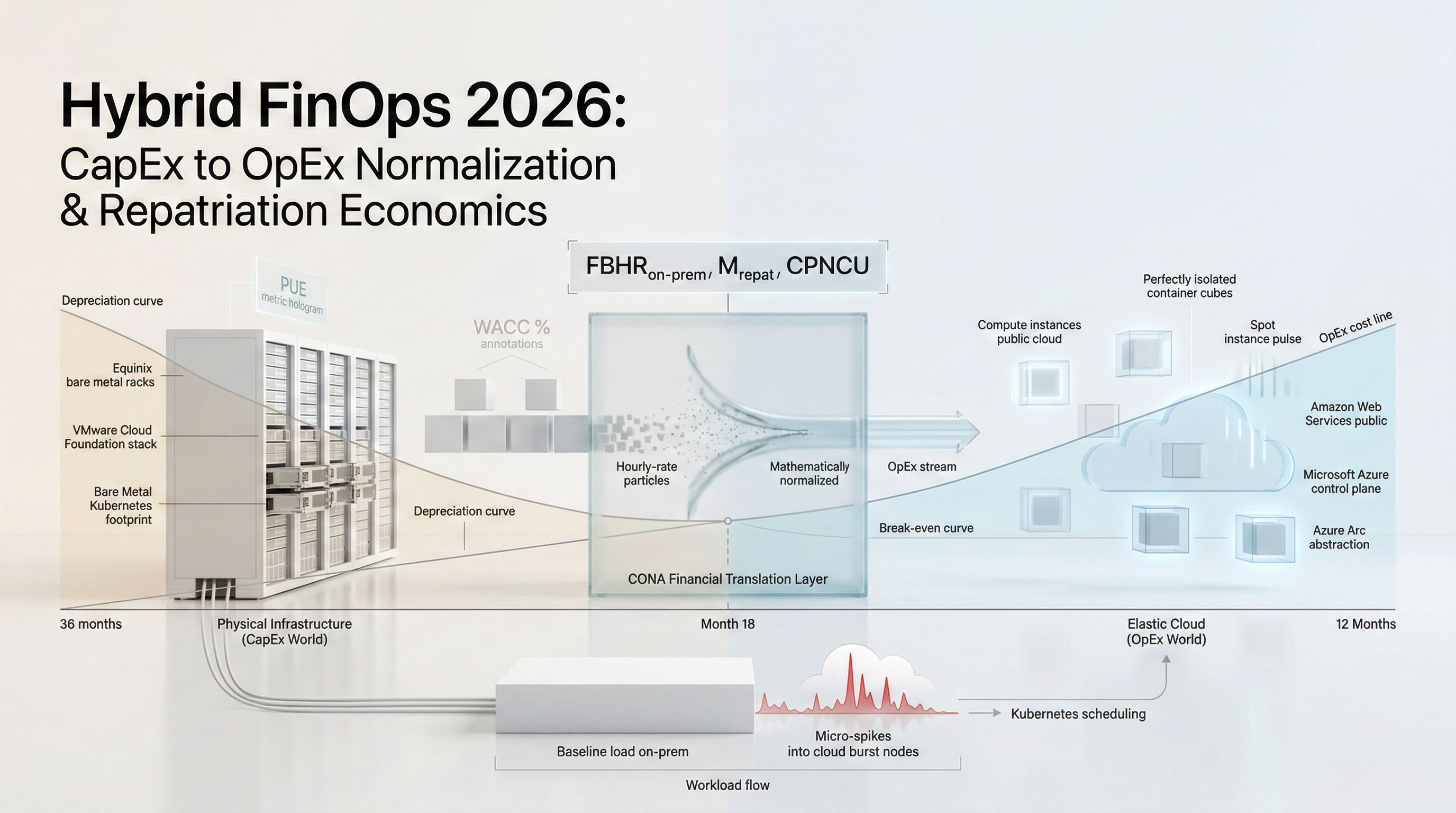

However, operating a hybrid architecture introduces a catastrophic blind spot for traditional FinOps teams: the inability to mathematically compare the Capital Expenditure (CapEx) of a physical GPU rack against the Operational Expenditure (OpEx) of an AWS p5.48xlarge instance. Hyperscaler billing engines output clean, normalized hourly rates. Data centers output complex depreciation schedules, three-year commercial real estate leases, distinct power/cooling utility bills, and varied hardware refresh cycles. When an Enterprise Architect attempts to compare these two environments without a mathematically rigorous normalization framework, the resulting decisions are universally flawed, leading to stranded capital or massive, unnecessary cloud premiums.

A successful 2026 Hybrid FinOps strategy demands the implementation of a unified accounting fabric. Enterprises must synthesize facility power usage effectiveness (PUE), hardware depreciation velocity, data egress penalties, and network cross-connect leases into a single, dynamic metric: the Fully Burdened Hourly Rate (FBHR) of on-premises compute. Once this metric is established, it must be programmatically injected into the same centralized FinOps database (e.g., the FOCUS 1.2 data lake) alongside public cloud telemetry.

This playbook provides the specific mathematical models required to normalize CapEx and OpEx at scale. It forces the enterprise to quantify the "Agility Penalty" of owning hardware and the "Premium Tax" of renting it. By establishing rigid break-even thresholds based on workload utilization consistency and data gravity, infrastructure leaders can dynamically route Kubernetes clusters across the geopolitical and physical boundaries of the enterprise, aggressively defending EBITDA against the compounding structural costs of the 2026 hyperscaler oligopoly.

2. Market Gap & Search Intent Failure Analysis

Enterprise research targeting "Cloud Repatriation ROI" or "Hybrid FinOps" frequently yields vendor-sponsored whitepapers that suffer from catastrophic confirmation bias. Hardware vendors present simplistic TCO calculators that compare the purchase price of a server against three years of public cloud On-Demand pricing, predictably concluding that hardware is 80% cheaper. Conversely, cloud providers publish frameworks that artificially inflate data center management costs while assuming perfect cloud elasticity.

The structural market gap is the total failure to model the Cost of Capital and Hardware Depreciation Velocity. Standard analyst summaries ignore the reality that purchasing a $3M cluster of AI accelerators today means locking capital into silicon that will be functionally obsolete in 18 months due to the rapid release cycles of NVIDIA and AMD. They fail to account for the Weighted Average Cost of Capital (WACC)—the money an enterprise loses by tying up cash in physical servers rather than investing it in high-yield product engineering. This playbook bridges this gap by introducing the CapEx-OpEx Normalization Architecture (CONA), which strictly integrates corporate finance mechanics (WACC, straight-line depreciation, network peering costs) into the engineering infrastructure decision matrix.

3. Core Strategic Framework

The enterprise must adopt the CapEx-OpEx Normalization Architecture (CONA). This framework acts as a financial translation layer, converting the discrete, lump-sum purchases of physical infrastructure and the fixed costs of facility management into an executable, continuous hourly rate that can be directly compared against cloud spot and reserved pricing.

Implementation Protocol:

Facility Baslining: Calculate the baseline operational cost of the colocation or private facility, isolating the Power Usage Effectiveness (PUE) and network cross-connect lease costs per rack.

Hardware Capitalization Translation: Apply the corporate WACC and a strict 36-month depreciation schedule to the physical servers, switches, and storage arrays to generate a monthly CapEx burden.

Hourly Normalization: Divide the combined monthly CapEx burden and facility OpEx by 730 hours to establish the baseline hardware compute rate.

Execution Decision Matrix:

If workload Continuous Utilization $> 80\%$ AND $FBHR_{on-prem}$ is $> 25\%$ cheaper than Cloud Reserved Instances, mandate repatriation to bare metal.

If workload requires distinct, latest-generation silicon (e.g., Blackwell GPUs) that the enterprise cannot amortize over 36 months, mandate public cloud deployment regardless of the hourly premium.

If the Data Egress requirement from On-Premises to Public Cloud exceeds 10 TB/day, deploy an AWS Direct Connect / ExpressRoute and factor the port-hour cost into the $FBHR_{on-prem}$ before executing arbitrage.

4. Financial Modeling Layer (MANDATORY)

The mathematical normalization of hardware and cloud requires strict equations to govern workload placement.

Core Equations

1. Fully Burdened Hourly Rate ($FBHR_{on-prem}$):

This equation translates static data center costs into a dynamic hourly metric equivalent to a cloud invoice line item.

$$FBHR_{on-prem} = \frac{\left( \frac{CapEx_{infra}}{D_{months}} \right) + OpEx_{power} + OpEx_{facility} + OpEx_{labor\_alloc}}{N_{usable\_compute\_nodes} \times 730}$$

Where:

$CapEx_{infra}$ = Total capital cost of servers, networking gear, and initial setup.

$D_{months}$ = Depreciation schedule (strictly 36 months for 2026 enterprise standards).

$OpEx_{power}$ = Monthly cost of power and cooling based on rack kW draw and facility PUE.

$OpEx_{facility}$ = Monthly colocation lease, cross-connect fees, and physical security.

$OpEx_{labor\_alloc}$ = Monthly cost of hardware SREs (Smart Hands) allocated to the specific cluster.

2. Repatriation Arbitrage Margin ($M_{repat}$):

Calculates the true financial advantage of moving a workload out of the public cloud, explicitly penalizing the hardware for the Weighted Average Cost of Capital.

$$M_{repat} = \left( C_{cloud\_3yr\_reserved} \right) - \left( (FBHR_{on-prem} \times 730 \times 36 \times N_{nodes}) \times (1 + WACC) \right)$$

Where:

$C_{cloud\_3yr\_reserved}$ = Total 3-year cost of the equivalent cloud instances under maximum discount.

$WACC$ = The enterprise's Weighted Average Cost of Capital (e.g., 0.08 or 8%).

3. Hardware Obsolescence Penalty ($P_{obsolescence}$):

Quantifies the financial risk that owned hardware will become insufficiently performant before the 36-month depreciation schedule completes, requiring premature replacement.

$$P_{obsolescence} = \left( \frac{T_{remaining\_months}}{36} \right) \times CapEx_{infra} \times Probability_{tech\_shift}$$

A) Sensitivity Analysis Table

This table models the EBITDA impact of repatriating a 500-node continuous processing cluster based on the colocation Power Cost and the Enterprise WACC.

Variable (Power Cost kW/h) | Low WACC (5%) | Base WACC (9%) | High WACC (14%) | Repatriation Decision |

Low Power ($0.06/kWh) | +$2.8M (Highly Accretive) | +$2.1M | +$1.2M | Execute Repatriation |

Med Power ($0.12/kWh) | +$1.5M | +$800k | -$100k | Viable for Low/Base WACC |

High Power ($0.24/kWh) | -$400k (Destroying Value) | -$1.1M | -$2.5M | Block Repatriation, Stay in Cloud |

Decision Threshold: If the facility power costs exceed $0.15/kWh and the corporate WACC is high, the enterprise cannot compete with hyperscaler economies of scale; all repatriation efforts must be abandoned.

B) Break-Even Formula

The Utilization Break-Even Point ($U_{hybrid\_be}$) calculates the exact percentage of time the physical hardware must be actively running production workloads to equal the cost of on-demand public cloud elasticity.

$$U_{hybrid\_be} = \left( \frac{FBHR_{on-prem}}{Rate_{cloud\_on-demand}} \right) \times 100$$

Numerical Example: If an equivalent EC2 instance costs $2.00/hour on-demand, and the normalized $FBHR_{on-prem}$ for the bare metal server is $0.80/hour. The break-even utilization is ($0.80 / $2.00) * 100 = 40%. The on-premises hardware must run at least 40% of the month (292 hours) to be cheaper than spinning up a cloud node dynamically. If the workload only runs 20% of the month, stay in the cloud.

C) Probability-Weighted Risk Table

Quantifying the operational risks introduced by owning physical infrastructure.

Scenario | Probability | Financial Impact | Weighted Exposure |

Supply Chain Delivery Delay (Hardware) | 35.0% | $400,000 (Time-to-market loss) | $140,000 per build |

Colocation Power Outage (Beyond UPS/Generator SLA) | 1.5% | $2,500,000 (SLA breach & downtime) | $37,500 per year |

Hardware Component Failure (RMA Latency) | 12.0% | $15,000 (Degraded performance) | $1,800 per month |

Stranded Capacity (Project Cancellation) | 20.0% | $850,000 (Unused CapEx) | $170,000 per project |

D) Cost-per-Unit Model

The central unit of measurement for hybrid operations is the Cost Per Normalized Compute Unit (CPNCU):

$$CPNCU = \frac{Total\_Monthly\_Cloud\_Spend + (Total\_CapEx_{amortized} + Facility\_OpEx)}{Total\_vCPU\_Hours\_Actually\_Utilized\_Globally}$$

Threshold: If the $CPNCU$ of the on-premises footprint exceeds the $CPNCU$ of the cloud footprint for two consecutive quarters, FinOps must initiate a hardware liquidation protocol and shift the workloads back to public cloud spot instances.

5. Operational Architecture Integration

Bare Metal Kubernetes (Tanzu/Rancher/Anthos):

A hybrid architecture cannot rely on disparate deployment mechanisms. Developers must not know whether their container is running in AWS us-east-1 or an Equinix datacenter in Ashburn. The architecture requires a unified Kubernetes control plane (e.g., Google Anthos, Azure Arc, or SUSE Rancher). These platforms deploy uniform K8s clusters onto physical hardware, effectively turning bare metal servers into self-managed cloud regions. The CONA framework takes the $FBHR_{on-prem}$ of those physical nodes and injects it directly into the Kubernetes cost monitoring tool (e.g., Kubecost), allowing for identical pod-level chargeback reporting regardless of the underlying physical location.

Direct Connect & Egress Abstraction:

Repatriation fails when workloads in the datacenter need to constantly query 500 TB data lakes remaining in AWS S3, generating massive NAT Gateway and Data Transfer Out charges. Hybrid architecture mandates the deployment of dedicated, private fiber connections (AWS Direct Connect / GCP Cloud Interconnect). The cost of these 100 Gbps ports must be strictly capitalized into the $FBHR_{on-prem}$. Furthermore, enterprises must deploy caching layers (e.g., Alluxio or localized Redis) on-premises to minimize the frequency of cloud-bound API calls, strictly suppressing the network egress penalty.

Spot-Instance Spillover Architecture:

The hybrid environment must be dimensioned strictly for the baseline load. If the continuous load requires 1,000 nodes, the enterprise purchases exactly 1,000 physical nodes. The architecture handles burst traffic via "Cloud Bursting." When the bare metal Kubernetes cluster hits 95% utilization, the global ingress controller automatically routes excess API requests to a mirror Kubernetes cluster running exclusively on cheap, ephemeral Spot instances in the public cloud.

6. Failure Scenarios

Scenario 1: The Zombie Hardware Trap

Breakdown: An enterprise purchases $5M in physical servers to repatriate a massive Hadoop cluster. Nine months later, the business unit pivots to a real-time streaming architecture (Kafka/Flink) that requires fundamentally different CPU-to-Memory ratios. The $5M hardware is now technically obsolete for the enterprise's needs but carries 27 months of depreciation, rendering it "stranded capital."

Financial Exposure: $3.75M in un-amortized capital trapped in the datacenter, forcing the enterprise to simultaneously pay for new cloud compute while absorbing the depreciation hit on the P&L.

Governance Prevention Layer: The Stranded Capital Gateway. FinOps strictly prohibits CapEx purchases for workloads that lack a proven, 3-year static architectural forecast. Highly volatile or experimental product lines are mathematically banned from on-premises deployment, regardless of theoretical utilization.

Scenario 2: The Egress Hemorrhage

Breakdown: A machine learning training workload is repatriated to a private GPU cluster to save on AWS

p4dhourly rates. However, the 10 PB training dataset remains in AWS S3. The on-premises GPUs pull the 10 PB across the public internet via a standard NAT Gateway every week for training epochs.Financial Exposure: Saving $150,000 a month in GPU compute while generating $450,000 a month in AWS Data Transfer Out (Egress) fees.

Governance Prevention Layer: Network Gravity Modeling. Before any workload is approved for repatriation, the FinOps team must calculate the exact GB/hour network egress requirement. If the projected Egress Cost $> 15\%$ of the $M_{repat}$ (Arbitrage Margin), the repatriation is blocked until the data lake is physically migrated to an on-premises object storage array (e.g., MinIO/Pure Storage).

Scenario 3: The SRE CapEx Illusion

Breakdown: An enterprise calculates that hardware is cheaper than cloud but fails to account for the labor required to rack, stack, cable, patch, and replace failed RAM sticks at 3:00 AM. They attempt to run a 500-node on-premises footprint with the same two cloud engineers who previously managed a fully abstracted AWS environment. The physical infrastructure suffers continuous degradation.

Financial Exposure: SLA violations and systemic outages resulting in $500,000+ in lost revenue per month due to hardware neglect.

Governance Prevention Layer: Mandatory Labor Capitalization. The $FBHR_{on-prem}$ equation enforces the inclusion of $OpEx_{labor\_alloc}$. FinOps must mandate that every 100 physical nodes requires the budgeting of 0.5 dedicated Hardware SRE FTEs. If this labor cost flips the arbitrage margin negative, the workload stays in the cloud.

7. Board-Level Translation Layer

EBITDA Delta Modeling: Shifting massive, steady-state workloads from OpEx (cloud) to CapEx (owned hardware) mechanically increases EBITDA, as depreciation (CapEx) sits below the EBITDA line. A CFO executing a $10M repatriation maneuver immediately improves the EBITDA valuation multiple of the company, providing massive leverage for board-level financial reporting.

Gross Margin Defense: The hyperscaler premium is a continuous tax on SaaS COGS. By operating the baseline load on normalized, heavily depreciated hardware, a SaaS enterprise permanently lowers the floor of its unit economics, insulating gross margins against cloud vendor price hikes or changes in Enterprise Discount Program (EDP) tiers.

Capital Allocation Signal: The decision to utilize physical hardware is a rigid capital allocation that decreases corporate agility. The board must evaluate if tying up $10M in physical servers yields a higher Return on Invested Capital (ROIC) than deploying that $10M into new sales channels or M&A.

Risk-Adjusted ROI Formula:

$$ROI_{repat} = \frac{(C_{cloud\_3yr} - C_{on-prem\_3yr}) - (P_{obsolescence} + P_{egress\_penalty})}{CapEx_{infra} \times (1 + WACC)}$$

8. Data Visualization Suggestions

A line graph plotting time (36 months) on the X-axis and Total Cumulative Cost on the Y-axis. The OpEx (Cloud) line is a steep, steady diagonal. The CapEx (Hardware) line starts with a massive vertical spike at Month 1, then slopes very gently, crossing the OpEx line at the break-even month.

A network architecture diagram showing an AWS VPC connected via Direct Connect to an Equinix bare metal rack, highlighting the strict unidirectional data flow (Ingress is free, Egress is heavily taxed) to prevent financial hemorrhage.

A flowchart showing the CONA logic: "Is Utilization > 80%?" -> "Is Tech Lifespan > 36 months?" -> "Is Egress < 10TB/day?" leading to binary "Cloud" or "Repatriate" decisions.

A waterfall chart taking a raw $5,000 server purchase and breaking it down mathematically via power, cooling, labor, and depreciation to arrive at exactly $0.22/hour.

A visual representation of traffic volume: a solid block representing physical on-premises servers absorbing the baseline load, with red "spikes" overflowing seamlessly into AWS Spot instances.

9. Why Analyst-Style Summaries Fail at Financial Precision

When technology analysts publish reports stating, "Enterprises should consider repatriating predictable workloads to save on cloud costs," they are dispensing dangerous, incomplete financial advice. Narrative summaries fail because they lack the rigorous corporate finance mechanisms required to evaluate physical assets.

If a CIO follows a narrative summary, they will look at a Dell server invoice and an AWS EC2 invoice, conclude Dell is cheaper, and authorize the purchase. They fail to calculate the Weighted Average Cost of Capital (WACC), completely ignoring the fact that locking up millions in cash carries an intrinsic penalty that must be mathematically applied to the hardware's operating rate. Equation-backed modeling, specifically utilizing the $FBHR_{on-prem}$ and the $M_{repat}$ formulas, bridges the gap between IT operations and corporate finance. It forces the engineering team to speak the language of the CFO—quantifying depreciation schedules, accounting for stranded capital risks, and embedding facility power rates directly into the unit economics of a Kubernetes pod. You cannot execute a hybrid cloud strategy with prose; you execute it with strict, mathematically normalized accounting.

10. Strategic Conclusion

The 2026 enterprise infrastructure landscape demands an end to ideological purity. The concept that "all workloads belong in the cloud" is as financially destructive as the archaic belief that "all data must be housed in a private datacenter." True infrastructure maturity is defined by the absolute liquidity of compute, governed strictly by mathematical arbitrage.

Executing a Hybrid FinOps strategy requires accepting that public cloud and private infrastructure operate on fundamentally misaligned financial mechanics. Cloud is an elastic operational expense; hardware is an inelastic capital commitment. To reconcile this, FinOps leaders must deploy the CapEx-OpEx Normalization Architecture (CONA). By forcing all physical datacenter costs—including power, cross-connects, labor, and depreciation—through a rigorous mathematical funnel, the enterprise generates a Fully Burdened Hourly Rate ($FBHR_{on-prem}$). This single metric is the Rosetta Stone of hybrid operations, allowing Kubernetes schedulers and infrastructure architects to dynamically weigh the cost of a bare-metal node against a public cloud Spot instance in real-time.

Repatriation is an incredibly powerful financial weapon for defending gross margins, but it carries immense risk. Stranded capital, hardware obsolescence, and network egress penalties can instantly destroy the theoretical savings of owned infrastructure. Therefore, repatriation must be heavily gated. Only workloads demonstrating unyielding continuous utilization, long-term architectural stability, and low data gravity are granted the privilege of consuming corporate CapEx. Everything else, regardless of the hourly premium, must remain in the public cloud to preserve the ultimate corporate asset: agility.

11. Implementation Readiness Checklist

Calculate Facility PUE and kWh: Obtain the exact Power Usage Effectiveness and utility cost per kilowatt-hour from your colocation or datacenter provider to establish the baseline physical OpEx.

Establish the Corporate WACC: Consult with the CFO to explicitly define the enterprise's Weighted Average Cost of Capital to apply the correct penalty to hardware purchases.

Define the $FBHR_{on-prem}$: Run your standard physical server SKU through the CONA equations to generate an exact, fully burdened hourly rate for your on-premises compute.

Audit Workload Utilization: Analyze the trailing 90 days of cloud telemetry to identify highly stable, steady-state workloads (Continuous Utilization > 80%) as prime candidates for repatriation.

Model Network Egress Gravity: Before moving any compute, calculate the volume of data that must traverse the internet back to the public cloud and apply the egress cost to the Arbitrage Margin.

Deploy Unified K8s Control Planes: Implement Anthos, Arc, or Rancher to abstract the physical bare metal, allowing developers to deploy containers without interacting with legacy hardware configurations.

Integrate Hardware Costs into K8s FinOps: Feed the manually calculated $FBHR_{on-prem}$ into Kubecost or OpenCost so internal chargeback reports look identical regardless of where the pod runs.

Automate Cloud Bursting: Architect the global ingress routing layer to automatically spill excess traffic to public cloud Spot instances when the on-premises cluster hits 90% utilization.

Standardize Depreciation Schedules: Enforce a strict 36-month straight-line depreciation rule for all FinOps modeling to ensure parity with the rapid release cycles of modern AI and CPU silicon.

Implement the Stranded Capital Gateway: Require mandatory FinOps and architectural sign-off proving that a workload will not fundamentally change its compute architecture for at least 3 years before approving CapEx.

Stop guessing where your Kubernetes budget is going. Schedule a demo here to explore Kubernetes cost monitoring with Cloud Atler.