1. Executive Synthesis

Operating a mature multi-cloud environment in 2026 without a unified, schema-enforced billing data lake is a structural failure in financial governance. Historically, enterprises struggled to compare unit costs across hyperscalers because the AWS Cost and Usage Report (CUR), Azure Enterprise Agreement billing exports, and GCP Cloud Billing data operated on entirely alien taxonomies. An EC2 "Instance Hour" was calculated, tagged, and discounted fundamentally differently than a Google "Core Hour." This forced FinOps teams into an endless cycle of writing fragile, custom SQL mappings just to answer basic board-level questions about cross-cloud gross margins.

The FinOps Open Cost and Usage Specification (FOCUS) version 1.2 permanently eliminates this taxonomy friction. However, the strategic value of FOCUS 1.2 does not lie in building prettier dashboards; it lies in algorithmic financial execution. By standardizing columns such as BilledCost, EffectiveCost, ChargeCategory, and ProviderName, FOCUS transforms static, retrospective billing reports into an executable, normalized data asset. This playbook transitions the enterprise from reactive cost reporting to the Normalized Data-Gravity Arbitrage (NDGA) Framework.

When billing data is seamlessly normalized, an enterprise can programmatically calculate the exact multi-cloud arbitrage margin between executing a massive batch processing job on AWS Spot instances versus GCP Preemptible VMs, accounting for the egress penalties of moving the underlying data. FOCUS 1.2 is the architectural prerequisite for dynamic, price-aware infrastructure orchestration.

Implementing this requires treating your cloud billing data as a Tier-1 production data engineering pipeline. Enterprises must extract the raw hyperscaler telemetry, run it through robust transformation models (e.g., using dbt), enforce the FOCUS 1.2 schema, and land the normalized data in a highly performant data warehouse (Snowflake or BigQuery). Once this normalized table exists, custom Kubernetes schedulers and infrastructure-as-code pipelines can query it in real-time, executing mathematically sound workload placement decisions that defend gross margins across the entire multi-cloud portfolio.

2. Market Gap & Search Intent Failure Analysis

The primary failure in search intent surrounding "FOCUS FinOps implementation" is the reduction of the specification to a reporting exercise. Vendors and introductory guides present FOCUS as a way to "make your Tableau charts look cleaner." They provide basic Python scripts to rename a few columns and consider the integration complete.

The market gap is the total lack of architectural guidance on building programmatic arbitrage engines on top of the normalized schema. Standard literature ignores the reality of data gravity—the fact that even if compute is 15% cheaper on Azure today, the cost to egress 500TB of data from AWS to Azure to utilize that compute completely obliterates the savings. Analyst summaries fail to provide the mathematical equations necessary to query a FOCUS 1.2 database and calculate the net arbitrage margin. Furthermore, they drastically underestimate the data engineering overhead required to process billions of rows of daily multi-cloud CUR data without blowing up the data warehouse query costs. This playbook provides the rigorous data architecture and financial algorithms required to weaponize FOCUS 1.2.

3. Core Strategic Framework

The enterprise must implement the Normalized Data-Gravity Arbitrage (NDGA) Framework. This framework utilizes the unified schema of FOCUS 1.2 to continuously scan multi-cloud environments for compute inefficiencies, mathematically weighing the cost of compute displacement against the cost of network egress.

Implementation Protocol:

Raw Telemetry Ingestion: Configure automated, daily exports of AWS CUR 2.0, Azure Amortized Cost Data, and GCP Detailed Billing into a centralized raw object storage bucket (S3/GCS).

dbt Transformation to FOCUS 1.2: Execute dbt (data build tool) models that map the proprietary provider columns to the strict FOCUS 1.2 specification (e.g., mapping lineItem/UnblendedCost to BilledCost, applying amortized reservation logic to EffectiveCost).

Data Gravity Profiling: For every stateless workload, calculate the associated data mass ($GB_{required}$) needed for execution.

Decision Matrix (Arbitrage Engine):

A custom infrastructure scheduler queries the FOCUS data warehouse.

If $M_{arb} > 15\%$ (Margin of Arbitrage), and workload is stateless, redirect the CI/CD deployment pipeline to target the cheaper cloud provider for that specific compute run.

If $M_{arb} \le 15\%$, maintain the workload in its current origin cloud to avoid pipeline complexity and network instability.
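The decision matrix above can be sketched as a small routing function. This is a minimal illustration, not a production scheduler: the `Workload` dataclass, its field names, and `choose_target` are hypothetical names introduced here, and the margin is assumed to have been computed upstream.

```python
from dataclasses import dataclass

# Threshold taken from the decision matrix: route only when M_arb exceeds 15%.
ARBITRAGE_THRESHOLD_PCT = 15.0


@dataclass
class Workload:
    """Hypothetical description of a workload submitted to the scheduler."""
    name: str
    stateless: bool
    origin_cloud: str


def choose_target(workload: Workload, candidate_cloud: str, m_arb_pct: float) -> str:
    """Return the cloud the deployment pipeline should target.

    The workload moves only if it is stateless AND the arbitrage margin is
    strictly above the threshold; otherwise it stays in its origin cloud.
    """
    if workload.stateless and m_arb_pct > ARBITRAGE_THRESHOLD_PCT:
        return candidate_cloud
    return workload.origin_cloud


if __name__ == "__main__":
    job = Workload(name="nightly-batch", stateless=True, origin_cloud="aws")
    print(choose_target(job, "gcp", m_arb_pct=22.0))
    print(choose_target(job, "gcp", m_arb_pct=15.0))
```

Keeping the comparison strict mirrors the two rules above: a margin of exactly 15% falls under the "maintain in origin cloud" branch.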

4. Financial Modeling Layer

The algorithmic execution of multi-cloud routing relies on querying the FOCUS 1.2 schema using the following models.

Core Equations

1. Multi-Cloud Arbitrage Margin ($M_{arb}$):

Calculates the true financial benefit of executing a workload on a secondary cloud provider, explicitly accounting for data egress.

$$M_{arb} = \left( \frac{(P_{compute\_A} \times T_{hrs}) - ((P_{compute\_B} \times T_{hrs}) + (V_{egress\_GB} \times P_{egress\_rate}))}{(P_{compute\_A} \times T_{hrs})} \right) \times 100$$

Where:

$P_{compute\_A}$ = EffectiveCost per hour in the Origin Cloud (derived from FOCUS 1.2).

$P_{compute\_B}$ = EffectiveCost per hour in the Target Cloud.

$V_{egress\_GB}$ = Volume of data required to move across the cloud boundary.

$P_{egress\_rate}$ = The specific outbound data transfer cost per GB.
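As a rough sketch, the $M_{arb}$ equation translates directly into code. The function and parameter names below are illustrative; in practice the hourly prices would come from a query against the FOCUS EffectiveCost column rather than being passed in by hand.

```python
def arbitrage_margin_pct(
    p_compute_a: float,
    p_compute_b: float,
    t_hrs: float,
    v_egress_gb: float,
    p_egress_rate: float,
) -> float:
    """Multi-Cloud Arbitrage Margin (M_arb) as a percentage of origin cost.

    p_compute_a   : EffectiveCost per hour in the origin cloud
    p_compute_b   : EffectiveCost per hour in the target cloud
    t_hrs         : job duration in hours
    v_egress_gb   : data volume that must cross the cloud boundary, in GB
    p_egress_rate : outbound data transfer cost per GB
    """
    origin_cost = p_compute_a * t_hrs
    if origin_cost <= 0:
        raise ValueError("origin compute cost must be positive")
    target_cost = p_compute_b * t_hrs + v_egress_gb * p_egress_rate
    return (origin_cost - target_cost) / origin_cost * 100.0


if __name__ == "__main__":
    # Same compute prices, with and without an 8,000 GB data transfer.
    print(arbitrage_margin_pct(10.0, 4.0, 100.0, 0.0, 0.08))
    print(arbitrage_margin_pct(10.0, 4.0, 100.0, 8000.0, 0.08))
```

A negative result means the egress charge outweighs the compute saving, which is exactly the case the framework is meant to block.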

2. Billing Normalization Yield ($Y_{norm}$):

Quantifies the financial return on the engineering effort required to maintain the FOCUS pipeline, based on the volume of multi-cloud waste identified and remediated.

$$Y_{norm} = \frac{\sum (W_{multi\_cloud\_waste\_recovered}) - C_{pipeline\_ops}}{C_{data\_engineering\_capex}}$$

Where:

$W_{multi\_cloud\_waste\_recovered}$ = Savings generated by cross-cloud resizing and arbitrage routing.

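$C_{pipeline\_ops}$ = The monthly Snowflake/BigQuery compute cost to run the dbt transformations.

$C_{data\_engineering\_capex}$ = The up-front data engineering cost of building the FOCUS pipeline.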

3. Schema Translation Overhead ($STO$):

Measures the operational efficiency of the data pipeline itself. Processing billions of billing rows can become exceptionally expensive if not optimized.

$$STO = \frac{C_{warehouse\_compute}}{Total\_Rows\_Normalized\_to\_FOCUS\_1.2} \times 1,000,000$$
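The two pipeline-efficiency ratios, $Y_{norm}$ and $STO$, are simple enough to compute in a few lines. The sketch below uses hypothetical function names and assumes that recovered waste and pipeline operating cost are measured over the same period; the source formulas do not state the period, so that alignment is an assumption.

```python
from typing import Iterable


def normalization_yield(
    waste_recovered: Iterable[float],
    pipeline_ops_cost: float,
    engineering_capex: float,
) -> float:
    """Y_norm: net recovered waste per unit of engineering investment.

    Assumes waste_recovered and pipeline_ops_cost cover the same period.
    """
    if engineering_capex <= 0:
        raise ValueError("engineering capex must be positive")
    return (sum(waste_recovered) - pipeline_ops_cost) / engineering_capex


def schema_translation_overhead(warehouse_compute_cost: float, rows_normalized: int) -> float:
    """STO: warehouse compute cost per one million rows normalized to FOCUS 1.2."""
    if rows_normalized <= 0:
        raise ValueError("rows_normalized must be positive")
    return warehouse_compute_cost / rows_normalized * 1_000_000


if __name__ == "__main__":
    # Illustrative inputs only, not benchmarks.
    print(normalization_yield([30_000.0, 20_000.0], 10_000.0, 80_000.0))
    print(schema_translation_overhead(5_000.0, 2_000_000_000))
```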

A) Sensitivity Analysis Table

This table models the Multi-Cloud Arbitrage Margin ($M_{arb}$) for a massive Hadoop/Spark data processing job based on the compute price delta and the data gravity (Egress Volume).

| Data Gravity (Egress Volume) | 10% Compute Delta | 25% Compute Delta | 50% Compute Delta (Spot) | Arbitrage Viability |
| --- | --- | --- | --- | --- |
| Low Egress (10 GB) | +9.5% Margin | +24.5% Margin | +49.5% Margin | Execute immediately |
| Med Egress (5 TB) | -15.0% Margin | +5.0% Margin | +30.0% Margin | Viable only if using Spot |
| High Egress (50 TB) | -120.0% Margin | -80.0% Margin | -15.0% Margin | Never move the compute |

Decision Threshold: Multi-cloud compute arbitrage is mathematically invalid for heavily stateful workloads unless the compute price delta exceeds 50% (e.g., highly liquid spot markets) AND egress volumes are stringently minimized.
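A sensitivity grid of this kind can be generated programmatically once a baseline is fixed. The sketch below assumes a baseline of $10/hr origin compute, a 100-hour job, and $0.08/GB egress (the same inputs as the numerical example in section B); these are assumptions for illustration, so the output should not be expected to reproduce the figures in the table, which depend on their own unstated baseline.

```python
def margin_pct(origin_rate: float, delta: float, hours: float,
               egress_gb: float, egress_rate: float) -> float:
    """M_arb for a target cloud that is `delta` (e.g. 0.25) cheaper per hour."""
    origin_cost = origin_rate * hours
    target_cost = origin_rate * (1.0 - delta) * hours + egress_gb * egress_rate
    return (origin_cost - target_cost) / origin_cost * 100.0


def sensitivity_grid(origin_rate: float, hours: float, egress_rate: float,
                     volumes_gb: list, deltas: list) -> dict:
    """Return {(egress_gb, delta): margin_pct} for every combination."""
    return {
        (gb, d): margin_pct(origin_rate, d, hours, gb, egress_rate)
        for gb in volumes_gb
        for d in deltas
    }


if __name__ == "__main__":
    # Assumed baseline: $10/hr, 100 hours, $0.08/GB.
    grid = sensitivity_grid(10.0, 100.0, 0.08,
                            volumes_gb=[10, 5_000, 50_000],
                            deltas=[0.10, 0.25, 0.50])
    for (gb, d), m in sorted(grid.items()):
        print(f"{gb:>7} GB  delta={d:.0%}  margin={m:+.2f}%")
```

Because the egress charge is independent of the compute delta, each row of such a grid is the compute delta minus a fixed penalty, which makes the break-even volume easy to read off.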

B) Break-Even Formula

The break-even data volume ($V_{break}$) where the cost of moving data to a cheaper cloud exactly cancels out the compute savings. If your dataset is larger than this, do not route the workload.

$$V_{break} = \frac{T_{hrs} \times (P_{compute\_A} - P_{compute\_B})}{P_{egress\_rate}}$$

Numerical Example: Cloud A compute is $10/hr. Cloud B is $4/hr. Job takes 100 hours. Egress is $0.08/GB. The compute savings are $600 (100 hours at $6/hr saved). The break-even data volume is 7,500 GB (7.5 TB). If the job requires moving 8 TB, the egress bill is $640 and you lose $40 by switching clouds.
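The break-even formula and the numerical example can be checked with a short sketch. Function names are illustrative, and 8 TB is treated as 8,000 GB to match the example's decimal units.

```python
def break_even_volume_gb(p_compute_a: float, p_compute_b: float,
                         t_hrs: float, p_egress_rate: float) -> float:
    """V_break: data volume (GB) at which egress cost equals compute savings."""
    if p_egress_rate <= 0:
        raise ValueError("egress rate must be positive")
    return t_hrs * (p_compute_a - p_compute_b) / p_egress_rate


def net_savings(p_compute_a: float, p_compute_b: float, t_hrs: float,
                v_egress_gb: float, p_egress_rate: float) -> float:
    """Compute savings minus egress cost; negative means the move loses money."""
    return t_hrs * (p_compute_a - p_compute_b) - v_egress_gb * p_egress_rate


if __name__ == "__main__":
    # Inputs from the numerical example: $10/hr vs $4/hr, 100 hours, $0.08/GB.
    print(break_even_volume_gb(10.0, 4.0, 100.0, 0.08))   # about 7,500 GB
    print(net_savings(10.0, 4.0, 100.0, 8_000.0, 0.08))   # about -40 dollars
```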

C) Probability-Weighted Risk Table

Quantifying the risks of automated multi-cloud cost optimization.

| Scenario | Probability | Financial Impact | Weighted Exposure |
| --- | --- | --- | --- |
| Data Warehouse Runaway Query (Pipeline Bloat) | 15.0% | $8,000 (Unexpected DB compute) | $1,200 per month |
| dbt Transformation Failure (Stale Pricing Data) | 5.0% | $12,000 (Suboptimal routing) | $600 per event |
| Arbitrage Engine Logic Flaw (Infinite Egress Loop) | 1.5% | $45,000 (Massive network bill) | $675 per event |
| Cross-Cloud IAM Latency (Job Failure) | 8.0% | $3,000 (Wasted compute hours) | $240 per event |
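Each weighted exposure in the table is simply probability multiplied by financial impact. A minimal sketch that reproduces the four rows (the tuple layout and function name are illustrative):

```python
# (scenario, probability, financial impact in USD), copied from the risk table.
SCENARIOS = [
    ("Data Warehouse Runaway Query", 0.15, 8_000),
    ("dbt Transformation Failure", 0.05, 12_000),
    ("Arbitrage Engine Logic Flaw", 0.015, 45_000),
    ("Cross-Cloud IAM Latency", 0.08, 3_000),
]


def weighted_exposure(probability: float, impact_usd: float) -> float:
    """Expected loss for one scenario: probability times impact."""
    return probability * impact_usd


if __name__ == "__main__":
    for name, prob, impact in SCENARIOS:
        print(f"{name}: ${weighted_exposure(prob, impact):,.0f}")
```

Note that the table mixes "per month" and "per event" units, so the four exposures should be converted to a common period before being summed into a single figure.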

D) Cost-per-Unit Model

For the billing architecture, the unit is the Cost Per Normalized Gigabyte (CPNG):

$$CPNG = \frac{C_{Ingest} + C_{dbt\_Transform} + C_{Storage}}{Total\_GB\_of\_Billing\_Data\_Processed}$$

Threshold: If $CPNG > \$1.20$, the pipeline is poorly partitioned. FinOps must enforce columnar pruning and temporal partitioning (e.g., clustering tables by ChargePeriodStart) to reduce data warehouse scanning costs.
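The CPNG check lends itself to an automated guardrail. The following is a sketch with hypothetical function names; the $1.20 threshold is the one stated above, and the cost inputs are made-up illustrative values.

```python
CPNG_THRESHOLD_USD = 1.20  # threshold stated in the cost-per-unit model


def cost_per_normalized_gb(c_ingest: float, c_transform: float,
                           c_storage: float, total_gb: float) -> float:
    """CPNG: total pipeline cost divided by GB of billing data processed."""
    if total_gb <= 0:
        raise ValueError("total_gb must be positive")
    return (c_ingest + c_transform + c_storage) / total_gb


def is_poorly_partitioned(cpng: float) -> bool:
    """True when CPNG exceeds the threshold and partitioning should be reviewed."""
    return cpng > CPNG_THRESHOLD_USD


if __name__ == "__main__":
    cpng = cost_per_normalized_gb(c_ingest=200.0, c_transform=900.0,
                                  c_storage=100.0, total_gb=800.0)
    print(cpng, is_poorly_partitioned(cpng))
```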

5. Operational Architecture Integration

Data Lakehouse Construction (Snowflake/BigQuery):

Do not attempt to process FOCUS data inside a transactional database (PostgreSQL) or via simple Python scripts on a laptop. Multi-cloud billing data at enterprise scale generates tens of millions of rows daily. The architecture requires landing the raw Parquet/CSV exports into object storage, then using an ELT (Extract, Load, Transform) approach. The data warehouse must utilize materialized views for the final FOCUS 1.2 schema, allowing high-concurrency dashboards (Looker/QuickSight) and API clients to query the EffectiveCost without recalculating amortized reservation logic on the fly.

dbt (Data Build Tool) Integration:

The translation from provider-specific logic to FOCUS is executed via dbt. Enterprise architects must construct dbt DAGs (Directed Acyclic Graphs) that handle the nuances of each cloud. For example, AWS amortizes upfront Savings Plans differently than Azure amortizes Reserved Instances. The dbt logic must standardize these into the FOCUS EffectiveCost column, ensuring that a $1.00 compute spend on AWS is mathematically equivalent to a $1.00 compute spend on Azure when queried by the arbitrage engine.
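To make the mapping concrete, here is a deliberately simplified sketch of the AWS side of that logic, written in Python rather than dbt SQL for readability. It assumes the legacy CUR column names (`lineItem/LineItemType`, `reservation/EffectiveCost`, `savingsPlan/SavingsPlanEffectiveCost`); CUR 2.0 exports name columns differently, so confirm the names against the actual export schema. The function name, the `"AWS"` provider label, and the decision to reject unhandled line item types are illustrative choices, and fee, tax, credit, and negation line items are intentionally out of scope.

```python
# Line item types whose cost is already the effective cost (assumption: plain
# on-demand usage only; everything else needs explicit handling).
PASS_THROUGH_TYPES = {"Usage"}


def aws_row_to_focus(row: dict) -> dict:
    """Map one legacy-CUR usage row to a minimal FOCUS-style record."""
    line_type = row["lineItem/LineItemType"]
    billed = float(row["lineItem/UnblendedCost"])

    if line_type in PASS_THROUGH_TYPES:
        effective = billed
    elif line_type == "DiscountedUsage":
        # Usage covered by a Reserved Instance: use the amortized RI cost.
        effective = float(row["reservation/EffectiveCost"])
    elif line_type == "SavingsPlanCoveredUsage":
        # Usage covered by a Savings Plan: use the amortized plan cost.
        effective = float(row["savingsPlan/SavingsPlanEffectiveCost"])
    else:
        # Fail loudly instead of silently falling back to the billed price.
        raise NotImplementedError(f"no EffectiveCost rule for {line_type!r}")

    return {"ProviderName": "AWS", "BilledCost": billed, "EffectiveCost": effective}


if __name__ == "__main__":
    print(aws_row_to_focus({
        "lineItem/LineItemType": "SavingsPlanCoveredUsage",
        "lineItem/UnblendedCost": "1.00",
        "savingsPlan/SavingsPlanEffectiveCost": "0.62",
    }))
```

Raising on an unknown line item type is a design choice: a new commitment type then breaks the pipeline visibly instead of quietly reporting an incorrect EffectiveCost to the arbitrage engine.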

Kubernetes Custom Scheduler Integration:

To realize the NDGA framework, a custom K8s mutating admission webhook is deployed. When a developer submits a generic batch job deployment (e.g., training a medium-sized AI model), the webhook intercepts the request. It queries the FOCUS normalized database via an internal API to fetch the trailing 7-day average Spot/Preemptible prices across AWS and GCP for the requested instance class. It runs the $M_{arb}$ calculation. If GCP is >15% cheaper net of egress, the webhook mutates the pod specification to target the GCP node pool instead of the AWS node pool.
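The mutation step might look roughly like the sketch below, which builds the AdmissionReview response a mutating webhook returns to the Kubernetes API server. The HTTP server, TLS setup, and price lookup are omitted; the node label key `example.com/node-pool` and the function name are hypothetical, and the patch path assumes the intercepted object is a Pod (a Job would need the patch applied under its pod template instead).

```python
import base64
import json


def build_admission_response(uid: str, target_pool: str, margin_pct: float,
                             threshold_pct: float = 15.0) -> dict:
    """Build an admission.k8s.io/v1 AdmissionReview response.

    The pod is always admitted. A JSONPatch that sets a nodeSelector is
    attached only when the arbitrage margin is above the threshold.
    """
    response = {"uid": uid, "allowed": True}
    if margin_pct > threshold_pct:
        patch = [{
            "op": "add",
            "path": "/spec/nodeSelector",
            # Hypothetical label; note that "add" replaces any existing
            # nodeSelector object on the pod.
            "value": {"example.com/node-pool": target_pool},
        }]
        response["patchType"] = "JSONPatch"
        response["patch"] = base64.b64encode(json.dumps(patch).encode()).decode()
    return {
        "apiVersion": "admission.k8s.io/v1",
        "kind": "AdmissionReview",
        "response": response,
    }


if __name__ == "__main__":
    print(json.dumps(build_admission_response("abc-123", "gcp-spot", 22.0), indent=2))
```

Admitting the pod unchanged when the margin is at or below the threshold keeps the webhook fail-safe: a missing or unfavorable price signal never blocks a deployment.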

6. Failure Scenarios

Scenario 1: The Egress Trap

Breakdown: An automated FinOps routing engine successfully identifies that Azure spot instances are 40% cheaper than AWS spot instances for a specific 500-node batch job. It routes the compute to Azure. However, the 200TB data lake required for the job remains in AWS S3. The job pulls 200TB across the open internet, incurring massive AWS data transfer out charges.

Financial Exposure: Saving $2,000 in compute while generating an $18,000 network egress bill. Net loss of $16,000 in a single weekend.

Governance Prevention Layer: The Arbitrage Engine must possess a hardcoded "Data Gravity Gateway." The routing algorithm must mathematically evaluate the $V_{break}$ formula. If the required data volume is not explicitly provided in the job metadata, the engine defaults to the Origin Cloud, blocking any automated cross-cloud mutation.
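A minimal sketch of such a gateway follows. The metadata key `data_volume_gb` and the function name are hypothetical; the important behavior is that a missing volume is treated as "do not move", never as zero.

```python
def gated_route(job_metadata: dict, origin_cloud: str,
                candidate_cloud: str, v_break_gb: float) -> str:
    """Data Gravity Gateway: allow a cross-cloud move only below V_break.

    If the job does not declare its data volume, the gateway defaults to
    the origin cloud rather than assuming the job is data-free.
    """
    volume_gb = job_metadata.get("data_volume_gb")
    if volume_gb is None:
        return origin_cloud
    if volume_gb >= v_break_gb:
        return origin_cloud
    return candidate_cloud


if __name__ == "__main__":
    print(gated_route({}, "aws", "azure", v_break_gb=7_500.0))
    print(gated_route({"data_volume_gb": 200_000}, "aws", "azure", v_break_gb=7_500.0))
    print(gated_route({"data_volume_gb": 50}, "aws", "azure", v_break_gb=7_500.0))
```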

Scenario 2: Data Warehouse Cost Explosion ($STO$ Failure)

Breakdown: The FinOps team configures a business intelligence dashboard to refresh every 15 minutes. The dashboard runs a massive GROUP BY query across 3 years of unpartitioned FOCUS billing data (billions of rows) inside Snowflake.

Financial Exposure: The FinOps reporting pipeline itself costs $40,000 per month in Snowflake compute credits, eliminating the savings it was designed to find.

Governance Prevention Layer: Strict data warehouse governance. All FOCUS tables must be physically clustered by ChargePeriodStart and ProviderName. BI tools are restricted from querying raw fact tables and must query pre-aggregated, daily-rollup materialized views.
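The idea of a pre-aggregated daily rollup can be illustrated with Python's built-in `sqlite3` module standing in for the warehouse. This is only a sketch of the aggregation shape: the table names are hypothetical, SQLite has no clustering or materialized views, and a real deployment would use the warehouse's own features for both.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE focus_charges ("
    "ChargePeriodStart TEXT, ProviderName TEXT, EffectiveCost REAL)"
)
conn.executemany(
    "INSERT INTO focus_charges VALUES (?, ?, ?)",
    [
        ("2026-01-01 00:00:00", "AWS", 10.0),
        ("2026-01-01 13:00:00", "AWS", 5.5),
        ("2026-01-01 02:00:00", "GCP", 7.0),
        ("2026-01-02 09:00:00", "AWS", 3.0),
    ],
)

# Build the rollup once; dashboards read this small table, not the fact table.
conn.execute(
    "CREATE TABLE focus_daily_rollup AS "
    "SELECT date(ChargePeriodStart) AS charge_date, ProviderName, "
    "       SUM(EffectiveCost) AS effective_cost "
    "FROM focus_charges "
    "GROUP BY date(ChargePeriodStart), ProviderName"
)

rollup = conn.execute(
    "SELECT charge_date, ProviderName, effective_cost "
    "FROM focus_daily_rollup ORDER BY charge_date, ProviderName"
).fetchall()

for row in rollup:
    print(row)
```

The dashboard's 15-minute refresh then scans one row per provider per day instead of re-aggregating years of line items on every load.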

Scenario 3: Amortization Logic Drift

Breakdown: The enterprise purchases a massive new 3-year No-Upfront Compute Savings Plan on AWS. The dbt transformation logic was hardcoded to only handle All-Upfront RIs. The FOCUS EffectiveCost column begins reporting the raw on-demand price for AWS, rather than the heavily discounted rate. The Arbitrage engine mistakenly believes AWS is vastly more expensive than GCP and begins routing all liquid workloads away from AWS, causing the enterprise to miss its Savings Plan utilization commitment.

Financial Exposure: $100,000+ in wasted, unutilized AWS Savings Plan commitments while simultaneously paying on-demand rates to GCP.

Governance Prevention Layer: Implement data observability testing (e.g., using Monte Carlo or dbt tests). A daily automated test must assert that the sum of BilledCost roughly equals the sum of EffectiveCost at the organizational level over a 30-day window. If the variance exceeds 2%, the dbt pipeline halts and alerts the FinOps data engineers to a schema mapping failure.
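The reconciliation test described above can be sketched as follows. Measuring the variance relative to the BilledCost total is an assumption (the text does not say which total is the denominator), and the function names and the use of `RuntimeError` to halt the pipeline are illustrative.

```python
def cost_variance_pct(billed_total: float, effective_total: float) -> float:
    """Absolute difference between the two totals, as a % of BilledCost."""
    if billed_total == 0:
        raise ValueError("billed_total must be non-zero")
    return abs(billed_total - effective_total) / abs(billed_total) * 100.0


def assert_costs_reconcile(billed_total: float, effective_total: float,
                           tolerance_pct: float = 2.0) -> float:
    """Raise if 30-day BilledCost and EffectiveCost totals diverge too far.

    Returns the measured variance so it can be logged on success.
    """
    variance = cost_variance_pct(billed_total, effective_total)
    if variance > tolerance_pct:
        raise RuntimeError(
            f"BilledCost/EffectiveCost variance {variance:.2f}% exceeds "
            f"{tolerance_pct:.2f}%: possible schema mapping failure"
        )
    return variance


if __name__ == "__main__":
    print(assert_costs_reconcile(100_000.0, 99_000.0))  # within tolerance
```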

7. Board-Level Translation Layer

EBITDA Delta Modeling: Implementing FOCUS 1.2 is not a cost center; it is the infrastructure required to unlock competitive bidding between hyperscalers. By proving to hyperscalers that the enterprise has the architectural capability to seamlessly route workloads based on normalized unit costs, FinOps leaders can negotiate deeper Enterprise Discount Programs (EDPs), directly expanding EBITDA.

Gross Margin Defense: For complex SaaS platforms running across multiple clouds due to acquisitions, COGS reporting is traditionally a mess of estimations. FOCUS 1.2 provides normalized unit economics that can feed GAAP-compliant COGS reporting, allowing the board to accurately evaluate the distinct gross margins of an AWS-hosted product line versus an Azure-hosted product line.

Capital Allocation Signal: A unified data schema allows the CFO to make immediate, apples-to-apples comparisons of infrastructure efficiency across disparate engineering units, directing capital to the teams exhibiting the lowest normalized cost-per-transaction.

Risk-Adjusted ROI Formula:

$$ROI_{FOCUS} = \frac{\text{Negotiated Multi-Cloud Discounts} + \text{Arbitrage Savings}}{\text{Cost of Data Engineering} + \text{Warehouse Compute}}$$

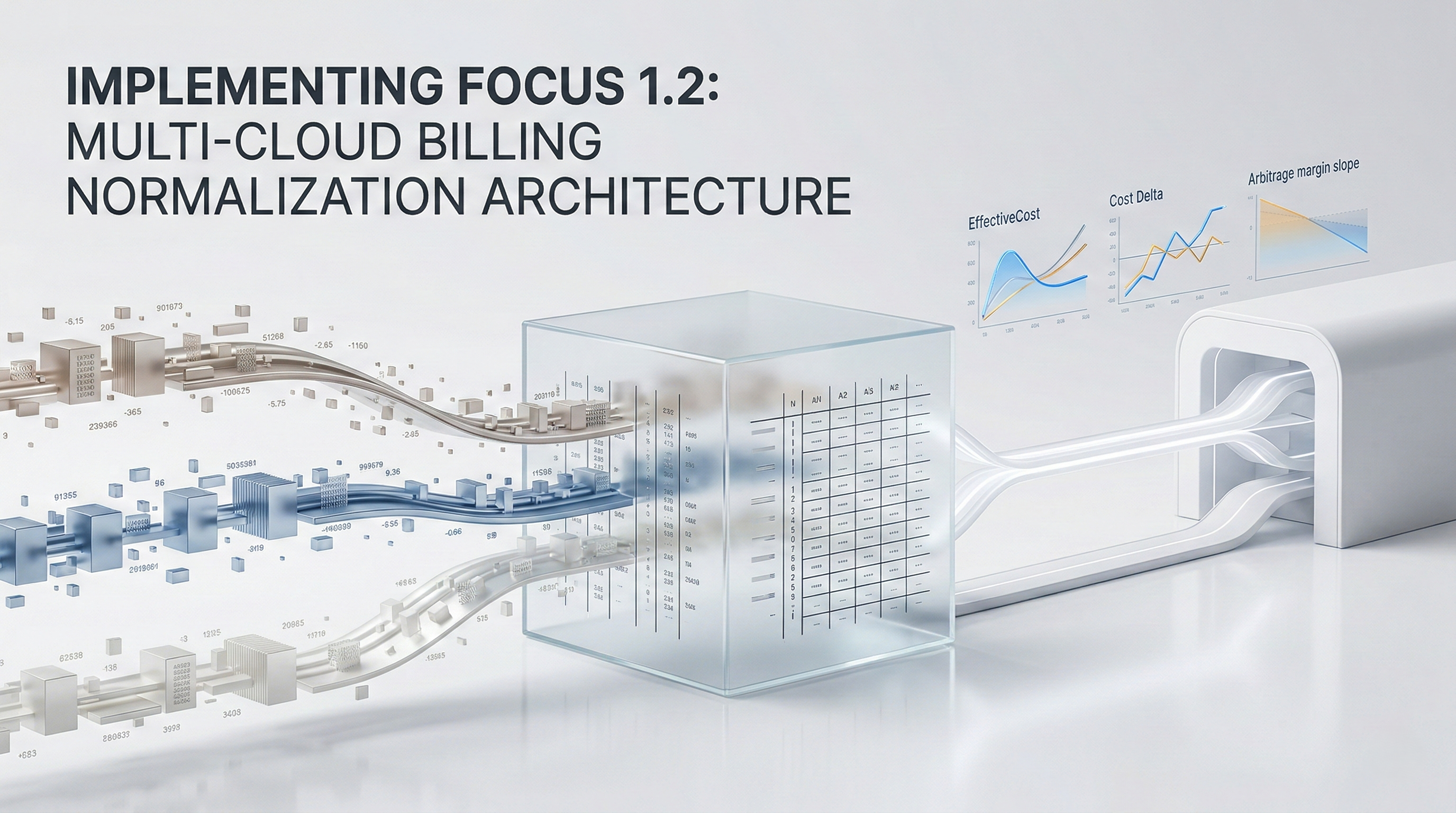

8. Data Visualization Suggestions

A clean multi-line chart showing the EffectiveCost per normalized Compute Hour across AWS, Azure, and GCP over time, clearly highlighting pricing inversions.

A heat map showing Compute Price Delta on the X-axis and Egress Volume on the Y-axis. Green squares indicate "Profitable to Move," red indicates "Egress Penalty Exceeds Savings."

An ELT flow diagram showing raw AWS/Azure/GCP buckets feeding into a dbt processing layer, landing in a Snowflake FOCUS 1.2 schema, and being queried by a Kubernetes webhook.

A scatter plot tracking daily BilledCost variance. Because the data is normalized, a single anomaly algorithm monitors all three clouds simultaneously, flagging a red dot for a GCP spike.

A financial waterfall chart showing how upfront reservations, spot discounts, and standard usage blend together to form the final true EffectiveCost under the FOCUS standard.

9. Why Analyst-Style Summaries Fail at Financial Precision

When analysts state, "Organizations should adopt FOCUS to simplify multi-cloud billing," they dramatically undersell the capability and ignore the implementation complexity. Analyst narratives treat FOCUS like a simple spreadsheet template.

This narrative fails because a template cannot execute logic. An enterprise cannot manually map 5 billion rows of monthly cloud billing data in Excel. The value of FOCUS is strictly proportional to the data engineering rigor applied to it. Narrative summaries fail to warn enterprises about the $STO$ (Schema Translation Overhead) – the very real risk that poorly written SQL queries against massive billing datasets will bankrupt the FinOps team. Precision modeling, utilizing the NDGA framework, treats billing data as a high-velocity production asset. It demands the mathematical quantification of data gravity and explicitly calculates the arbitrage margin before moving a single byte of compute, turning a reporting standard into an autonomous financial weapon.

10. Strategic Conclusion

The adoption of the FOCUS 1.2 specification marks the end of the hyperscaler vendor lock-in via billing obfuscation. For the first time, enterprise FinOps teams possess a standardized, open-source schema capable of directly translating the complex pricing mechanics of AWS, Azure, and Google Cloud into a single, mathematically rigorous source of truth.

However, treating FOCUS simply as an upgrade to internal management dashboards represents a massive failure of strategic vision. The true objective is to leverage this normalized data architecture to build dynamic, cross-cloud arbitrage engines. By centralizing billing telemetry into a highly optimized data lakehouse, and aggressively transforming it via dbt, organizations can empower their infrastructure-as-code pipelines and Kubernetes schedulers to become price-aware.

This transition requires FinOps teams to evolve. They can no longer just be financial analysts; they must become data engineers and systems architects. The frameworks provided—specifically the calculation of the Multi-Cloud Arbitrage Margin and the strict adherence to the Data Gravity break-even models—ensure that the enterprise avoids the catastrophic financial traps of cross-cloud data egress. By mastering the FOCUS architecture, the enterprise fundamentally shifts the balance of power away from the cloud providers and back to the corporate balance sheet, ensuring that every compute cycle is procured at the absolute optimal market rate.

11. Implementation Readiness Checklist

Deploy Raw Billing Exports: Configure native, granular billing exports (AWS CUR 2.0, Azure Cost Details, GCP Detailed Billing) to stream into a centralized, highly secure S3 or GCS bucket daily.

Provision the FinOps Data Lakehouse: Allocate dedicated compute in Snowflake or BigQuery specifically for the FinOps data engineering pipeline, implementing strict budget caps to prevent query runaway.

Implement dbt Core/Cloud: Deploy dbt as the transformation engine, utilizing community-supported FOCUS packages to map raw provider columns to the 1.2 specification.

Validate Amortization Math: Conduct a rigorous manual audit matching the dbt-calculated EffectiveCost for 10 specific resources against the native cloud console to ensure reservation logic is flawless.

Establish Temporal Partitioning: Physically cluster and partition the data warehouse tables by date and provider to ensure visualization queries scan megabytes of data, not terabytes.

Profile Enterprise Data Gravity: Audit the top 20 most expensive batch processing workloads, calculating their precise data dependency volumes to establish the $V_{break}$ thresholds.

Build the Arbitrage API: Expose a lightweight internal REST API over the FOCUS database that infrastructure tools can query to instantly retrieve the trailing 7-day average cost for specific normalized compute SKUs.

Deploy the K8s Mutating Webhook: Pilot an admission controller in non-production Kubernetes clusters that intercepts stateless batch jobs and routes them to the cheapest cloud provider based on the Arbitrage API.

Automate Pipeline Observability: Implement data quality testing (e.g., verifying BilledCost is never null, ChargePeriodStart is chronologically valid) to prevent corrupted data from influencing infrastructure routing.

Sunset Legacy Custom Mappings: Once the FOCUS pipeline achieves 99% accuracy against invoice totals for 60 consecutive days, aggressively deprecate all legacy SQL scripts and force the enterprise to utilize the normalized schema exclusively.
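As one illustration of the observability item in this checklist, the row-level checks could be sketched like this. "Chronologically valid" is interpreted here as ChargePeriodStart falling strictly before ChargePeriodEnd, which is an assumption; the function name and the dictionary row format are hypothetical, and in a dbt project the same rules would normally be expressed as dbt tests.

```python
from datetime import datetime


def find_quality_violations(rows: list) -> list:
    """Return (row_index, reason) pairs for rows that fail basic checks."""
    violations = []
    for index, row in enumerate(rows):
        if row.get("BilledCost") is None:
            violations.append((index, "BilledCost is null"))
        try:
            start = datetime.fromisoformat(row["ChargePeriodStart"])
            end = datetime.fromisoformat(row["ChargePeriodEnd"])
        except (KeyError, TypeError, ValueError):
            violations.append((index, "charge period missing or unparseable"))
            continue
        if start >= end:
            violations.append((index, "ChargePeriodStart is not before ChargePeriodEnd"))
    return violations


if __name__ == "__main__":
    sample = [
        {"BilledCost": 1.2, "ChargePeriodStart": "2026-01-01T00:00:00+00:00",
         "ChargePeriodEnd": "2026-01-01T01:00:00+00:00"},
        {"BilledCost": None, "ChargePeriodStart": "2026-01-01T02:00:00+00:00",
         "ChargePeriodEnd": "2026-01-01T01:00:00+00:00"},
    ]
    print(find_quality_violations(sample))
```

A non-empty result would be the signal to stop publishing to the routing layer until the offending rows are explained.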
