1. Executive Synthesis

The era of descriptive and predictive FinOps—characterized by passive dashboards, delayed anomaly alerts, and human-in-the-loop remediation—has reached a point of diminishing returns. As enterprise cloud environments hyperscale across ephemeral Kubernetes clusters, serverless architectures, and dynamic GPU allocation for AI inference, the latency between cost anomaly detection and manual remediation directly erodes gross margins. Agentic AI FinOps represents the transition from observability to autonomous, policy-bound infrastructure mutation. This playbook establishes the architectural, financial, and governance requirements for deploying autonomous FinOps agents capable of directly executing infrastructure rightsizing, lifecycle policy enforcement, and workload redeployment without synchronous human approval.

In a mature 2026 operating environment, the velocity of cloud provisioning drastically outpaces the cognitive capacity of central FinOps teams. When a rogue inference workload scales unexpectedly or a Kubernetes cluster over-provisions nodes due to misconfigured Horizontal Pod Autoscalers (HPA), traditional FinOps alerts generate operational noise. By the time an SRE investigates and applies a Terraform state change, the enterprise has incurred measurable financial damage. Agentic FinOps flips this paradigm by utilizing large language models (LLMs) integrated with function-calling capabilities, securely scoped via rigid Identity and Access Management (IAM) boundaries, to act as autonomous site reliability engineers. These agents intercept cloud logging streams, construct an optimal remediation hypothesis, mathematically validate the financial impact against an enterprise risk threshold, and execute the API calls to terminate, resize, or migrate the offending resources.

Implementing this requires a fundamental shift in risk tolerance and governance. The financial models driving agentic execution must quantify the probability of infrastructure degradation against the guaranteed cost of inaction. A mathematically rigorous framework is necessary to define exactly when an agent is permitted to execute an infrastructure mutation versus when it must escalate to a human operator. This threshold is governed by the Agentic Confidence Score and the resulting Financial Exposure radius. Enterprises failing to adopt this autonomous posture will face persistent margin compression as AI-driven compute consumption continuously breaks traditional static budgeting models.

The strategic mandate is clear: establish the necessary FinOps unit economics, define the explicit mathematical boundaries for autonomous infrastructure manipulation, and deploy the Agentic AI FinOps model to eliminate the financial latency inherent in human-driven cloud cost optimization. This playbook provides the rigorous modeling required to execute this transition safely and profitably.

2. Market Gap & Search Intent Failure Analysis

Current FinOps literature and vendor documentation critically fail enterprise practitioners by conflating "AI-assisted FinOps" with "Agentic FinOps." Search queries for "AI in FinOps" yield superficial content focused on LLMs acting as natural language interfaces for querying billing data (e.g., "Chatbots for AWS Cost Explorer"). This is a fundamental misunderstanding of the 2026 market requirements.

The market gap lies in the absence of executable, mathematically proven frameworks for autonomous infrastructure modification. Vendors sell visibility, but enterprise margin defense requires immediate action. Existing playbooks lack the strict quantitative boundaries necessary to grant an AI agent production-write access. They fail to address the critical variables of time-to-remediate ($TTR$) cost decay, the statistical probability of a false-positive infrastructure termination, and the explicit IAM boundary logic required to prevent catastrophic self-inflicted denial of service. By treating AI as a reporting tool rather than an execution engine, the industry has ignored the profound operational savings of zero-latency remediation. This playbook fills that gap by providing the precise mathematical models and governance architectures required for autonomous execution.

3. Core Strategic Framework

To execute Agentic FinOps, enterprises must implement the Autonomous Financial Remediation (AFR) Framework. This framework replaces human heuristic decision-making with algorithmic cost-benefit execution.

The AFR Framework mandates that every cloud resource anomaly detected triggers an instantaneous calculation evaluating the cost of the anomaly per minute against the statistical risk of automated remediation causing a production outage. The agent does not simply look at a CPU utilization metric; it queries the underlying application's Service Level Objective (SLO), the current error budget, and the cost profile of the instance type.

Implementation Protocol:

Telemetry Ingestion: Ingest granular billing data (FOCUS 1.2 standard), Kubernetes metrics (Prometheus/Thanos), and application traces into a low-latency vector database accessible by the FinOps Agent.

Hypothesis Generation: Upon anomaly detection, the agent generates multiple remediation paths (e.g., Path A: Terminate instance; Path B: Resize instance; Path C: Migrate to Spot).

Algorithmic Evaluation: The agent scores each path using the AFR financial models (detailed in Section 4).

Execution Decision Matrix:

If $Probability_{Success} > 0.95$ AND $Cost_{Anomaly} > \$100/hr$, execute autonomously.

If $Probability_{Success} \le 0.95$ OR the workload is tagged `Tier-0`, route to SRE via Slack with a pre-built Terraform PR.

State Reconciliation: Post-execution, the agent verifies the financial drop and application health, writing an immutable log of the reasoning chain and financial delta.
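The execution decision matrix above can be sketched as a small routing function. This is a minimal illustration, not the playbook's implementation: the `RemediationPath` type and `decide` function are hypothetical names, and because the matrix does not say what happens when confidence is high but the anomaly costs $100/hr or less, the sketch assumes that case escalates as well.

```python
from dataclasses import dataclass

# Thresholds taken from the decision matrix in this section.
AUTONOMY_CONFIDENCE = 0.95      # P_success must exceed this
AUTONOMY_COST_PER_HR = 100.0    # anomaly cost (USD/hr) must exceed this


@dataclass(frozen=True)
class RemediationPath:
    """One scored remediation hypothesis (hypothetical structure)."""
    name: str
    p_success: float
    anomaly_cost_per_hr: float
    tier0: bool


def decide(path: RemediationPath) -> str:
    """Return 'execute' for autonomous action, otherwise 'escalate'."""
    if path.tier0:
        # Tier-0 workloads always go to a human, whatever the score.
        return "escalate"
    if (path.p_success > AUTONOMY_CONFIDENCE
            and path.anomaly_cost_per_hr > AUTONOMY_COST_PER_HR):
        return "execute"
    # Assumption: anything the matrix does not explicitly allow is escalated.
    return "escalate"


if __name__ == "__main__":
    for candidate in (
        RemediationPath("resize", 0.97, 250.0, False),
        RemediationPath("terminate", 0.99, 500.0, True),
        RemediationPath("migrate-to-spot", 0.99, 40.0, False),
    ):
        print(candidate.name, "->", decide(candidate))
```

Defaulting to escalation keeps the autonomous path an explicit allow-list, which matches the conservative posture the framework describes.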

4. Financial Modeling Layer

The financial viability of Agentic FinOps is predicated on accurately modeling the cost of human latency versus the statistical risk of automated error.

Core Equations

1. The Cost of Human Latency ($C_{latency}$):

This equation quantifies the financial waste incurred during the time it takes a human to acknowledge, investigate, and remediate a cloud anomaly.

$$C_{latency} = \sum_{i=1}^{n} \left( \Delta T_{remediation_i} \times \omega_{rate_i} \right)$$

Where:

$\Delta T_{remediation}$ = Time delta between anomaly start and human resolution (in hours).

$\omega_{rate}$ = Excess waste burn rate per hour for resource $i$.
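As a worked illustration of the $C_{latency}$ sum, the sketch below multiplies each anomaly's hours-to-remediate by its hourly waste rate and adds the results. The three anomalies are made-up inputs chosen only to show the arithmetic; `latency_cost` is a hypothetical helper name.

```python
from typing import Iterable, Tuple


def latency_cost(anomalies: Iterable[Tuple[float, float]]) -> float:
    """Sum of (hours until human resolution) x (excess waste in USD/hr)."""
    return sum(hours * rate for hours, rate in anomalies)


if __name__ == "__main__":
    # Hypothetical anomalies: (delta_T in hours, waste rate in USD/hr).
    anomalies = [
        (6.0, 25.0),    # 6 h at $25/hr  = $150
        (48.0, 5.0),    # 48 h at $5/hr  = $240
        (1.5, 100.0),   # 1.5 h at $100/hr = $150
    ]
    print(f"C_latency = ${latency_cost(anomalies):,.2f}")  # $540.00
```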

2. Agentic Expected Value ($EV_{agent}$):

This determines if the autonomous action is financially justifiable by weighing the saved latency cost against the risk-adjusted cost of an automated failure (e.g., accidentally killing a revenue-generating pod).

$$EV_{agent} = (P_{success} \times C_{latency\_saved}) - (P_{failure} \times C_{downtime})$$

Where:

$P_{success}$ = Agent confidence score based on historical execution accuracy for similar resource tags, with $P_{failure}$ taken as $1 - P_{success}$.

$C_{latency\_saved}$ = The projected waste eliminated by immediate execution.

$C_{downtime}$ = The enterprise cost of the downtime an automated failure would cause for the specific workload (cost per minute multiplied by the expected outage duration).
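The $EV_{agent}$ equation can be evaluated directly. The sketch below assumes $P_{failure} = 1 - P_{success}$ and treats $C_{downtime}$ as a total dollar figure for the outage; both function names and the example inputs are hypothetical. Under the same assumption, setting $EV_{agent} = 0$ gives the minimum confidence at which acting pays off: $P_{success} > C_{downtime} / (C_{latency\_saved} + C_{downtime})$.

```python
def agent_expected_value(p_success: float,
                         latency_saved: float,
                         downtime_cost: float) -> float:
    """EV_agent = P_success * C_latency_saved - P_failure * C_downtime."""
    if not 0.0 <= p_success <= 1.0:
        raise ValueError("p_success must be a probability in [0, 1]")
    p_failure = 1.0 - p_success  # assumption: failure is the complement
    return p_success * latency_saved - p_failure * downtime_cost


def break_even_confidence(latency_saved: float, downtime_cost: float) -> float:
    """Smallest P_success for which EV_agent is non-negative."""
    return downtime_cost / (latency_saved + downtime_cost)


if __name__ == "__main__":
    # Hypothetical inputs: $2,000 of waste avoided, $20,000 outage cost.
    ev = agent_expected_value(0.95, 2_000.0, 20_000.0)
    floor = break_even_confidence(2_000.0, 20_000.0)
    print(f"EV_agent is about ${ev:,.0f}")
    print(f"break-even P_success is about {floor:.3f}")
```

With these inputs the expected value is roughly $900 and the break-even confidence is about 0.909, which shows how quickly a large downtime cost pushes the required confidence toward 1.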

3. The Autonomous ROI Ratio ($ROI_{AFR}$):

$$ROI_{AFR} = \frac{\sum (EV_{agent}) - (C_{agent\_compute} + C_{agent\_licensing})}{\text{Total OpEx of Manual FinOps Team Allocation}}$$

A) Sensitivity Analysis Table

This table demonstrates the EBITDA impact across 10,000 compute nodes over an annual cycle based on the FinOps agent's success rate and the average waste burn rate per anomaly.

| Waste Rate (rows) / $P_{success}$ (columns) | Low Case (85%) | Base Case (95%) | High Case (99%) | EBITDA Impact |
| --- | --- | --- | --- | --- |
| Low Waste Rate ($5/hr) | -$125,000 | +$450,000 | +$620,000 | Marginal to Moderate |
| Med Waste Rate ($25/hr) | +$200,000 | +$1,850,000 | +$2,400,000 | Highly Accretive |
| High Waste Rate ($100/hr) | +$850,000 | +$5,200,000 | +$7,800,000 | Transformative |

Decision Threshold: Agentic execution is suspended if rolling $P_{success}$ falls below 90% in any 7-day window.
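One way to enforce this decision threshold is a trailing-window check over logged execution outcomes. The sketch below is an interpretation, not a specification: it reads "any 7-day window" as the most recent 7 days, and it assumes autonomy stays off when there is no execution history at all. Function names are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Iterable, Optional, Tuple

Outcome = Tuple[datetime, bool]  # (execution time, succeeded?)


def rolling_p_success(outcomes: Iterable[Outcome],
                      now: datetime,
                      window: timedelta = timedelta(days=7)) -> Optional[float]:
    """Success rate over the trailing window, or None if it is empty."""
    recent = [ok for ts, ok in outcomes if now - window <= ts <= now]
    if not recent:
        return None
    return sum(recent) / len(recent)


def autonomy_allowed(outcomes: Iterable[Outcome],
                     now: datetime,
                     floor: float = 0.90) -> bool:
    """False suspends autonomous execution and forces escalation."""
    rate = rolling_p_success(outcomes, now)
    # Assumption: no recent history means no evidence, so stay suspended.
    return rate is not None and rate >= floor


if __name__ == "__main__":
    now = datetime(2026, 1, 15)
    history = [(now - timedelta(days=d), ok)
               for d, ok in [(1, True), (2, True), (3, False), (10, False)]]
    print(rolling_p_success(history, now), autonomy_allowed(history, now))
```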

B) Break-Even Formula

The break-even point in operational days ($D_{be}$) for deploying an enterprise FinOps AI Agent architecture (development, specialized LLM API costs, and integration) versus the manual status quo:

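$$D_{be} = \frac{C_{integration}}{\sum (\omega_{rate} \times \Delta T_{human\_latency}) - \sum (\omega_{rate} \times \Delta T_{agent\_latency}) - (C_{LLM\_inference} \times \text{Daily API Calls})}$$

Both sums are taken over one day, so the denominator is the net daily saving after the recurring LLM inference spend, and the one-time integration cost is the only term in the numerator.

Numerical Example: If integration costs $150,000, daily LLM API calls cost $50, daily human waste is $2,500, and daily agent waste is $50, the net daily saving is $2,400 and the break-even is 62.5 days ($150,000 / $2,400).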
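The break-even arithmetic can be checked with a few lines of code. This sketch treats the daily LLM spend as a recurring cost netted against the daily saving, which is an assumption about how the recurring term should be handled; counting one day of LLM spend in the numerator instead gives (150,000 + 50) / 2,450, or about 61.2 days. `break_even_days` is a hypothetical helper name.

```python
def break_even_days(integration_cost: float,
                    daily_llm_cost: float,
                    daily_human_waste: float,
                    daily_agent_waste: float) -> float:
    """Days until cumulative net savings repay the one-time integration cost."""
    net_daily_saving = daily_human_waste - daily_agent_waste - daily_llm_cost
    if net_daily_saving <= 0:
        # The agent never pays for itself under these inputs.
        return float("inf")
    return integration_cost / net_daily_saving


if __name__ == "__main__":
    # Inputs from the numerical example in this section.
    days = break_even_days(150_000, 50, 2_500, 50)
    print(f"break-even after {days} days")  # 150000 / 2400 = 62.5
```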

C) Probability-Weighted Risk Table

Quantifying the financial exposure of agentic infrastructure mutation.

| Scenario | Probability | Financial Impact | Weighted Exposure |
| --- | --- | --- | --- |
| False-Positive Termination (Non-Prod) | 4.5% | $500 (Dev delay) | $22.50 per event |
| False-Positive Termination (Prod - Stateful) | 0.1% | $85,000 (Data recovery/SLA) | $85.00 per event |
| Over-Aggressive Rightsizing (CPU Throttling) | 2.0% | $5,000 (Latency penalty) | $100.00 per event |
| Runaway Agent (Infinite Loop API Calls) | 0.05% | $12,000 (API costs) | $6.00 per event |
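Each weighted exposure in the table is simply probability multiplied by financial impact. The sketch below reproduces the four rows and, as an added assumption, sums them into a single expected loss per remediation event by treating the scenarios as mutually exclusive outcomes.

```python
# (probability, financial impact in USD) taken from the risk table.
SCENARIOS = {
    "False-positive termination (non-prod)": (0.045, 500),
    "False-positive termination (prod, stateful)": (0.001, 85_000),
    "Over-aggressive rightsizing (CPU throttling)": (0.02, 5_000),
    "Runaway agent (infinite loop API calls)": (0.0005, 12_000),
}


def weighted_exposures(scenarios: dict) -> dict:
    """Map each scenario name to probability x impact."""
    return {name: p * impact for name, (p, impact) in scenarios.items()}


if __name__ == "__main__":
    exposures = weighted_exposures(SCENARIOS)
    for name, value in exposures.items():
        print(f"{name}: ${value:,.2f} per event")
    # Assumption: scenarios are mutually exclusive, so exposures add.
    print(f"Total expected exposure: ${sum(exposures.values()):,.2f} per event")
```

Under that assumption the total comes to about $213.50 per event, a figure that can be compared directly against the waste an average remediation eliminates.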

D) Cost-per-Unit Model

For Agentic FinOps, the unit is the Cost Per Autonomous Remediation (CPAR):

$$CPAR = \frac{C_{LLM\_Tokens\_Prompt} + C_{LLM\_Tokens\_Completion} + C_{Function\_Compute}}{Total\_Successful\_Mutations}$$

Threshold: If $CPAR > \$1.50$, the agent logic must be optimized to smaller, task-specific models (e.g., Llama 3 8B) rather than routing all logic through heavy reasoning models.
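A minimal sketch of the CPAR calculation and its $1.50 threshold follows. The monthly cost figures are hypothetical and exist only to show the division; `cpar` and `needs_smaller_model` are illustrative names.

```python
def cpar(prompt_cost: float,
         completion_cost: float,
         function_compute_cost: float,
         successful_mutations: int) -> float:
    """Cost Per Autonomous Remediation in USD."""
    if successful_mutations <= 0:
        raise ValueError("CPAR is undefined without successful mutations")
    total = prompt_cost + completion_cost + function_compute_cost
    return total / successful_mutations


def needs_smaller_model(cpar_value: float, threshold: float = 1.50) -> bool:
    """True when the playbook's threshold says to move to smaller models."""
    return cpar_value > threshold


if __name__ == "__main__":
    # Hypothetical month: $900 prompt, $450 completion, $150 compute,
    # spread over 1,200 successful mutations.
    value = cpar(900.0, 450.0, 150.0, 1_200)
    print(f"CPAR = ${value:.2f}, optimize: {needs_smaller_model(value)}")
```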

5. Operational Architecture Integration

Deploying Agentic FinOps requires a rigorous, zero-trust architecture to prevent the agent from becoming an attack vector or causing systemic outages.

Kubernetes Integration (EKS/GKE):

The agent interfaces with the Kubernetes API server not via raw shell execution, but through constrained Custom Resource Definitions (CRDs). If the agent determines a deployment is vastly over-provisioned based on historical HPA data and Prometheus metrics, it submits a mutation request to a dedicated FinOpsRemediation CRD. An in-cluster operator validates this request against Open Policy Agent (OPA) Gatekeeper rules. For example, OPA will block the agent if the requested replicaCount drops below the mandatory High Availability (HA) threshold of 3 for Tier-1 namespaces.

Multi-Cloud IAM Isolation:

Agentic FinOps must operate under extreme Least Privilege. The agent assumes temporary, strictly bounded IAM roles via AWS STS or GCP Workload Identity.

ReadOnly Phase: The agent assumes an `arn:aws:iam::account:role/FinOps-Agent-Analyze` role to query CloudWatch, Cost Explorer, and Compute Optimizer.

Execution Phase: Once the math validates execution, the agent assumes `arn:aws:iam::account:role/FinOps-Agent-Execute`. This role has explicitly denied actions via Service Control Policies (SCPs), such as `ec2:TerminateInstances` on instances tagged `Environment: Production` and `Data: Stateful`. The agent is only permitted to mutate non-critical or explicitly stateless workloads, and can only apply tags or modify auto-scaling group limits within a 20% variance bound.
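To make the execution-phase guardrails concrete, the sketch below builds an illustrative deny statement for `ec2:TerminateInstances` on instances carrying both tags, and checks a proposed auto-scaling limit against the 20% variance bound. The policy document is an assumption about how such a rule might be written, not the playbook's actual SCP; it uses the standard `aws:ResourceTag/<key>` condition key, and multiple keys under one `StringEquals` operator must all match. `within_variance` is a hypothetical helper.

```python
import json

# Illustrative deny statement (assumed shape, not the author's policy).
DENY_STATEFUL_PROD_TERMINATION = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "DenyTerminateStatefulProduction",
            "Effect": "Deny",
            "Action": "ec2:TerminateInstances",
            "Resource": "arn:aws:ec2:*:*:instance/*",
            "Condition": {
                "StringEquals": {
                    # Both tag conditions must hold for the deny to apply.
                    "aws:ResourceTag/Environment": "Production",
                    "aws:ResourceTag/Data": "Stateful",
                }
            },
        }
    ],
}


def within_variance(current: int, proposed: int, bound_pct: int = 20) -> bool:
    """True if the proposed limit is within +/- bound_pct of the current one.

    Integer arithmetic avoids floating-point edge cases at the boundary.
    """
    return abs(proposed - current) * 100 <= bound_pct * current


if __name__ == "__main__":
    print(json.dumps(DENY_STATEFUL_PROD_TERMINATION, indent=2))
    print(within_variance(10, 12))  # a change of 2 on 10 is exactly 20%
    print(within_variance(10, 13))  # a change of 3 on 10 exceeds the bound
```

Checking the variance bound in the agent as well as in IAM gives two independent layers: the agent refuses to propose an out-of-bounds change, and the policy layer blocks it if the agent's reasoning is wrong.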

AI/ML Workload Governance:

GPU spot instances are highly volatile. The agent continuously monitors spot interruption notices and multi-region GPU spot pricing. When a price spike is detected in us-east-1 for H100s, the agent autonomously cordons the cluster, saves the distributed training checkpoint to S3/GCS, and dynamically re-provisions the Ray cluster in us-west-2 where spot prices are 40% lower, updating the DNS routing layer automatically.

6. Failure Scenarios

Autonomous infrastructure mutation carries inherent systemic risk. The following scenarios quantify the exposure and define the governance prevention layer.

Scenario 1: The "Death Spiral" Rightsizing Loop

Breakdown: The agent detects low CPU utilization and scales down a deployment. The reduced capacity causes request queuing and elevated latency. The monitoring system interprets this latency as a need for more CPU and scales the cluster up. The agent then detects the new "waste" and scales it back down, repeating the cycle.

Financial Exposure: $15,000 to $50,000 per incident in API thrashing, lost revenue from application latency, and degraded customer experience.

Governance Prevention Layer: Implementation of a temporal backoff lock. Once a specific resource ID is mutated by the agent, a cryptographic lock is written to a DynamoDB state table preventing any further agentic mutation on that specific resource for an enforcement window of 72 hours.
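The temporal backoff lock can be sketched with an in-memory table standing in for the DynamoDB state table. This is a simplified illustration under stated assumptions: `BackoffLock` is a hypothetical class, the dictionary is not safe across processes, and a real deployment would need an atomic conditional write in the backing store so two agent instances cannot both acquire the same lock.

```python
import time
from typing import Callable, Dict


class BackoffLock:
    """Per-resource mutation lock with a fixed enforcement window."""

    def __init__(self,
                 window_seconds: float = 72 * 3600,
                 clock: Callable[[], float] = time.time) -> None:
        self._window = window_seconds
        self._clock = clock
        # resource ID -> epoch seconds until which mutation is blocked
        self._locked_until: Dict[str, float] = {}

    def try_acquire(self, resource_id: str) -> bool:
        """Return True and start a new window if the resource is unlocked."""
        now = self._clock()
        if self._locked_until.get(resource_id, 0.0) > now:
            return False  # still inside the enforcement window
        self._locked_until[resource_id] = now + self._window
        return True


if __name__ == "__main__":
    lock = BackoffLock()
    print(lock.try_acquire("i-0abc"))  # first mutation is allowed
    print(lock.try_acquire("i-0abc"))  # second attempt is blocked
```

Injecting the clock makes the 72-hour window testable without waiting, and because the lock is keyed by resource ID the agent remains free to act on unrelated resources.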

Scenario 2: Catastrophic Stateful Termination

Breakdown: An LLM hallucination in the agent's reasoning chain bypasses heuristic checks and terminates an underutilized but critical master database node during an off-peak maintenance window.

Financial Exposure: $250,000+ per event in SLA penalties, data recovery efforts, and brand damage.

Governance Prevention Layer: Immutable infrastructure tagging combined with SCP-level explicit Deny rules. The agent's execution role mathematically cannot execute `Delete` or `Terminate` commands against any resource lacking a specific `Agentic-Mutable: True` cryptographic tag verified by a secondary automated pipeline.

Scenario 3: API Quota Exhaustion

Breakdown: In response to a widespread anomaly (e.g., a massive misconfiguration deployed by a junior engineer), the agent spawns thousands of parallel remediation threads, exhausting the AWS/GCP API rate limits for the entire enterprise account.

Financial Exposure: $50,000+ per hour due to the inability of other critical production auto-scalers to function during the API blackout.

Governance Prevention Layer: The agent is deployed within a dedicated AWS account or GCP project, and its execution engine is throttled by a token-bucket algorithm limiting API write requests to 50 per minute. If the remediation queue exceeds this limit, the agent stops and escalates to a human operator as a catastrophic-failure event.
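A token bucket limiting writes to 50 per minute can be sketched as follows. The class name, the burst capacity of 50, and the injected clock are assumptions made for illustration; the text only fixes the 50-per-minute rate.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows up to `capacity` writes in a burst, refilled at a steady rate."""

    def __init__(self,
                 rate_per_minute: float = 50,
                 capacity: float = 50,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._rate = rate_per_minute / 60.0   # tokens added per second
        self._capacity = float(capacity)
        self._tokens = float(capacity)        # start with a full bucket
        self._clock = clock
        self._last = clock()

    def allow(self) -> bool:
        """Consume one token if available; otherwise refuse the write."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill for the elapsed time, never beyond capacity.
        self._tokens = min(self._capacity, self._tokens + elapsed * self._rate)
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False


if __name__ == "__main__":
    bucket = TokenBucket()
    allowed = sum(bucket.allow() for _ in range(60))
    print(f"{allowed} of 60 immediate write attempts allowed")
```

A refused write is the natural signal for escalation: the caller can count refusals and hand the remaining queue to a human once the backlog exceeds what the bucket can drain.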

7. Board-Level Translation Layer

For C-suite and board-level executives, Agentic FinOps must be justified not as a technical experiment, but as an automated margin defense mechanism.

EBITDA Delta Modeling: Every dollar of cloud waste eliminated by zero-latency agentic execution flows directly to EBITDA. If an enterprise spends $50M annually on cloud, and 15% ($7.5M) is ephemeral waste that takes human teams an average of 4 days to catch and remediate, the agentic transition compresses that latency to 4 minutes. This recovers approximately $6.5M of that waste in raw EBITDA, representing a measurable defense of gross margins against scaling cloud costs.

Gross Margin Defense: As AI token sprawl and inference compute costs skyrocket, unit economics degrade. Agentic FinOps ensures that the Cost of Goods Sold (COGS) for SaaS products remains tightly correlated to actual customer utilization, autonomously terminating idle compute the millisecond a tenant ceases activity.

Capital Allocation Signal: By relying on mathematical certainty for operational efficiency, capital previously allocated to expanding offshore FinOps analyst teams can be redirected toward core product engineering.

Risk-Adjusted ROI Formula: Board members must see the risk factored into the return.

$$ROI_{\text{Risk-Adjusted}} = \frac{\text{Projected Savings} - (\text{Probability of Catastrophic Outage} \times \text{Cost of Outage})}{\text{Total Cost of Agentic Implementation}}$$

If this ratio exceeds 4.0x, the project is a mandatory strategic imperative.
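The risk-adjusted ROI formula and its 4.0x threshold reduce to a short calculation. All four inputs in the example are hypothetical figures chosen to show the mechanics, and the function names are illustrative.

```python
def risk_adjusted_roi(projected_savings: float,
                      p_catastrophic_outage: float,
                      outage_cost: float,
                      implementation_cost: float) -> float:
    """(Savings - P(outage) x Cost(outage)) / implementation cost."""
    if implementation_cost <= 0:
        raise ValueError("implementation_cost must be positive")
    expected_loss = p_catastrophic_outage * outage_cost
    return (projected_savings - expected_loss) / implementation_cost


def meets_board_threshold(roi: float, threshold: float = 4.0) -> bool:
    """True when the ratio exceeds the playbook's 4.0x bar."""
    return roi > threshold


if __name__ == "__main__":
    # Hypothetical: $5.0M savings, 2% chance of a $2.5M outage,
    # $1.0M total implementation cost.
    roi = risk_adjusted_roi(5_000_000, 0.02, 2_500_000, 1_000_000)
    print(f"risk-adjusted ROI is about {roi:.2f}x, "
          f"clears threshold: {meets_board_threshold(roi)}")
```

In this example the expected outage loss is $50,000, so the ratio lands near 4.95x; raising the outage probability to 40% would drop it to 4.0x and fail the strict "exceeds" test.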

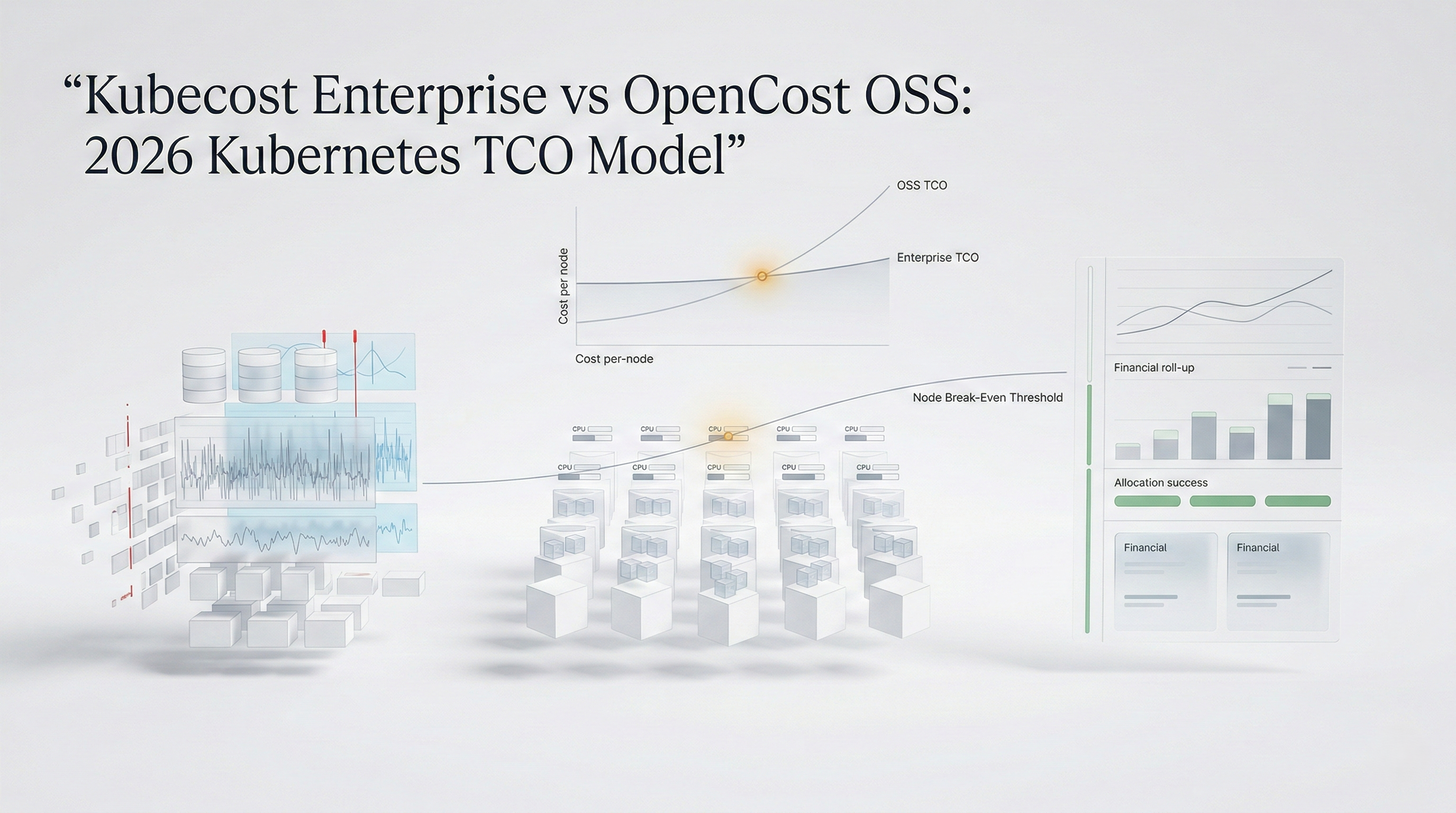

8. Data Visualization Suggestions

To properly contextualize this framework in a presentation or dashboard, utilize the following visualizations:

A dual-line graph showing cost accumulating over time. The human line climbs steadily over days; the agent line flatlines within minutes.

A 2D heatmap plotting Cost of Anomaly on the Y-axis and Agent Confidence Score on the X-axis, color-coded red (Escalate to Human), yellow (Require Human Approval), and green (Execute Autonomously).

An architectural diagram showing the FinOps agent securely inside a VPC, utilizing STS to assume strictly scoped, temporary execution roles across different multi-cloud boundaries.

A scatter plot showing various agentic failure scenarios, with bubble size representing financial exposure and placement representing probability vs severity.

A comparison of the fully loaded human cost per ticket (SRE salary/time) versus the LLM token API cost for an automated remediation.

9. Why Analyst-Style Summaries Fail at Financial Precision

Traditional technology analyst reports discuss "AI for Cloud Cost Management" using vague narrative structures. They claim that "organizations should consider leveraging AI to optimize costs" without providing the operational mathematics required to actually grant an LLM infrastructure access.

Narrative summaries fail because they cannot be executed in code. An SRE cannot take a Gartner or Forrester report and translate it into an AWS IAM policy or a Python execution script. Furthermore, analyst reports ignore the critical threshold mathematics: exactly when is an AI model reliable enough to terminate a server?

Equation-backed modeling, as demonstrated in the AFR framework, replaces qualitative guesswork with quantitative certainty. By calculating the Agentic Expected Value ($EV_{agent}$) and establishing a rigid Break-Even point, infrastructure leaders can programmatically define risk. You cannot govern a cloud environment with prose; you govern it with explicit thresholds, probability distributions, and cryptographic identity boundaries.

10. Strategic Conclusion

Agentic AI FinOps is the inevitable end-state of cloud financial operations. The sheer volume, velocity, and complexity of ephemeral infrastructure and GPU allocations in 2026 outstrip human capacity to monitor, evaluate, and act. The financial latency inherent in traditional ticketing systems and human-in-the-loop remediation pipelines creates an unacceptable drag on gross margins and EBITDA.

Transitioning to this model is not fundamentally an AI challenge; it is an architecture and risk management challenge. The technology to allow an LLM to generate Terraform code or Kubernetes manifests exists. The differentiator between an enterprise that successfully leverages this to drive down TCO and one that causes self-inflicted production outages is the strict adherence to the mathematical frameworks and governance boundaries outlined in this playbook.

Enterprises must begin by scoping agents to read-only visibility to establish baseline $P_{success}$ metrics. From there, they must cautiously expand into autonomous execution strictly within non-production environments or stateless, highly available worker tiers. By enforcing immutable tagging, rigid IAM boundaries, and programmatic temporal backoffs, the risk of agentic drift is mitigated, unlocking zero-latency cost control.

11. Implementation Readiness Checklist

Enforce FOCUS 1.2 Billing Standards: Ensure all multi-cloud billing exports are normalized to the FOCUS 1.2 specification to allow the AI agent a unified schema for financial reasoning.

Establish the Agentic Expected Value ($EV_{agent}$) Baseline: Calculate the historical cost of manual remediation latency over the last 6 months to establish the baseline target for agentic savings.

Implement Immutable Resource Tagging: Deploy a global policy (e.g., Azure Policy, AWS SCP) mandating `Agentic-Mutable: True/False` tags on all infrastructure-as-code deployments.

Deploy the Decision Engine Vector Database: Provision a low-latency vector DB to store historical FinOps anomalies, agent logic chains, and infrastructure state data for continuous LLM context grounding.

Configure Multi-Cloud OIDC Integration: Establish secure Workload Identity federation using OpenID Connect (OIDC) to eliminate long-lived service account keys for the FinOps Agent.

Build the Agentic "Dry Run" Pipeline: Run the agent in "shadow mode" for 30 days. Log the agent's proposed API calls and measure them against actual human SRE actions to calculate the initial $P_{success}$ metric.

Define IAM Service Control Policies (SCPs): Create the explicit network of `Deny` rules at the organizational root level to physically prevent the agent's assumed role from modifying `Tier-0` databases or network configurations.

Implement Temporal Backoff Logic: Code a mandatory DynamoDB state-lock mechanism preventing the agent from executing multiple mutations on the same cloud resource within a 72-hour window.

Establish OPA Gatekeeper Rules in K8s: Deploy Rego policies in all Kubernetes clusters restricting the agent's ability to modify `HorizontalPodAutoscaler` minimum thresholds below High Availability standards.

Define the Automated Kill Switch: Build an immediate, out-of-band circuit breaker (e.g., a Slack command `/agent-kill`) that instantly revokes the agent's IAM roles and stops all autonomous mutation globally within 3 seconds.
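For the shadow-mode step in the checklist, the initial $P_{success}$ can be estimated as the share of anomalies where the agent's proposed action matched what the human SRE actually did. This is one possible scoring rule, stated as an assumption: it counts only exact action matches on anomalies both sides handled, and the anomaly IDs and action labels are hypothetical.

```python
from typing import Dict, Optional


def shadow_p_success(proposed: Dict[str, str],
                     actual: Dict[str, str]) -> Optional[float]:
    """Agreement rate between agent proposals and human actions.

    Both arguments map anomaly ID -> action label. Anomalies seen by only
    one side are ignored; None is returned when nothing overlaps.
    """
    shared = proposed.keys() & actual.keys()
    if not shared:
        return None
    matches = sum(1 for key in shared if proposed[key] == actual[key])
    return matches / len(shared)


if __name__ == "__main__":
    agent_log = {"a1": "resize", "a2": "terminate", "a3": "noop", "a4": "resize"}
    sre_log = {"a1": "resize", "a2": "resize", "a3": "noop", "a5": "terminate"}
    print(shadow_p_success(agent_log, sre_log))  # 2 matches out of 3 shared
```

Exact-match scoring is deliberately strict; a team might instead credit the agent when its action was cheaper and equally safe, but that requires a human review step this sketch does not model.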
