1. Executive Synthesis

The rapid integration of Generative AI into commercial SaaS platforms has precipitated a silent, catastrophic collapse of traditional software unit economics. For two decades, SaaS pricing models relied on deterministic compute. If a user paid $30 per month for a project management tool, the underlying Cost of Goods Sold (COGS)—a few database queries, minor compute cycles, and network egress—remained relatively static, predictable, and incredibly low (typically 10-15% of revenue).

Generative AI fundamentally breaks this paradigm by introducing probabilistic, highly volatile compute costs. When a SaaS platform integrates a feature like "Summarize this 50-page document" or "Auto-generate project plans," the user initiates an API call to a Large Language Model (LLM) that bills per input and output token. A single heavy user on a $30/month subscription can easily consume $150 in OpenAI or Bedrock API calls over 30 days, resulting in a -400% gross margin on that specific account. By 2026, "Token Sprawl"—the unchecked proliferation of unregulated LLM API calls initiated by end-users—is the single greatest threat to SaaS enterprise valuation.

To survive, SaaS leaders must abandon traditional flat-rate pricing and static infrastructure architectures. You cannot defend gross margins if your application hardcodes every prompt to the most expensive, frontier model (e.g., GPT-4 or Claude 3.5 Sonnet) regardless of the complexity of the task. Enterprises must implement the Inference Margin Defense (IMD) Model.

The IMD mandates that the engineering architecture becomes financially self-aware. It requires the deployment of an AI Gateway (a routing layer) that dynamically evaluates the complexity of a user prompt, checks the user's current token profitability, and routes the request to the most economically viable model. Simple classification tasks are silently routed to virtually free, internally hosted small language models (e.g., Llama 3 8B), reserving the expensive API calls exclusively for complex reasoning tasks or premium-tier users. This playbook provides the financial mathematics to enforce dynamic feature throttling, redesign AI SaaS pricing, and restore the 80% gross margins that the public markets demand.

2. Market Gap & Search Intent Failure Analysis

Current advice regarding "SaaS AI pricing strategy" is dominated by simplistic product marketing blogs. A search yields advice such as "charge an add-on fee for AI features" or "pass the API costs directly to the consumer." This advice is financially unviable in a competitive 2026 landscape. Consumers actively resist nickel-and-diming consumption models for basic B2B software, and flat-rate "AI Add-ons" ($10/mo extra) frequently fail to cover the cost of power users, leaving the margin vulnerability intact.

The market gap is the absence of architectural and mathematical integration between product pricing and AI infrastructure routing. Analyst summaries fail to provide the algorithms required to throttle a user before they become unprofitable. They ignore the unit economics of the Vector Database (RAG architectures), focusing solely on the LLM token cost, thereby under-reporting the true AI COGS. This playbook resolves this by providing the precise equations needed to calculate Token-Adjusted COGS and establishing the automated governance required to dynamically degrade service quality (model shifting) to preserve profitability without churning the customer.

3. Core Strategic Framework

The enterprise must adopt the Inference Margin Defense (IMD) Model. This framework strictly links user subscription revenue to their dynamic token consumption, utilizing an intelligent gateway to manipulate infrastructure routing based on real-time profitability thresholds.

Implementation Protocol:

Token Cost Aggregation: Instrument the SaaS application to append a

Tenant_IDandSubscription_Tierto every single LLM API call or self-hosted inference request.Calculate Token-Adjusted COGS: Continuously aggregate the blended cost of prompt tokens, completion tokens, and vector database queries per tenant.

Deploy the AI Gateway: Implement a routing proxy (e.g., LiteLLM or an internal API gateway) that sits between the application and the foundational models.

Execution Decision Matrix:

If Tenant Margin $> 60\%$ AND Task is Complex, route to Premium API (GPT-4 / Claude Opus).

If Tenant Margin $< 30\%$ (Danger Zone), dynamically downgrade routing to an open-source, cheaper model (e.g., Llama 3 / Haiku) for all background tasks.

If Tenant Token Consumption exceeds the absolute $L_{throttle}$ (Break-even), immediately return an HTTP 429 (Rate Limit) for AI-specific features until the billing cycle resets, while keeping standard deterministic SaaS features active.

4. Financial Modeling Layer (MANDATORY)

Defending AI margins requires real-time algorithmic execution of the following equations.

Core Equations

1. Generative AI COGS per Tenant ($COGS_{ai}$):

Calculates the exact infrastructure cost of providing AI features to a specific user or tenant over a billing period.

$$COGS_{ai} = \sum_{i=1}^{n} \left( (T_{prompt\_i} \times P_{in}) + (T_{completion\_i} \times P_{out}) \right) + \left( Q_{vector} \times P_{query} \right)$$

Where:

$T_{prompt}$ = Number of input tokens for request $i$.

$P_{in}$ = Price per input token of the selected model.

$T_{completion}$ = Number of output tokens generated.

$P_{out}$ = Price per output token.

$Q_{vector}$ = Number of Retrieval-Augmented Generation (RAG) vector database queries executed.

$P_{query}$ = Amortized cost per vector query.

2. Token-Adjusted Gross Margin ($GM_{tenant}$):

Determines the true profitability of a specific customer after accounting for both traditional cloud infrastructure and probabilistic AI consumption.

$$GM_{tenant} = \left( \frac{R_{mrr} - (COGS_{standard} + COGS_{ai})}{R_{mrr}} \right) \times 100$$

Where:

$R_{mrr}$ = Monthly Recurring Revenue from the tenant.

$COGS_{standard}$ = Cost of standard deterministic hosting (EC2, S3, Postgres).

3. The Throttle/Degradation Limit ($L_{throttle}$):

Calculates the exact token budget a user is allowed to consume before the system mathematically enforces margin protection.

$$L_{throttle\_tokens} = \frac{R_{mrr} \times (1 - Margin_{target\_percentage}) - COGS_{standard}}{P_{blended\_token\_rate}}$$

A) Sensitivity Analysis Table

This table models the Gross Margin of a standard $50/month SaaS subscription based on the volume of AI features utilized and the routing strategy employed by the engineering team.

Variable (Tenant AI Usage) | Static Premium Routing (GPT-4 Only) | Dynamic IMD Routing (GPT-4 + Llama 3) | Resulting Margin Impact |

Light User (100k tokens/mo) | $48.00 Profit (96% Margin) | $49.00 Profit (98% Margin) | Safe in both models |

Avg User (1M tokens/mo) | $25.00 Profit (50% Margin) | $40.00 Profit (80% Margin) | IMD Preserves SaaS Economics |

Heavy User (5M tokens/mo) | -$75.00 Profit (-150% Margin) | $15.00 Profit (30% Margin) | Static Routing Destroys Company |

Decision Threshold: Relying exclusively on premium, proprietary LLM APIs for all application features guarantees negative gross margins on heavy users. Dynamic routing is a mathematical necessity.

B) Break-Even Formula

The API Break-Even Threshold ($V_{api\_be}$) defines the maximum volume of API-driven tokens a cohort can consume before building and hosting a custom open-source model becomes financially mandatory.

$$V_{api\_be} = \frac{CapEx_{model\_training} + (C_{gpu\_hosting\_monthly} \times 12)}{(P_{api\_out} \times 12)}$$

Numerical Example: If fine-tuning a custom Llama 3 model costs $50,000, and hosting it on AWS EC2 GPUs costs $10,000/mo ($120,000/yr), the annual self-hosted TCO is $170,000. If the OpenAI API costs $0.015 per 1k output tokens. The break-even volume is 11.3 Billion tokens per year. If your user base generates more than 11.3 Billion tokens annually, you are actively losing money by not bringing the model in-house.

C) Probability-Weighted Risk Table

Quantifying the operational and financial risks of AI SaaS features.

Scenario | Probability | Financial Impact | Weighted Exposure |

Runaway Automated API Loop (Scripting bug) | 5.0% | $25,000 (Unexpected API bill) | $1,250 per month |

Heavy User Concentration (10% of users consume 80%) | 45.0% | $40,000 (Margin destruction) | $18,000 per month |

Prompt Injection Attack (Malicious token drain) | 12.0% | $5,000 (Wasted compute) | $600 per month |

Cloud Provider GPU Shortage (Self-hosted goes offline) | 18.0% | $15,000 (Failback to premium API) | $2,700 per month |

D) Cost-per-Unit Model

The central unit of measurement for AI-integrated SaaS is the Cost Per Generative Transaction (CPGT):

$$CPGT = \frac{COGS_{ai\_monthly}}{Total\_AI\_Feature\_Invocations}$$

Threshold: If $CPGT$ exceeds $0.05 per user click, the feature is fundamentally unscalable under a flat-rate subscription. Product engineering must implement UI friction (e.g., removing auto-summarize on load, requiring explicit user clicks) to reduce passive invocations.

5. Operational Architecture Integration

The AI Gateway Routing Layer:

To execute the IMD framework, direct API calls from the frontend or backend application to OpenAI/Anthropic must be strictly prohibited. The architecture mandates a centralized AI Gateway (e.g., using open-source tools like LiteLLM or an internal Rust-based proxy). The application sends a generic request to the Gateway: generate_summary(text). The Gateway queries the internal FinOps database to check the user's $GM_{tenant}$ (Gross Margin). If the user is profitable, the Gateway forwards the request to the expensive claude-3-opus API. If the user is near the $L_{throttle}$ limit, the Gateway silently translates the request and routes it to an internally hosted, highly quantized Mistral-8B endpoint running on cheap EC2 instances, gracefully degrading the output quality to preserve the financial integrity of the account.

Asynchronous RAG & Vector Database FinOps:

Retrieval-Augmented Generation (RAG) introduces massive hidden costs. Every time a user asks a question, the system queries a Vector Database (e.g., Pinecone, Milvus) before hitting the LLM. If not architected correctly, high-frequency RAG queries will destroy margins through database compute overhead. Architecture must enforce semantic caching. If User A asks "What is the Q3 policy update?", the vector embeddings and the resulting LLM answer are cached in Redis. When User B asks a semantically similar question, the system serves the cached answer, bypassing both the Vector DB compute and the LLM API cost entirely, achieving a $COGS_{ai}$ of $0.00.

Self-Hosted Inference Clusters (vLLM on Kubernetes):

When token volume crosses the $V_{api\_be}$ break-even point, enterprises must deploy their own inference architecture. This requires running frameworks like vLLM on Kubernetes clusters backed by Spot GPUs (e.g., NVIDIA L4s or A10Gs). Because inference is stateless, it is the perfect workload for heavily discounted public cloud Spot instances, slashing the effective Cost Per Generative Transaction (CPGT) by up to 80% compared to managed APIs.

6. Failure Scenarios

Scenario 1: The "Auto-Generate" Death Spiral

Breakdown: A SaaS platform releases a feature that automatically generates a 500-word LLM summary for every new email that enters a user's inbox. A power user receives 1,000 automated system emails a day. The SaaS platform fires 1,000 API calls per day without the user even logging in.

Financial Exposure: The user pays $20/month but generates $400/month in passive OpenAI API costs, destroying the profitability of 20 other normal users.

Governance Prevention Layer: Passive Invocation Ban. FinOps must enforce a rigid product rule: expensive generative API calls can only be triggered by synchronous, explicit user actions (e.g., clicking a "Summarize" button). Asynchronous, loop-driven generative features must be strictly limited to virtually free, self-hosted small models.

Scenario 2: The Flat-Rate AI Add-On Trap

Breakdown: Sales leadership insists on charging a flat "$10/month AI Add-on" for unlimited generative features to boost MRR. They assume average usage will offset heavy usage. However, "AI Power Users" self-select into the tier, operating complex RAG queries 8 hours a day. The $10 fee covers less than 3 days of their token consumption.

Financial Exposure: Negative gross margins scaling linearly with the adoption of the "premium" product tier.

Governance Prevention Layer: Soft-Limits and Margin Circuit Breakers. The product must possess dynamic throttling limits ($L_{throttle}$). The Terms of Service must state "Fair Use," and the AI Gateway must automatically enforce a rate limit or graceful degradation to a cheaper model the millisecond the specific user's $COGS_{ai}$ exceeds $8.00.

Scenario 3: Untracked Token Sprawl

Breakdown: Engineering teams embed API keys directly into various microservices. They deploy 15 different generative features across the platform. At the end of the month, the CFO receives a $150,000 Anthropic bill but cannot attribute a single dollar of it to a specific feature, customer, or subscription tier.

Financial Exposure: Total loss of unit economic visibility, making it impossible to adjust pricing, discontinue unprofitable features, or calculate COGS.

Governance Prevention Layer: Mandatory Telemetry Injection. The AI Gateway drops any outbound LLM request that does not contain a valid

feature_idandtenant_idin the metadata payload. All token consumption must be synchronously written to the FOCUS billing data lake for real-time FinOps analysis.

7. Board-Level Translation Layer

EBITDA Delta Modeling: Left unchecked, Generative AI features act as a structural tax on SaaS EBITDA. By implementing the IMD framework and dynamic routing, an enterprise can compress AI COGS by 60-70%. For a $50M ARR SaaS business, capping runaway token sprawl prevents a projected 15% erosion in EBITDA margin, preserving tens of millions in enterprise valuation.

Gross Margin Defense: The public markets value SaaS companies at high multiples precisely because they traditionally possess 80%+ gross margins. If AI compute costs drag gross margins down to 55%, the company will be re-valued as a traditional IT services business, crushing the stock price. The AI Gateway and Token-Adjusted COGS formulas are the mathematical defense mechanisms against this valuation collapse.

Capital Allocation Signal: Reaching the $V_{api\_be}$ (Break-Even) point signals to the board that the company must shift capital from paying OpEx to proprietary API vendors toward investing in internal ML engineering capabilities and CapEx/Cloud-reserved GPU inference infrastructure.

Risk-Adjusted ROI Formula:

$$ROI_{imd} = \frac{\text{Recovered Negative Margins from Heavy Users}}{\text{Engineering Cost to Build AI Gateway} + C_{gateway\_compute}}$$

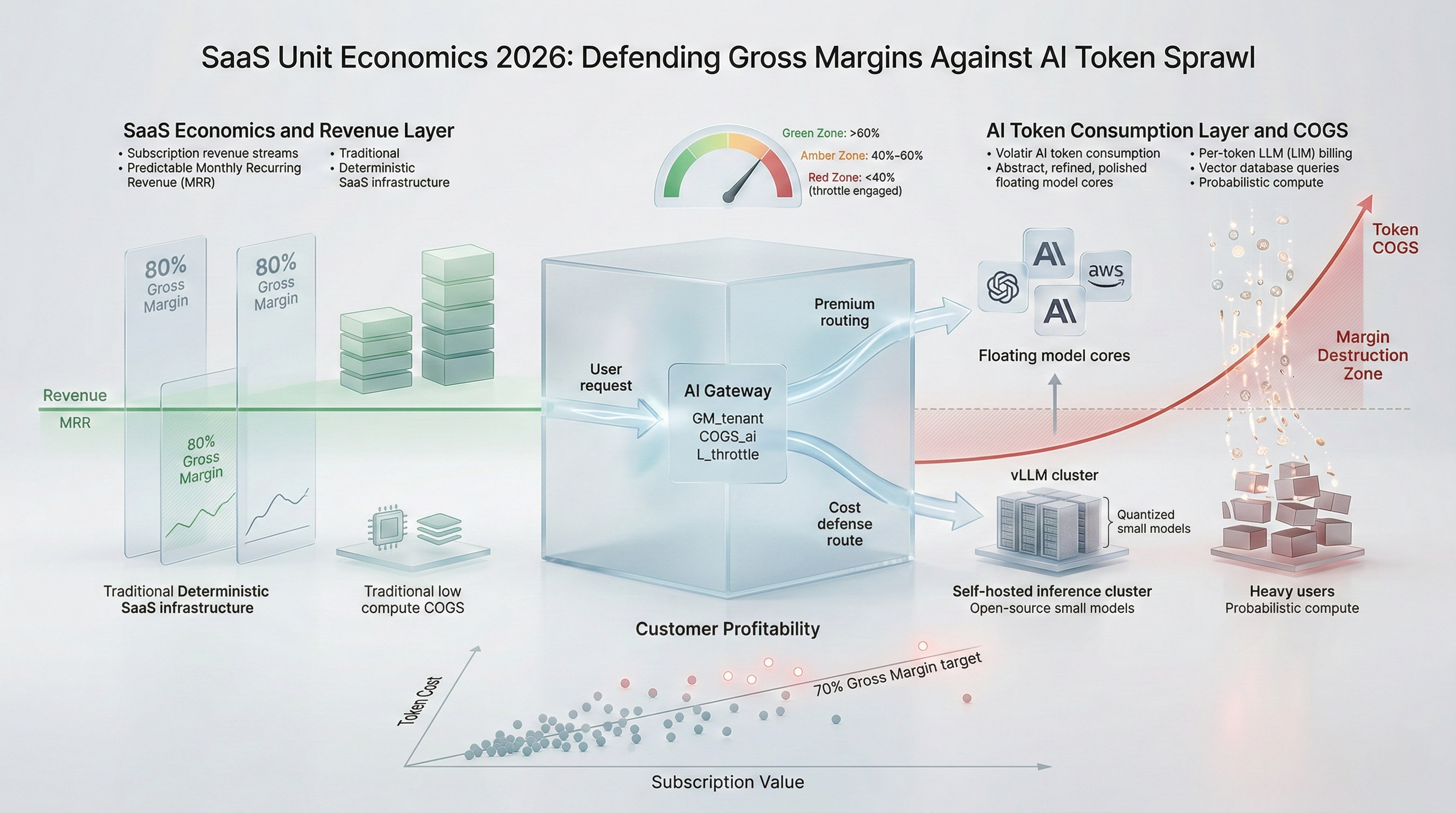

8. Data Visualization Suggestions

A line chart showing Monthly Recurring Revenue (MRR) as a flat horizontal line, while the Token COGS line curves sharply upwards based on user consumption. The area where COGS crosses above MRR is shaded red, labeled "Margin Destruction Zone."

A network diagram showing a user request hitting the AI Gateway, querying a "Margin DB," and dynamically routing either "Up" to the expensive GPT-4 API or "Down" to a cheap, self-hosted Llama-3 EC2 cluster.

A flowchart showing Request 1 hitting the LLM API (high cost) and storing the vector in Redis. Request 2 checks Redis, finds a match, and returns the answer directly (zero LLM cost).

A scatter plot where each dot is a customer. X-axis is Subscription Value, Y-axis is Total AI Token Cost. A diagonal line represents the 70% Gross Margin target. Dots above the line are financially toxic accounts requiring immediate throttling.

A classic break-even graph. The steep line represents paying per-token API costs. The flatter line starting higher on the Y-axis represents the fixed cost of self-hosting a model. The intersection point dictates the infrastructure pivot.

9. Why Analyst-Style Summaries Fail at Financial Precision

When software analysts recommend that "SaaS providers must integrate AI to stay competitive, but should carefully monitor usage to avoid cost overruns," they are providing useless platitudes. A platitude cannot execute a rate limit on a runaway Python script.

Narrative advice fails because it relies on human-in-the-loop review to monitor usage. If a FinOps team reviews the cloud bill at the end of the month to "monitor usage," the financial damage has already been finalized on the provider's invoice. Equation-backed modeling, specifically the implementation of the $L_{throttle}$ limit integrated into the AI Gateway, replaces retrospective monitoring with real-time, algorithmic financial enforcement. By calculating the exact token limit that sustains an 80% margin and coding that equation directly into the API routing logic, the enterprise mathematically guarantees its unit economics. You do not monitor token sprawl; you architect physical barriers against it.

10. Strategic Conclusion

The integration of Generative AI into SaaS applications represents the most severe threat to software unit economics in a generation. The shift from deterministic database queries to probabilistic, per-token API consumption fundamentally destroys the financial viability of unlimited, flat-rate subscription models. If enterprises allow end-users to dictate the volume of expensive LLM API calls without stringent architectural friction, gross margins will evaporate.

To survive the AI margin crush of 2026, SaaS leadership must violently restructure their infrastructure and product architectures. Engineering cannot be permitted to hardcode direct API calls to premium frontier models. The implementation of an intelligent, mathematically governed AI Gateway is a non-negotiable survival requirement. By employing the Inference Margin Defense (IMD) Model, the SaaS platform becomes financially self-regulating. It continuously calculates the Token-Adjusted Gross Margin ($GM_{tenant}$) of every user in real-time.

When a user is highly profitable, they receive the highest quality AI outputs. When a user approaches their break-even threshold, the system gracefully degrades their service, routing their prompts to vastly cheaper, self-hosted open-source models, or executing strict rate limits. This strategy, combined with aggressive semantic caching and UI friction to prevent passive API invocations, ensures that the SaaS platform can offer cutting-edge generative capabilities without sacrificing the 80% gross margins that define enterprise software valuations.

11. Implementation Readiness Checklist

Mandate Metadata Tagging: Force engineering to rewrite all LLM API invocation payloads to strictly include

Tenant_ID,Subscription_Tier, andFeature_ID.Deploy the AI Proxy Gateway: Implement LiteLLM, Kong, or a custom gateway to sit between all application microservices and the external AI foundational models.

Calculate $COGS_{ai}$ Baseline: Run a 14-day analysis of current token consumption to identify the top 5% of users who are driving 80% of the generative AI costs.

Implement Semantic Caching: Deploy a Redis or specialized vector caching layer in front of the AI Gateway to intercept and serve repeat prompts without invoking the LLM.

Remove Passive Invocations: Audit the product UI to eliminate any generative AI features that run automatically in the background without explicit, synchronous user intent.

Code the $L_{throttle}$ Logic: Integrate the Token-Adjusted Gross Margin formula into the Gateway, setting a hard circuit breaker that reroutes or blocks traffic when an account margin drops below 40%.

Calculate the In-House Break-Even ($V_{api\_be}$): Run the mathematics to determine exactly how many billions of tokens your platform must consume before building a custom Llama 3/Mistral cluster becomes cheaper than OpenAI.

Deploy Small Language Models (SLMs): Provision cheap, heavily quantized (INT4/INT8) open-source models on Spot instances to handle all simple tasks (e.g., entity extraction, basic summarization) to bypass premium API costs.

Update Terms of Service: Legally protect the business by introducing strict "Fair Use" clauses for AI feature consumption, explicitly granting the platform the right to rate-limit power users.

Align Pricing with Token Tiers: Restructure SaaS pricing tiers so that higher subscription levels explicitly grant higher internal token budgets, forcing power users to fund their own compute.

Stop guessing where your Kubernetes budget is going. Schedule a demo here to explore Kubernetes cost monitoring with Cloud Atler.