Most modern systems are not just about writing code anymore. They are about moving work reliably across services, data flows, and environments. Whether you are processing data, deploying software, or coordinating microservices, the way tasks are orchestrated shapes everything: performance, reliability, cost, and even developer experience.

However, teams often adopt orchestration models without fully understanding their trade-offs. Some lean toward event-driven pipelines because they feel flexible and scalable. Others prefer DAG-based orchestration because it brings structure and predictability. Both approaches solve real problems, but they behave very differently at scale, under failure, and as complexity grows.

So, the real question is not which one is better, but rather which one fits the system you are building and where it can fail you.

Understanding Event-Driven Pipelines

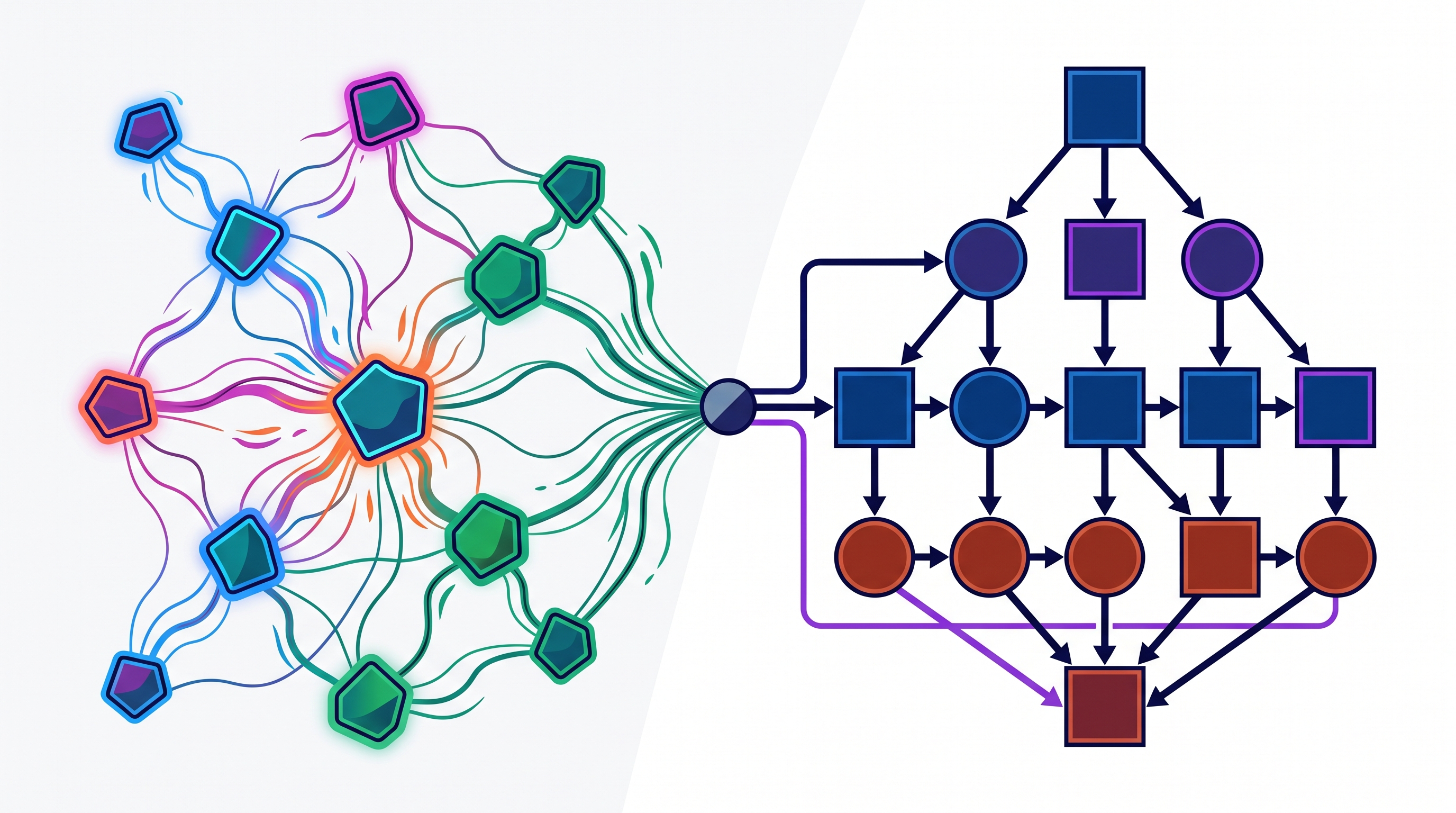

Event-driven pipelines operate on a simple idea: things happen because something else happened. Instead of following a predefined sequence, tasks are triggered by events such as a file upload, a message in a queue, or a service emitting a signal.

This model is inherently reactive. When an event occurs, it triggers downstream actions, which may in turn generate more events. Over time, this creates a chain, or more accurately, a web of loosely connected processes.

Because of this, event-driven systems are highly flexible. They allow components to operate independently, scale dynamically, and respond in real time. However, this flexibility comes with complexity. Since there is no single, predefined path, understanding the full flow of execution becomes challenging.

In practice, this means that while event-driven pipelines are excellent for responsiveness and scalability, they can be difficult to reason about, especially when debugging or tracing failures.
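To make the reactive model concrete, here is a minimal sketch of an in-process event bus. It is an illustration under stated assumptions, not a production design: the `EventBus` class, the event names (`file.uploaded`, `file.validated`), and the handlers are all hypothetical, and a real system would typically put a message broker where the in-memory queue is.

```python
from collections import defaultdict, deque


class EventBus:
    """Toy in-process event bus (hypothetical; stands in for a real broker)."""

    def __init__(self):
        self._handlers = defaultdict(list)  # event name -> list of handlers
        self._queue = deque()               # pending (name, payload) pairs

    def subscribe(self, name, handler):
        self._handlers[name].append(handler)

    def publish(self, name, payload):
        # Publishing only enqueues the event; handlers run during drain().
        self._queue.append((name, payload))

    def drain(self):
        """Deliver events until the queue is empty; return names in delivery order."""
        delivered = []
        while self._queue:
            name, payload = self._queue.popleft()
            delivered.append(name)
            for handler in self._handlers.get(name, []):
                handler(payload)
        return delivered


bus = EventBus()
thumbnails = []


def on_file_uploaded(payload):
    # Handling one event emits another; this is how the web of processes forms.
    bus.publish("file.validated", {"path": payload["path"]})


def on_file_validated(payload):
    thumbnails.append(payload["path"] + ".thumb")


bus.subscribe("file.uploaded", on_file_uploaded)
bus.subscribe("file.validated", on_file_validated)

bus.publish("file.uploaded", {"path": "report.pdf"})
delivery_order = bus.drain()
print(delivery_order)  # ['file.uploaded', 'file.validated']
print(thumbnails)      # ['report.pdf.thumb']
```

Notice that nothing in the code states the overall flow. The sequence only emerges from which handlers happen to be subscribed, which is exactly why these systems are flexible and also why they are hard to trace.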

Understanding DAG-Based Orchestration and Its Structured Nature

DAG-based orchestration, on the other hand, is built on structure. A directed acyclic graph (DAG) defines a set of tasks and the dependencies between them. Each node represents a task, and each edge states that one task must finish before another can start. Because the graph contains no cycles, a valid execution order always exists.

Because the flow is predefined, execution becomes predictable. You know what runs, which tasks must finish before others start, and under what conditions. This makes DAG-based systems easier to debug, monitor, and optimize.

However, this structure also introduces rigidity. Changes to workflows often require updating the DAG itself, and handling dynamic or real-time events can be less straightforward. While DAGs excel in batch processing and well-defined workflows, they can struggle in environments that demand high adaptability.

In essence, DAG-based orchestration prioritizes clarity and control, sometimes at the cost of flexibility.
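As a small illustration of the structure described above, the sketch below declares a DAG as a plain dictionary and derives an execution order from it using `graphlib.TopologicalSorter` from the Python standard library (Python 3.9+). The task names and the `execution_order` and `has_cycle` helpers are assumptions made for this example; real orchestrators layer scheduling, retries, and state tracking on top of the same idea.

```python
from graphlib import CycleError, TopologicalSorter

# Each key is a task; its value is the set of tasks it depends on.
# Task names are hypothetical.
dag = {
    "extract": set(),
    "transform": {"extract"},
    "validate": {"extract"},
    "load": {"transform", "validate"},
}


def execution_order(graph):
    """Return one valid order in which every task runs after its dependencies."""
    return list(TopologicalSorter(graph).static_order())


def has_cycle(graph):
    """A graph with a cycle is not a DAG and has no valid execution order."""
    try:
        execution_order(graph)
    except CycleError:
        return True
    return False


order = execution_order(dag)
print(order)            # "extract" comes first and "load" comes last
print(has_cycle(dag))   # False
print(has_cycle({"a": {"b"}, "b": {"a"}}))  # True
```

The order of `transform` and `validate` relative to each other is not fixed by the graph, since neither depends on the other. That is the part an orchestrator is free to run in parallel.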

Execution Model: Reactive Flow vs Predefined Graphs

The fundamental difference between these two approaches lies in how execution is driven.

Event-driven pipelines rely on reactive execution. Tasks are triggered by incoming events, and the system evolves dynamically as events propagate. This makes them well-suited for systems where inputs are unpredictable or continuous, such as streaming data or user-driven actions.

DAG-based orchestration relies on planned execution. The workflow is defined ahead of time, and tasks are executed according to that plan. This is ideal for scenarios where processes are repeatable and dependencies are well understood.

However, this difference has deeper implications. Reactive systems can adapt to real-time changes, but they can also become chaotic if not carefully managed. Planned systems provide stability, but they may lack the responsiveness needed in dynamic environments.

Choosing between the two often comes down to whether your system needs adaptability or determinism.

State Management and Data Flow Complexity

State management is another area where these models diverge significantly.

In event-driven pipelines, state is often distributed. Each component may maintain its own state or rely on external systems such as databases or caches. Because events are asynchronous, ensuring consistency becomes a challenge. Race conditions, duplicate processing, and eventual consistency are common concerns.
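Duplicate processing is usually addressed by making consumers idempotent. The following is a minimal sketch of that idea, assuming every event carries a unique `id` field. The `IdempotentConsumer` class and the event shape are hypothetical, and the in-memory set stands in for what would need to be a durable store in a real deployment.

```python
class IdempotentConsumer:
    """Applies each event at most once, keyed by the event's id (hypothetical shape)."""

    def __init__(self):
        # Assumption: in production this would be a durable store, not a set.
        self.seen_ids = set()
        self.balance = 0

    def handle(self, event):
        """Return True if the event was applied, False if it was a duplicate."""
        if event["id"] in self.seen_ids:
            return False
        self.seen_ids.add(event["id"])
        self.balance += event["amount"]
        return True


consumer = IdempotentConsumer()
events = [
    {"id": "evt-1", "amount": 50},
    {"id": "evt-2", "amount": 25},
    {"id": "evt-1", "amount": 50},  # redelivery of the first event
]
results = [consumer.handle(event) for event in events]
print(results)           # [True, True, False]
print(consumer.balance)  # 75
```

Without the `seen_ids` check, the redelivered event would be applied twice and the balance would end up at 125.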

In DAG-based systems, state is typically more centralized and easier to track. Since execution follows a defined path, it is simpler to understand how data flows from one task to another. This reduces ambiguity and makes debugging more straightforward.

However, centralized state can also become a bottleneck at scale, for example when a single scheduler or metadata store has to track every task run. While DAGs provide clarity, they may require additional effort to handle large, distributed workloads efficiently.

Failure Handling and Recovery Mechanisms

Failures are inevitable, and how a system handles them often determines its reliability.

Event-driven systems handle failures in a decentralized way. Each component is responsible for managing its own errors, retries, and recovery logic. While this allows for fine-grained control, it can also lead to inconsistent behavior if not standardized across the system.

Because events can be retried or replayed, event-driven pipelines offer flexibility in recovery. However, retries and replays also mean a consumer may see the same event more than once or see events out of order, so handlers need to tolerate both.
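One common way for a single consumer to manage its own retries is exponential backoff with a dead-letter list for events that never succeed. The sketch below is one possible shape for that logic, not a reference implementation. The function names, the default delays, and the `dead_letters` list are assumptions for illustration, and `sleep` is injectable so the example runs without actually waiting.

```python
import time


def backoff_delays(base=0.5, factor=2.0, retries=3):
    """Delays in seconds before each retry: base, base*factor, base*factor^2, ..."""
    return [base * factor ** i for i in range(retries)]


def consume(event, handler, dead_letters, retries=3, sleep=time.sleep):
    """Try the handler once, retry with backoff, then dead-letter the event."""
    delays = backoff_delays(retries=retries)
    for attempt in range(retries + 1):
        try:
            handler(event)
            return "processed"
        except Exception:
            if attempt < retries:
                sleep(delays[attempt])
    # Every attempt failed: park the event for later inspection or replay.
    dead_letters.append(event)
    return "dead-lettered"


def always_fails(event):
    raise ConnectionError("downstream unavailable")


waits = []         # records the requested delays instead of sleeping
dead_letters = []
outcome = consume({"id": "evt-7"}, always_fails, dead_letters, sleep=waits.append)
print(outcome)       # dead-lettered
print(waits)         # [0.5, 1.0, 2.0]
print(dead_letters)  # [{'id': 'evt-7'}]
```

If every service writes its own variant of this loop with different limits and delays, the inconsistency described above follows quickly, which is why teams often standardize it in a shared library.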

DAG-based systems, in contrast, provide more structured failure handling. Since the workflow is predefined, it is easier to implement retries, fallback mechanisms, and checkpoints. Failures can be isolated to specific nodes, and recovery can follow a predictable path.

That said, DAG-based recovery may lack the flexibility needed for complex, real-time scenarios. It works well for known failure patterns, but less so for unpredictable ones.
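The structured failure handling described in this section can be sketched with a very small DAG runner. This is a simplified illustration, assuming tasks are plain Python callables and that a fixed retry count applies to every node. The `run_dag` function, the task names, and the status strings are hypothetical and do not reflect any particular orchestrator's API.

```python
from graphlib import TopologicalSorter


def run_dag(graph, tasks, max_attempts=3):
    """Run tasks in dependency order, retrying failures and skipping
    any task whose upstream dependency did not succeed."""
    status = {}
    for name in TopologicalSorter(graph).static_order():
        if any(status[dep] != "success" for dep in graph.get(name, ())):
            status[name] = "skipped"
            continue
        for _ in range(max_attempts):
            try:
                tasks[name]()
                status[name] = "success"
                break
            except Exception:
                status[name] = "failed"
    return status


attempts = {"transform": 0}


def extract():
    pass


def transform():
    # Fails twice with a transient error, then succeeds on the third attempt.
    attempts["transform"] += 1
    if attempts["transform"] < 3:
        raise RuntimeError("transient error")


def validate():
    raise ValueError("bad schema")  # fails on every attempt


def load():
    pass


graph = {
    "extract": set(),
    "transform": {"extract"},
    "validate": {"extract"},
    "load": {"transform", "validate"},
}
tasks = {"extract": extract, "transform": transform, "validate": validate, "load": load}
status = run_dag(graph, tasks)
print(status["transform"])  # success
print(status["validate"])   # failed
print(status["load"])       # skipped
```

The failure is isolated to `validate`, and the effect on `load` is predictable from the graph alone. Recovery then means fixing and rerunning one node rather than reconstructing what happened.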

Observability and Debugging Challenges

Observability is where the differences become even more pronounced.

In event-driven systems, tracing the flow of execution can be difficult because there is no single, linear path. Events may trigger multiple downstream processes, creating a web of interactions that is hard to visualize. Debugging often requires correlating logs across multiple services, which can be time-consuming and error-prone.
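A widely used technique for correlating logs across services is to attach a correlation ID to the first event and copy it onto every event derived from it. The sketch below shows the idea under simplifying assumptions: the `new_event`, `record`, and `trace` helpers, the service names, and the in-memory `log` list are all hypothetical stand-ins for real logging and tracing infrastructure.

```python
import uuid

log = []  # stands in for a centralized log store


def new_event(name, payload, parent=None):
    """Create an event; derived events inherit the parent's correlation ID."""
    correlation_id = parent["correlation_id"] if parent else str(uuid.uuid4())
    return {"name": name, "payload": payload, "correlation_id": correlation_id}


def record(service, event):
    """Each service logs the correlation ID alongside what it handled."""
    log.append({
        "service": service,
        "event": event["name"],
        "correlation_id": event["correlation_id"],
    })


def trace(correlation_id):
    """Reconstruct one request's path from the interleaved log."""
    return [(entry["service"], entry["event"])
            for entry in log if entry["correlation_id"] == correlation_id]


# Two uploads are processed concurrently, so their log entries interleave.
upload_a = new_event("file.uploaded", {"path": "a.csv"})
upload_b = new_event("file.uploaded", {"path": "b.csv"})
record("gateway", upload_a)
record("gateway", upload_b)

validated_a = new_event("file.validated", {"path": "a.csv"}, parent=upload_a)
validated_b = new_event("file.validated", {"path": "b.csv"}, parent=upload_b)
record("validator", validated_b)
record("validator", validated_a)

indexed_a = new_event("file.indexed", {"path": "a.csv"}, parent=validated_a)
record("indexer", indexed_a)

path_a = trace(upload_a["correlation_id"])
print(path_a)
# [('gateway', 'file.uploaded'), ('validator', 'file.validated'), ('indexer', 'file.indexed')]
```

The ID does not make the web of interactions any simpler, but it turns "correlate logs across services" from guesswork into a filter.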

In DAG-based systems, observability is more straightforward. The predefined structure allows for clear visualization of workflows, making it easier to identify where failures occur. Logs, metrics, and traces can be mapped directly to specific nodes in the graph.

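However, this simplicity can be misleading. The graph shows only the dependencies that were declared in it. Interactions that happen outside the DAG, such as an external service that a task calls, do not appear in the visualization, so unexpected interactions can be harder to spot than the clean graph suggests.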

Scalability and Performance Trade-offs

Scalability is often a deciding factor in choosing an orchestration model.

Event-driven pipelines scale naturally. Because components are loosely coupled, they can scale independently based on demand. This makes them ideal for high-throughput, real-time systems.

However, this scalability can introduce unpredictability. Without backpressure or rate limiting, a burst of events can overwhelm downstream consumers and the resources they depend on.
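One simple form of control is a bounded buffer between producers and consumers. The sketch below uses `queue.Queue` from the Python standard library with a `maxsize`, so that a burst is rejected at the edge instead of piling up without limit. The `offer_all` helper and the buffer size of 3 are assumptions chosen for illustration. Whether to reject, block, or shed load in some other way is a design decision this sketch does not settle.

```python
import queue


def offer_all(buffer, events):
    """Try to enqueue each event without blocking; report what was rejected."""
    accepted, rejected = [], []
    for event in events:
        try:
            buffer.put_nowait(event)
            accepted.append(event)
        except queue.Full:
            # The buffer is at capacity: push back on the producer.
            rejected.append(event)
    return accepted, rejected


buffer = queue.Queue(maxsize=3)  # hypothetical capacity
accepted, rejected = offer_all(buffer, range(5))
print(accepted)  # [0, 1, 2]
print(rejected)  # [3, 4]

# Once a consumer takes an item, there is room for one more event.
buffer.get_nowait()
accepted_later, rejected_later = offer_all(buffer, [5, 6])
print(accepted_later)  # [5]
print(rejected_later)  # [6]
```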

DAG-based systems scale in a more controlled manner. Since execution is planned, resource allocation can be optimized in advance. This leads to more predictable performance, but may limit responsiveness during sudden spikes.

In other words, event-driven systems offer elastic scalability, while DAG-based systems offer predictable scalability.

Use Case Alignment: When Each Model Makes Sense

The choice between event-driven and DAG-based orchestration depends heavily on the nature of the workload.

Event-driven pipelines are well-suited for:

- Real-time data processing
- Microservices communication
- Systems with unpredictable inputs
- High-frequency event streams

DAG-based orchestration is better suited for:

- Batch processing
- ETL workflows
- Scheduled jobs
- Systems with well-defined dependencies

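In many cases, the most effective approach is not choosing one over the other, but combining both. For example, an event such as a file upload can trigger a DAG run, which then executes its tasks with fixed, explicit dependencies. Hybrid architectures like this allow teams to leverage the strengths of each model while mitigating the weaknesses of the other.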
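A hybrid arrangement can be sketched in a few lines: events decide when work starts, and a DAG decides how it proceeds. The example below is a toy under stated assumptions. The `PIPELINE` graph, the `HANDLERS` table, the event name, and the string "results" are all hypothetical, and the tasks are simulated rather than executed.

```python
from graphlib import TopologicalSorter

# The structured part: a fixed pipeline with explicit dependencies.
PIPELINE = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
}


def run_pipeline(params):
    """Simulate one DAG run, parameterized by the triggering event's payload."""
    return [f"{task}({params['path']})"
            for task in TopologicalSorter(PIPELINE).static_order()]


# The reactive part: events decide which pipeline starts, and when.
HANDLERS = {"file.uploaded": run_pipeline}


def dispatch(event):
    """Start the pipeline registered for this event, if any."""
    handler = HANDLERS.get(event["name"])
    return handler(event["payload"]) if handler else []


run = dispatch({"name": "file.uploaded", "payload": {"path": "report.csv"}})
print(run)
# ['extract(report.csv)', 'transform(report.csv)', 'load(report.csv)']
print(dispatch({"name": "user.logged_in", "payload": {}}))  # []
```

The boundary between the two models is the `dispatch` function. Everything before it is unpredictable input, and everything after it is a planned, repeatable run.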

The Hidden Cost of Choosing the Wrong Model

The wrong orchestration model does not always cause immediate failures. In fact, systems may work well initially but struggle as they scale.

An event-driven system without proper observability can become difficult to manage, leading to hidden failures and increased operational overhead. A DAG-based system in a highly dynamic environment may become rigid, slowing down development and limiting adaptability.

Because of this, the cost of a poor choice is often gradual but significant. It manifests as increased complexity, reduced performance, and higher maintenance effort.

Understanding these trade-offs early can prevent long-term issues.

A Smarter Way to Understand System Behavior with Atler Pilot

As systems grow more complex, understanding orchestration behavior becomes increasingly difficult. This is where platforms like Atler Pilot provide valuable insight.

Atler Pilot helps teams visualize how workflows actually execute across systems, whether event-driven or DAG-based. It connects performance, cost, and system interactions, allowing teams to identify inefficiencies, bottlenecks, and hidden dependencies.

Instead of relying on assumptions, teams can see how their orchestration choices impact real-world behavior. This enables better decision-making, whether optimizing existing pipelines or designing new ones.

By bringing clarity to complex systems, Atler Pilot helps teams move from reactive troubleshooting to proactive optimization.

Conclusion

Event-driven pipelines and DAG-based orchestration represent two fundamentally different approaches to managing workflows. One prioritizes flexibility and responsiveness, while the other emphasizes structure and predictability.

Neither approach is universally better. Each has strengths and limitations, and the right choice depends on the specific needs of the system.

What matters most is understanding these trade-offs and designing systems that align with your goals. In many cases, the best solution lies in combining both approaches, leveraging their strengths while minimizing their weaknesses.

Ultimately, orchestration is about building systems that are reliable, scalable, and understandable under real-world conditions.
