As organizations adopt microservices and service mesh architectures, their systems become more flexible, scalable, and resilient. But this architectural evolution comes with a hidden cost. What was once a relatively straightforward infrastructure cost model becomes deeply fragmented across dozens, sometimes hundreds, of services interacting in complex ways.

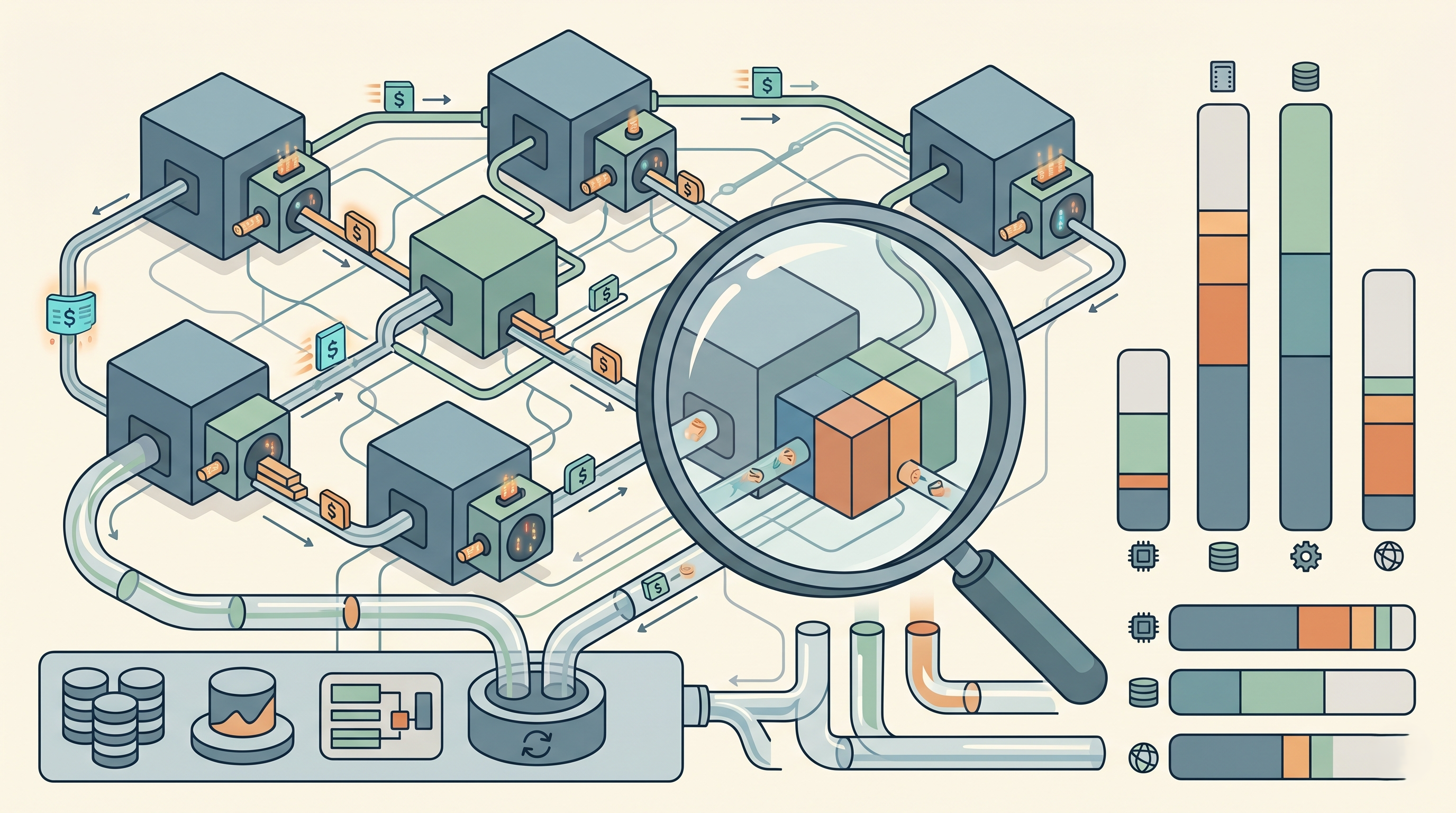

In such environments, cloud costs no longer map neatly to infrastructure. Instead, they flow through service-to-service communication, shared clusters, sidecars, and dynamic workloads. When costs rise, teams often struggle to identify which service is responsible. This is where granular cost allocation becomes critical.

Granular cost allocation lets organizations trace cloud spending down to the level of individual services, even within the complexity of a service mesh. This article looks at how architectural decisions, traffic patterns, and system behavior translate into cost, to bring clarity to an otherwise opaque system.

Why Service Mesh Architectures Complicate Cost Allocation

Service mesh architectures introduce a layer of abstraction that fundamentally changes how services communicate. Instead of direct service-to-service calls, communication is routed through sidecar proxies, often managed by tools like Istio or Linkerd. This enables advanced capabilities such as traffic control, observability, and security.

However, this abstraction also obscures cost visibility. Each request now involves additional components:

Sidecar proxies consume compute and memory

Network traffic increases due to inter-service communication

Observability tooling generates additional data and overhead

These factors introduce indirect costs that are not easily attributable to a single service. A simple API request may trigger multiple downstream calls, each incurring its own cost footprint.

As a result, traditional cost allocation methods, which rely on mapping infrastructure to services, become insufficient. They fail to capture the distributed and interconnected nature of service mesh environments.

What Is Granular Cost Allocation?

Granular cost allocation goes beyond assigning cost at a high level. It involves breaking cloud spend into the smallest meaningful units within your architecture, typically at the service level or even the request level.

In a service mesh, this means understanding:

How much each service contributes to the total cost

How inter-service communication impacts spending

How shared infrastructure is utilized across services

Granularity is not just about detail; it is also about accuracy and relevance. The goal is to provide actionable insights that let teams identify inefficiencies and optimize specific parts of the system.

Mapping Cost to Service Interactions

One of the defining characteristics of service mesh architectures is the complexity of service interactions. A single user request may traverse multiple services, each adding latency, resource usage, and cost.

To allocate costs effectively, it is essential to map these interactions.

This involves tracing requests as they move through the system and identifying the services involved at each step. Distributed tracing tools can provide this visibility, showing how requests propagate and where resources are consumed.

Once these interactions are mapped, cost can be attributed based on each service’s role in the request. For example, a service that processes large amounts of data may incur higher compute and storage costs, while a gateway service may contribute more to network costs.

This approach shifts cost allocation from static resource mapping to dynamic request-based analysis.
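As a rough illustration of request-based attribution, the sketch below splits the cost of one request across services in proportion to the time each service spent in its own spans. The span fields (`service`, `self_time_ms`), the service names, and the per-request cost are hypothetical, and span time is only a proxy for resource consumption, so treat this as one possible weighting rather than a definitive method.

```python
from collections import defaultdict


def allocate_request_cost(spans, request_cost):
    """Split one request's cost across services in proportion to span time.

    `spans` is a list of dicts with hypothetical keys `service` and
    `self_time_ms` (time spent in the span itself, excluding child spans).
    """
    time_by_service = defaultdict(float)
    for span in spans:
        time_by_service[span["service"]] += span["self_time_ms"]

    total_time = sum(time_by_service.values())
    if total_time == 0:
        return {}  # nothing to weight by, so nothing is attributed
    return {
        service: request_cost * time_ms / total_time
        for service, time_ms in time_by_service.items()
    }


# Hypothetical spans for a single checkout request.
spans = [
    {"service": "gateway", "self_time_ms": 20},
    {"service": "orders", "self_time_ms": 30},
    {"service": "payments", "self_time_ms": 30},
    {"service": "orders", "self_time_ms": 20},
]
shares = allocate_request_cost(spans, request_cost=0.0001)
for service, cost in sorted(shares.items()):
    print(f"{service}: {cost:.6f}")
```

In this example `orders` appears in two spans and ends up carrying half of the request's cost. Aggregating the same calculation over many traces gives a per-service view that follows actual request paths instead of static resource ownership.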

Accounting for Sidecar Overhead

Sidecars are a fundamental component of service mesh architectures, but they introduce additional overhead that must be accounted for in cost allocation.

Each sidecar proxy consumes CPU and memory, and in high-traffic systems, this overhead can become significant. Because sidecars are tightly coupled with their parent services, their cost should be attributed to those services rather than left as unassigned platform overhead.

In practice, this means treating sidecar resources as part of the service’s total cost footprint. Ignoring this overhead can lead to underestimating the true cost of a service and misrepresenting its efficiency.

Accurate allocation requires capturing sidecar usage metrics and incorporating them into the overall cost model.
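One way to fold sidecar usage into a service's footprint is to price the proxy container alongside the application container and report both. The sketch below assumes per-container usage has already been collected; the field names, prices, and usage figures are hypothetical, and the container names `istio-proxy` and `linkerd-proxy` are assumed to identify the sidecar in your pods.

```python
SIDECAR_NAMES = {"istio-proxy", "linkerd-proxy"}  # assumed sidecar container names


def pod_cost_breakdown(containers, cpu_price, mem_price):
    """Return app, sidecar, and total cost for one pod's containers.

    Each container is a dict with hypothetical keys `name`,
    `cpu_core_hours`, and `mem_gb_hours`. Prices are per core-hour and
    per GB-hour.
    """
    breakdown = {"app": 0.0, "sidecar": 0.0}
    for c in containers:
        cost = c["cpu_core_hours"] * cpu_price + c["mem_gb_hours"] * mem_price
        bucket = "sidecar" if c["name"] in SIDECAR_NAMES else "app"
        breakdown[bucket] += cost
    breakdown["total"] = breakdown["app"] + breakdown["sidecar"]
    return breakdown


# Hypothetical usage for one pod of an "orders" service.
containers = [
    {"name": "orders", "cpu_core_hours": 10, "mem_gb_hours": 20},
    {"name": "istio-proxy", "cpu_core_hours": 2, "mem_gb_hours": 4},
]
breakdown = pod_cost_breakdown(containers, cpu_price=0.03, mem_price=0.004)
sidecar_share = breakdown["sidecar"] / breakdown["total"]
print(breakdown, f"sidecar share: {sidecar_share:.1%}")
```

Keeping the sidecar figure separate while still including it in the total makes it possible to see both the true cost of the service and how much of that cost is mesh overhead.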

Handling Shared Infrastructure in Mesh Environments

Service mesh architectures often rely on shared infrastructure, such as Kubernetes clusters, ingress controllers, and observability systems. These shared components support multiple services simultaneously, making cost allocation more complex.

To address this, costs must be distributed based on usage.

For example, cluster costs can be allocated based on resource consumption metrics such as CPU and memory usage. Network costs can be distributed based on traffic volume between services. Observability costs can be assigned based on the amount of data generated by each service.

The key is to choose allocation methods that reflect actual usage patterns. While perfect precision may not always be achievable, consistency and fairness are essential for meaningful insights.
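The usage-based distribution described above can be sketched as a proportional split applied to each shared cost pool with its own driver. All figures, service names, and the choice of driver per pool are illustrative assumptions, not recommendations.

```python
def allocate_shared_cost(total_cost, usage_by_service):
    """Split a shared cost across services in proportion to a usage metric."""
    total_usage = sum(usage_by_service.values())
    if total_usage == 0:
        # No usage recorded: leave the pool unallocated rather than guess.
        return {service: 0.0 for service in usage_by_service}
    return {
        service: total_cost * usage / total_usage
        for service, usage in usage_by_service.items()
    }


# Hypothetical monthly cost pools, each paired with its allocation driver.
cost_pools = [
    (1000.0, {"orders": 50, "payments": 30, "search": 20}),  # cluster, by CPU core-hours
    (200.0, {"orders": 10, "payments": 10, "search": 80}),   # network, by GB transferred
    (100.0, {"orders": 5, "payments": 4, "search": 1}),      # observability, by GB ingested
]

allocated = {}
for pool_cost, usage in cost_pools:
    for service, cost in allocate_shared_cost(pool_cost, usage).items():
        allocated[service] = allocated.get(service, 0.0) + cost

print(allocated)
```

Using a separate driver per pool keeps the method consistent: a service that is light on CPU but heavy on network traffic, like `search` here, is charged where it actually generates load.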

Incorporating Traffic Patterns into Cost Allocation

In service mesh architectures, traffic patterns play a significant role in determining cost. Services that handle high volumes of requests or large data transfers naturally incur higher costs.

However, not all traffic is equal.

Some services may generate excessive internal traffic due to inefficient design, such as unnecessary retries or redundant calls. Others may rely heavily on downstream dependencies, amplifying their cost impact.

By analyzing traffic patterns alongside cost data, teams can identify these inefficiencies. This allows for more accurate attribution and highlights opportunities for optimization.

For example, reducing unnecessary service calls or optimizing data transfer can lead to significant cost savings without affecting functionality.
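A simple way to surface retry-heavy callers is to compute a retry rate per source service from mesh traffic counts. The sketch below assumes those counts have already been exported as records with hypothetical `source`, `destination`, `requests`, and `retries` fields; the 10% threshold is an arbitrary illustration.

```python
from collections import defaultdict


def retry_rates(edges):
    """Return the fraction of outbound requests that were retries, per source."""
    totals = defaultdict(lambda: [0, 0])  # source -> [requests, retries]
    for edge in edges:
        totals[edge["source"]][0] += edge["requests"]
        totals[edge["source"]][1] += edge["retries"]
    return {
        source: retries / requests
        for source, (requests, retries) in totals.items()
        if requests > 0
    }


# Hypothetical service-to-service traffic counts over one hour.
edges = [
    {"source": "gateway", "destination": "orders", "requests": 2000, "retries": 20},
    {"source": "orders", "destination": "payments", "requests": 1000, "retries": 250},
    {"source": "orders", "destination": "inventory", "requests": 1000, "retries": 50},
]
rates = retry_rates(edges)
flagged = sorted(s for s, rate in rates.items() if rate > 0.10)
print(rates, flagged)
```

Here `orders` is flagged because 15% of its outbound calls are retries. Reviewing such services next to their allocated cost shows how much spend is driven by avoidable traffic.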

Aligning Cost with Performance and Efficiency

Granular cost allocation becomes truly valuable when it is aligned with performance metrics.

A service that incurs high cost is not necessarily inefficient if it delivers proportional value. Conversely, a service with moderate cost may be inefficient if it provides limited benefit.

By correlating cost with metrics such as latency, throughput, and error rates, teams can evaluate efficiency more effectively. This enables a more nuanced approach to optimization, where decisions are based on both cost and performance.

For instance, increasing resource allocation for a critical service may be justified if it significantly improves user experience, while similar spending on a non-critical service may not be warranted.
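One common way to relate cost to throughput is a unit cost such as cost per thousand requests. The sketch below uses made-up monthly figures for two hypothetical services to show why absolute cost alone can mislead.

```python
def cost_per_thousand_requests(monthly_cost, monthly_requests):
    """Return cost per 1,000 requests, or infinity if nothing was served."""
    if monthly_requests == 0:
        return float("inf")
    return monthly_cost / monthly_requests * 1000


# Hypothetical monthly figures.
services = {
    "checkout": {"cost": 1200.0, "requests": 4_000_000},
    "reports": {"cost": 300.0, "requests": 50_000},
}
unit_costs = {
    name: cost_per_thousand_requests(s["cost"], s["requests"])
    for name, s in services.items()
}
for name, unit_cost in unit_costs.items():
    print(f"{name}: {unit_cost:.2f} per 1k requests")
```

In this example `checkout` costs four times as much in total but is far cheaper per request than `reports`. The same comparison can be extended with latency or error-rate data before deciding where optimization effort is justified.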

Challenges in Achieving Granular Allocation

Despite its benefits, achieving granular cost allocation in service mesh architectures is challenging.

One of the primary challenges is data fragmentation. Cost data, performance metrics, and tracing information are often stored in separate systems, making it difficult to combine them into a unified view.

Another challenge is the dynamic nature of service mesh environments. Services scale up and down, traffic patterns change, and dependencies evolve. This constant change requires continuous monitoring and adaptation of the cost allocation model.

There is also the challenge of complexity. Building and maintaining a granular allocation system requires expertise in both cloud infrastructure and data analysis. Without the right tools, this can become a significant operational burden.
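To make the data fragmentation problem concrete, the sketch below joins three separately sourced datasets on a shared service name and reports which services are missing from at least one source. The dataset shapes, metric names, and values are hypothetical; real systems usually also need to reconcile naming differences and time windows.

```python
def unify_by_service(cost, metrics, traces):
    """Join cost, metrics, and trace summaries on service name.

    Missing values are kept as None so gaps stay visible.
    """
    services = set(cost) | set(metrics) | set(traces)
    return {
        service: {
            "cost": cost.get(service),
            "p95_latency_ms": metrics.get(service),
            "downstream_calls": traces.get(service),
        }
        for service in sorted(services)
    }


# Hypothetical extracts from a billing export, a metrics store, and a tracer.
cost = {"orders": 570.0, "search": 370.0}
metrics = {"orders": 120, "payments": 95, "search": 310}
traces = {"orders": 3, "payments": 1}

unified = unify_by_service(cost, metrics, traces)
incomplete = [s for s, row in unified.items() if None in row.values()]
print(unified)
print("missing data for:", incomplete)
```

Even in this toy case, two of three services lack data from at least one source, which is the kind of gap a unified cost view has to detect and handle.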

How Atler Pilot Enables Granular Cost Allocation in Service Meshes

In practice, implementing granular cost allocation across service mesh architectures requires more than just principles. It requires a system that can handle complexity, context, and continuous change. This is where Atler Pilot plays an important role.

Atler Pilot is designed to map cloud cost directly onto service-level architecture, even in highly distributed environments like service meshes. Instead of relying solely on static tagging or infrastructure-level attribution, it understands how services interact and how cost flows through those interactions.

By integrating with observability signals, Atler Pilot correlates cost with service-to-service communication patterns. It effectively captures how requests traverse the mesh and distributes cost accordingly. This allows teams to see not just which service is consuming resources, but how its interactions contribute to overall cost.

One of its key strengths is handling shared and indirect costs. In service mesh environments, much of the cost is embedded in shared infrastructure and sidecar overhead. Atler Pilot intelligently allocates these costs based on actual usage patterns, ensuring that attribution remains accurate and meaningful.

It also brings context into the equation. When a service shows an increase in cost, Atler Pilot provides insight into whether the change is driven by higher traffic, inefficient communication patterns, or scaling behavior. This transforms cost allocation from a static report into a dynamic, explanatory system.

Most importantly, it makes these insights actionable. Teams can identify specific services or interactions that are inefficient and take targeted steps to optimize them. This reduces the complexity of managing cost in service mesh architectures and enables continuous improvement.

Conclusion

Granular cost allocation in service mesh architectures is not just a technical challenge; it is a necessity for operating efficiently at scale.

As systems become more distributed and interconnected, traditional cost models lose their effectiveness. Organizations need a deeper level of visibility that reflects the true complexity of their architectures.

By adopting a granular approach, teams can gain clarity into how cost is distributed, identify inefficiencies, and make more informed decisions. However, achieving this in practice requires the ability to connect cost with system behavior, interactions, and context.
