Cloud infrastructure feels efficient by design. You pay for what you use, scale when needed, and avoid large upfront investments. On paper, it looks like the most cost-effective model modern engineering teams could ask for.

But in practice, cloud costs rarely stay predictable.

What makes them tricky is not just scale, but invisibility. Many of the most expensive charges are not obvious in dashboards or billing summaries. They sit quietly in the background, accumulating over time without triggering alerts or immediate concern. By the time teams notice, a significant portion of the budget has already been consumed.

For DevOps teams, this creates a dangerous gap. Systems may look healthy, performance may be stable, and deployments may be smooth, yet costs continue rising without a clear explanation.

In this post, we break down five of the most common hidden cloud costs that DevOps teams miss, why they happen, and how to stay ahead of them before they impact your budget.

The Quiet Cost of Idle Resources

Idle resources are one of the most common and overlooked sources of cloud waste. These include virtual machines left running after testing, unused storage volumes, inactive load balancers, or forgotten development environments.

They do not generate errors. They do not affect performance. They simply continue to exist.

In fast-moving environments, it is easy to spin up resources and forget to remove them. Over time, these small inefficiencies accumulate into significant cost leakage. Since nothing appears broken, they rarely receive immediate attention.

Regular cleanup processes and visibility into unused resources are essential. Otherwise, idle infrastructure becomes a silent drain on your budget.
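To make the cleanup idea concrete, here is a minimal Python sketch of an idle-resource check. It assumes you already export a resource inventory with average CPU, attachment state, and a last-activity timestamp. The `Resource` fields, the 5% CPU threshold, and the 14-day staleness window are illustrative assumptions, not any provider's API or defaults.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Resource:
    # Hypothetical inventory record; field names are illustrative, not a cloud API.
    resource_id: str
    kind: str               # "vm", "volume", or "load_balancer"
    avg_cpu_percent: float  # only meaningful for VMs
    attached: bool          # only meaningful for volumes
    last_activity: datetime


def find_idle(resources, now, cpu_threshold=5.0, stale_after=timedelta(days=14)):
    """Return IDs of resources that look idle under simple, assumed heuristics."""
    idle = []
    for r in resources:
        stale = now - r.last_activity > stale_after
        if r.kind == "vm" and r.avg_cpu_percent < cpu_threshold and stale:
            idle.append(r.resource_id)   # low CPU and no recent activity
        elif r.kind == "volume" and not r.attached:
            idle.append(r.resource_id)   # storage that nothing is using
        elif r.kind == "load_balancer" and stale:
            idle.append(r.resource_id)   # no recorded activity within the window
    return idle


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
inventory = [
    Resource("vm-test-01", "vm", 1.2, True, now - timedelta(days=30)),
    Resource("vm-api-01", "vm", 45.0, True, now - timedelta(hours=1)),
    Resource("vol-old-data", "volume", 0.0, False, now - timedelta(days=90)),
    Resource("lb-dev", "load_balancer", 0.0, True, now - timedelta(days=20)),
]
print(find_idle(inventory, now))
```

In practice the inventory would come from your provider's APIs or a tagging report, and flagged items would typically go to a review queue rather than being deleted automatically.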

Data Transfer That Adds Up Quickly

Data transfer costs are often underestimated because they are less visible than compute or storage charges. However, moving data between regions, across availability zones, or out of the cloud can become surprisingly expensive.

Modern applications rely heavily on distributed architectures. Microservices communicate frequently, APIs exchange data constantly, and global deployments move traffic across regions. Traffic that crosses availability zones or regions, or leaves the provider's network, is typically billed per gigabyte, so each interaction may add only a small charge, but at scale these charges grow rapidly.

The challenge is that data transfer charges are rarely obvious in application-level metrics. Teams focus on performance and reliability while overlooking how data movement affects cost.

Understanding traffic patterns and optimizing data flow can significantly reduce these hidden expenses.
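A quick back-of-the-envelope estimate often shows why chatty traffic matters. The sketch below multiplies daily volume by a per-GB rate for each flow. The rates are placeholders chosen for illustration, not real provider pricing, and the flow names are hypothetical; substitute your provider's published rates for your regions.

```python
import math

# Placeholder per-GB rates for illustration only; these are NOT real provider prices.
HYPOTHETICAL_RATES_PER_GB = {
    "same_zone": 0.00,
    "cross_zone": 0.01,
    "cross_region": 0.02,
    "internet_egress": 0.09,
}


def monthly_transfer_cost(flows, rates, days=30):
    """Estimate monthly cost per flow: GB per day x days x rate for that network path."""
    return {name: gb_per_day * days * rates[path] for name, path, gb_per_day in flows}


# Hypothetical traffic flows: (name, network path, GB per day)
flows = [
    ("orders -> inventory", "cross_zone", 500),
    ("replica sync", "cross_region", 200),
    ("public API responses", "internet_egress", 100),
    ("cache reads", "same_zone", 2000),
]

costs = monthly_transfer_cost(flows, HYPOTHETICAL_RATES_PER_GB)
for name, cost in sorted(costs.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:<22} {cost:>8.2f}")
print(f"{'total':<22} {math.fsum(costs.values()):>8.2f}")
```

With these placeholder numbers, the three boundary-crossing flows add up to about 540 per month, while the much larger same-zone cache traffic costs nothing, which illustrates how placement can matter more than raw volume.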

Overprovisioned Compute Resources

To avoid performance issues, many teams intentionally allocate more compute than they need. While this approach provides a safety margin, it often leads to persistent overprovisioning.

Instances run with low utilization. Containers reserve more CPU and memory than they use. Autoscaling policies may be too conservative, maintaining excess capacity even during low demand.

This creates a gap between what is provisioned and what is actually used. That gap represents wasted spend.

Overprovisioning is rarely obvious because systems continue to perform well. Without proper visibility, teams may assume resources are necessary when they are not.

Right-sizing workloads based on real usage patterns is key to eliminating this hidden cost.
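One common right-sizing heuristic is to size to a high percentile of observed usage plus some headroom, rather than to a peak guess. The sketch below applies that to a container's CPU request. The 95th percentile, 25% headroom, and 0.25-core rounding step are assumptions to tune, and the usage samples are made up.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile; pct is in the range 0-100."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def recommend_cpu(usage_samples, pct=95, headroom=0.25, step=0.25):
    """Suggest a CPU request: observed percentile plus headroom, rounded up to `step` cores."""
    target = percentile(usage_samples, pct) * (1 + headroom)
    return math.ceil(target / step) * step


requested_cores = 4.0
# Made-up CPU usage samples (in cores) for one container over a busy period.
usage = [0.6, 0.8, 0.7, 1.1, 0.9, 1.2, 0.75, 0.85, 1.0, 0.95]

suggested = recommend_cpu(usage)
gap = requested_cores - suggested
print(f"requested={requested_cores} suggested={suggested} unused={gap}")
```

Here a 4-core request with an observed p95 of 1.2 cores yields a suggestion of 1.5 cores, so 2.5 cores of the original request are the gap described above. Real sizing would use days or weeks of metrics and would also look at memory, which may be the tighter constraint.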

Logging and Monitoring Overload

Observability is critical for modern systems, but it can become expensive if not managed carefully. Logs, metrics, and traces generate large volumes of data, especially in high-traffic environments.

Each log entry, metric point, and trace contributes to storage and processing costs. Over time, these charges can grow significantly, often without clear visibility into their impact.

The challenge is that teams are reluctant to reduce observability for fear of losing insight during incidents. This leads to excessive data collection without clear retention or filtering strategies.

Balancing visibility with cost requires thoughtful data management. Not every log needs to be stored indefinitely, and not every metric needs high granularity.
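Filtering can start at the source. As one illustration, Python's standard `logging` module lets you attach a `logging.Filter` to a handler. The hypothetical `SamplingFilter` below keeps every WARNING and above, keeps one in ten INFO records, and drops DEBUG. The sampling ratio is an assumption, and retention windows are normally configured in the log backend rather than in application code.

```python
import logging


class SamplingFilter(logging.Filter):
    """Keep WARNING and above, keep 1 in `sample_every` INFO records, drop DEBUG."""

    def __init__(self, sample_every=10):
        super().__init__()
        self.sample_every = sample_every
        self._info_seen = 0

    def filter(self, record):
        if record.levelno >= logging.WARNING:
            return True
        if record.levelno == logging.INFO:
            self._info_seen += 1
            # Keep the 1st, 11th, 21st, ... INFO record (counter is not thread-safe).
            return (self._info_seen - 1) % self.sample_every == 0
        return False  # DEBUG (and any other non-INFO level below WARNING) is dropped


class ListHandler(logging.Handler):
    """Collects records in memory so the effect of the filter is easy to inspect."""

    def __init__(self):
        super().__init__()
        self.records = []

    def emit(self, record):
        self.records.append(record)


logger = logging.getLogger("checkout")
logger.setLevel(logging.DEBUG)
logger.propagate = False
handler = ListHandler()
handler.addFilter(SamplingFilter(sample_every=10))
logger.addHandler(handler)

for i in range(100):
    logger.info("request %d served", i)
logger.debug("cache internals")
logger.error("payment gateway timeout")

print(len(handler.records), "of 102 records kept")
```

A production version would need a lock around the counter, and many teams prefer sampling by request ID so that all lines for a sampled request are kept together.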

Unused or Underutilized Reserved Capacity

Reserved instances and savings plans are designed to reduce costs, but they can become inefficient if not aligned with actual usage.

Teams may commit to long-term capacity based on expected demand, only to find that workloads change over time. Services may be optimized, traffic patterns may shift, or architecture decisions may evolve.

When reserved capacity is underutilized, organizations pay for resources they are not fully using. This cost is harder to detect because the unused portion still appears on the bill at a “discounted” rate, so it looks like savings rather than waste.

Regular review of commitments and alignment with current usage is necessary to ensure these savings mechanisms remain effective.
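The arithmetic behind an underused commitment is worth spelling out. A commitment is billed whether or not it is used, so the effective price per hour you actually consume rises as utilization falls. The sketch below uses hypothetical rates and a 730-hour month (roughly one instance running full time). It also computes the break-even utilization, which is the discounted rate divided by the on-demand rate.

```python
import math


def commitment_report(committed_hours, used_hours, discounted_rate, on_demand_rate):
    """Summarize a fixed commitment against actual usage (simplified, hypothetical rates).

    Simplification: usage beyond the commitment (billed at on-demand rates) is ignored.
    """
    paid = committed_hours * discounted_rate       # billed whether or not it is used
    covered = min(used_hours, committed_hours)     # hours the commitment actually served
    on_demand_equivalent = covered * on_demand_rate
    return {
        "utilization": covered / committed_hours,
        "effective_rate": paid / covered if covered else math.inf,
        "break_even_utilization": discounted_rate / on_demand_rate,
        "net_savings": on_demand_equivalent - paid,
    }


# Hypothetical: one instance committed for a 730-hour month, but the workload
# was optimized and now needs that capacity only half the time.
report = commitment_report(committed_hours=730, used_hours=365,
                           discounted_rate=0.06, on_demand_rate=0.10)
for key, value in report.items():
    print(f"{key:<24} {value:.3f}")
```

With these numbers, half the commitment goes unused, the effective rate works out to 0.12 per used hour against 0.10 on demand, and the “discount” becomes a net loss because utilization sits below the 60% break-even point.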

The Real Challenge: Visibility

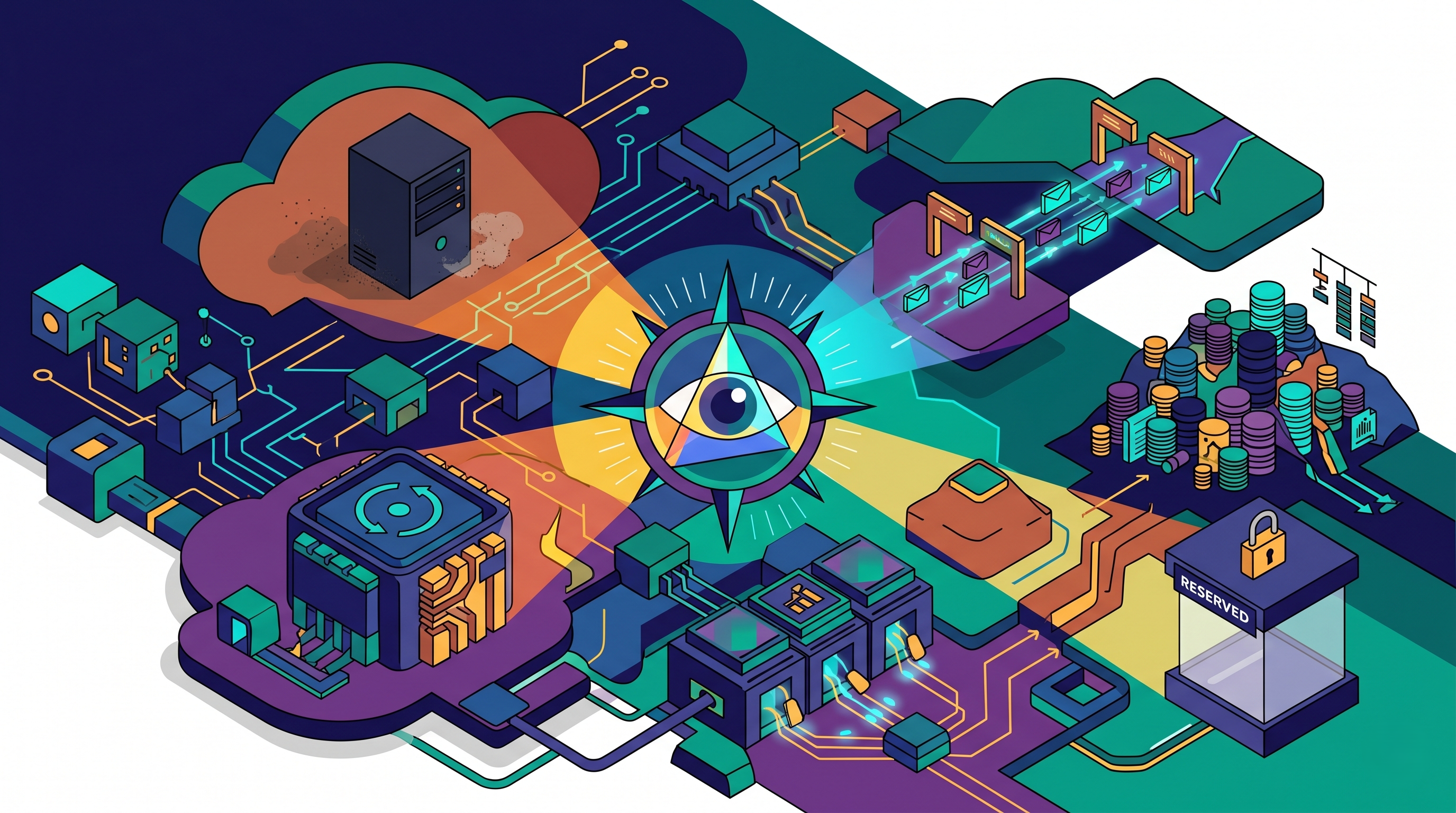

The common thread across all these hidden costs is not lack of awareness. It is lack of visibility.

DevOps teams often know these issues exist in theory. The challenge is identifying them in real environments where data is fragmented across multiple tools, services, and platforms.

Without clear visibility, teams react to total spend rather than understanding its composition. This makes optimization slower and less effective.

What is needed is not more data, but better interpretation of existing data.
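Understanding composition can start with something as simple as grouping billing line items by category and ranking them by share of total spend. The sketch below assumes line items exported as dictionaries with `category` and `cost` fields; those field names and the sample figures are hypothetical.

```python
import math
from collections import defaultdict


def spend_breakdown(line_items):
    """Group cost line items by category; return (category, cost, share) sorted by cost."""
    totals = defaultdict(float)
    for item in line_items:
        totals[item["category"]] += item["cost"]
    grand_total = math.fsum(totals.values())
    if grand_total == 0:
        return []
    rows = [(cat, cost, cost / grand_total) for cat, cost in totals.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Hypothetical monthly line items; field names and figures are illustrative only.
items = [
    {"resource": "vm-api", "category": "compute", "cost": 1200.0},
    {"resource": "vm-batch", "category": "compute", "cost": 800.0},
    {"resource": "log-ingest", "category": "observability", "cost": 900.0},
    {"resource": "cross-az-traffic", "category": "data_transfer", "cost": 600.0},
    {"resource": "object-store", "category": "storage", "cost": 500.0},
]

for category, cost, share in spend_breakdown(items):
    print(f"{category:<15} {cost:>9.2f} {share:>6.1%}")
```

Once costs are grouped this way, a jump in one category, such as observability, stands out even when the total looks normal. The same grouping can be repeated per team or per environment if resources are tagged consistently.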

Bringing Clarity to Hidden Costs with Atler Pilot

For many teams, the difficulty lies in connecting scattered cloud signals into a meaningful picture. Costs appear in billing reports, usage data lives in monitoring tools, and optimization opportunities remain buried in complexity.

Atler Pilot helps bridge this gap by turning fragmented cloud and operational data into actionable intelligence. Instead of manually searching for inefficiencies, teams gain clearer visibility into where resources are underutilized, where costs are rising unexpectedly, and where optimization efforts should focus.

This allows DevOps teams to move from reactive cost analysis to proactive cost control. Rather than discovering issues after the bill arrives, they can identify and address inefficiencies earlier.

In fast-growing environments, this clarity can quietly transform how teams manage cloud spend without adding friction to development workflows.

Common Missteps That Make Costs Worse

Some teams attempt to control costs by reducing usage aggressively, which can impact performance and reliability. Others rely solely on periodic reviews, missing continuous inefficiencies.

Another common mistake is treating cost optimization as a one-time effort rather than an ongoing process. Cloud environments change constantly, and cost management must evolve with them.

The most effective approach balances performance, reliability, and efficiency while maintaining continuous visibility.

Conclusion

Hidden cloud costs are not usually the result of poor decisions. They are the result of complex systems operating without clear, continuous visibility.

Idle resources, data transfer charges, overprovisioned compute, excessive observability data, and underutilized commitments all contribute to rising spend. Individually, they may seem small. Together, they can significantly impact budgets.

For DevOps teams, the goal is not to eliminate spending but to understand it. When visibility improves, control follows, and with it the ability to scale efficiently without unnecessary waste.

All in One Place

Atler Pilot decodes your cloud spend story by bringing monitoring, automation, and intelligent insights together for faster and better cloud operations.