There is a moment every engineering team eventually experiences. A small, seemingly harmless change is deployed, perhaps a minor configuration update or a routine code push. Nothing appears unusual at first. However, within minutes, dashboards begin to flicker, alerts start triggering, and systems that were stable moments ago begin to behave unpredictably. What started as a localized issue quietly expands into something much larger.

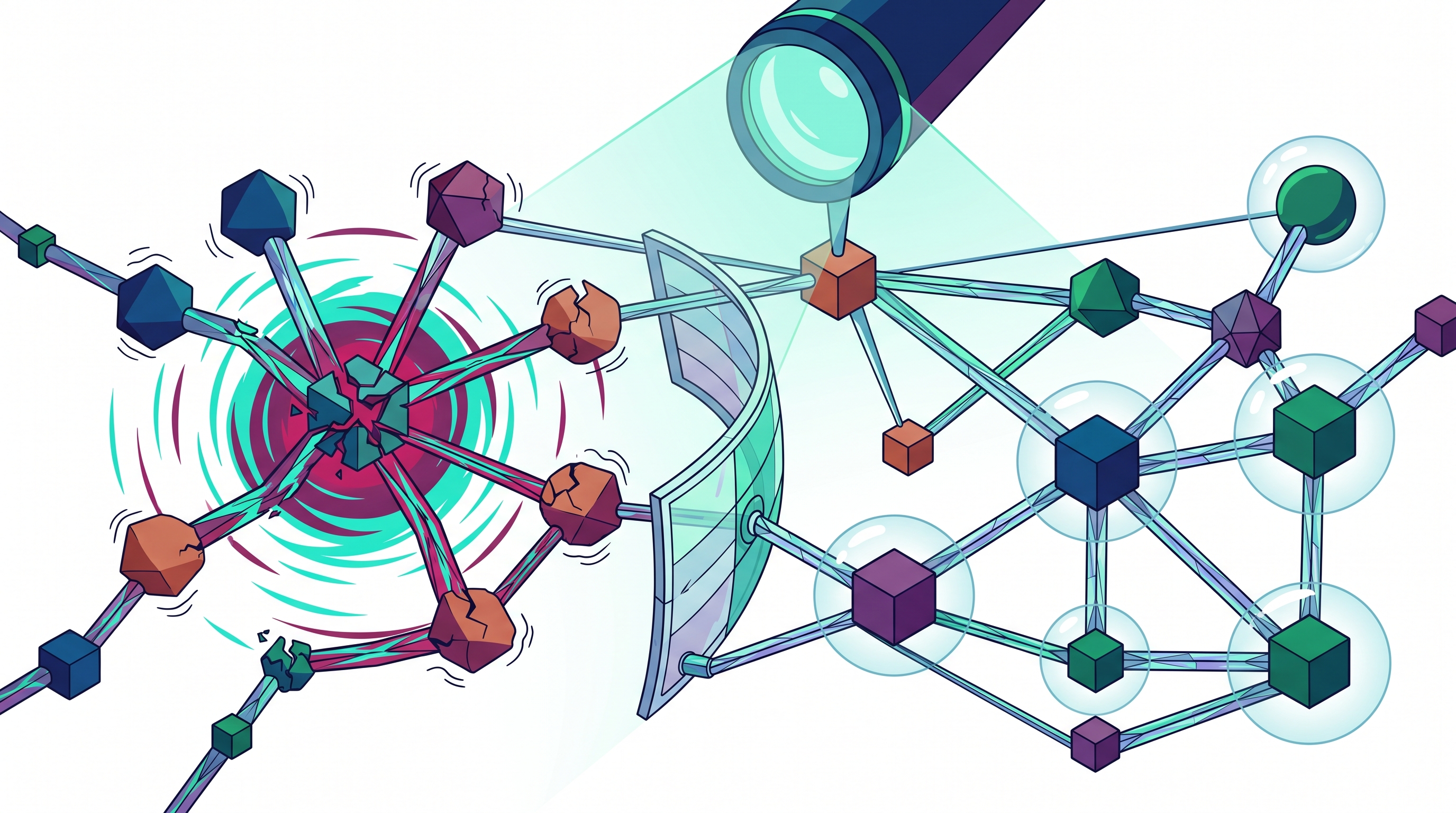

This is the uncomfortable reality of modern distributed systems. Failures rarely stay contained. They move, they amplify, and they often surface in places far removed from their origin. Because of the interconnected nature of today’s architectures, even a small fault can ripple across services, infrastructure layers, and user experiences.

This is precisely why the concept of blast radius matters. It shifts the focus away from the illusion of perfect reliability and toward a more practical and necessary question: when something fails, how far does the damage spread? Understanding and controlling this spread is what separates resilient systems from fragile ones.

Understanding Blast Radius in Distributed Systems

Blast radius, at its core, is a measure of impact. It represents the scope of disruption caused by a failure within a system. While the term may sound technical, the idea itself is simple and highly intuitive. If a single component fails, does it affect only that component, or does it cascade into multiple systems and services?

In distributed environments, this question becomes critical because systems are no longer isolated. Services depend on APIs, APIs depend on databases, and multiple layers often share infrastructure resources. Because of these dependencies, failures can propagate in unexpected ways. A slowdown in one service might lead to timeouts in another, which in turn could trigger retries, increasing load and compounding the issue.

Therefore, blast radius is not just about identifying what breaks, but about understanding how failure travels. A small blast radius indicates that failures are contained and localized, affecting only a limited portion of the system. A large blast radius, however, suggests that failures spread widely, impacting multiple components, users, and business functions.

The distinction between these two scenarios often determines whether an incident remains a minor inconvenience or escalates into a major outage.
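One way to make this concrete is to treat the system as a dependency graph and define the blast radius of a component as everything that depends on it, directly or transitively. The sketch below assumes a small, hypothetical service map (the names in `DEPENDS_ON` are illustrative and not taken from any real system) and walks the graph breadth-first.

```python
from collections import deque

# Hypothetical dependency map: each service lists what it calls directly.
DEPENDS_ON = {
    "web": ["checkout", "search"],
    "checkout": ["payments", "orders-db"],
    "search": ["search-index"],
    "payments": ["orders-db"],
    "orders-db": [],
    "search-index": [],
}


def blast_radius(failed, depends_on):
    """Return every service that depends, directly or transitively, on `failed`."""
    # Invert the edges so we can ask "who calls this service?"
    dependents = {name: set() for name in depends_on}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(service)

    affected, queue = set(), deque([failed])
    while queue:
        current = queue.popleft()
        for caller in dependents.get(current, ()):
            if caller not in affected:
                affected.add(caller)
                queue.append(caller)
    return affected


print(sorted(blast_radius("orders-db", DEPENDS_ON)))     # ['checkout', 'payments', 'web']
print(sorted(blast_radius("search-index", DEPENDS_ON)))  # ['search', 'web']
```

In this toy map, losing the shared database reaches three services, while losing the search index reaches two. A real system would also need to account for fallbacks and partial degradation, which this sketch ignores.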

Why Failures Tend to Propagate in Modern Architectures

On the surface, modern systems are designed to be modular and resilient. Microservices, containerization, and distributed architectures promise independence and scalability. However, in practice, these systems often behave differently under stress.

Failures propagate because systems are deeply interconnected. Services rely on each other for data and functionality, and these dependencies are not always visible or well understood. Additionally, mechanisms designed to improve reliability, such as retries and failovers, can unintentionally amplify failures. When a service begins to fail, retries can increase traffic, placing additional strain on already struggling components.
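The retry effect is easy to underestimate, so a little arithmetic may help. The sketch below assumes a simplified worst case: every layer in a call chain makes the same number of attempts, retries independently, and every attempt fails. The function name and the numbers are illustrative only.

```python
def worst_case_attempts(attempts_per_layer, layers):
    """Upper bound on calls reaching the deepest service for one user request,
    assuming every layer retries independently and every attempt fails."""
    return attempts_per_layer ** layers


# One layer making 3 attempts sends at most 3 calls downstream.
print(worst_case_attempts(3, 1))  # 3
# Three stacked layers, each making 3 attempts, can send up to 27.
print(worst_case_attempts(3, 3))  # 27
```

Under these assumptions, a single user request can turn into 27 calls against the component that is already struggling, which is why retry policies are usually worth reviewing across the whole call chain rather than one service at a time.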

Another factor is shared infrastructure. Even when services are logically separate, they may depend on the same databases, message queues, or compute resources. When these shared components degrade, multiple services can be affected simultaneously.

Moreover, monitoring and alerting systems often lag behind real-time events. By the time a failure is detected, it may have already spread across multiple layers. This delay makes containment more difficult and increases the overall impact.

Because of these factors, failures in distributed systems are rarely linear. They are dynamic and interconnected, and their impact can grow far faster than the size of the original fault would suggest.

The Role of Hidden Coupling in Expanding Blast Radius

One of the most overlooked contributors to a large blast radius is hidden coupling. While systems may appear loosely coupled on the surface, deeper inspection often reveals subtle dependencies that become critical during failure scenarios.

Hidden coupling can take many forms. Services may share a common database, rely on the same configuration service, or depend on a global state that is not immediately visible. In some cases, multiple services may depend on a single upstream API, creating a bottleneck that becomes apparent only when that API fails.

These hidden connections mean that a failure in one component can quickly affect others, even if they are not directly related. Because these dependencies are not always documented or understood, teams are often caught off guard when failures spread.

This creates a false sense of security. Systems that appear distributed and independent may, in reality, behave like tightly coupled monoliths under stress. As a result, the blast radius becomes significantly larger than anticipated.

Understanding and addressing hidden coupling is therefore essential for controlling failure impact.
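A simple starting point for surfacing hidden coupling is to list which resources are used by more than one service. The sketch below assumes a hand-maintained, hypothetical inventory (`USES`); in practice this data might come from configuration, tracing, or infrastructure metadata, none of which is modeled here.

```python
from collections import defaultdict

# Hypothetical inventory: the resources each service relies on.
USES = {
    "checkout": {"orders-db", "config-service", "payments-api"},
    "search": {"search-index", "config-service"},
    "recommendations": {"orders-db", "config-service"},
}


def shared_dependencies(uses, min_services=2):
    """Map each resource used by at least `min_services` services to its consumers."""
    consumers = defaultdict(set)
    for service, resources in uses.items():
        for resource in resources:
            consumers[resource].add(service)
    return {
        resource: sorted(services)
        for resource, services in consumers.items()
        if len(services) >= min_services
    }


for resource, services in sorted(shared_dependencies(USES).items()):
    print(resource, "->", services)
# config-service -> ['checkout', 'recommendations', 'search']
# orders-db -> ['checkout', 'recommendations']
```

Here the configuration service turns out to be a dependency of every service in the inventory, which makes it a candidate for extra isolation or a local fallback.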

Strategies for Limiting Blast Radius in Practice

Reducing blast radius is not about preventing failures entirely, but about designing systems in a way that limits their impact. This requires a combination of architectural decisions, operational practices, and cultural mindset shifts.

One of the most effective approaches is controlled rollout of changes. Instead of deploying updates to the entire system at once, changes should be introduced gradually. By limiting exposure, teams can observe the impact of a change on a small subset of users or services before expanding further. If something goes wrong, the damage is limited to that subset.
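A gradual rollout can be sketched as a deterministic percentage gate. The example below is a minimal illustration, not a full deployment system: the function name `in_rollout`, the feature name, and the user identifiers are all hypothetical. It hashes the user and feature together so that each user lands in a stable bucket.

```python
import hashlib


def in_rollout(user_id: str, feature: str, percent: float) -> bool:
    """Return True if this user falls inside the first `percent` of buckets.

    The bucket is derived from a hash, so the same user always gets the same
    answer, and raising `percent` only ever adds users.
    """
    digest = hashlib.sha256(f"{feature}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < percent * 100


users = [f"user-{i}" for i in range(1000)]
exposed = sum(in_rollout(u, "new-checkout", 5) for u in users)
print(f"{exposed} of {len(users)} users see the change at 5%")
```

Because the bucket does not change between calls, a user who sees the change at 5% still sees it at 25%, which keeps the exposed group stable while the rollout widens.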

Another important strategy is designing for isolation. Systems should be structured in a way that prevents failures from spreading across boundaries. This can involve separating workloads, avoiding shared dependencies, and ensuring that critical services do not rely on a single point of failure. Isolation ensures that even when one component fails, others can continue to function independently.
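One common way to express isolation in code is a bulkhead: a fixed cap on how many calls to a given dependency may be in flight at once, so a slow dependency cannot absorb every worker. The sketch below uses a semaphore from the standard library; the class and exception names are illustrative choices, not part of any specific framework.

```python
import threading


class BulkheadFull(Exception):
    """Raised when the dependency's concurrency budget is exhausted."""


class Bulkhead:
    def __init__(self, max_concurrent: int):
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def call(self, func, *args, **kwargs):
        # Reject immediately instead of queueing behind a slow dependency.
        if not self._slots.acquire(blocking=False):
            raise BulkheadFull("too many in-flight calls to this dependency")
        try:
            return func(*args, **kwargs)
        finally:
            self._slots.release()


# Hypothetical usage: give each downstream dependency its own budget.
reports_bulkhead = Bulkhead(max_concurrent=2)
print(reports_bulkhead.call(lambda: "report generated"))  # report generated
```

Giving each dependency its own bulkhead means that exhausting one budget leaves capacity for calls to everything else.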

Introducing mechanisms that slow down failure propagation is equally important. Circuit breakers, rate limits, and timeouts act as barriers that prevent failures from cascading through the system. While these mechanisms may introduce slight delays or restrictions, they play a crucial role in maintaining overall system stability.
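To show how such a barrier works, here is a minimal circuit breaker sketch. It assumes a simple policy (open after a fixed number of consecutive failures, allow one trial call after a cooldown) and is not thread-safe; the class name, parameters, and defaults are illustrative rather than taken from a particular library.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker is open and the call is skipped."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_timeout:
                # Fail fast so the struggling dependency gets no extra load.
                raise CircuitOpenError("circuit open; failing fast")
            # Cooldown elapsed: fall through and allow one trial call.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._opened_at is not None or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()  # open, or re-open after a failed trial
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

The trade-off mentioned above is visible here: while the circuit is open, callers get an immediate error instead of a slow one, and the failing dependency is given time to recover.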

Observability also plays a key role in limiting blast radius. Without clear visibility into system behavior, it is difficult to understand how failures propagate or where they originate. By investing in comprehensive monitoring and tracing, teams can identify issues early and take action before they escalate.

Finally, adopting a mindset that treats failure as a normal and expected event is essential. Instead of striving for perfection, teams should focus on resilience and recovery. This shift in perspective encourages proactive design and continuous improvement.

The Importance of Observability in Failure Containment

Observability is often discussed in the context of monitoring performance, but its role in controlling blast radius is equally critical. Without visibility, teams are effectively operating in the dark, relying on assumptions rather than evidence.

In complex systems, understanding how components interact is not always straightforward. Dependencies can be dynamic, and behavior can change under different conditions. Observability tools provide insights into these interactions, allowing teams to trace requests, measure latency, and identify bottlenecks.

More importantly, observability enables teams to detect early warning signs. Subtle changes in latency, error rates, or resource utilization can indicate potential issues before they become critical. By identifying these signals early, teams can intervene and prevent failures from spreading.
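As an illustration of an early warning signal, the sketch below tracks the error rate over a sliding window of recent requests and flags when it crosses a threshold. The class name, window size, and threshold are assumptions chosen for the example; real values depend on traffic volume and tolerance for noise.

```python
from collections import deque


class ErrorRateMonitor:
    def __init__(self, window=100, threshold=0.05, min_samples=20):
        self._outcomes = deque(maxlen=window)  # True = success, False = error
        self.threshold = threshold
        self.min_samples = min_samples

    def record(self, ok: bool) -> None:
        self._outcomes.append(ok)

    def error_rate(self) -> float:
        if not self._outcomes:
            return 0.0
        return self._outcomes.count(False) / len(self._outcomes)

    def should_alert(self) -> bool:
        # Require a minimum sample size so one early error does not page anyone.
        return (
            len(self._outcomes) >= self.min_samples
            and self.error_rate() > self.threshold
        )


monitor = ErrorRateMonitor(window=50, threshold=0.05, min_samples=20)
for i in range(50):
    monitor.record(ok=(i % 10 != 0))  # every tenth request fails
print(monitor.error_rate(), monitor.should_alert())  # 0.1 True
```

A check like this is only a signal; what makes it useful is wiring it to an action, such as pausing a rollout or opening a circuit.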

However, observability is only effective when it is actionable. Collecting data is not enough; teams must be able to interpret and act on it quickly. This requires not only the right tools, but also the right processes and mindset.

Ultimately, observability transforms failure management from a reactive process into a proactive one.

Designing Systems That Fail Gracefully

A well-designed system does not aim to eliminate failure, but to handle it gracefully. This means ensuring that when something goes wrong, the system continues to function in a degraded but acceptable state.

Graceful degradation involves prioritizing critical functionality while temporarily limiting less important features. For example, an e-commerce platform might disable recommendations or analytics during high load, while ensuring that core purchasing functionality remains available.

This approach reduces the overall impact of failures and maintains a baseline level of service. It also provides teams with more time to diagnose and resolve issues without causing widespread disruption.

Designing for graceful failure requires careful planning and a deep understanding of system priorities. It involves identifying critical paths, defining fallback mechanisms, and ensuring that systems can operate under constrained conditions.
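The e-commerce example can be sketched as a fallback around a non-critical call. Everything named here (`render_product_page`, the recommender functions, the page fields) is hypothetical; the point is only that the optional feature is allowed to fail without taking the purchase path down with it.

```python
def render_product_page(product_id, user_id, get_recommendations):
    """Build the page data, treating recommendations as optional."""
    page = {"product_id": product_id, "can_purchase": True, "degraded": False}
    try:
        page["recommendations"] = get_recommendations(user_id)
    except Exception:
        # Non-critical feature failed: serve the page without it.
        page["recommendations"] = []
        page["degraded"] = True
    return page


def failing_recommender(user_id):
    raise TimeoutError("recommendation service overloaded")


page = render_product_page("sku-123", "user-42", failing_recommender)
print(page["can_purchase"], page["recommendations"], page["degraded"])  # True [] True
```

The `degraded` flag is a design choice worth keeping: it lets dashboards and alerts see that the system is running in a reduced mode even though users can still buy.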

By focusing on continuity rather than perfection, teams can significantly reduce the effective blast radius of failures.

Common Challenges Teams Face in Managing Blast Radius

Despite understanding the importance of blast radius, many teams struggle to manage it effectively. One of the primary challenges is system complexity. As systems grow, so do their dependencies, making it increasingly difficult to map and understand interactions.

Another challenge is fragmented data. Information about system behavior is often spread across multiple tools and platforms, making it hard to get a unified view. This fragmentation leads to delays in detection and response, increasing the likelihood of failure propagation.

Additionally, organizational factors can play a role. Teams may operate in silos, with limited visibility into other parts of the system. This lack of collaboration can hinder efforts to identify and address cross-cutting issues.

Finally, there is often a gap between theoretical design and real-world behavior. Systems may be designed with isolation in mind, but practical constraints and evolving requirements can introduce unintended dependencies.

Addressing these challenges requires not only technical solutions but also organizational alignment and continuous learning.

An Intelligent Approach to Managing System Impact with Atler Pilot

As systems become more complex, managing blast radius manually becomes increasingly difficult. This is where intelligent platforms like Atler Pilot provide a significant advantage.

Atler Pilot enables teams to move beyond reactive incident management by offering a clearer understanding of how systems behave in real-world conditions. It brings together insights across performance, cost, and system interactions, allowing teams to identify hidden dependencies and potential risk areas.

By providing visibility into how different components are connected and how failures might propagate, Atler Pilot helps teams anticipate impact before it occurs. This proactive approach allows for better decision-making during deployments, improved prioritization of fixes, and more effective containment strategies.

Rather than relying on assumptions, teams can base their actions on real data. This shift from reactive to proactive management is essential for controlling blast radius in modern systems.

So, if you are still relying on assumptions to understand system impact, you are leaving too much to chance. Start using Atler Pilot to gain real visibility into your systems, identify hidden risks, and make smarter deployment decisions.

Conclusion

Failures are inevitable. No system, regardless of how well designed, is immune to unexpected issues. However, the difference between a resilient system and a fragile one lies in how it handles those failures.

Blast radius provides a practical framework for thinking about system impact. It encourages teams to focus not just on preventing failure, but on limiting its spread. By adopting strategies such as controlled rollouts, isolation, observability, and graceful degradation, teams can significantly reduce the impact of failures.

At the same time, leveraging intelligent tools like Atler Pilot can provide the visibility and insights needed to manage complexity effectively.

In the end, the goal is not to build systems that never fail, but to build systems that fail small, recover quickly, and continue to deliver value even under stress.
