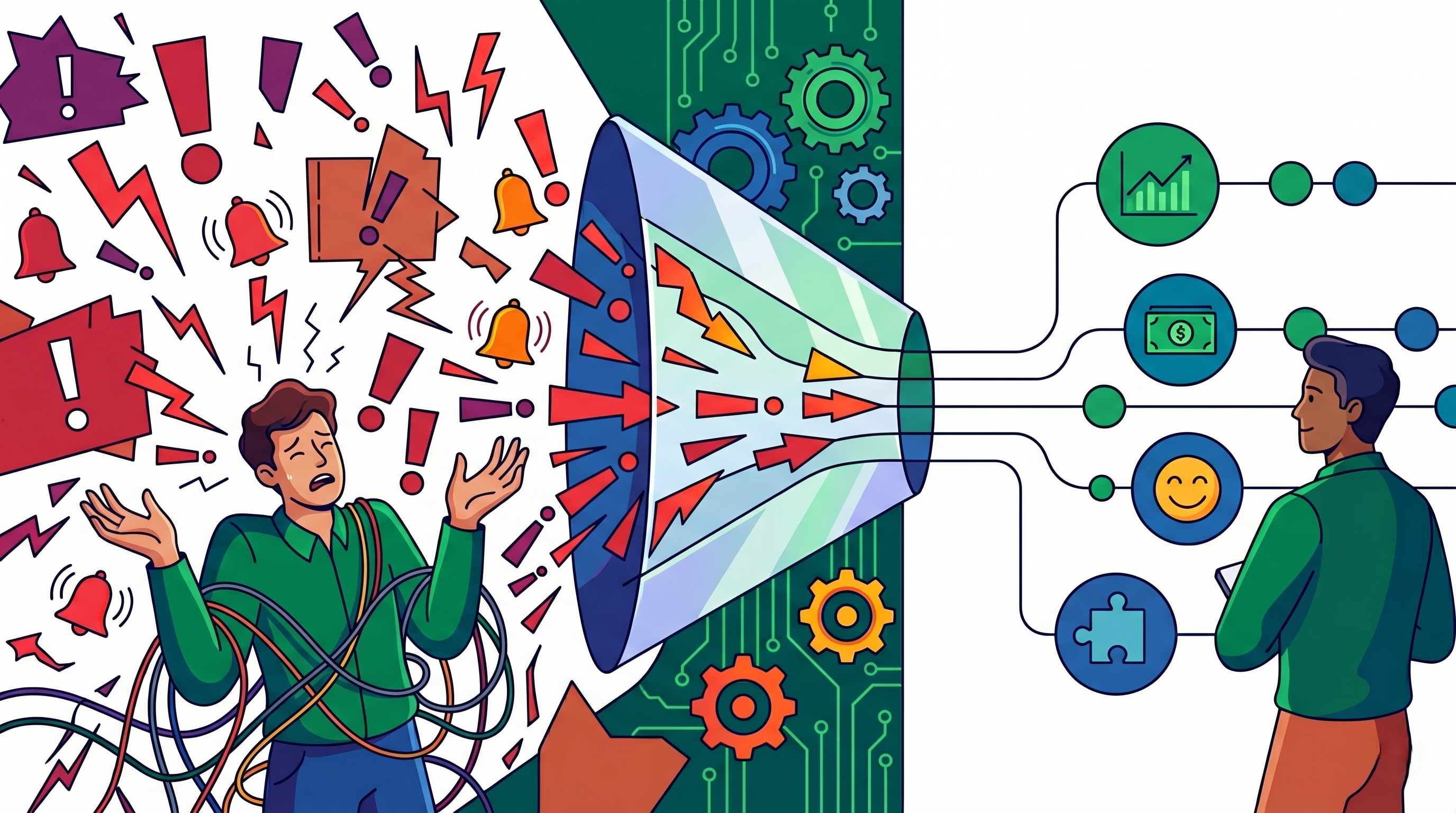

Alerts are meant to protect systems and help teams respond before issues become serious incidents. They notify engineers when performance drops, errors rise, dependencies fail, or customer experience is at risk. In practice, many DevOps teams receive so many alerts every day that the notifications stop helping. Instead of improving awareness, excessive notifications create distraction, frustration, and confusion. This is why alert fatigue has become one of the most common challenges in modern operations.

As infrastructure expands across cloud platforms, containers, APIs, and CI/CD pipelines, monitoring systems generate more signals than ever before. If those signals are unmanaged, engineers begin ignoring notifications or treating all alerts the same. Critical incidents then blend in with routine noise, and response times slow down. Teams may have monitoring coverage on paper yet lose visibility in practice. Intelligent monitoring systems address this problem by improving alert quality rather than simply increasing alert quantity.

In this post, we explore how alert fatigue happens, why it damages performance, and how DevOps teams can prevent it using modern intelligent monitoring strategies.

Why Alert Fatigue Happens

Alert fatigue usually develops gradually rather than appearing overnight. As systems grow, teams keep adding new alerts for services, metrics, thresholds, and dependencies. Legacy alerts often remain active long after they stop being useful. Different teams may also create separate rules without coordination. Over time, alert volume increases faster than operational discipline.

Many organizations receive notifications for CPU spikes, memory changes, disk usage, deployment events, failed jobs, latency shifts, and queue depth. Some of these alerts may be useful occasionally, but many are low priority or repetitive. When engineers face this volume every day, alerts stop feeling urgent. They become background noise instead of trusted signals. That is when fatigue begins.

The Real Cost of Too Many Alerts

The damage caused by alert fatigue goes beyond simple annoyance. Frequent interruptions reduce concentration and slow progress on meaningful engineering work. On-call teams may experience sleep disruption, stress, and long-term burnout. Morale can decline when people feel they are constantly reacting without making progress. This affects both individuals and team performance.

Operationally, the risks are even greater. Important incidents may be missed because they look similar to harmless alerts. Engineers may acknowledge notifications quickly without proper investigation. Response times become slower because urgency loses meaning. Too many alerts can become almost as dangerous as having no alerts at all.

Focus on Business Impact

One of the best ways to reduce noise is to alert on business impact rather than every technical fluctuation. Temporary CPU spikes or short-lived latency changes may look concerning, but often do not affect users. Alerts should focus on conditions that matter to customers and operations. This includes failed logins, payment issues, sustained API errors, or rising user-facing latency. These are the signals that deserve immediate attention.

When alerts connect directly to customer experience, teams trust them more. Engineers know that when a notification appears, something meaningful may be at risk. This reduces wasted attention on minor technical events. It also aligns operations with business priorities. Better relevance creates better response behavior.
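As a rough illustration of alerting on sustained, user-facing conditions rather than momentary blips, the sketch below fires only when the API error rate stays above a limit for several consecutive measurement windows. The class name, the 5% limit, and the three-window requirement are illustrative assumptions, not recommended values.

```python
from collections import deque


class SustainedErrorRateAlert:
    """Fires only when the error rate stays high for several windows in a row."""

    def __init__(self, max_error_rate=0.05, required_windows=3):
        # Both defaults are hypothetical; tune them to your own traffic.
        self.max_error_rate = max_error_rate
        self.recent_breaches = deque(maxlen=required_windows)

    def observe(self, requests, errors):
        """Record one measurement window and return True if the alert should fire."""
        rate = errors / requests if requests else 0.0
        self.recent_breaches.append(rate > self.max_error_rate)
        window_full = len(self.recent_breaches) == self.recent_breaches.maxlen
        return window_full and all(self.recent_breaches)


alert = SustainedErrorRateAlert()
# One bad window followed by recovery never fires; three bad windows in a row do.
samples = [(1000, 80), (1000, 10), (1000, 90), (1000, 70), (1000, 60)]
fired = [alert.observe(requests, errors) for requests, errors in samples]
print(fired)
```

With these samples, only the final window completes a run of three consecutive breaches, so a single short-lived spike never reaches a responder.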

Eliminate Duplicate Alerts

One root cause often creates multiple alerts at the same time. A database slowdown may trigger API latency warnings, queue backlog notifications, transaction failures, and application error alerts simultaneously. Without correlation, responders receive many messages for one issue. This creates confusion and slows decision-making. Engineers spend time sorting alerts instead of solving the incident.

Intelligent monitoring systems group related signals into a single incident view. Instead of five separate notifications, teams receive one clear alert with supporting evidence. This improves clarity and reduces noise immediately. It also helps responders focus on the real source of the problem. Correlation is one of the fastest ways to improve alert quality.
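One simple way to picture correlation is to group alerts that trace back to the same upstream dependency and arrive close together in time. The sketch below assumes a hand-written dependency map and a two-minute grouping window; the service names, the `DEPENDS_ON` table, and the `correlate` function are all hypothetical, and real systems typically infer these relationships from topology or tracing data.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    name: str
    service: str
    timestamp: float  # seconds since some reference point


# Hypothetical dependency map: each service points at the upstream it relies on.
DEPENDS_ON = {
    "api": "orders-db",
    "queue-worker": "orders-db",
    "checkout": "orders-db",
}


def correlate(alerts, window_seconds=120):
    """Group alerts that share a root dependency and start close together in time."""
    incidents = []
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        root = DEPENDS_ON.get(alert.service, alert.service)
        for incident in incidents:
            same_root = incident["root"] == root
            in_window = alert.timestamp - incident["started"] <= window_seconds
            if same_root and in_window:
                incident["alerts"].append(alert)
                break
        else:
            # No open incident matched, so this alert starts a new one.
            incidents.append({"root": root, "started": alert.timestamp, "alerts": [alert]})
    return incidents


alerts = [
    Alert("slow queries", "orders-db", 0),
    Alert("API latency warning", "api", 15),
    Alert("queue backlog", "queue-worker", 30),
    Alert("transaction failures", "checkout", 45),
    Alert("certificate expiring", "cdn", 50),
]
incidents = correlate(alerts)
for incident in incidents:
    print(incident["root"], [a.name for a in incident["alerts"]])
```

Here the four database-related alerts collapse into one incident rooted at `orders-db`, while the unrelated certificate alert stays separate.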

Use Dynamic Thresholds

Static thresholds often fail in modern environments because workloads are not constant. CPU usage that is normal during peak business hours may be suspicious late at night. Traffic patterns can change by region, season, or campaign activity. A fixed number cannot understand these variations. This leads to unnecessary alerts or missed warnings.

Intelligent systems use baselines and dynamic thresholds instead. They learn what normal behavior looks like over time. Alerts then trigger when behavior changes meaningfully rather than crossing arbitrary limits. This greatly reduces false positives while improving true detection. Smarter thresholds create calmer operations.
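A minimal sketch of the idea, assuming a baseline kept per hour of day and a simple "more than k standard deviations from the mean" rule. The `is_anomalous` function, the sample values, and the choice of k = 3 are assumptions for illustration; production systems often use more robust statistics or seasonal models.

```python
import statistics


def is_anomalous(history, value, k=3.0, min_samples=5):
    """Return True when value sits more than k standard deviations from the baseline."""
    if len(history) < min_samples:
        return False  # not enough data to judge, so stay quiet
    mean = statistics.fmean(history)
    spread = statistics.pstdev(history)
    # A tiny floor avoids a zero-width band when the history is perfectly flat.
    return abs(value - mean) > k * max(spread, 1e-9)


# Hypothetical CPU percentages observed at the same hour on previous days.
baseline_by_hour = {
    14: [70, 75, 72, 78, 74],  # peak business hours
    3: [10, 12, 11, 9, 13],    # overnight
}

peak_alert = is_anomalous(baseline_by_hour[14], 72)
night_alert = is_anomalous(baseline_by_hour[3], 72)
print(peak_alert, night_alert)
```

The same 72% CPU reading is unremarkable against the afternoon baseline but far outside the overnight one, which is the distinction a single fixed threshold cannot make.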

Prioritize Severity Clearly

Not every issue should be treated with the same urgency. Yet many teams send similar notifications for small warnings and critical outages. This trains engineers either to treat every alert as an emergency or to ignore them all. When everything feels urgent, nothing feels urgent. Clear severity levels solve this problem.

Organizations should classify alerts into categories such as informational, warning, high priority, and critical. Minor issues can wait for working hours, while severe customer-impacting incidents require immediate action. This helps teams allocate attention intelligently. Urgency should reflect real impact, not just technical activity. Strong severity models improve trust in alerting systems.
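The four categories above can be sketched as an ordered enumeration plus a delivery rule. The two input signals, the mapping rules, and the delivery names (`page`, `ticket`, `queue_for_morning`, `dashboard`) are assumptions made for this example; each organization will define its own criteria.

```python
from enum import IntEnum


class Severity(IntEnum):
    INFORMATIONAL = 1
    WARNING = 2
    HIGH = 3
    CRITICAL = 4


def classify(customer_impacting, sustained):
    """Map two illustrative signals to a severity level."""
    if customer_impacting and sustained:
        return Severity.CRITICAL
    if customer_impacting:
        return Severity.HIGH
    if sustained:
        return Severity.WARNING
    return Severity.INFORMATIONAL


def delivery(severity, working_hours):
    """Decide how an alert is delivered based on severity and time of day."""
    if severity >= Severity.HIGH:
        return "page"  # interrupt someone now
    if severity == Severity.WARNING:
        return "ticket" if working_hours else "queue_for_morning"
    return "dashboard"  # visible, but never interrupts anyone


outage = delivery(classify(customer_impacting=True, sustained=True), working_hours=False)
minor = delivery(classify(customer_impacting=False, sustained=True), working_hours=False)
print(outage, minor)
```

Under these assumed rules, a sustained customer-facing outage pages immediately even at night, while a non-customer-facing warning waits until morning.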

Build Smarter Escalation Paths

Alert fatigue increases when the wrong people are notified repeatedly. If every issue reaches senior engineers or multiple teams at once, frustration rises quickly. Many alerts only need one specialized team to respond. Routing everything everywhere wastes time and attention. Correct ownership matters as much as correct detection.

Modern systems should route alerts based on service ownership, expertise, and incident type. Database issues should reach database teams, while application regressions should reach product engineering teams. This reduces unnecessary noise across the organization. It also speeds up response because the right people are involved earlier. Smart routing creates operational efficiency.
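Ownership-based routing can be as simple as a lookup table with a fallback. In the sketch below, the team names, service names, and the `route` function are hypothetical; a real setup would more likely read ownership from a service catalog than from a hard-coded dictionary.

```python
# Hypothetical ownership table; in practice this might come from a service catalog.
SERVICE_OWNERS = {
    "orders-db": "database-team",
    "checkout-api": "product-engineering",
}

# Some incident types go to a specialist team regardless of which service raised them.
INCIDENT_TYPE_OWNERS = {
    "security": "security-team",
}


def route(alert, fallback="platform-on-call"):
    """Return the single team that should receive this alert."""
    by_type = INCIDENT_TYPE_OWNERS.get(alert.get("incident_type"))
    if by_type:
        return by_type
    return SERVICE_OWNERS.get(alert["service"], fallback)


print(route({"service": "orders-db", "incident_type": "latency"}))
print(route({"service": "checkout-api", "incident_type": "regression"}))
print(route({"service": "unknown-service"}))
```

Returning exactly one team per alert is a deliberate choice in this sketch: it avoids notifying multiple teams at once, and the fallback keeps unowned services from being dropped silently.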

Use Suppression During Planned Events

Some alerts happen during normal and expected activities. Deployments may restart services briefly, maintenance windows may reduce capacity, and load tests may create spikes. If monitoring systems ignore context, teams receive unnecessary alarms during healthy operations. This teaches people to distrust alerts. Over time, unnecessary noise becomes normalized.

Intelligent monitoring allows maintenance windows, deployment awareness, and temporary suppression rules. During known events, expected behavior does not trigger urgent notifications. This preserves attention for genuine problems. Teams remain confident that alerts still matter. Context-aware suppression is a practical improvement that many organizations overlook.
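A context-aware suppression check might look like the following sketch, which assumes maintenance windows are registered per service with a start and end time. The `MaintenanceWindow` type, the `is_suppressed` function, and the example timestamps are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class MaintenanceWindow:
    service: str
    start: datetime
    end: datetime
    reason: str


def is_suppressed(service, fired_at, windows):
    """True when the alert fired inside a planned window for the same service."""
    return any(
        w.service == service and w.start <= fired_at < w.end
        for w in windows
    )


deploy_start = datetime(2024, 1, 10, 2, 0, tzinfo=timezone.utc)
windows = [
    MaintenanceWindow(
        "checkout-api", deploy_start, deploy_start + timedelta(minutes=30), "deployment"
    ),
]

during = is_suppressed("checkout-api", deploy_start + timedelta(minutes=5), windows)
after = is_suppressed("checkout-api", deploy_start + timedelta(hours=1), windows)
other = is_suppressed("orders-db", deploy_start + timedelta(minutes=5), windows)
print(during, after, other)
```

Scoping each window to one service and a bounded time range means a deployment to one service does not hide a genuine problem elsewhere, and suppression ends on its own when the window closes.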

Add Context to Every Alert

A vague alert creates more work than value. If a notification only says “latency high,” responders still need to investigate the service name, region, impact level, and possible causes. This slows the response during important moments. Engineers need information immediately, not another puzzle. Context turns alerts into useful action.

Modern alerts should include service ownership, impacted environment, severity, related metrics, recent deployments, and suggested runbooks. With this information, responders can make decisions faster. They spend less time gathering basics and more time solving the issue. Better context often shortens mean time to resolution (MTTR). A smarter alert is an informed alert.
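To make the difference concrete, the sketch below models an alert that carries the context fields named above and renders them into a notification. The `EnrichedAlert` class, its field names, and every example value (service, owner, metric, deployment, runbook path) are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class EnrichedAlert:
    summary: str
    service: str
    owner: str
    environment: str
    severity: str
    related_metrics: dict = field(default_factory=dict)
    recent_deployments: list = field(default_factory=list)
    runbook: str = ""

    def render(self):
        """Build the notification text a responder would see."""
        lines = [
            f"[{self.severity.upper()}] {self.summary}",
            f"Service: {self.service} ({self.environment}), owner: {self.owner}",
        ]
        for name, value in self.related_metrics.items():
            lines.append(f"Metric: {name} = {value}")
        for deployment in self.recent_deployments:
            lines.append(f"Recent deployment: {deployment}")
        if self.runbook:
            lines.append(f"Runbook: {self.runbook}")
        return "\n".join(lines)


alert = EnrichedAlert(
    summary="latency high",
    service="checkout-api",
    owner="product-engineering",
    environment="production",
    severity="critical",
    related_metrics={"p95_latency_ms": 2400},
    recent_deployments=["checkout-api build deployed 12 minutes ago"],
    runbook="runbooks/checkout-latency.md",
)
message = alert.render()
print(message)
```

The bare "latency high" summary is still present, but the responder no longer has to look up which service, which environment, or what changed recently.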

Measure Alert Quality

Many teams track uptime but never measure how effective their alerts are. Without measurement, noisy systems continue indefinitely. Organizations should review metrics such as alerts per engineer, false positive rate, duplicate alert rate, after-hours pages, and percentage of actionable alerts. These numbers reveal whether monitoring is helping or hurting. What gets measured gets improved.

Regular review helps identify weak rules and repetitive sources of noise. Teams can retire low-value alerts or redesign poor thresholds. They can also track progress over time. Alert quality should be treated as an operational KPI. Strong teams improve their monitoring continuously.
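The review metrics named in this section can be computed from a simple log of past alerts. The sketch below assumes each alert has been labeled with boolean flags during review; the flag names and the `alert_quality` function are assumptions for illustration.

```python
def alert_quality(alerts, engineers_on_call):
    """Summarize a review period. Each alert is a dict of boolean flags."""
    total = len(alerts)
    if total == 0 or engineers_on_call == 0:
        return {}

    def share(flag):
        # Fraction of all alerts in the period that carry this flag.
        return sum(1 for a in alerts if a.get(flag)) / total

    return {
        "alerts_per_engineer": total / engineers_on_call,
        "false_positive_rate": share("false_positive"),
        "duplicate_rate": share("duplicate"),
        "after_hours_pages": sum(
            1 for a in alerts if a.get("after_hours") and a.get("paged")
        ),
        "actionable_percentage": 100 * share("actionable"),
    }


# Hypothetical labeled alerts from one review period.
reviewed = [
    {"actionable": True, "paged": True, "after_hours": True},
    {"actionable": True, "paged": True},
    {"false_positive": True, "paged": True},
    {"duplicate": True},
]
report = alert_quality(reviewed, engineers_on_call=2)
print(report)
```

Tracking the same dictionary from one review to the next gives a team a simple way to see whether retiring or redesigning rules is actually reducing noise.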

Automate Common Responses

Some alerts represent issues that can be solved automatically. Restarting a failed container, scaling resources during a spike, or rerunning a job that failed transiently often does not need manual intervention. If engineers are constantly paged for these repetitive tasks, fatigue grows quickly. Automation can remove much of this burden. It allows people to focus on more valuable work.

Automation should be applied carefully with confidence thresholds and safeguards. The goal is not to remove humans entirely. It is to reduce unnecessary interruptions for predictable events. When done well, automation improves both speed and morale. Intelligent monitoring becomes even stronger when paired with intelligent response.
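One way to combine automation with the safeguards described above is to run an automatic fix only when the detection is confident and the fix has not already been tried too many times, and to page a human otherwise. The `handle` function, the 0.9 confidence floor, the two-attempt limit, and the alert fields are assumptions for this sketch.

```python
def handle(alert, remediations, attempts, min_confidence=0.9, max_attempts=2):
    """Run a known automatic fix, or hand the alert to a human."""
    action = remediations.get(alert["kind"])
    key = (alert["kind"], alert["target"])
    confident = alert.get("confidence", 0.0) >= min_confidence
    under_limit = attempts.get(key, 0) < max_attempts
    if action is None or not confident or not under_limit:
        return "page_human"
    attempts[key] = attempts.get(key, 0) + 1
    action(alert["target"])
    return "auto_remediated"


restarted = []
# list.append stands in for a real restart call in this example.
remediations = {"container_failed": restarted.append}
attempts = {}
alert = {"kind": "container_failed", "target": "web-1", "confidence": 0.97}
outcomes = [handle(alert, remediations, attempts) for _ in range(3)]
print(outcomes)
```

The attempt limit is the important safeguard here: if the same container keeps failing after two automatic restarts, the third occurrence reaches a person instead of looping silently.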

Where Atler Pilot Creates Real Value

Many teams already collect large amounts of monitoring data but struggle to turn it into clarity. They know issues exist, but priorities remain unclear, and noise remains high. Engineers often spend more time reacting than optimizing. That is where Atler Pilot creates measurable value. It helps organizations transform fragmented cloud and operational signals into actionable intelligence.

With Atler Pilot, teams gain clearer visibility into efficiency gaps, optimization opportunities, and areas demanding attention. Instead of manually chasing scattered metrics, they can focus on smarter decisions and faster action. This supports stronger control with less operational chaos. If your environment creates more alerts than insight, Atler Pilot can help change that. Start with Atler Pilot and turn signals into smarter outcomes.

Conclusion

Alert fatigue is not just a workflow issue. It is a reliability risk that weakens response quality and team confidence. When engineers stop trusting alerts, incidents become harder to detect and resolve. Burnout rises while operational performance declines. Smarter monitoring is the real solution.

The best DevOps teams are not the ones receiving the most alerts. They are the ones receiving the right alerts with the right priority and the right context. Intelligent monitoring replaces noise with clarity. That shift helps teams move faster, stay focused, and protect systems more effectively.
