For years, infrastructure budgeting followed a predictable pattern: plan ahead, allocate resources, and adjust over time. Cloud computing disrupted this model entirely, introducing variability, elasticity, and real-time cost dynamics.

In modern systems, costs are no longer fixed. They are directly influenced by engineering decisions, system behavior, and usage patterns. This shift has driven the evolution of budget forecasting in data-driven cloud operations, transforming it from a periodic financial process into a continuous, insight-driven discipline.

In this blog, we will examine how forecasting has evolved in response to cloud complexity, the limitations of traditional approaches, and the role of real-time data and observability in improving accuracy. We will also explore the challenges organizations face in aligning cost with system behavior and how to solve them. So, let's get started.

From Static Budgeting to Dynamic Forecasting

In traditional IT environments, budgeting was relatively straightforward. Organizations invested in hardware upfront, amortized costs over time, and operated within predictable expense boundaries. Forecasting was largely based on historical trends and incremental growth assumptions. The cloud changed all of that.

With on-demand provisioning, autoscaling, and consumption-based pricing, costs became highly variable. A single deployment could double infrastructure usage. A misconfigured service could generate thousands of dollars in unexpected charges overnight.

This shift forced organizations to move from static budgeting to dynamic forecasting. Instead of relying solely on past data, modern forecasting models incorporate real-time usage, system behavior, and growth patterns.
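To make the contrast concrete, here is a minimal sketch of a run-rate forecast, one of the simplest ways to fold current usage into a projection. It assumes spend accrues roughly evenly across the month, and the dollar figures and the function name `run_rate_forecast` are hypothetical.

```python
import calendar
from datetime import date


def run_rate_forecast(month_to_date_spend: float, today: date) -> float:
    """Project month-end spend by extending the average daily spend so far."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    daily_rate = month_to_date_spend / today.day
    return daily_rate * days_in_month


# Hypothetical figures: $4,200 spent by June 10 against a $10,000 static budget.
static_budget = 10_000.0
projected = run_rate_forecast(4_200.0, date(2024, 6, 10))
overrun = projected - static_budget

print(f"Projected month-end spend: ${projected:,.2f}")
print(f"Projected overrun vs. static budget: ${overrun:,.2f}")
```

A static budget would only reveal the overrun when the invoice arrives. A projection like this can be recomputed every day as new usage data comes in.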

The Rise of Data-Driven Forecasting

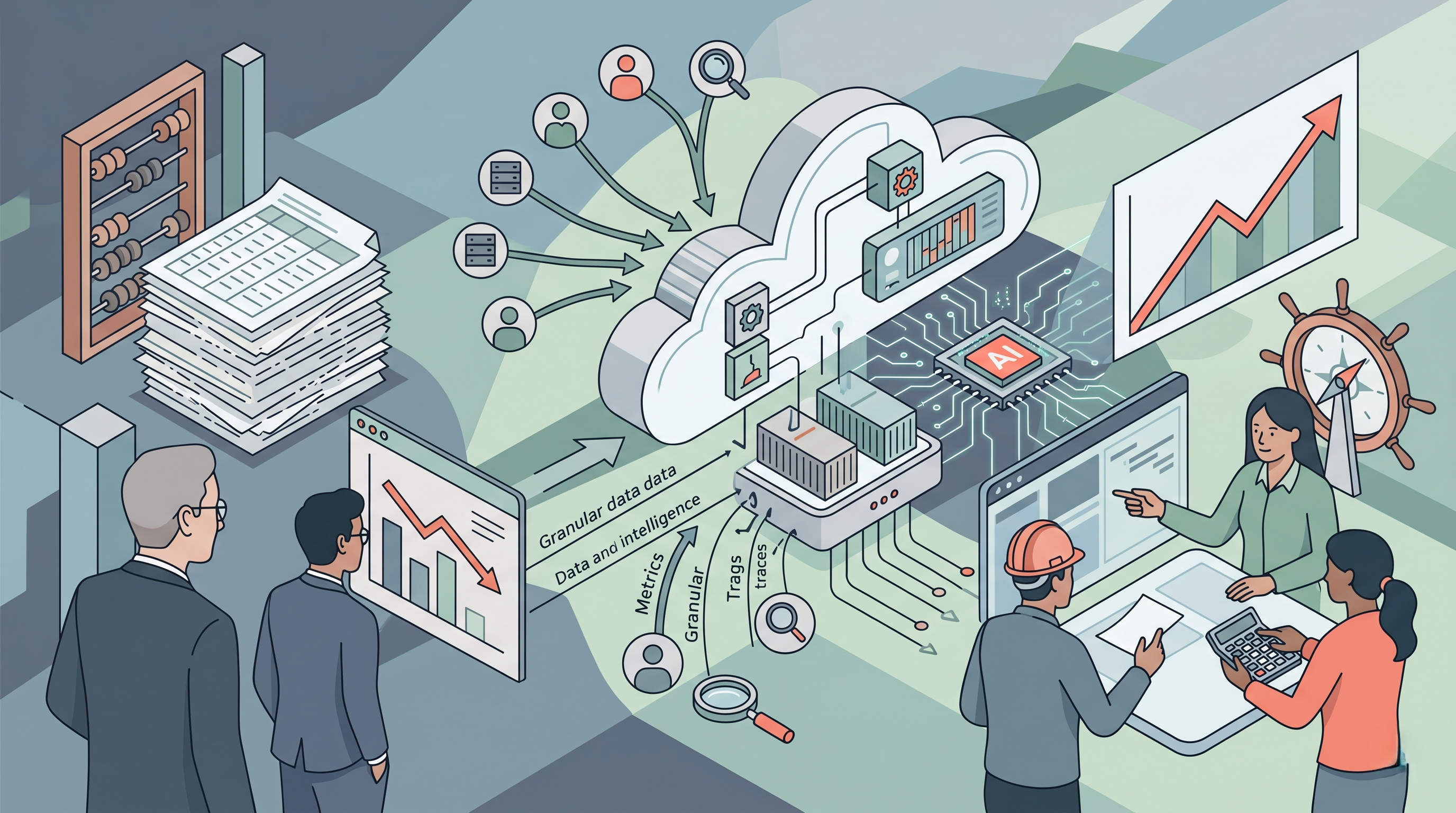

As cloud systems scaled, it became clear that intuition and spreadsheets were not enough. Forecasting required granular, real-time data across multiple dimensions such as compute, storage, networking, and service-level usage.

This led to the emergence of data-driven forecasting models, where decisions are powered by:

Historical usage trends

Real-time consumption metrics

Deployment patterns

User behavior analytics

Instead of estimating costs at a high level, organizations began forecasting at the level of:

Individual services

Features

Environments (dev, staging, production)

This shift enabled teams to answer more meaningful questions:

What is the cost impact of a new feature release?

How will traffic growth affect next month’s bill?

Which services are driving the most cost variance?

Forecasting became not just more accurate but more actionable.
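As a rough illustration of the last question, the sketch below ranks services by how much their daily cost fluctuates, using the coefficient of variation (standard deviation divided by mean). The service names and cost figures are made up, and a real pipeline would read them from billing exports rather than a hard-coded dictionary.

```python
from statistics import mean, pstdev

# Hypothetical daily cost (in dollars) per service over five days.
daily_cost_by_service = {
    "checkout-api": [120.0, 118.0, 125.0, 122.0, 119.0],
    "search": [80.0, 82.0, 140.0, 79.0, 81.0],
    "batch-reports": [40.0, 40.0, 41.0, 39.0, 40.0],
}


def rank_by_variability(costs):
    """Rank services by coefficient of variation of daily cost, highest first."""
    scores = {name: pstdev(series) / mean(series) for name, series in costs.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


ranking = rank_by_variability(daily_cost_by_service)
for name, cv in ranking:
    print(f"{name}: {cv:.1%} variation")
```

Dividing by the mean keeps a large but steady service from outranking a small but erratic one, which is usually what matters for forecast reliability.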

The Problem with Traditional Forecasting Models

Despite advancements, many organizations still rely on outdated forecasting approaches. These methods often fail because they assume stability in systems that are inherently dynamic.

One major limitation is the reliance on aggregated data. High-level cost summaries may hide critical variations at the service or resource level. Without granular insights, forecasts become less reliable.
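A small, hypothetical example shows how this happens. In the sketch below, the total bill is identical month over month, yet one service grew sharply while another shrank. All names and figures are invented for illustration.

```python
# Hypothetical monthly cost per service, in dollars.
last_month = {"checkout-api": 3000.0, "search": 2000.0, "batch-reports": 1000.0}
this_month = {"checkout-api": 2200.0, "search": 2900.0, "batch-reports": 900.0}

# The aggregate view: no change at all.
total_change = sum(this_month.values()) - sum(last_month.values())

# The granular view: large movements that cancel each other out.
per_service_change = {
    name: this_month[name] - last_month[name] for name in last_month
}

print(f"Total change: {total_change:+.2f}")
for name, change in per_service_change.items():
    print(f"{name}: {change:+.2f}")
```

A forecast built on the flat total would miss that `search` grew by 45 percent, a trend that will eventually show up in the aggregate once the offsetting savings stop.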

Another challenge is the lack of real-time feedback. Traditional models operate on delayed billing data, which means organizations react to costs after they have already occurred.

Additionally, modern architectures such as microservices and containerized workloads introduce complexity that traditional models cannot easily capture. Costs are no longer tied to a single system but distributed across interconnected components.

As a result, forecasts often deviate significantly from actual spending, leading to budget overruns and reactive cost control measures.

The Role of Observability in Forecasting Accuracy

One of the most significant advancements in cloud forecasting is the integration of observability data.

Observability, through metrics, logs, and traces, provides deep visibility into system behavior. When combined with cost data, it enables organizations to understand not just how much they are spending, but why. For example, instead of simply observing a cost spike, teams can correlate it with:

Increased request volume

A recent deployment

Inefficient resource utilization

This contextual understanding transforms forecasting from a financial estimate into an engineering-informed prediction.
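One simple way to put this into practice is to split a cost change into a part explained by traffic and a part explained by cost per request. The sketch below is an illustrative decomposition, not a standard formula from any particular tool, and the request counts and costs are hypothetical.

```python
def explain_cost_change(prev_cost, prev_requests, curr_cost, curr_requests):
    """Split a cost change into a traffic-driven part and a unit-cost-driven part.

    The two parts always add up to curr_cost - prev_cost.
    """
    prev_unit = prev_cost / prev_requests
    curr_unit = curr_cost / curr_requests
    # Extra requests priced at the old cost per request.
    volume_effect = (curr_requests - prev_requests) * prev_unit
    # Change in cost per request applied to the current request volume.
    unit_cost_effect = (curr_unit - prev_unit) * curr_requests
    return volume_effect, unit_cost_effect


# Hypothetical week-over-week figures for one service.
volume_effect, unit_cost_effect = explain_cost_change(
    prev_cost=1000.0, prev_requests=1_000_000,
    curr_cost=1800.0, curr_requests=1_200_000,
)
print(f"Due to more traffic: ${volume_effect:,.2f}")
print(f"Due to higher cost per request: ${unit_cost_effect:,.2f}")
```

Here most of the increase comes from a higher cost per request rather than from traffic, which points toward a deployment or utilization problem rather than organic growth.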

Combining observability and cost data also enables proactive decision-making. Teams can simulate the cost impact of scaling a service, changing configurations, or launching new features before those changes are implemented.
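A what-if calculation of this kind can be very small. The sketch below estimates the monthly cost of scaling a service from six to ten instances. The hourly rate is a placeholder rather than a real price, and 730 hours per month is a common approximation (8,760 hours divided by 12).

```python
HOURS_PER_MONTH = 730  # approximation: 8,760 hours per year / 12 months


def monthly_compute_cost(instances: int, hourly_rate: float) -> float:
    """Estimate monthly cost for always-on instances at a flat hourly rate."""
    return instances * hourly_rate * HOURS_PER_MONTH


# Hypothetical scenario: scale from 6 to 10 instances at $0.20 per hour each.
current = monthly_compute_cost(instances=6, hourly_rate=0.20)
proposed = monthly_compute_cost(instances=10, hourly_rate=0.20)
delta = proposed - current

print(f"Current: ${current:,.2f}  Proposed: ${proposed:,.2f}  Delta: ${delta:+,.2f}")
```

This ignores autoscaling, discounts, and data transfer, so it is a lower bound on realism, but even a rough estimate lets the cost question be asked before the change ships.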

Real-Time Forecasting: The Shift Toward Continuous Cost Awareness

Modern cloud operations demand real-time visibility not just into performance, but into cost.

This has led to the rise of continuous forecasting, where cost projections are updated dynamically based on live data. Instead of waiting for monthly reports, teams can monitor cost trends as they evolve. Real-time forecasting allows organizations to:

Detect anomalies early

Adjust resource usage proactively

Align engineering decisions with budget constraints

Real-time forecasting also bridges the gap between finance and engineering teams. Cost is no longer a retrospective metric; it becomes a shared, real-time signal.
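As a sketch of what early anomaly detection can look like, the example below flags any day whose cost sits far outside the trailing seven days, using a simple z-score. The window, threshold, and cost series are assumptions chosen for illustration, and production systems typically use more robust methods that account for seasonality.

```python
from statistics import mean, pstdev


def find_anomalies(daily_costs, window=7, threshold=3.0):
    """Return indexes of days whose cost deviates strongly from the trailing window."""
    anomalies = []
    for i in range(window, len(daily_costs)):
        history = daily_costs[i - window:i]
        mu = mean(history)
        sigma = pstdev(history)
        if sigma == 0:
            continue  # flat history: the z-score is undefined, so skip this day
        z_score = (daily_costs[i] - mu) / sigma
        if abs(z_score) > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical daily costs in dollars; day 8 contains a spike.
costs = [100, 102, 98, 101, 99, 103, 100, 101, 180, 102]
anomalous_days = find_anomalies(costs)
print(f"Anomalous day indexes: {anomalous_days}")
```

Running a check like this on daily or hourly data surfaces the spike within a day, rather than at the end of the billing cycle.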

Challenges in Building Effective Forecasting Systems

Despite its importance, building accurate forecasting systems is not trivial.

One of the biggest challenges is data fragmentation. Cost data, usage metrics, and system events often exist in separate tools, making it difficult to create a unified view.

Another challenge is variability. Cloud workloads can change rapidly due to traffic fluctuations, feature releases, or scaling events. Capturing this variability in forecasting models requires sophisticated analysis.

There is also the issue of attribution. In complex systems, it can be difficult to determine which team, service, or feature is responsible for specific costs. Without clear attribution, forecasting becomes less actionable.
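One common starting point for attribution is tagging: sum the costs that carry an owner tag, then spread untagged costs across teams in proportion to their tagged spend. The sketch below assumes billing line items are available as dictionaries with hypothetical `team` and `cost` keys. Proportional allocation is only one possible policy.

```python
from collections import defaultdict


def attribute_costs(line_items):
    """Sum tagged costs per team, then spread untagged cost in proportion to tagged spend."""
    direct = defaultdict(float)
    untagged = 0.0
    for item in line_items:
        team = item.get("team")  # hypothetical tag key
        if team:
            direct[team] += item["cost"]
        else:
            untagged += item["cost"]

    tagged_total = sum(direct.values())
    if tagged_total == 0:
        # Nothing to apportion against; report the untagged cost on its own.
        return {"unattributed": untagged}

    return {
        team: cost + untagged * (cost / tagged_total)
        for team, cost in direct.items()
    }


# Hypothetical billing line items.
line_items = [
    {"team": "payments", "cost": 400.0},
    {"team": "payments", "cost": 200.0},
    {"team": "search", "cost": 300.0},
    {"team": None, "cost": 300.0},  # untagged resource
]
allocated = attribute_costs(line_items)
for team, cost in allocated.items():
    print(f"{team}: ${cost:,.2f}")
```

The larger the untagged share, the less trustworthy the split becomes, which is why tagging coverage is usually tracked alongside the forecast itself.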

Finally, forecasting models must balance accuracy with usability. Highly complex models may produce accurate predictions but be difficult for teams to interpret and act upon.

The Emergence of FinOps and Collaborative Forecasting

To address these challenges, many organizations are adopting FinOps, a practice that brings finance, engineering, and operations together to manage cloud costs collaboratively.

In this model, forecasting is no longer owned solely by finance teams. Engineers play an active role by:

Understanding the cost impact of their decisions

Monitoring resource usage

Optimizing system design

This collaborative approach leads to more accurate forecasts and better alignment between technical and financial goals. Forecasting becomes a shared responsibility, embedded into daily workflows rather than isolated in periodic reviews.

From Forecasting to Prediction: The Role of AI and Automation

As data-driven practices mature, forecasting is evolving into predictive cost intelligence.

Advanced systems now leverage machine learning to:

Identify usage patterns

Detect anomalies

Predict future costs with greater accuracy

These systems can automatically adjust forecasts based on changing conditions, reducing the need for manual intervention.

Automation also plays a key role. By integrating forecasting with operational workflows, organizations can trigger actions, such as scaling down resources or adjusting configurations, based on predicted costs.
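The sketch below shows the general shape of such a loop. A plain least-squares trend line stands in for a machine learning model here, and the action names, the 10 percent tolerance, and the budget figure are all hypothetical. A real system would call its own scaling or alerting APIs instead of returning a string.

```python
def fit_line(ys):
    """Least-squares fit of y = slope * x + intercept for x = 0, 1, 2, ...

    Requires at least two data points.
    """
    n = len(ys)
    x_mean = (n - 1) / 2
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(ys))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def projected_month_total(daily_costs, days_in_month):
    """Actual spend so far plus the trend line extended over the remaining days."""
    slope, intercept = fit_line(daily_costs)
    remaining_days = range(len(daily_costs), days_in_month)
    future = sum(slope * day + intercept for day in remaining_days)
    return sum(daily_costs) + future


def recommend_action(projected, budget, tolerance=0.10):
    """Map a predicted cost to a hypothetical operational action."""
    if projected > budget * (1 + tolerance):
        return "scale_down_non_production"
    if projected > budget:
        return "alert_owners"
    return "no_action"


# Hypothetical data: five days of spend growing by $10 per day, $6,000 budget.
daily = [100.0, 110.0, 120.0, 130.0, 140.0]
projected = projected_month_total(daily, days_in_month=30)
action = recommend_action(projected, budget=6000.0)
print(f"Projected month total: ${projected:,.2f} -> {action}")
```

Keeping the prediction and the policy in separate functions makes it straightforward to swap in a better model later without touching the rules that decide what to do.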

This represents a shift from passive forecasting to active cost management.

Introducing Atler Pilot: Bridging the Gap Between Insight and Action

While the evolution of budget forecasting has brought significant advancements, many organizations still struggle with one fundamental problem: turning insights into action.

This is where Atler Pilot comes in.

Atler Pilot is designed to go beyond traditional cost monitoring tools by providing real-time, actionable intelligence for cloud cost management. Instead of simply showing what has already happened, it helps teams understand what is happening and what will happen next.

With Atler Pilot, organizations can:

Track the cost impact of deployments and configuration changes in real time

Map cost variations directly to system events

Identify inefficiencies before they escalate

Gain granular visibility across services and environments

What sets Atler Pilot apart is its focus on contextual cost intelligence. By integrating system behavior with cost data, it enables teams to make informed decisions quickly and confidently.

In a landscape where every engineering decision has financial implications, Atler Pilot acts as a bridge between observability and cost optimization.

The Future of Budget Forecasting in Cloud Operations

The future of cloud forecasting lies in deeper integration, greater automation, and enhanced intelligence.

Forecasting will become:

More granular, down to feature-level cost predictions

More real-time, with continuous updates

More predictive, driven by AI and machine learning

At the same time, tools will evolve to provide not just insights, but recommendations and automated actions.

As cloud environments continue to grow in complexity, the ability to forecast and control costs will become a key competitive advantage.

Conclusion

Budget forecasting in cloud operations has evolved from a static, finance-driven process into a dynamic, data-driven discipline.

Today, it is not just about predicting costs. It is about understanding the relationship between system behavior and financial impact. Organizations that embrace this evolution gain more than cost control. They gain the ability to make smarter engineering decisions, scale efficiently, and align technical innovation with financial sustainability.

And in this journey, tools like Atler Pilot play a crucial role in transforming forecasting from a passive exercise into a strategic capability. Because in modern cloud environments, the goal is no longer just to predict costs but to control them intelligently.
