I’ve been in dozens of architectural reviews where the conversation follows a predictable, almost rhythmic pattern. The engineering lead beams with pride while demonstrating how their new microservices architecture handles a 10x traffic spike without a single dropped packet. It is a masterpiece of elasticity. But then, the FinOps lead clears their throat, opens a tab labeled "Cloud Bill," and the room goes silent. The "elasticity" that kept the app alive also triggered an unforecasted $40,000 jump in spend because a few hundred pods were set with "safety-first" resource requests that were never actually utilized.

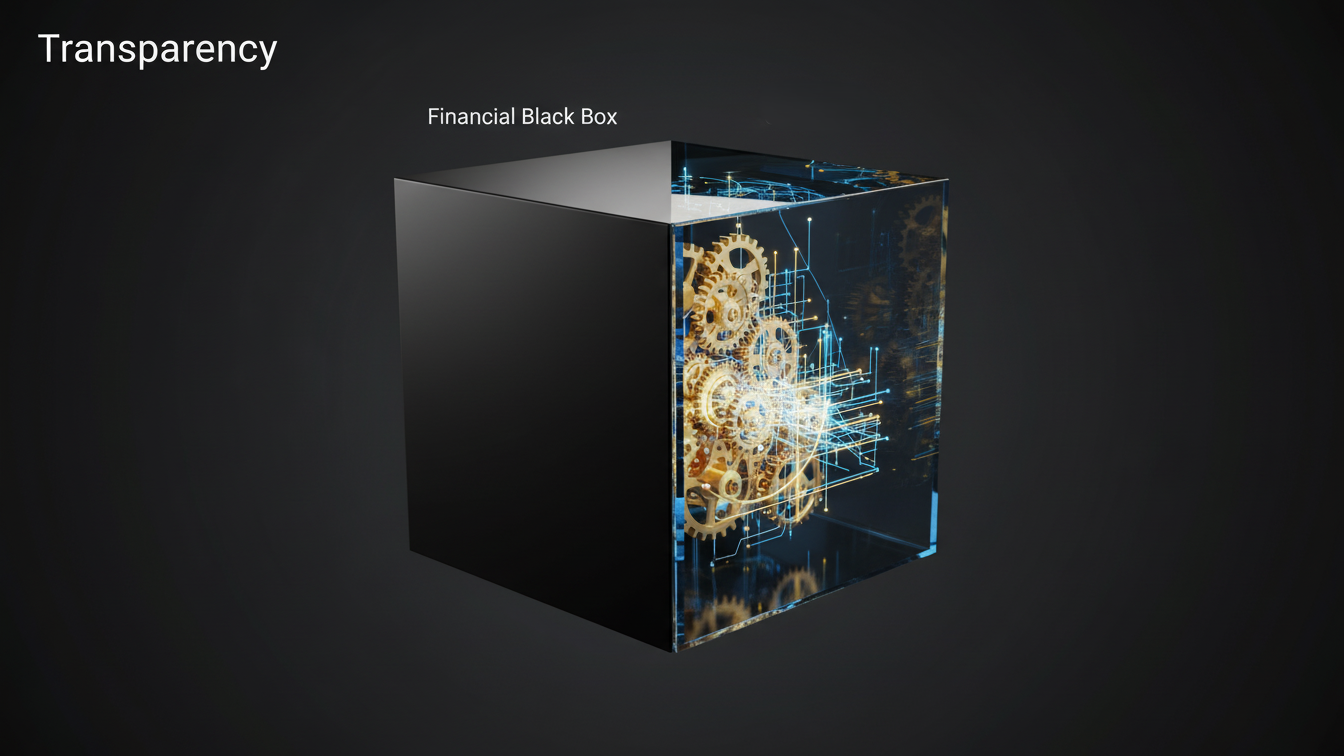

That silence is the sound of the Kubernetes cost management blind spot. While Kubernetes (K8s) is the undisputed champion of container orchestration, it is also a financial black box for most organizations. In our quest for engineering velocity, we’ve built systems that are incredibly good at spending money, but notoriously bad at explaining why. Addressing this is about turning the black box into a glass box where every pod and namespace carries its own weight in value.

The Visibility Gap in the Cloud Bill

The primary reason Kubernetes remains a blind spot is a fundamental architectural disconnect. Your cloud provider (AWS, Azure, or GCP) bills you for infrastructure: the virtual machines (nodes), the storage volumes, and the load balancers. Kubernetes, however, thinks in terms of logical abstractions: pods, services, and namespaces. A single EC2 instance on your bill might host fifty containers belonging to four different engineering teams. When that instance costs $500 a month, a standard cloud billing report cannot tell you which team is responsible for 80% of its utilization.
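To make the allocation problem concrete, here is a minimal Python sketch that splits one node's monthly cost across teams in proportion to each pod's CPU request and reports any capacity nobody requested as an "(unallocated)" bucket. The pod records, team names, and the 8-vCPU node size are hypothetical, and real allocation tools typically also weigh memory, GPUs, and actual usage, so treat this as an illustration of the idea rather than a complete cost model.

```python
from collections import defaultdict


def allocate_node_cost(node_monthly_cost: float,
                       node_cpu_allocatable: float,
                       pods: list[dict]) -> dict[str, float]:
    """Split one node's monthly cost across teams by CPU requests.

    Each pod dict is assumed (hypothetically) to carry a "team" label and a
    "cpu_request" in cores. Capacity no pod requested is "(unallocated)".
    """
    total_requested = sum(p["cpu_request"] for p in pods)
    if total_requested > node_cpu_allocatable:
        raise ValueError("requests exceed the node's allocatable CPU")

    cost_per_core = node_monthly_cost / node_cpu_allocatable
    shares: dict[str, float] = defaultdict(float)
    for pod in pods:
        shares[pod["team"]] += pod["cpu_request"] * cost_per_core

    # Whatever was not requested is still paid for; surface it explicitly.
    shares["(unallocated)"] = node_monthly_cost - sum(shares.values())
    return dict(shares)


# Hypothetical node: $500/month, 8 allocatable vCPUs, four teams.
pods = [
    {"team": "team-a", "cpu_request": 2.0},
    {"team": "team-a", "cpu_request": 2.0},
    {"team": "team-b", "cpu_request": 1.0},
    {"team": "team-c", "cpu_request": 1.0},
    {"team": "team-d", "cpu_request": 0.5},
]
for team, cost in allocate_node_cost(500.0, 8.0, pods).items():
    print(f"{team:>14}: ${cost:,.2f}")
```

With these numbers team-a is charged $250.00, and $93.75 of the node's cost belongs to nobody, which is exactly the kind of figure a node-level invoice hides.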

This lack of granularity leads to "The Guesswork Era" of FinOps. According to the 2025 Kubernetes Cost Benchmark Report, average CPU utilization across clusters sits at just 10%, a 23% decrease year-over-year. In other words, 90% of the computing power organizations pay for is sitting idle. Without specialized Kubernetes cost management tools, finance teams are forced to rely on broad estimates, which leads to inaccurate chargebacks and a total loss of financial accountability. It’s hard to ask a developer to optimize their code when you can’t show them exactly how much their specific service costs the company.
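The arithmetic behind that claim is simple, but making it explicit turns a utilization percentage into a dollar figure. A minimal sketch, assuming compute cost scales linearly with provisioned CPU (a simplification that ignores memory-bound nodes and pricing tiers); the core-hour and spend figures are hypothetical.

```python
def cpu_efficiency(used_core_hours: float, provisioned_core_hours: float) -> float:
    """Fraction of paid-for CPU capacity that workloads actually consumed."""
    if provisioned_core_hours <= 0:
        raise ValueError("provisioned_core_hours must be positive")
    return used_core_hours / provisioned_core_hours


def idle_spend(monthly_compute_spend: float, efficiency: float) -> float:
    """Dollars paying for idle CPU, assuming cost scales with provisioned CPU."""
    if not 0.0 <= efficiency <= 1.0:
        raise ValueError("efficiency must be between 0 and 1")
    return monthly_compute_spend * (1.0 - efficiency)


# Hypothetical month: 720 hours on a 100-core cluster, 7,200 core-hours used.
eff = cpu_efficiency(used_core_hours=7_200, provisioned_core_hours=100 * 720)
print(f"efficiency: {eff:.0%}, idle spend: ${idle_spend(50_000, eff):,.0f}")
```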

The Overprovisioning Crisis: Paying for Peace of Mind

If you ask a developer why they requested 2 CPUs for a service that rarely peaks above 0.2, they’ll give you a very logical answer: "I don't want to get paged at 3 AM." In the world of DevOps, overprovisioning is a survival instinct. Nobody gets fired for a bill that's 20% too high, but people certainly do get fired over a production outage caused by CPU throttling or Out-Of-Memory (OOM) kills. This safety padding is the single largest contributor to cloud waste, with research showing that 99% of Kubernetes workloads are overprovisioned.

The way the Kubernetes scheduler works exacerbates this: it places pods based on their requests, not their actual usage. If every developer "pads" their request by 50%, the cluster will appear full to the scheduler, triggering the Cluster Autoscaler to spin up new, expensive nodes even though the existing nodes are mostly idle. This creates a cycle in which the gap between requests and usage widens every time a new feature is deployed. Solving this requires more than just a dashboard; it requires a culture of economic engineering in which resource requests and limits are treated as financial constraints.
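A toy packing simulation shows why padded requests inflate node count even when real usage is tiny. This is a minimal sketch that places pods by CPU alone using first-fit decreasing; the real kube-scheduler filters and scores nodes through a set of plugins and also considers memory and other constraints, so the node counts are illustrative only. The pod count, node size, and per-pod figures are hypothetical.

```python
def nodes_needed(pod_cpu: list[float], node_cpu: float) -> int:
    """Count nodes required to place pods, packing by CPU only (first-fit decreasing)."""
    free: list[float] = []  # remaining CPU on each node opened so far
    for cpu in sorted(pod_cpu, reverse=True):
        if cpu > node_cpu:
            raise ValueError(f"pod needing {cpu} cores cannot fit on any node")
        for i, remaining in enumerate(free):
            if cpu <= remaining:
                free[i] = remaining - cpu
                break
        else:
            # No open node had room: open a new one (a Cluster Autoscaler scale-up).
            free.append(node_cpu - cpu)
    return len(free)


PODS = 30
NODE_CPU = 4.0
scenarios = {
    "requests padded 50% (1.5 cores)": [1.5] * PODS,
    "honest requests (1.0 core)": [1.0] * PODS,
    "actual usage (0.3 cores)": [0.3] * PODS,
}
for label, cpus in scenarios.items():
    print(f"{label}: {nodes_needed(cpus, NODE_CPU)} nodes")
```

Here a 50% pad turns 8 nodes into 15: two 1.5-core pods leave a 1-core fragment on every 4-core node that no padded pod can use, while the pods' actual usage would fit on 3 nodes.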

Mastering Cost Allocation: From Namespaces to Unit Economics

True maturity in Kubernetes spend isn't about cutting the bill; it's about accurate attribution. To solve the blind spot, organizations must move beyond "Total Cluster Cost" and embrace unit economics: calculating the cost per customer, cost per transaction, or cost per feature. If your "Search" service costs $5,000 a month, is that good or bad? You can’t know until you see that it processed 5 million queries, which works out to $0.001 per query.
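The unit-economics calculation is a single division, but computing it per service, side by side, is what makes it actionable. A minimal sketch; the "Search" figures come from the example above, while the "Checkout" service and its numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ServiceSpend:
    name: str
    monthly_cost: float  # dollars attributed to the service
    units: int           # business units served, e.g. queries or orders
    unit_name: str

    def cost_per_unit(self) -> float:
        if self.units <= 0:
            raise ValueError(f"{self.name}: units must be positive")
        return self.monthly_cost / self.units


services = [
    ServiceSpend("Search", 5_000.0, 5_000_000, "query"),
    ServiceSpend("Checkout", 3_000.0, 200_000, "order"),  # hypothetical service
]
for s in services:
    print(f"{s.name}: ${s.cost_per_unit():.4f} per {s.unit_name}")
```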

Effective allocation relies on a robust labeling and tagging strategy. By labeling every pod with its associated team, environment, and project, you can use tools to "scrape" these metrics and map them back to your cloud provider's billing data. This is where solutions like Atler Pilot by Cloud Atler become an essential partner. Instead of forcing engineers to spend hours manually reconciling spreadsheets, Atler Pilot provides real-time, granular visibility into spend, so you can see exactly which namespace is blowing the budget before the monthly invoice even arrives. It bridges the gap between the ephemeral nature of K8s and the rigid nature of corporate finance.
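Once pods carry ownership labels, the roll-up itself is straightforward. The sketch below is a generic illustration, not how Atler Pilot or any specific tool works: it assumes per-pod costs have already been computed, groups them by a `team` label, and flags any owner over a hypothetical budget. Spend on pods without a `team` label lands in an "(unlabeled)" bucket that has no budget, which is precisely the accountability gap a labeling policy is meant to close.

```python
from collections import defaultdict


def rollup_by_label(pod_costs: list[dict], label_key: str) -> dict[str, float]:
    """Sum per-pod cost by one label's value; pods missing it go to "(unlabeled)"."""
    totals: dict[str, float] = defaultdict(float)
    for pod in pod_costs:
        owner = pod["labels"].get(label_key, "(unlabeled)")
        totals[owner] += pod["cost"]
    return dict(totals)


def over_budget(totals: dict[str, float], budgets: dict[str, float]) -> list[str]:
    """Owners whose spend exceeds budget; owners with no budget count as zero."""
    return sorted(owner for owner, spent in totals.items()
                  if spent > budgets.get(owner, 0.0))


# Hypothetical month-to-date pod costs (dollars) and team budgets.
pod_costs = [
    {"name": "search-api-1", "labels": {"team": "search", "env": "prod"}, "cost": 120.0},
    {"name": "search-api-2", "labels": {"team": "search", "env": "prod"}, "cost": 110.0},
    {"name": "payments-1", "labels": {"team": "payments", "env": "prod"}, "cost": 80.0},
    {"name": "nightly-batch", "labels": {"env": "prod"}, "cost": 40.0},
]
budgets = {"search": 200.0, "payments": 100.0}

totals = rollup_by_label(pod_costs, "team")
print(totals)
print("over budget:", over_budget(totals, budgets))
```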

The Automation Frontier: Rightsizing at Machine Speed

The final stage of solving the Kubernetes blind spot is moving from observing waste to eliminating it automatically. Human engineers simply cannot keep up with the dynamic nature of a modern cluster. Traffic patterns change by the hour, and manual "rightsizing" exercises are usually out of date the moment they are completed. To truly optimize, organizations are turning to automation such as the Horizontal Pod Autoscaler (HPA), which adjusts the number of pod replicas, and the Vertical Pod Autoscaler (VPA), which adjusts pods' CPU and memory requests, both driven by observed metrics.
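Two pieces of that automation can be sketched in a few lines. The first function follows the replica calculation documented for the Kubernetes HPA, `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, including the tolerance band (0.1 by default) inside which no scaling happens; it omits min/max replica bounds and stabilization windows. The second is a hypothetical rightsizing helper that sets a CPU request at a high percentile of observed usage plus headroom. It echoes the idea behind VPA recommendations but is not VPA's actual algorithm, and its 90th-percentile and 15% headroom defaults are assumptions. The usage samples are hypothetical, for a service like the one described earlier that requests 2 CPUs but rarely peaks above 0.2.

```python
import math


def hpa_desired_replicas(current_replicas: int, current_metric: float,
                         target_metric: float, tolerance: float = 0.1) -> int:
    """HPA-style replica count; ignores min/max bounds and stabilization windows."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: leave replicas alone
    return math.ceil(current_replicas * ratio)


def recommend_cpu_request(usage_samples: list[float], percentile: float = 0.9,
                          headroom: float = 1.15) -> float:
    """Hypothetical rightsizing rule: nearest-rank percentile of usage times headroom."""
    if not usage_samples:
        raise ValueError("need at least one usage sample")
    if not 0.0 < percentile <= 1.0:
        raise ValueError("percentile must be in (0, 1]")
    ordered = sorted(usage_samples)
    rank = max(1, math.ceil(percentile * len(ordered)))  # 1-based nearest rank
    return ordered[rank - 1] * headroom


# 4 replicas averaging 90% CPU against a 60% target scale out to 6.
print(hpa_desired_replicas(4, current_metric=90, target_metric=60))

# Hypothetical per-minute CPU usage (cores) for a service that requests 2 CPUs.
samples = [0.05, 0.06, 0.08, 0.07, 0.10, 0.12, 0.09, 0.15, 0.18, 0.20]
print(f"recommended request: {recommend_cpu_request(samples):.3f} cores")
```

The percentile-plus-headroom shape keeps the request above nearly all observed load while still cutting the 2-CPU request by roughly a factor of ten in this example.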

Furthermore, running K8s workloads on Spot instances can offer average compute cost savings of 59% to 77%. However, managing the volatility of Spot nodes, which the provider can reclaim at any time, is a massive operational burden. Sophisticated platforms now automate this "Spot orchestration," gracefully moving workloads to On-Demand nodes when Spot capacity is tight and back to Spot when prices drop. This level of automated governance ensures that you are always running on the most cost-efficient "inventory" available without sacrificing the reliability that developers worked so hard to build.
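Headline Spot discounts apply only to the capacity actually running on Spot, so the fleet-wide saving depends on how much of the cluster can tolerate interruption. A minimal sketch of that blended-cost arithmetic; the $0.40 hourly rate, 20-node fleet, 70% Spot share, and 65% discount (picked from within the 59% to 77% range quoted above) are all hypothetical.

```python
def blended_hourly_cost(nodes: int, on_demand_rate: float,
                        spot_fraction: float, spot_discount: float) -> float:
    """Hourly fleet cost when a fraction of nodes runs on discounted Spot capacity."""
    if not (0.0 <= spot_fraction <= 1.0 and 0.0 <= spot_discount < 1.0):
        raise ValueError("spot_fraction and spot_discount must be fractions")
    spot_nodes = nodes * spot_fraction
    on_demand_nodes = nodes - spot_nodes
    spot_rate = on_demand_rate * (1.0 - spot_discount)
    return on_demand_nodes * on_demand_rate + spot_nodes * spot_rate


all_on_demand = blended_hourly_cost(20, 0.40, spot_fraction=0.0, spot_discount=0.65)
mixed = blended_hourly_cost(20, 0.40, spot_fraction=0.7, spot_discount=0.65)
print(f"all On-Demand: ${all_on_demand:.2f}/h, 70% Spot: ${mixed:.2f}/h, "
      f"fleet saving: {1 - mixed / all_on_demand:.1%}")
```

Under these assumptions the fleet saves roughly 45%, well below the 65% per-node discount, because 30% of capacity stays On-Demand as a reliability buffer.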

Conclusion

Kubernetes is no longer a niche technology; it is the backbone of the modern enterprise. But as enterprise adoption grows, the focus must shift from "How do we run it?" to "How do we run it profitably?" The visibility blind spot is a choice, not an inevitability. By combining granular cost allocation with automated rightsizing and a value-based FinOps culture, organizations can finally stop "riding the tiger" of unexpected cloud spend. The goal of Kubernetes cost management isn't just to save money; it's to ensure that every dollar of your cloud budget is a direct investment in your product's success. When you can see through the black box, you stop managing a bill and start engineering a competitive advantage.
