Modern systems are more observable than ever, at least on the surface. Dashboards are filled with metrics, logs stream in real time, and traces map out request flows across services. It feels like we can see everything.

Yet, when something goes wrong, or when costs spike unexpectedly, teams often find themselves asking the same question: “What are we missing?”

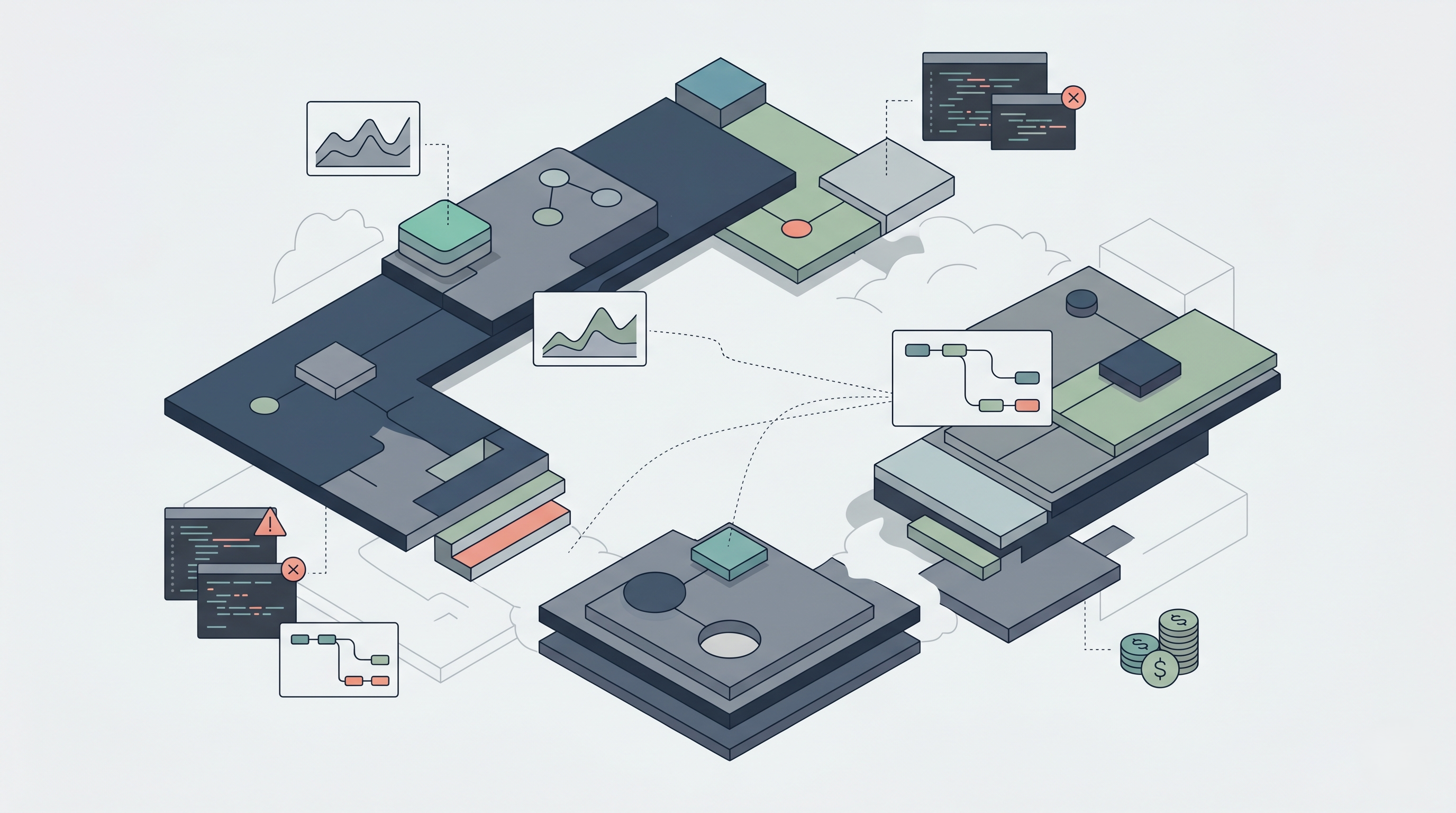

The truth is that, despite all the tools and data, we rarely have complete visibility. What we have instead is partial visibility: fragments of insight scattered across systems, tools, and layers of abstraction.

Although each tool provides valuable information, none of them tells the full story. And in complex, distributed environments, this gap between what we see and what actually happens becomes a major challenge.

This is not just an observability issue. It is an operational, architectural, and financial problem. So, the real concern is not whether we have data. It is whether we have enough connected context to understand it.

1. What Is Partial Visibility?

Partial visibility does not mean a lack of data. In fact, most systems generate more data than teams can realistically process.

Instead, it refers to a situation where insights are incomplete, disconnected, or misaligned. You may have metrics showing CPU usage, logs capturing errors, and traces mapping requests, yet still be unable to answer a simple question like: “Why did this happen?”

This happens because each signal exists in isolation. Metrics show trends, logs show events, and traces show flows, but without correlation, they remain pieces of a puzzle that never fully come together. Although each tool works as intended, the system as a whole remains partially visible.
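To make the idea of correlation concrete, here is a minimal sketch that joins log lines and trace spans on a shared identifier. The sample data and the field names (`trace_id`, `service`, `duration_ms`) are assumptions chosen for illustration, not the schema of any particular tool.

```python
from collections import defaultdict

# Hypothetical sample data. The field names are assumptions for illustration.
# duration_ms is assumed to be time spent inside that service only.
logs = [
    {"trace_id": "t1", "service": "checkout", "level": "ERROR", "message": "payment timeout"},
    {"trace_id": "t2", "service": "search", "level": "INFO", "message": "query ok"},
]
spans = [
    {"trace_id": "t1", "service": "checkout", "duration_ms": 300},
    {"trace_id": "t1", "service": "payments", "duration_ms": 5000},
    {"trace_id": "t2", "service": "search", "duration_ms": 40},
]


def correlate_by_trace(logs, spans):
    """Group log lines and spans that share a trace_id into one record."""
    combined = defaultdict(lambda: {"logs": [], "spans": []})
    for entry in logs:
        combined[entry["trace_id"]]["logs"].append(entry)
    for span in spans:
        combined[span["trace_id"]]["spans"].append(span)
    return dict(combined)


def slowest_span(record):
    """Return the span with the largest duration in a correlated record."""
    return max(record["spans"], key=lambda span: span["duration_ms"])


records = correlate_by_trace(logs, spans)
for trace_id, record in records.items():
    errors = [e["message"] for e in record["logs"] if e["level"] == "ERROR"]
    if errors:
        worst = slowest_span(record)
        print(trace_id, errors, "slowest service:", worst["service"])
```

On their own, the log says that a payment timed out and the spans say that one service was slow. Only the joined record suggests that the two are the same event.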

2. The Explosion of System Complexity

One of the primary reasons for partial visibility is the increasing complexity of modern architectures.

Microservices, serverless functions, containers, and multi-cloud environments have replaced monolithic systems. While these architectures offer scalability and flexibility, they also introduce fragmentation.

A single user request may pass through dozens of services, each with its own logs, metrics, and dependencies. Understanding the full lifecycle of that request requires stitching together data from multiple sources.

Observability tools attempt to do this stitching, but they often fall short because of scale, latency, or lack of context. As complexity grows, visibility does not grow with it.

3. Siloed Observability Tools

Most organizations rely on multiple tools for monitoring and observability. One tool handles metrics, another manages logs, and a third provides distributed tracing.

Each tool is powerful in its own domain, but the tools are often not deeply integrated with one another. This creates silos where data exists but cannot be easily correlated.

For example, a spike in latency may be visible in a metrics dashboard, but the corresponding logs and traces may reside in separate systems. Connecting these signals requires manual effort and time. This fragmentation slows down investigation and increases the likelihood of missing critical insights.

4. The Context Gap

Data without context is difficult to interpret.

A metric may indicate high CPU usage, but without context, it is unclear whether this is expected or problematic. Similarly, an error log may indicate a failure, but not its impact on the overall system. Context includes factors such as:

- Deployment changes
- Feature rollouts
- Traffic patterns
- User behavior

Although these factors significantly influence system behavior, they are often not integrated into observability data. This creates a gap where teams can see what is happening, but not why.
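One simple way to narrow this gap is to keep a time-ordered record of changes and look up the most recent one whenever an anomaly appears. The sketch below assumes a hypothetical list of change events. The event descriptions and the `last_change_before` helper are illustrative, not part of any real tool.

```python
from bisect import bisect_right
from datetime import datetime

# Hypothetical change events, sorted by time (deployments and feature rollouts).
changes = [
    (datetime(2024, 5, 1, 9, 0), "deploy checkout v1.4.0"),
    (datetime(2024, 5, 1, 13, 30), "feature flag new_pricing enabled"),
    (datetime(2024, 5, 1, 16, 45), "deploy search v2.1.3"),
]


def last_change_before(anomaly_time, changes):
    """Return the most recent change at or before anomaly_time, or None."""
    times = [timestamp for timestamp, _ in changes]
    index = bisect_right(times, anomaly_time)
    return changes[index - 1] if index else None


# A CPU spike observed at 13:42 is annotated with the change that preceded it.
spike_time = datetime(2024, 5, 1, 13, 42)
context = last_change_before(spike_time, changes)
print("CPU spike at", spike_time, "follows:", context[1] if context else "no recorded change")
```

The preceding change is only a candidate explanation, not proof of cause, but it turns a bare metric into a question someone can investigate.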

5. Time Lag and Delayed Insights

Another dimension of partial visibility is time. Many systems rely on aggregated data that is updated at intervals. Although this is sufficient for general monitoring, it introduces delays in identifying issues.

By the time a problem becomes visible, the system may have already changed. New deployments may have occurred, traffic patterns may have shifted, and the original context may be lost.

This delay makes it difficult to trace issues back to their source, especially in fast-moving environments.
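A small sketch shows both effects of interval-based aggregation: the delay before a problem becomes visible, and the way an average can dilute a short spike. It assumes windows aligned to multiples of the interval and published only when they close. The numbers are made up for illustration.

```python
from statistics import mean


def first_visible_at(event_start_s, interval_s):
    """Return the time (in seconds) at which the window containing the event closes.

    Assumes windows are aligned to multiples of interval_s and are published
    only once they close.
    """
    return (event_start_s // interval_s + 1) * interval_s


# A problem starting at second 65, with 60-second windows, falls in [60, 120).
start = 65
visible = first_visible_at(start, 60)
delay = visible - start
print("problem starts at", start, "s, first visible at", visible, "s, delay", delay, "s")

# Hypothetical per-second latency samples (ms) for one window:
# 55 normal seconds followed by a 5-second spike.
samples = [100] * 55 + [2000] * 5
window_average = mean(samples)
window_peak = max(samples)
print("reported average:", round(window_average, 1), "ms, actual peak:", window_peak, "ms")
```

In this example the dashboard would show an average of roughly 258 ms almost a minute after the spike began, while some requests actually took 2,000 ms.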

6. Hidden Dependencies and Cascading Effects

Complex systems are full of hidden dependencies. Services rely on other services, databases, APIs, and external systems.

When one component fails or slows down, it can trigger cascading effects across the system. However, these dependencies are not always visible in observability tools.

A team may see increased latency in one service without realizing that the root cause lies in another service several layers away.

Although distributed tracing helps, it is not always comprehensive, especially in systems with partial instrumentation.
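If a dependency map is available, following it downstream from the symptomatic service can surface candidates for the real root cause. The sketch below uses a hypothetical map and a hypothetical set of unhealthy services. It relies on a simple heuristic (an unhealthy service with no unhealthy dependencies is a likely origin), and in a partially instrumented system the map itself would be incomplete.

```python
# Hypothetical dependency map: each service lists the services it calls.
dependencies = {
    "frontend": ["checkout", "search"],
    "checkout": ["payments", "inventory"],
    "payments": ["bank-gateway"],
    "inventory": [],
    "search": [],
    "bank-gateway": [],
}

# Hypothetical set of services currently showing elevated latency or errors.
unhealthy = {"frontend", "checkout", "payments", "bank-gateway"}


def root_cause_candidates(service, dependencies, unhealthy):
    """Follow unhealthy dependencies downstream from `service`.

    Returns unhealthy services that have no unhealthy dependencies of their
    own. This is a heuristic, not a proof of causation.
    """
    candidates, seen, stack = [], set(), [service]
    while stack:
        current = stack.pop()
        if current in seen or current not in unhealthy:
            continue
        seen.add(current)
        bad_dependencies = [d for d in dependencies.get(current, []) if d in unhealthy]
        if bad_dependencies:
            stack.extend(bad_dependencies)
        else:
            candidates.append(current)
    return candidates


print(root_cause_candidates("frontend", dependencies, unhealthy))
```

Here the team watching `frontend` sees only its own latency, while the traversal points three layers down to `bank-gateway`.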

7. Cost Visibility Is Even More Fragmented

Operational visibility is fragmented, and cost visibility is often even more so.

Cost data is typically aggregated and delayed, making it difficult to correlate with real-time system behavior. For example, a deployment may increase resource consumption immediately, but the cost impact may only appear hours or days later.

Additionally, cost is influenced by multiple factors, including compute, storage, and network usage. These factors are often tracked separately, making it difficult to understand the full financial picture.

This creates a situation where teams cannot easily connect system behavior with cost outcomes.
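As a rough illustration, separately tracked cost categories can be rolled up per service and per day, so that the total can be compared across a deployment. The line items below are invented, and real billing exports differ by provider. The field names are assumptions.

```python
from collections import defaultdict

# Hypothetical billing line items. Real billing exports differ by provider.
line_items = [
    {"day": "2024-05-01", "service": "checkout", "category": "compute", "usd": 40.0},
    {"day": "2024-05-01", "service": "checkout", "category": "storage", "usd": 5.0},
    {"day": "2024-05-01", "service": "checkout", "category": "network", "usd": 3.0},
    {"day": "2024-05-02", "service": "checkout", "category": "compute", "usd": 65.0},
    {"day": "2024-05-02", "service": "checkout", "category": "storage", "usd": 5.0},
    {"day": "2024-05-02", "service": "checkout", "category": "network", "usd": 8.0},
]


def daily_cost_by_service(line_items):
    """Sum compute, storage, and network charges per (day, service)."""
    totals = defaultdict(float)
    for item in line_items:
        totals[(item["day"], item["service"])] += item["usd"]
    return dict(totals)


totals = daily_cost_by_service(line_items)

# Assume a deployment of "checkout" happened between the two days.
before = totals[("2024-05-01", "checkout")]
after = totals[("2024-05-02", "checkout")]
print("checkout daily cost changed by", after - before, "USD after the deployment")
```

The rollup does not prove the deployment caused the increase, but it places the cost change and the system change side by side, which is the connection that is usually missing.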

8. The Illusion of Full Observability

With advanced dashboards and visualization tools, it is easy to believe that systems are fully observable. However, this is often an illusion. The presence of data does not guarantee completeness.

Teams may feel confident because they can see many metrics and logs, yet critical gaps remain. These gaps only become apparent during incidents or unexpected cost spikes.

Although tools provide visibility, they do not eliminate uncertainty.

9. Human Limitations in Interpreting Data

Even with perfect data, human limitations play a role in partial visibility. Engineers must interpret large volumes of information under time pressure. Cognitive overload can lead to missed signals or incorrect conclusions.

Additionally, different teams may have access to different parts of the system, leading to a fragmented understanding.

Although collaboration helps, it does not fully solve the problem of interpreting complex, interconnected data.

10. Bridging the Visibility Gap

Addressing partial visibility requires a shift from isolated monitoring to connected observability. This involves:

- Correlating metrics, logs, and traces
- Integrating deployment and feature data
- Linking operational signals with cost data
- Reducing data silos through unified platforms

By connecting these elements, teams can move from fragmented insights to a more holistic understanding of system behavior. Although achieving this is challenging, it significantly improves decision-making and response times.
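One lightweight way to picture connected observability is a single timeline that merges events from every source. The sketch below assumes each source already produces time-sorted `(timestamp, source, description)` tuples. The events themselves are hypothetical.

```python
import heapq
from datetime import datetime

# Hypothetical, already time-sorted event streams from separate systems.
deploys = [(datetime(2024, 5, 1, 13, 30), "deploy", "checkout v1.4.0 rolled out")]
metrics = [(datetime(2024, 5, 1, 13, 32), "metric", "checkout p95 latency above 2s")]
logs = [(datetime(2024, 5, 1, 13, 33), "log", "payment timeout in checkout")]
costs = [(datetime(2024, 5, 1, 14, 0), "cost", "checkout compute spend rising")]


def unified_timeline(*streams):
    """Merge time-sorted event streams into one chronological list."""
    return list(heapq.merge(*streams, key=lambda event: event[0]))


timeline = unified_timeline(metrics, logs, costs, deploys)
for timestamp, source, description in timeline:
    print(timestamp.strftime("%H:%M"), f"[{source}]", description)
```

Read in order, the merged view tells a story that no single stream does: a rollout, then a latency alert, then errors, then a cost change.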

11. The Role of Intelligent Observability Platforms

Modern platforms are evolving to address the problem of partial visibility.

They go beyond collecting data and focus on connecting it. By correlating different signals and providing contextual insights, they help teams understand not just what is happening, but why.

Platforms like Atler Pilot extend this concept to cost observability. They connect system behavior with financial impact, enabling teams to see how changes in architecture or deployment affect spending in real time. This unified approach reduces blind spots and enables more informed decision-making.

12. From Visibility to Understanding

Visibility alone is not the goal. Understanding is.

Having access to data is only useful if it leads to actionable insights. This requires not just tools, but also processes and practices that prioritize context and correlation.

Teams must move from reactive monitoring to proactive analysis, continuously seeking to understand how different parts of the system interact.

Although this requires effort, it transforms visibility into a strategic advantage.

Conclusion

Partial visibility is not a failure of tools. It is a natural consequence of complexity. As systems grow more distributed and dynamic, achieving complete visibility becomes increasingly difficult. That does not mean visibility cannot be improved.

By focusing on connection, context, and correlation, teams can reduce blind spots and gain a clearer understanding of their systems. The goal is not to see everything in isolation, but to see how everything fits together.

In complex systems, the biggest risks and the biggest opportunities often lie in the parts you cannot fully see.
