If you’ve been working with AI or LLM-powered applications lately, you’ve probably experienced this firsthand: everything works beautifully in development, the model responses are accurate, latency is acceptable, and then the bill arrives.

And suddenly, the real problem isn’t model quality; it’s inference cost.

Training models used to be the most expensive part of AI, but the shift toward production AI has changed the equation. Today, the real, recurring expense comes from running those models: serving predictions, generating responses, and handling user queries.

The tricky part is that inference cost doesn’t always grow linearly. A small increase in users, longer prompts, or slightly more complex workflows can quietly multiply your spend. And before you realize it, what started as a promising feature turns into a cost center that’s hard to justify. So, the real question becomes: How do you scale AI usage without letting inference costs spiral out of control?

Let’s break it down: what drives inference cost, where teams usually go wrong, and how you can reduce it without sacrificing performance.

1. What is Inference Cost?

Inference cost refers to the expense incurred each time a trained model processes inputs and generates outputs. This includes compute usage, memory consumption, and often API-based pricing tied to tokens or requests.

Although a single inference call may cost only a fraction of a rupee or a few cents, the scale at which modern applications operate changes everything. A chatbot, for instance, may handle thousands or even millions of requests daily. Each request triggers compute operations that accumulate rapidly.

What makes this particularly challenging is that inference is tied directly to user activity. As your product grows, so does your cost. Unlike infrastructure that can sometimes be optimized in batches, inference cost rises at least in step with usage, and faster still when prompts and workflows grow along with it, unless you actively optimize it.

2. The Core Drivers Behind LLM Inference Cost

Inference cost is not a single variable. It is influenced by several interconnected factors, and understanding these is key to controlling spend.

Model size plays a major role. Larger models require more compute and memory, which increases the cost per request. Although they often provide better accuracy, the trade-off is higher operational expense.

Token usage is another critical factor, especially for LLMs. Costs are often calculated based on the number of input and output tokens. Longer prompts and verbose responses directly increase spend.

Latency requirements also impact cost. If your application requires low-latency responses, it may need dedicated or high-performance infrastructure, which is more expensive than batch or delayed processing.

Finally, request frequency amplifies everything. Even small inefficiencies become significant when multiplied across millions of requests.
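To make the interaction between token usage and request frequency concrete, here is a minimal sketch of the arithmetic under simple token-based pricing. The `Pricing` class, both function names, and every number are illustrative assumptions, not real vendor prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical prices in currency units per 1,000 tokens."""
    input_per_1k: float
    output_per_1k: float


def cost_per_request(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost of a single call when input and output tokens are billed separately."""
    input_cost = (input_tokens / 1000) * pricing.input_per_1k
    output_cost = (output_tokens / 1000) * pricing.output_per_1k
    return input_cost + output_cost


def monthly_cost(pricing: Pricing, input_tokens: int, output_tokens: int,
                 requests_per_day: int, days: int = 30) -> float:
    """Per-request cost multiplied by request volume over a month."""
    return cost_per_request(pricing, input_tokens, output_tokens) * requests_per_day * days


# Made-up prices and traffic, chosen only to show the shape of the calculation.
pricing = Pricing(input_per_1k=0.001, output_per_1k=0.002)
baseline = monthly_cost(pricing, input_tokens=800, output_tokens=300, requests_per_day=100_000)
trimmed = monthly_cost(pricing, input_tokens=500, output_tokens=200, requests_per_day=100_000)

print(f"baseline: {baseline:.2f}, trimmed: {trimmed:.2f}, saved: {baseline - trimmed:.2f}")
```

With these assumed inputs, trimming 300 input tokens and 100 output tokens per request removes roughly a third of the monthly total, which is the kind of effect that is invisible at the level of a single call.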

3. Why Inference Costs Are Harder to Control Than Training Costs

Training costs, although high, are predictable. You know when you are training a model, how long it will take, and roughly how much it will cost. Once training is complete, the expense stops.

Inference, on the other hand, is ongoing and dynamic. It depends on user behavior, traffic patterns, and application design. Costs fluctuate throughout the day and can spike unexpectedly with increased usage.

Although monitoring tools provide visibility into usage, they often lack context. Teams may see rising costs but struggle to connect them to specific features, prompts, or user actions.

This makes inference cost not just a technical challenge, but also an operational one.

4. The Hidden Cost of Over-Engineering Prompts

One of the most overlooked contributors to inference cost is prompt design.

In many applications, prompts are written to be highly descriptive and detailed to ensure better model responses. Although this improves output quality, it also increases the number of input tokens.

Similarly, models often generate longer responses than necessary. While this may enhance user experience, it comes at a cost. Every extra token is billed.

Over time, these inefficiencies accumulate. A slightly longer prompt or response may seem insignificant, yet at scale, it can lead to substantial increases in spending.

Optimizing prompts is not about reducing quality, but about finding the right balance between clarity and efficiency.

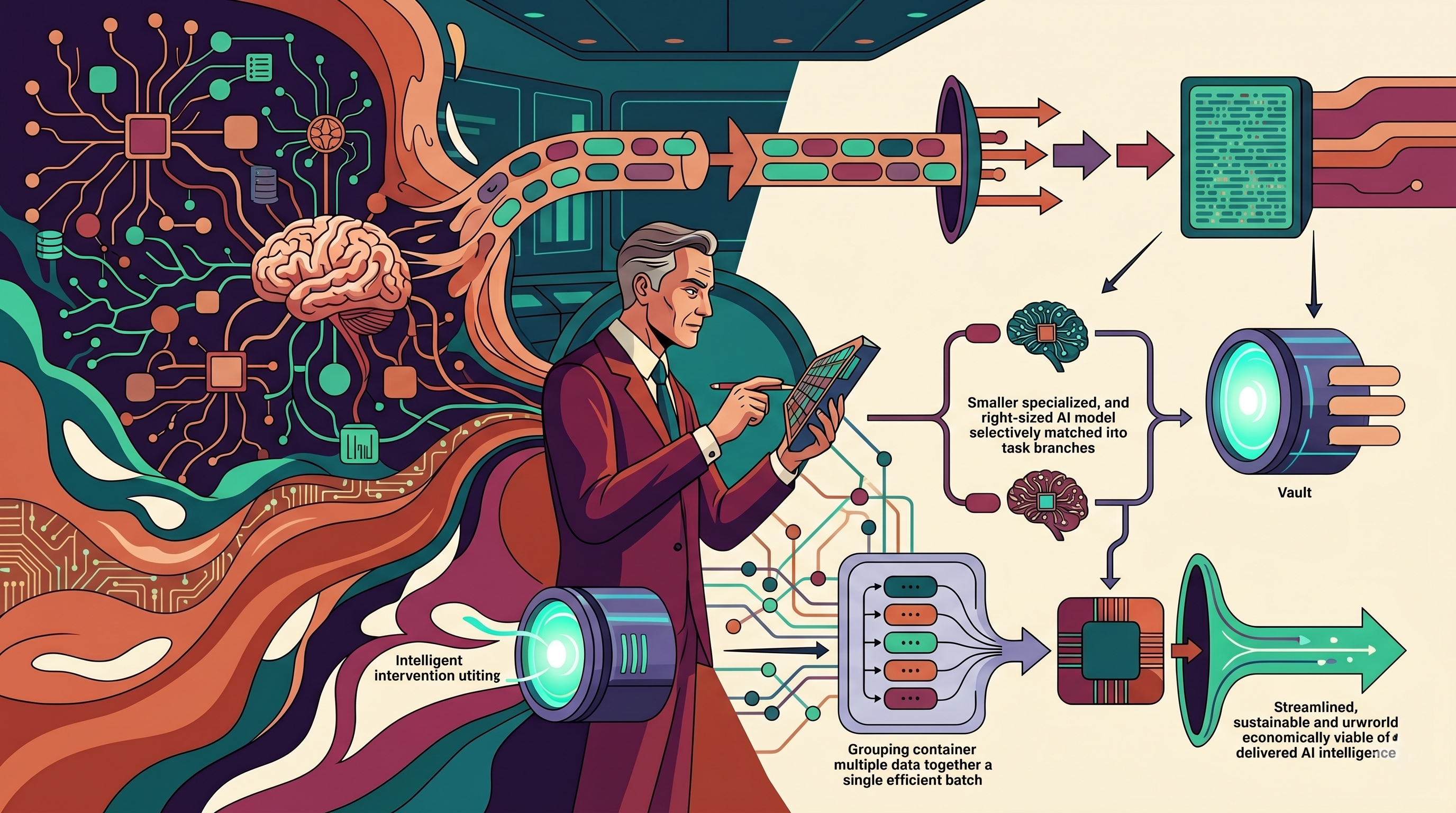

5. Choosing the Right Model for the Job

Not every task requires the most powerful model available.

Many applications default to large, general-purpose models even for relatively simple tasks. However, smaller or specialized models can often achieve similar results at a fraction of the cost.

For example, classification, summarization, or simple question-answering tasks may not require a high-capacity LLM. Using a lighter model for these tasks can significantly reduce inference cost without compromising performance.

Although it may seem safer to use a single model for everything, a more nuanced approach (matching the model to the task) leads to better cost efficiency.
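One simple way to match the model to the task is a routing table. The sketch below assumes each request already carries a task label; the task names and the model identifiers `small-model` and `large-model` are placeholders rather than real products.

```python
from typing import Dict

# Hypothetical mapping from task type to a cheaper model tier.
MODEL_BY_TASK: Dict[str, str] = {
    "classification": "small-model",
    "summarization": "small-model",
    "simple_qa": "small-model",
}

# Anything not explicitly listed falls back to the larger, more expensive model.
DEFAULT_MODEL = "large-model"


def choose_model(task_type: str) -> str:
    """Return the model tier assumed to be sufficient for this task type."""
    return MODEL_BY_TASK.get(task_type, DEFAULT_MODEL)


for task in ("classification", "multi_step_reasoning"):
    print(task, "->", choose_model(task))
```

Defaulting unknown tasks to the larger model keeps quality safe while the table is still being filled in; whether a smaller model is really good enough for a given task is something to confirm with your own evaluation.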

6. Caching and Reusing Responses

A surprisingly effective way to reduce inference cost is to avoid unnecessary computation altogether.

In many applications, the same or similar queries are repeated frequently. Instead of processing each request independently, responses can be cached and reused.

This is particularly useful for FAQs, common prompts, and predictable workflows. By serving cached responses, you eliminate the need for repeated inference calls.

Although caching introduces additional complexity, it can dramatically reduce cost in high-traffic systems.
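A minimal sketch of response caching is shown below. It assumes exact-match reuse after light normalization (lowercasing and whitespace collapsing); `ResponseCache`, `normalize`, and `fake_model` are hypothetical names, and `fake_model` stands in for a real inference call.

```python
import hashlib
from typing import Callable, Dict


def normalize(prompt: str) -> str:
    """Lowercase and collapse whitespace so trivially different prompts share a key."""
    return " ".join(prompt.lower().split())


class ResponseCache:
    """In-memory cache that only calls the model on a cache miss."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self._generate = generate
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def get(self, prompt: str) -> str:
        key = hashlib.sha256(normalize(prompt).encode("utf-8")).hexdigest()
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = self._generate(prompt)  # the only place inference cost is incurred
        self._store[key] = response
        return response


def fake_model(prompt: str) -> str:
    """Stand-in for a real inference call."""
    return f"answer to: {normalize(prompt)}"


cache = ResponseCache(fake_model)
first = cache.get("What is your refund policy?")
second = cache.get("  what is your REFUND policy? ")
print(cache.hits, cache.misses)
```

Part of the added complexity mentioned above is deciding when a cached answer is stale; this sketch never expires entries, so a production version would need some form of expiry or invalidation.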

7. Batch Processing and Asynchronous Inference

Not all inference needs to happen in real time.

For use cases such as analytics, reporting, or background processing, requests can be batched and processed asynchronously. This allows for more efficient use of compute resources and reduces per-request cost.

Batching enables better utilization of hardware, especially GPUs, which are more efficient when handling multiple requests simultaneously.

Although this approach may introduce slight delays, it is often acceptable for non-critical workflows and can lead to significant cost savings.
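The batching idea can be sketched as follows, assuming your serving layer exposes some function that accepts a list of prompts in a single call. Here `fake_infer_batch` is a hypothetical stand-in for that function, and the batch size of 4 is arbitrary.

```python
from typing import Callable, Iterator, List, Sequence


def chunked(items: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of at most batch_size items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield list(items[start:start + batch_size])


def run_in_batches(prompts: Sequence[str], batch_size: int,
                   infer_batch: Callable[[List[str]], List[str]]) -> List[str]:
    """Process prompts in groups, preserving the original order of results."""
    results: List[str] = []
    for batch in chunked(prompts, batch_size):
        results.extend(infer_batch(batch))
    return results


calls: List[int] = []


def fake_infer_batch(batch: List[str]) -> List[str]:
    """Stand-in for a batched model call; records how many prompts each call received."""
    calls.append(len(batch))
    return [prompt.upper() for prompt in batch]


outputs = run_in_batches([f"report {i}" for i in range(10)], batch_size=4,
                         infer_batch=fake_infer_batch)
print(len(calls), "model calls for", len(outputs), "prompts")
```

Ten prompts are served with three calls instead of ten. In a real system the batches would typically be pulled from a queue by a background worker rather than built from an in-memory list.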

8. Controlling Output Length and Response Behavior

One of the simplest yet most effective optimizations is controlling how much the model generates.

By setting limits on output length, such as maximum tokens, you can prevent unnecessarily long responses. This ensures that the model remains concise and cost-efficient.

Additionally, guiding the model through structured prompts can reduce verbosity. Clear instructions help the model produce precise outputs, minimizing token usage.

Although it may seem like a small adjustment, controlling output behavior has a direct and measurable impact on cost.
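As a rough sketch, output limits can be set per task rather than globally. The request shape below, including the `max_tokens` field name, is an assumption for illustration; check your provider's API for the actual parameter. The task names and budgets are also made up.

```python
from typing import Any, Dict

# Hypothetical output budgets, in tokens, for each kind of task.
MAX_OUTPUT_TOKENS: Dict[str, int] = {
    "classification": 5,
    "summary": 150,
    "chat": 400,
}
DEFAULT_MAX_OUTPUT_TOKENS = 256


def build_request(task: str, user_input: str) -> Dict[str, Any]:
    """Build a request with a hard output cap and an instruction that asks for brevity."""
    limit = MAX_OUTPUT_TOKENS.get(task, DEFAULT_MAX_OUTPUT_TOKENS)
    instruction = "Answer concisely. Do not restate the question or add a preamble."
    return {
        "prompt": f"{instruction}\n\n{user_input}",
        "max_tokens": limit,  # assumed field name; hard cap on generated tokens
    }


request = build_request("summary", "Summarize the attached incident report.")
print(request["max_tokens"])
```

The hard cap bounds the worst case, while the instruction nudges the typical case; relying on the cap alone tends to cut responses off mid-sentence.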

9. Monitoring Cost at the Feature Level

To optimize effectively, you need visibility into where costs are coming from.

Instead of tracking overall inference spend, it is more useful to break it down by feature, endpoint, or user workflow. This allows you to identify which parts of your application are driving the most cost.

For example, a chatbot feature may consume significantly more resources than a recommendation engine. Understanding this distribution helps prioritize optimization efforts.

Although this level of tracking requires additional instrumentation, it provides the clarity needed to make informed decisions.
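Feature-level tracking can start as a small wrapper that tags every call with the feature that triggered it. The sketch below is one possible shape; `FeatureCostTracker`, the feature names, and the prices are all hypothetical.

```python
from collections import defaultdict
from typing import DefaultDict, List, Tuple


class FeatureCostTracker:
    """Accumulates estimated spend per feature from token counts."""

    def __init__(self, input_price_per_1k: float, output_price_per_1k: float) -> None:
        self._input_price = input_price_per_1k
        self._output_price = output_price_per_1k
        self._totals: DefaultDict[str, float] = defaultdict(float)

    def record(self, feature: str, input_tokens: int, output_tokens: int) -> float:
        """Add one call's estimated cost to the feature's running total."""
        cost = (input_tokens / 1000) * self._input_price \
            + (output_tokens / 1000) * self._output_price
        self._totals[feature] += cost
        return cost

    def ranked(self) -> List[Tuple[str, float]]:
        """Features sorted from most to least expensive."""
        return sorted(self._totals.items(), key=lambda item: item[1], reverse=True)


# Made-up prices and token counts, for illustration only.
tracker = FeatureCostTracker(input_price_per_1k=0.001, output_price_per_1k=0.002)
tracker.record("chatbot", input_tokens=1200, output_tokens=400)
tracker.record("chatbot", input_tokens=900, output_tokens=350)
tracker.record("recommendations", input_tokens=200, output_tokens=20)

for feature, total in tracker.ranked():
    print(f"{feature}: {total:.5f}")
```

The same tag could just as well be an endpoint or a user workflow; what matters is that it is attached at call time, since it is hard to reconstruct afterwards from an aggregate bill.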

10. The Role of Intelligent Cost Optimization Platforms

As AI applications scale, manual cost tracking becomes increasingly difficult. Intelligent platforms are designed to provide deeper insights into inference behavior and spending patterns.

These platforms can correlate usage with cost, identify inefficiencies, and recommend optimizations. They help teams understand not just how much they are spending, but why.

Solutions like Atler Pilot enable real-time visibility into inference cost, allowing teams to detect anomalies, optimize prompts, and align usage with budget constraints.

This shifts cost management from a reactive process to a proactive strategy.

11. Balancing Cost with Performance and User Experience

While reducing cost is important, it should not come at the expense of user experience.

Aggressive optimization, such as using overly small models or limiting output excessively, can degrade quality. This may lead to poor user satisfaction and ultimately impact business outcomes.

The goal is to find a balance where the system delivers high-quality responses while remaining cost-efficient. This requires continuous evaluation and iteration.

Although this balance may be challenging to achieve, it is essential for sustainable AI deployment.
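One way to frame this balance during evaluation is to pick the cheapest configuration that still clears a quality bar. The sketch below assumes you already measure a quality score for each candidate on your own test set; the candidate names, scores, and costs are invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Candidate:
    name: str
    quality: float               # score in [0, 1] from your own evaluation set
    cost_per_1k_requests: float  # estimated cost in currency units


def cheapest_acceptable(candidates: List[Candidate], min_quality: float) -> Optional[Candidate]:
    """Return the lowest-cost candidate meeting the quality floor, or None if none does."""
    acceptable = [c for c in candidates if c.quality >= min_quality]
    if not acceptable:
        return None
    return min(acceptable, key=lambda c: c.cost_per_1k_requests)


# Invented numbers: not measurements of any real model.
candidates = [
    Candidate("large-model", quality=0.93, cost_per_1k_requests=12.0),
    Candidate("small-model", quality=0.90, cost_per_1k_requests=2.0),
    Candidate("small-model-short-output", quality=0.81, cost_per_1k_requests=1.2),
]

choice = cheapest_acceptable(candidates, min_quality=0.88)
print(choice.name if choice else "no candidate meets the bar")
```

Treating quality as a floor and cost as the thing to minimize keeps aggressive optimizations from slipping through: the cheapest option here is rejected because it falls below the bar. Re-running the comparison as traffic and prompts change is the "continuous evaluation" part.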

Conclusion

Inference cost is not just a technical metric. It is a reflection of how efficiently your AI system operates in the real world.

Although it is easy to focus on model performance and capabilities, long-term success depends on managing the cost of delivering those capabilities at scale.

By understanding the drivers of inference cost and applying thoughtful optimizations, teams can significantly reduce spending without compromising value. The shift is subtle but powerful. Instead of asking, “How many requests are we handling?”, teams begin to ask, “How efficiently are we handling each request?”

Because in the world of AI, efficiency is not just about speed or accuracy. It is about delivering intelligence in a way that is sustainable, scalable, and economically viable.
